A previous post[MIR] focused on our Annus Mirabilis 1990-1991 at TU Munich. Back then we published many of the basic ideas that powered the Artificial Intelligence Revolution of the 2010s through Artificial Neural Networks (NNs) and Deep Learning. The present post is partially redundant but much shorter (an 8 min read), and focuses on the last decade's most important developments and how they relate to both our work and the work of others. We then conclude by looking forward at the opportunities and challenges the 2020s hold, also addressing privacy and data markets.

Table of Contents

Sec. 1: The Reign of Long Short-Term Memory

Sec. 2: The Decade of Feedforward Neural Networks

Sec. 3: LSTMs & FNNs/CNNs. LSTMs vs Fast Weight Programmers/Transformers

Sec. 4: The Astounding Power of Adversarial Curiosity (1990): GANs

Sec. 5: Other Hot Topics of the 2010s: Deep Reinforcement Learning, Meta-Learning, World Models, Distilling NNs, Neural Architecture Search, Attention Learning, Self-Invented Problems, and more

Sec. 6: The Future of Data Markets and Privacy

Sec. 7: Outlook: 2010s vs 2020s—Virtual AI vs Real AI?

1. The Reign of Long Short-Term Memory (LSTM)

Much of AI in the 2010s was powered by the recurrent NN called Long Short-Term Memory (LSTM).[LSTM0-17][DL4][DLH] The world is sequential by nature, so it is no surprise that LSTMs revolutionized sequential data processing (e.g., speech recognition, machine translation, video recognition, connected handwriting recognition, robotics, video game playing, time series prediction, chatbots, innumerable healthcare applications, etc.). By 2019, the most famous LSTM paper[LSTM1] got more citations per year than any other computer science paper of the 20th century.[MOST] Below I'll list some of the most visible and historically most significant applications.

2009: Connected Handwriting Recognition.

Enormous interest from industry was triggered right before the 2010s when, out of the blue, my PhD student Alex Graves won three connected handwriting competitions (French, Farsi, Arabic) at ICDAR 2009, the famous conference on document analysis and recognition. He used a combination of two methods developed in my research groups at TU Munich and the Swiss AI Lab IDSIA: LSTM (1990s-2005)[LSTM0-6] (which overcomes the famous vanishing gradient problem first analyzed by my PhD student Sepp Hochreiter[VAN1] in 1991) and Connectionist Temporal Classification[CTC] (2006).

CTC-trained LSTM was the first recurrent NN, or RNN,[MC43][K56] to win international contests.
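A one-unit recurrent net is enough to see the vanishing gradient problem and why constant error flow helps. The minimal sketch below assumes a scalar tanh RNN with no external inputs (the function names are hypothetical) and multiplies the local derivatives backward through time, as backpropagation does. With a recurrent weight of 0.9, the gradient that reaches the first time step shrinks exponentially with the number of steps; a linear self-connection with weight 1.0, the idea behind the error flow through LSTM's memory cells, passes it back unchanged.

```python
import math


def tanh_rnn_gradient(w, h0, steps):
    """Return d h_T / d h_0 for the scalar RNN h_t = tanh(w * h_{t-1})."""
    h, grad = h0, 1.0
    for _ in range(steps):
        h = math.tanh(w * h)
        # chain rule: d h_t / d h_{t-1} = w * (1 - tanh(w * h_{t-1})**2) = w * (1 - h_t**2)
        grad *= w * (1.0 - h * h)
    return grad


def self_loop_gradient(weight, steps):
    """Return d c_T / d c_0 for a linear self-connection c_t = weight * c_{t-1}."""
    grad = 1.0
    for _ in range(steps):
        grad *= weight
    return grad


if __name__ == "__main__":
    print(tanh_rnn_gradient(0.9, 0.5, 100))  # tiny: bounded by 0.9 ** 100
    print(self_loop_gradient(1.0, 100))      # 1.0: the error signal is preserved
```

Each local factor of the tanh RNN is at most 0.9 in magnitude here, so after 100 steps the gradient is below 0.9 ** 100 (roughly 3e-5), which is why learning long time lags with plain RNNs is so hard.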

CTC-Trained LSTM was also the First Superior End-to-End Neural Speech Recognizer.

Our team successfully applied CTC-trained LSTM to speech in 2007.[LSTM4][LSTM14] This was very different from the hybrid methods used since the late 1980s, which combined NNs and traditional approaches such as Hidden Markov Models (HMMs).[BW][BRI][BOU][HYB12][DLP]
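What makes CTC end-to-end is that the RNN emits one label distribution per input frame, including a special "blank" label, and the output sequence is defined by collapsing the frame-wise path: merge repeated labels, then delete blanks. The minimal sketch below shows only this collapsing rule and the simple best-path (greedy) decoder built on it; CTC training itself, which sums over all paths with a forward-backward recursion, is not shown. The blank symbol `-` and the function names are assumptions for illustration.

```python
BLANK = "-"  # assumed symbol for the CTC blank label


def ctc_collapse(path, blank=BLANK):
    """Map a frame-wise label path to an output string: merge repeats, then drop blanks."""
    out = []
    prev = None
    for label in path:
        # keep a label only if it differs from the previous frame and is not blank
        if label != prev and label != blank:
            out.append(label)
        prev = label
    return "".join(out)


def greedy_decode(frame_probs, alphabet, blank=BLANK):
    """Best-path decoding: take the most probable label per frame, then collapse."""
    path = [alphabet[max(range(len(p)), key=p.__getitem__)] for p in frame_probs]
    return ctc_collapse(path, blank)


if __name__ == "__main__":
    print(ctc_collapse("hh-e-ll-lo"))  # hello (the blank separates the double l)
    frames = [[0.1, 0.8, 0.1], [0.1, 0.7, 0.2], [0.9, 0.05, 0.05], [0.2, 0.1, 0.7]]
    print(greedy_decode(frames, ["-", "a", "b"]))  # ab
```

The blank is what lets the network output doubled letters (the "ll" in "hello") and stay silent on frames that carry no new label, so no frame-level alignment is needed in the training targets.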

Alex kept doing great things with CTC-LSTM as a postdoc in Toronto.[LSTM8]

CTC-LSTM has had massive industrial impact. By 2015, it dramatically improved Google's speech recognition,[GSR15][DL4] which was soon on almost every smartphone. By 2016, more than a quarter of the power of all the Tensor Processing Units in Google's datacenters was used for LSTM (and only 5% for convolutional NNs).[JOU17] Google's on-device speech recognition of 2019 is still based on LSTM.[MIR](Sec. 4)

Microsoft, Baidu, Amazon, Samsung, Apple, and many other major companies are also using LSTM.[DL4][DL1]

2016: The First Superior End-to-End Neural Machine Translation was also Based on LSTM.

In 1995, we already had excellent neural probabilistic models of text.[SNT] It was only in 2001, however, that my PhD student Felix Gers used LSTMs to learn languages that were unlearnable by traditional models (e.g., HMMs): a neural "subsymbolic" model suddenly excelled at learning "symbolic" tasks.[LSTM13]

Compute still had to get 1000 times cheaper, but by 2016-17, both Google Translate[GT16] (whose whitepaper[WU] mentions LSTM over 50 times) and Facebook Translate[FB17] were based on two connected LSTMs,[S2S] one for incoming texts, and one for outgoing translations.[DL4] These systems performed vastly better than their predecessors. By 2017, Facebook's users made 30 billion LSTM-based translations per week[FB17][DL4] (in comparison, the most popular YouTube video, the song "Baby Shark Dance", needed years to achieve only 10 billion clicks[MIR](Sec. 4)).

LSTM-Based Robotics. Since 2003, our team has used LSTM for Reinforcement Learning (RL) and robotics.[LSTM-RL][RPG] In the 2010s, combinations of RL and LSTM became standard, in particular our LSTM trained by policy gradients, or PGs (2007).[RPG07][RPG][LSTMPG] For example, in 2018, a PG-trained LSTM was the core of OpenAI's famous Dactyl, which learned to control a dexterous robot hand without a teacher.[OAI1-1a]
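The core of a policy gradient method is to push the parameters of a stochastic policy in the direction of reward times the gradient of the log-probability of the chosen action (the REINFORCE rule). The minimal sketch below assumes a two-armed bandit with fixed rewards and a memoryless softmax policy; in the work cited above an LSTM plays the policy's role so that it can remember past observations, which this toy omits. The function names and hyperparameters are hypothetical.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_bandit(rewards, steps=2000, lr=0.1, seed=0):
    """Train a softmax policy over len(rewards) arms with the REINFORCE update."""
    rng = random.Random(seed)
    theta = [0.0] * len(rewards)  # policy parameters: one logit per arm
    for _ in range(steps):
        probs = softmax(theta)
        a = rng.choices(range(len(probs)), weights=probs)[0]  # sample an action
        r = rewards[a]                                         # observe its reward
        for i in range(len(theta)):
            # d/d theta_i of log softmax(theta)[a] is 1[i == a] - probs[i]
            theta[i] += lr * r * ((1.0 if i == a else 0.0) - probs[i])
    return softmax(theta)


if __name__ == "__main__":
    print(reinforce_bandit([0.0, 1.0]))  # probability mass moves to the rewarded arm
```

No gradient of the environment is needed: the update only uses sampled actions, their rewards, and the policy's own log-probabilities, which is what makes the approach applicable to robots and games.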

2018-2019: LSTM for Video Games. In 2019, DeepMind (co-founded by a student from my lab) famously beat a pro player in the game of StarCraft, which is known to be much harder than either Chess or Go,[DM2] using AlphaStar, whose brain has a deep LSTM core trained by PG.[DM3] An RL LSTM (with 84% of the model's total parameter count) was also the core of the famous OpenAI Five, which learned to defeat human experts in the Dota 2 video game (2018).[OAI2] Bill Gates called this a "huge milestone in advancing artificial intelligence".[OAI2a][MIR](Sec. 4)[LSTMPG]

LSTM for Healthcare.

A simple Google Scholar search turns up innumerable medical articles that have "LSTM" in their title, e.g., for learning to diagnose, ECG time signal analysis and classification, patient subtyping, clinical concept extraction, diagnosis of arrhythmia, drug-drug interaction extraction, hospitalization prediction, monitoring on personal wearable devices, automatic pain level classification, cardiovascular disease risk factors prediction, 4D medical image segmentation, detection of radiological abnormalities, automated sleep stage classification, blood glucose prediction, diabetes detection, lung cancer detection, respiration prediction, real-time tumor tracking, breast cancer detection from histopathological images, air pollution forecasting, protein model quality assessment, protein secondary structure prediction, modeling genome data, generation of drug-like chemical matter, pandemic forecasting, Covid-19 detection, Covid-19 classification, Covid-19 prediction, and many more.

Other LSTM Applications.

Similar Google Scholar searches turn up many additional LSTM use cases of the last decade, e.g., for chemistry, molecule design, document classification to unclog courts, evaluating the rationality of judicial decisions, lip reading,[LIP1] mapping brain signals to speech (Nature, vol 568, 2019), predicting what's going on in nuclear fusion reactors (same volume, p. 526), stock market prediction, self-driving cars, industrial fault diagnosis and prognosis, predictive maintenance, smart grid applications, sustainable management of energy in microgrids, anomaly detection for industrial big data, traffic congestion prediction, education, tutoring, teaching, analysis and prediction of water quality, air pollutant concentration estimation, forecasting of renewable energy resources, predicting concentrations of fine dust, solar irradiance forecasting under complicated weather conditions, tracking fish, prediction of vegetation dynamics, climate change assessment studies on droughts and floods, and many more.

2. The Decade of Feedforward Neural Networks

An LSTM is an RNN that can in principle implement any program that runs on your laptop. The more limited feedforward NNs (FNNs) cannot (although they are good enough for board games such as Backgammon,[T94] Go,[DM2] and Chess). Hence, if we want to build an NN-based Artificial General Intelligence (AGI), its underlying computational substrate must be something like an RNN. FNNs are fundamentally insufficient. RNNs relate to FNNs like general computers relate to mere calculators.
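A toy illustration of the gap: the parity of a bit string of arbitrary length can be computed by one small recurrent net that reuses the same weights at every time step, whereas an FNN has a fixed input width chosen in advance. The sketch below hand-wires (rather than learns) such a recurrent net from threshold units; the weights and function names are assumptions chosen for illustration, not a trained model.

```python
def step(z):
    """Threshold unit: 1 if the weighted input is positive, else 0."""
    return 1 if z > 0 else 0


def parity_cell(h, x):
    """One time step of a hand-wired recurrent net computing h XOR x."""
    or_unit = step(h + x - 0.5)    # fires if at least one input is 1
    and_unit = step(h + x - 1.5)   # fires only if both inputs are 1
    return step(or_unit - and_unit - 0.5)  # OR but not AND, i.e. XOR


def rnn_parity(bits):
    """Run the same cell over a sequence of any length; the state holds the parity so far."""
    h = 0
    for x in bits:
        h = parity_cell(h, x)
    return h


if __name__ == "__main__":
    print(rnn_parity([1, 0, 1, 1]))  # 1: three ones
    print(rnn_parity([1] * 1000))    # 0: the same three units handle any length
```

The recurrent net has a fixed number of units and weights, yet its state lets it process inputs longer than anything fixed in advance; an FNN would have to be built (and sized) for one particular input length.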

Nevertheless, our Decade of Deep Learning was also about FNNs, as described next.

2010: Deep FNNs Don't Need Unsupervised Pre-Training!

In 2009, many thought that deep FNNs could not learn much without unsupervised pre-training, a technique I introduced in 1991.[MIR][UN-UN5] However, in 2010, our team with my postdoc Dan Ciresan[MLP1-3] showed that deep FNNs can be trained by plain backpropagation[BP1-2][BPA-C][R7] and do not at all require unsupervised pre-training for important applications. Our system set a new performance record[MLP1] on the then famous and widely used image recognition benchmark called MNIST. This was achieved by greatly accelerating traditional FNNs on highly parallel graphics processing units called GPUs. A reviewer called this a "wake-up call to the machine learning community."

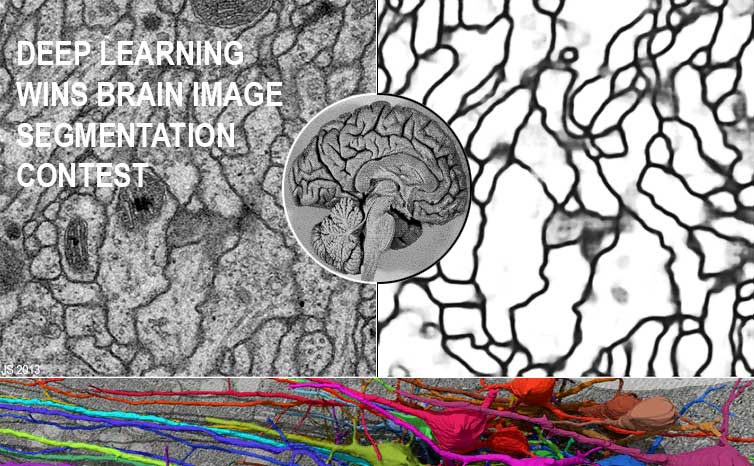

2011: CNN-Based Computer Vision Revolution. Our team in Switzerland (Dan Ciresan et al.) greatly sped up the convolutional NNs (CNNs) invented and developed by others since the 1970s.[CNN1-5c] The first superior award-winning CNN, often called DanNet, was created in 2011.[GPUCNN1-3,5] It was a practical breakthrough: much deeper and faster than earlier GPU-accelerated CNNs.[GPUCNN][GPUCNN1] Already in 2011, it showed that deep learning worked far better than the existing state of the art for recognizing objects in images. In fact, it won 4 important computer vision competitions in a row between May 15, 2011, and September 10, 2012,[GPUCNN5] before a similar GPU-accelerated CNN of the University of Toronto won the ImageNet 2012 contest.[GPUCNN4-5][R6][DLP]

At IJCNN 2011 in Silicon Valley, DanNet blew away the competition through the first superhuman visual pattern recognition in a contest. Even the New York Times mentioned this.

DanNet's error rate was half that of humans, a third of that of the closest artificial competitor, and a sixth of that of the best non-neural method.

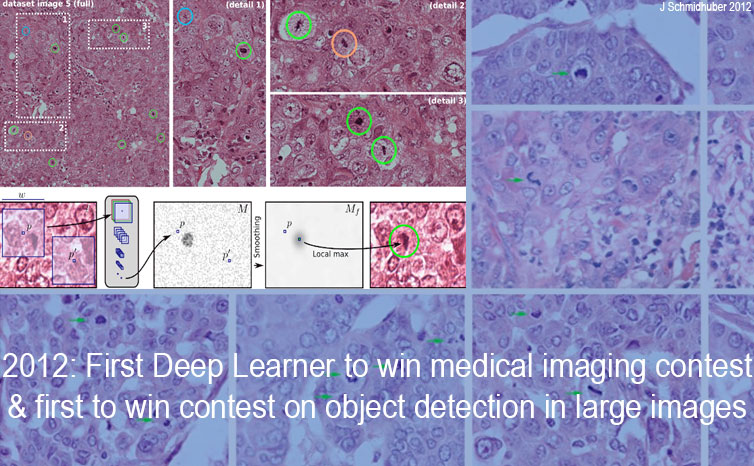

DanNet was also the first deep CNN to win a Chinese handwriting contest (ICDAR 2011), an image segmentation contest (ISBI, May 2012), a contest on object detection in large images (ICPR, 10 Sept 2012), and, at the same time, a medical imaging contest on cancer detection (all before ImageNet 2012).[GPUCNN4-5][R6]

Our CNN image scanners were 1000 times faster than previous methods,[SCAN] with tremendous importance for healthcare etc. Today IBM, Siemens, Google and many startups are pursuing this approach (healthcare accounts for 10% of the world's GDP), and much of modern computer vision is extending our work of 2011.[MIR](Sec. 19)

It is especially gratifying to observe our work being used by the healthcare sector, helping to prolong human lives, particularly in light of the Covid-19 pandemic that is ravaging the world at the time of writing.

Already in 2010, we introduced our deep and fast GPU-based NNs to ArcelorMittal, the world's largest steelmaker, and were able to greatly improve steel defect detection through CNNs[ST] (before ImageNet 2012). This may have been the first Deep Learning breakthrough in heavy industry, and it helped to jump-start our company NNAISENSE.

The early 2010s saw several other applications of our Deep Learning methods.

Through my students Rupesh Kumar Srivastava and Klaus Greff, the LSTM principle also led to our Highway Networks[HW1] of May 2015, the first working very deep FNNs with hundreds of layers, 10 times deeper than previous FNNs. The Highway Nets were published 7 months before Microsoft's open-gated Highway Net variants (and ImageNet 2015 winners) called ResNets[HW2] (ResNets are like Highway Nets whose gates are always set to 1.0).

The earlier Highway Nets perform roughly as well as ResNets on ImageNet.[HW3]
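The relation between the two layer types can be written elementwise. A Highway layer computes y = H(x) * T(x) + x * C(x), where H is the usual nonlinear transform and T (transform gate) and C (carry gate) are learned sigmoid gates, commonly coupled as C = 1 - T; a residual layer computes y = H(x) + x, which is the special case T = C = 1. The sketch below assumes the gate activations are given as lists of values rather than computed by learned weights, and uses tanh as a stand-in for H; the function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def highway(x, h, t, c):
    """Elementwise highway combination y = h * t + x * c.

    x: layer input, h: transformed input H(x), t: transform gate T(x), c: carry gate C(x).
    """
    return [hi * ti + xi * ci for xi, hi, ti, ci in zip(x, h, t, c)]


def residual(x, h):
    """Residual (ResNet-style) combination y = h + x."""
    return [hi + xi for xi, hi in zip(x, h)]


if __name__ == "__main__":
    x = [0.5, -1.0, 2.0]
    h = [math.tanh(v) for v in x]                # stand-in for a learned transform H(x)
    t = [sigmoid(z) for z in [0.3, -2.0, 1.0]]   # stand-in gate pre-activations
    print(highway(x, h, t, [1.0 - ti for ti in t]))               # coupled gates, C = 1 - T
    print(highway(x, h, [1.0] * 3, [1.0] * 3) == residual(x, h))  # True: gates held open
```

With the coupled form, a gate value near 0 simply copies the input through the layer, which is what lets gradients flow through hundreds of such layers; fixing both gates at 1.0 removes this choice and yields the residual layer.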

3. LSTMs & FNNs/CNNs. LSTMs vs Fast Weight Programmers/Transformers

In the recent Decade of Deep Learning, the recognition of static patterns (such as images) was mostly driven by CNNs (which are FNNs; see Sec. 2), while sequence processing (of speech, text, etc.) was mostly driven by LSTMs (which are RNNs;[L20][I25][K41][MC43][W45][K56][AMH1-2][DLH][NOB] see Sec. 1).

Often CNNs and LSTMs were combined, e.g., for video recognition.

Business Week called LSTM "arguably the most commercial AI achievement".[AV1]

As mentioned above, by 2019 the most famous LSTM paper[LSTM1] got more citations per year than any other computer science paper of the 20th century.[R5] The most cited neural net of the 21st century is an FNN related to LSTM: the above-mentioned ResNet[HW2] (Dec 2015) is an open-gated variant of our earlier Highway Net (May 2015),[HW1][MOST] the FNN version of vanilla LSTM.[LSTM2]

FNNs and LSTMs occasionally invaded each other's territories. For example, multi-dimensional LSTM[LSTM15] does not suffer from the limited fixed patch size of CNNs and excels at certain computer vision problems.[LSTM16] Nevertheless, as of 2020, most of computer vision is still based on CNNs.

The first Large Language Models (LLMs) were based on LSTM. However, towards the end of the decade, despite their limited time windows, FNN-based Transformers[TR1-2] (see the T in ChatGPT) started to excel at Natural Language Processing (NLP), the traditional LSTM domain (see Sec. 1). Remarkably, Transformers also have their roots in our Miraculous Year of 1991![MIR]

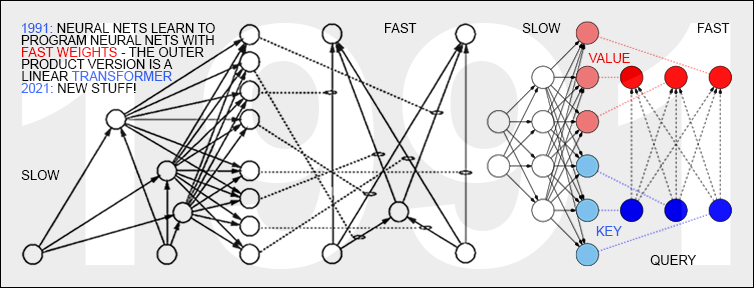

In March 1991, when compute was a million times more expensive than in 2022, even before the LSTM, I published the first Transformer variant, which is now called the unnormalized linear Transformer (ULTRA).[ULTRA][FWP0]

Unlike Google's 2017 quadratic Transformer,[TR1] it had to be efficient: ULTRA's computational costs scale linearly in input size, rather than quadratically. In 1991, no journal would have accepted an NN that scales quadratically.
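The linear scaling can be made concrete. In the fast-weight formulation, a slow net emits a key k_t, value v_t, and query q_t at each step; a fast weight matrix is updated by the outer product W_t = W_{t-1} + v_t k_t^T and read out as y_t = W_t q_t. Unrolling gives y_t = sum over i <= t of v_i (k_i . q_t), which is unnormalized causal linear attention. The minimal pure-Python sketch below assumes the keys, values, and queries are already given (the slow net that produces them is omitted, and all names are hypothetical) and computes both forms: the fast-weight form costs O(n * d^2) for n steps, the explicit attention form O(n^2 * d).

```python
def fast_weight_outputs(keys, values, queries):
    """Unnormalized linear attention via a fast weight matrix: cost linear in sequence length."""
    d_k, d_v = len(keys[0]), len(values[0])
    W = [[0.0] * d_k for _ in range(d_v)]  # fast weights, initially zero
    outputs = []
    for k, v, q in zip(keys, values, queries):
        # write: W += outer(v, k)
        for r in range(d_v):
            for c in range(d_k):
                W[r][c] += v[r] * k[c]
        # read: y = W q
        outputs.append([sum(W[r][c] * q[c] for c in range(d_k)) for r in range(d_v)])
    return outputs


def causal_attention_outputs(keys, values, queries):
    """Same outputs computed as attention over all previous steps: cost quadratic in length."""
    outputs = []
    for t, q in enumerate(queries):
        y = [0.0] * len(values[0])
        for i in range(t + 1):
            score = sum(kc * qc for kc, qc in zip(keys[i], q))  # unnormalized: no softmax
            for r in range(len(y)):
                y[r] += score * values[i][r]
        outputs.append(y)
    return outputs


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.5, -1.0], [2.0, 1.0]]
    values = [[1.0, 2.0], [0.0, -1.0], [3.0, 0.5]]
    queries = [[0.0, 1.0], [1.0, 1.0], [-1.0, 2.0]]
    print(fast_weight_outputs(keys, values, queries))
    print(causal_attention_outputs(keys, values, queries))  # same numbers, up to rounding
```

The fast-weight form never revisits old steps: the matrix W summarizes the whole past in a fixed-size state. The linear Transformers of [TR5] additionally apply a feature map to keys and queries and normalize the output; this sketch omits both.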

My 1993 paper on recurrent ULTRA extensions[FWP2] talked about learning "internal spotlights of attention" (compare the recent attention terminology, e.g., "attention is all you need,"[TR1] and tweets of 2022 & 2023).

Nevertheless, there are still many language tasks that LSTM can learn rapidly and then solve quickly[LSTM13,17] (in time proportional to sentence length), while plain Transformers can't. See recent studies[TR3-4] for additional limitations of Transformers.
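One classic example from this line of work[LSTM13] is the language a^n b^n: some number n of a's followed by exactly n b's. A single counter is all such a task needs, and trained LSTMs are reported to solve it by using a memory cell in roughly this way, which is why one left-to-right pass, in time proportional to the string length, suffices. The hand-coded recognizer below is not learned and is not an LSTM; it only illustrates the counting strategy, and its name is hypothetical.

```python
def accepts_anbn(s):
    """Recognize a^n b^n (n >= 1) in one left-to-right pass with a single counter."""
    count = 0       # plays the role of a memory cell: +1 per 'a', -1 per 'b'
    seen_b = False
    for ch in s:
        if ch == "a":
            if seen_b:      # an 'a' after a 'b' is never allowed
                return False
            count += 1
        elif ch == "b":
            seen_b = True
            count -= 1
            if count < 0:   # more b's than a's so far
                return False
        else:
            return False    # symbol outside the alphabet
    return seen_b and count == 0


if __name__ == "__main__":
    print(accepts_anbn("aaabbb"), accepts_anbn("aabbb"), accepts_anbn("abab"))  # True False False
```

The state is one integer no matter how long the input is, and each symbol is touched exactly once.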

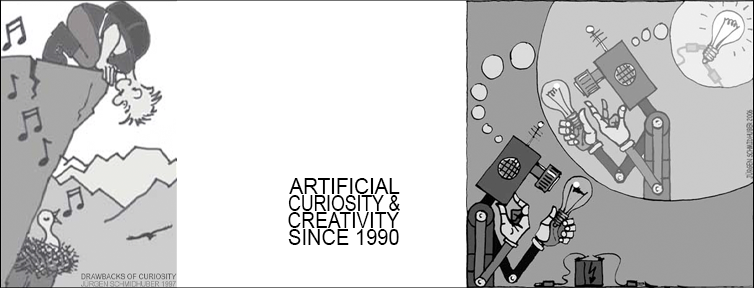

4. The Astounding Power of Adversarial Curiosity (1990): GANs

Another concept that became very popular in the 2010s is that of Generative Adversarial Networks (GANs), e.g.,[GAN0-1] (2010-14). GANs are an instance of my popular adversarial curiosity principle from 1990.[AC90, AC90b][AC09-10] This principle works as follows. One NN (the controller) probabilistically generates outputs; another NN (the world model) sees those outputs and predicts environmental reactions to them. Using gradient descent, the predictor NN minimizes its error, while the generator NN tries to make outputs that maximize this error. One net's loss is the other net's gain.

A 2010 survey[AC10] summarised the work of 1990 as follows: a "neural network as a predictive world model is used to maximize the controller's intrinsic reward, which is proportional to the model's prediction errors."

GANs are a special case of this where the environment simply returns 1 or 0 depending on whether the generator's output is in a given set.[AC20][T22][R2][MIR](Sec. 5)
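The controller/world-model interplay can be simulated in a few lines. The minimal sketch below is a tabular simplification, not the 1990 NN system: the world model is a lookup table of predicted reactions, one per action, trained by gradient steps on its squared prediction error, and the controller is reduced to an epsilon-greedy choice over running averages of its intrinsic reward (the model's prediction error). The deterministic environment, learning rates, and function name are assumptions.

```python
import random


def adversarial_curiosity(reactions, steps=500, lr=0.5, eps=0.1, seed=0):
    """Tabular toy of a controller that seeks out the world model's prediction errors.

    reactions[a] is the (hypothetical, deterministic) environmental reaction to action a.
    Returns the learned world model and the intrinsic reward received at each step.
    """
    rng = random.Random(seed)
    k = len(reactions)
    model = [0.0] * k   # world model: predicted reaction for each action
    value = [1.0] * k   # controller: running estimate of intrinsic reward per action
    rewards = []
    for _ in range(steps):
        # controller: random action with probability eps, else the most "interesting" one
        if rng.random() < eps:
            a = rng.randrange(k)
        else:
            a = max(range(k), key=lambda i: value[i])
        y = reactions[a]                    # environment reacts
        err = (y - model[a]) ** 2           # world model's loss = controller's intrinsic reward
        model[a] += lr * (y - model[a])     # world model: gradient step on 0.5 * squared error
        value[a] += 0.5 * (err - value[a])  # controller: update its reward estimate
        rewards.append(err)
    return model, rewards


if __name__ == "__main__":
    model, rewards = adversarial_curiosity([1.0, -2.0, 3.0])
    print(model)                      # close to the true reactions
    print(rewards[:5], rewards[-5:])  # intrinsic reward fades as the model learns
```

In this learnable, deterministic toy world, the controller keeps steering toward whatever the model still predicts badly until nothing surprising is left, at which point the intrinsic reward fades.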

Other early adversarial machine learning settings[S59][H90] neither involved unsupervised NNs, nor were about modeling data, nor used gradient descent.[AC20]

5. Other Hot Topics of the 2010s: Deep Reinforcement Learning, Meta-Learning, World Models, Distilling NNs, Neural Architecture Search, Attention Learning, Self-Invented Problems ...

In July 2013, our Compressed Network Search[CO2] was the first deep learning model to successfully learn control policies directly from high-dimensional sensory input (video) using deep reinforcement learning (RL) (see Sec. 6 of the survey[DL1]), without any unsupervised pre-training (extending earlier work on large NNs with compact codes;[KO0][KO2] see also more recent work[WAV1][OAI3]).

A few months later, neuroevolution-based RL[K96] also successfully learned to play Atari games.[H13]

Soon afterwards, the company DeepMind also had a Deep RL system for high-dimensional sensory input.[DM1-2][MIR](Sec. 8)

By 2016, DeepMind had a famous superhuman Go player.[DM4]

DeepMind was founded in 2010: the very advent of the 2010s. The first DeepMinders with AI publications and PhDs in computer science came from my lab: a co-founder and employee no. 1.

Our work since 1990 on RL and planning based on a combination of two RNNs called the controller and the world model[PLAN2-6][MIR](Sec. 11) also became popular in the 2010s.

The decade's end also saw a very simple yet novel approach to the old problem of RL.[UDRL]

For decades, few cared about our work on meta-learning or learning to learn, which began in 1987.[META1][FWPMETA1-7][R3] In the 2010s, however, meta-learning finally became a hot topic.[META10][META17]

The same holds for our work since 1990 on Artificial Curiosity & Creativity[AC][MIR](Sec. 5, Sec. 6)[AC90-AC20] and self-invented problems[AC][MIR](Sec. 12) in POWERPLAY style (2011).[PP-PP2]

The same holds for our work since 1990 on Hierarchical RL,[HRL0-4][LEC][MIR](Sec. 10) Deterministic Policy Gradients,[AC90][DPG][DDPG][MIR](Sec. 14) and Synthetic Gradients.[NAN1-5][MIR](Sec. 15)

The same holds for our work since 1991 on encoding data by factorial disentangled representations through adversarial NNs[PM1-2] and other methods[LOC] (see also [IG][MIR](Sec. 7)).

The same holds for our work since 2009 on Neural Architecture Search for LSTM-like architectures that outperform vanilla LSTM in certain applications,[LSTM7][NAS] and for our work since 1991 on compressing or distilling NNs into other NNs.[UN-UN1][DIST2][R4][T22][MIR](Sec. 2)

Already in the early 1990s, we had both of the now common types of neural sequential attention: end-to-end-differentiable "soft" attention (in latent space)[FWP2] through multiplicative units within networks[DEEP1-2] (1965), and "hard" attention (in observation space) in the context of RL.[ATT-ATT1] This led to lots of follow-up work. In the 2010s, many used sequential attention-learning NNs.[MIR](Sec. 9)

Many other concepts of the previous millennium[DL1][MIR] had to wait for the much faster computers of the 2010s to become popular.

As mentioned earlier,[MIR](Sec. 21) when only consulting surveys from the Anglosphere, it is not always clear[DLC][DLH] that Deep Learning was first conceived outside of it.

It started in 1965 in Ukraine (then part of the USSR) with the first nets of arbitrary depth that really learned.[DEEP1-2][R8] Five years later, modern backpropagation was published "next door" in Finland (1970).[BP1] The basic deep convolutional NN architecture (now widely used) was invented in the 1970s in Japan,[CNN1] where NNs with convolutions were later (1987-88) also combined with "weight sharing" and backpropagation.[CNN1a] We are standing on the shoulders of these authors and many others (see 888 references in [DL1]).

Our own work since the 1980s mostly took place in Germany and Switzerland.

Of course, Deep Learning is just a small part of AI, in most applications limited to passive pattern recognition. We view it as a by-product of our research on more general artificial intelligence, which includes optimal universal learning machines such as the Gödel machine (2003-), asymptotically optimal search for programs running on general-purpose computers such as RNNs, etc.

The work summarized above has yielded many benefits for humanity, ranging from better healthcare to better communication across national borders. However, since many NNs are trained on data from real persons, the privacy implications of this kind of work remain of paramount importance. Sec. 6 will discuss aspects thereof.

6. The Future of Data Markets and Privacy

AIs are trained on data. If it is true that data is the new oil, then it should have a price, just like oil. In the 2010s, the major surveillance platforms[SV1] (see Sec. 1) did not offer users any money for their data and the transitive loss of privacy.

The 2020s, however, will likely see attempts at creating efficient data markets to figure out the data's true financial value through the interplay between supply and demand. Even some of the sensitive medical data will not be priced by governmental regulators but by patients (and healthy persons) who own it and who may sell parts thereof as micro-entrepreneurs in a healthcare data market.[SR18][CNNTV2]

Are surveillance and loss of privacy inevitable consequences of increasingly complex societies? Super-organisms such as cities and states and companies consist of numerous people, just like people consist of numerous cells. These cells enjoy little privacy. They are constantly monitored by specialized "police cells" and "border guard cells": Are you a cancer cell? Are you an external intruder, a pathogen? Individual cells sacrifice their freedom for the benefits of being part of a multicellular organism.

The same holds for super-organisms such as nations.[FATV]

Over 5000 years ago, writing enabled recorded history and thus became its inaugural and most important invention. Its initial purpose, however, was to facilitate surveillance, to track citizens and their tax payments. The more complex a super-organism, the more comprehensive its collection of information about its constituents.

200 years ago, at least the parish priest in each village knew everything about all the village people, even about those who did not confess, because they appeared in the confessions of others. Also, everyone soon knew about the stranger who had entered the village, because some occasionally peered out of the window, and what they saw got around. Such control mechanisms were temporarily lost through anonymization in rapidly growing cities, but are now returning with the help of new surveillance devices such as smartphones as part of digital nervous systems that tell companies and governments a lot about billions of users.[SV1-2]

Cameras and drones[DR16] etc. are becoming ever tinier and more ubiquitous; excellent recognition of faces etc. is becoming cheaper and cheaper, and many will use it to identify others anywhere on earth: the big wide world will not offer any more privacy than the local village. Is this good or bad? Some nations may find it easier than others to become more complex kinds of super-organisms at the expense of the privacy rights of their constituents.[FATV]

7. Outlook: 2010s vs 2020s—Virtual AI vs Real AI?

In the 2010s, AI excelled in virtual worlds, e.g., in video games, board games, and especially on the major WWW platforms (see Sec. 1). Most AI profits were in marketing. Passive pattern recognition through NNs helped some of the most valuable companies, such as Amazon, Alibaba, Google, Facebook, and Tencent, to keep users longer on their platforms, to predict which items they might be interested in, to make them click on tailored ads, etc.

However, marketing is just a tiny part of the world economy. What will the next decade bring?

In the 2020s, Active AI will more and more invade the real world, driving industrial processes and machines and robots, a bit like in the movies (better self-driving cars[CAR1] will be part of this, especially fleets of simple electric cars with small & cheap batteries[CAR2]). Although the real world is much more complex than virtual worlds, and less forgiving, the coming wave of "Real World AI" or simply "Real AI" will be much bigger than the previous AI wave, because it will affect all of production, and thus a much bigger part of the economy. That is why for many years I have been focusing my efforts with NNAISENSE on Real AI in the physical world.

Some claim that big platform companies with lots of data from many users will dominate AI. That's absurd. How does a baby learn to become intelligent? Not "by downloading lots of data from Facebook".[NAT2] No, it learns by actively creating its own data through its own self-invented experiments with toys etc., learning to predict the consequences of its actions, and using this predictive model of physics and the world to become a better and better planner and problem solver.[AC90][PLAN-PLAN6]

We already know how to build AIs that also learn a bit like babies, using what is called artificial curiosity[AC,AC90-AC10][PP-PP2] and incorporating mechanisms that aid in reasoning[FWP3a][DNC][DNC2] and in the extraction of abstract objects from raw data.[UN1][OBJ1-3]

In the not too distant future, this will help to create what I call in interviews "see-and-do robotics": quickly teach an NN to control a complex robot with many degrees of freedom to execute complex tasks, such as assembling a smartphone, solely by visual demonstration and by talking to it, without touching or otherwise directly guiding the robot, a bit like we'd teach a kid.[FA18] This will revolutionize many aspects of our civilization.

Like all emergent technologies, AI continues to be investigated by many for its potential military applications. While this means that some sort of AI arms race is likely inevitable,[SPE17] it is comforting to recognize that almost all of AI research in the 2020s will be about improving the human condition—not about obtaining any form of military superiority. For the benefit of each and every human, we refuse to let AI be solely in the hands of a small number of massive multinational corporations or global superpowers.

Our goal is to make human lives longer, healthier, easier, and happier.[SR18]

Our motto is: AI For All!

Since 1941, compute has been getting 10 times cheaper every 5 years.[ACM16] This trend won't break anytime soon, which means that everybody will own cheap but powerful AIs that will help them in all aspects of their daily lives.
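The rule of thumb above is easy to turn into arithmetic: if compute gets 10 times cheaper every 5 years, the cost factor between two years is 10^((later - earlier) / 5). The sketch below assumes the trend holds exactly (the function name is hypothetical). It is consistent with two figures used earlier in this post: a factor of 1000 between 2001 and 2016, and a factor on the order of a million (about 1.6 million) between 1991 and 2022.

```python
def cost_reduction_factor(start_year, end_year, factor=10.0, period_years=5.0):
    """How many times cheaper compute gets between two years under a fixed exponential trend."""
    # every period_years years the price drops by `factor`
    return factor ** ((end_year - start_year) / period_years)


if __name__ == "__main__":
    print(round(cost_reduction_factor(2001, 2016)))        # 1000
    print(f"{cost_reduction_factor(1991, 2022):.2e}")      # 1.58e+06
```

Real price curves are noisy, so such numbers are orders of magnitude, not forecasts.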

Beyond 2030, in the more distant future, most self-driven, self-replicating, curious, creative, and conscious AIs[INV16] will go where most of the physical resources are, eventually colonizing and transforming the entire visible universe[ACM16][SA17][FA15][SP16][DLH] (which may be just one of countably many computable universes[ALL1-3]).

Acknowledgments

Thanks to several expert reviewers for useful comments, and to Dylan Ashley for comments on the revised version. (Let me know at juergen@idsia.ch if you can spot any remaining error.) Many additional publications of the past decade can be found on my publication page and my arXiv page.

The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

References

[MIR] J. Schmidhuber (AI Blog, Oct 2019, updated 2025). Deep Learning: Our Miraculous Year 1990-1991. Preprint arXiv:2005.05744. The deep learning neural networks (NNs) of our team have revolutionised pattern recognition & machine learning & AI. Many of the basic ideas behind this revolution were published within fewer than 12 months in our "Annus Mirabilis" 1990-1991 at TU Munich, including principles of (1) LSTM, the most cited AI of the 20th century (based on constant error flow through residual connections); (2) ResNet, the most cited AI of the 21st century (based on our LSTM-inspired Highway Network, 10 times deeper than previous NNs); (3) GAN (for artificial curiosity and creativity); (4) Transformer (the T in ChatGPT; see the 1991 Unnormalized Linear Transformer); (5) Pre-training for deep NNs (the P in ChatGPT); (6) NN distillation (see DeepSeek); (7) recurrent World Models, and more.

[DEC] J. Schmidhuber (AI Blog, 02/20/2020; updated 2025). The 2010s: Our Decade of Deep Learning / Outlook on the 2020s. The recent decade's most important developments and industrial applications based on our AI, with an outlook on the 2020s, also addressing privacy and data markets.

[BW] H. Bourlard, C. J. Wellekens (1989). Links between Markov models and multilayer perceptrons. NIPS 1989, p. 502-510.

[BRI] Bridle, J.S. (1990). Alpha-Nets: A Recurrent "Neural" Network Architecture with a Hidden Markov Model Interpretation, Speech Communication, vol. 9, no. 1, pp. 83-92.

[BOU] H Bourlard, N Morgan (1993). Connectionist speech recognition. Kluwer, 1993.

[HYB12] Hinton, G. E., Deng, L., Yu, D., Dahl, G. E., Mohamed, A., Jaitly, N., Senior, A., Vanhoucke, V., Nguyen, P., Sainath, T. N., and Kingsbury, B. (2012). Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Process. Mag., 29(6):82-97.

This work did not cite the earlier LSTM[LSTM0-6] trained by Connectionist Temporal Classification (CTC, 2006).[CTC] CTC-LSTM was successfully applied to speech in 2007[LSTM4] (also with hierarchical LSTM stacks[LSTM14]) and became the first superior end-to-end neural speech recogniser that outperformed the state of the art, dramatically improving Google's speech recognition.[GSR][GSR15][DL4] This was very different from the hybrid methods used since the late 1980s, which combined NNs and traditional approaches such as hidden Markov models (HMMs).[BW][BRI][BOU] [HYB12] still used the old hybrid approach. Later, however, the first author switched to LSTM, too.[LSTM8]

[TR1] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser, I. Polosukhin (2017). Attention is all you need. NIPS 2017, pp. 5998-6008. This paper introduced the name "Transformers" for a now widely used NN type. It did not cite the 1991 publication on what's now called unnormalised "linear Transformers" with "linearized self-attention."[ULTRA] Schmidhuber also introduced the now popular attention terminology in 1993.[ATT][FWP2][R4] See tweet of 2022 for 30-year anniversary.

[TR2] J. Devlin, M. W. Chang, K. Lee, K. Toutanova (2018). BERT: Pre-training of deep bidirectional transformers for language understanding. Preprint arXiv:1810.04805.

[TR3] K. Tran, A. Bisazza, C. Monz. The Importance of Being Recurrent for Modeling Hierarchical Structure. EMNLP 2018, p 4731-4736. ArXiv preprint 1803.03585.

[TR4] M. Hahn. Theoretical Limitations of Self-Attention in Neural Sequence Models. Transactions of the Association for Computational Linguistics, Volume 8, p.156-171, 2020.

[TR5] A. Katharopoulos, A. Vyas, N. Pappas, F. Fleuret. Transformers are RNNs: Fast autoregressive Transformers with linear attention. In Proc. Int. Conf. on Machine Learning (ICML), July 2020.

[TR6] K. Choromanski, V. Likhosherstov, D. Dohan, X. Song, A. Gane, T. Sarlos, P. Hawkins, J. Davis, A. Mohiuddin, L. Kaiser, et al. Rethinking attention with Performers. In Int. Conf. on Learning Representations (ICLR), 2021.

[T94] G. Tesauro. TD-Gammon, a Self-Teaching Backgammon Program, Achieves Master-Level Play. Neural Computation 6:2, p 215-219, 1994.

[DM4] Mastering the game of Go with deep neural networks and tree search. D. Silver, A. Huang, C. J. Maddison, A. Guez, L. Sifre, G. Van Den Driessche, J. Schrittwieser, I. Antonoglou, V. Panneershelvam, M. Lanctot, S. Dieleman, D. Grewe, J. Nham, N. Kalchbrenner, I. Sutskever, T. Lillicrap, M. Leach, K. Kavukcuoglu, T. Graepel, and D. Hassabis. Nature 529:7587, p 484-489, 2016.

[T22] J. Schmidhuber (2022). Scientific Integrity and the History of Deep Learning: The 2021 Turing Lecture, and the 2018 Turing Award. Technical Report IDSIA-77-21, IDSIA, Lugano, Switzerland, 2022.

[HIN] J. Schmidhuber (AI Blog, 2020). Critique of 2019 Honda Prize. Science must not allow corporate PR to distort the academic record.

[NOB] J. Schmidhuber.

A Nobel Prize for Plagiarism.

Technical Report IDSIA-24-24 (7 Dec 2024).

Sadly, the Nobel Prize in Physics 2024 for Hopfield & Hinton is a Nobel Prize for plagiarism. They republished methodologies for artificial neural networks developed in Ukraine and Japan by Ivakhnenko and Amari in the 1960s & 1970s, as well as other techniques, without citing the original papers. Even in later surveys, they didn't credit the original inventors (thus turning what may have been unintentional plagiarism into a deliberate form). None of the important algorithms for modern Artificial Intelligence were created by Hopfield & Hinton.

See also popular tweet1, tweet2, and LinkedIn post.

[CAR1]

Prof. Schmidhuber's highlights of robot car history (2007, updated 2011).

Link.

[NAT2]

D. Heaven.

Why deep-learning AIs are so easy to fool.

Nature 574, 163-166 (2019).

Link.

"A baby doesn't learn by downloading data from Facebook," says Schmidhuber.

[SV1]

S. Zuboff (2019). The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. NY: PublicAffairs.

[SV2]

Facial recognition changes China.

Twitter discussion @hardmaru

[LIP1]

M. Wand, J. Koutnik, J. Schmidhuber. Lipreading with Long Short-Term Memory. Proc. ICASSP, p 6115-6119, 2016.

[DR16]

A Giusti, J Guzzi, DC Ciresan, F He, JP Rodriguez, F Fontana, M Faessler, C Forster, J Schmidhuber, G Di Caro, D Scaramuzza, LM Gambardella (2016):

First drone with onboard vision based on deep neural nets learns to navigate in the forest.

Youtube video (Feb 2016).

[DNC2]

R. Csordas, J. Schmidhuber.

Improving Differentiable Neural Computers Through Memory Masking, De-allocation, and Link Distribution Sharpness Control.

International Conference on Learning Representations (ICLR 2019).

PDF.

[UDRL] Upside Down Reinforcement Learning (2019).

Google it.

[K96] Kaelbling, L. P., Littman, M. L., & Moore, A. W. (1996). Reinforcement learning: A survey. Journal of Artificial Intelligence Research, 4, 237-285.

[H13] M. Hausknecht, J. Lehman, R. Miikkulainen, P. Stone. A Neuroevolution Approach to General Atari Game Playing. IEEE Transactions on Computational Intelligence and AI in Games, 16 Dec. 2013.

[LOC]

S. Hochreiter and J. Schmidhuber.

Feature extraction through LOCOCODE.

PDF.

Neural Computation 11(3): 679-714, 1999

[OBJ1] Greff, K., Rasmus, A., Berglund, M., Hao, T., Valpola, H., Schmidhuber, J. (2016). Tagger: Deep unsupervised perceptual grouping. NIPS 2016, pp. 4484-4492.

[OBJ2] Greff, K., Van Steenkiste, S., Schmidhuber, J. (2017). Neural expectation maximization. NIPS 2017, pp. 6691-6701.

[OBJ3] van Steenkiste, S., Chang, M., Greff, K., Schmidhuber, J. (2018). Relational neural expectation maximization: Unsupervised discovery of objects and their interactions. ICLR 2018.

[IG]

X Chen, Y Duan, R Houthooft, J Schulman, I Sutskever, P Abbeel (2016). InfoGAN: Interpretable representation learning by information maximizing generative adversarial nets. NIPS 2016, pp. 2172-2180.

[WAV1] van Steenkiste, S., Koutnik, J., Driessens, K., Schmidhuber, J. (July 2016). A wavelet-based encoding for neuroevolution. GECCO 2016, pp. 517-524.

[OAI3] Salimans, T., Ho, J., Chen, X., Sidor, S., Sutskever, I. (2017). Evolution strategies as a scalable alternative to reinforcement learning. Preprint arXiv:1703.03864.

[SLG] S. Le Grand. Medium (2019).

TLDR: Schmidhuber's Lab did it first.

[MIR]-related discussions (mostly of 2019) with many comments on reddit/ml (the largest machine learning forum with over 800k subscribers), ranked by votes (my name is often misspelled):

[R2] Reddit/ML, 2019. J. Schmidhuber really had GANs in 1990.

[R3] Reddit/ML, 2019. NeurIPS 2019 Bengio Schmidhuber Meta-Learning Fiasco.

Schmidhuber started metalearning (learning to learn, now a hot topic) in 1987,[META1][META] long before Bengio, who suggested in public at N(eur)IPS 2019 that he did it before Schmidhuber.

[R4] Reddit/ML, 2019. Five major deep learning papers by G. Hinton did not cite similar earlier work by J. Schmidhuber.

[R5] Reddit/ML, 2019. The 1997 LSTM paper by Hochreiter & Schmidhuber has become the most cited deep learning research paper of the 20th century.

[R6] Reddit/ML, 2019. DanNet, the CUDA CNN of Dan Ciresan in J. Schmidhuber's team, won 4 image recognition challenges prior to AlexNet.

[R7] Reddit/ML, 2019. J. Schmidhuber on Seppo Linnainmaa, inventor of backpropagation in 1970.

[R8] Reddit/ML, 2019. J. Schmidhuber on Alexey Ivakhnenko, godfather of deep learning 1965.

[R15] Reddit/ML, 2021. J. Schmidhuber's work on fast weights from 1991 is similar to linearized variants of Transformers

Selected interviews of the 2010s in newspapers and magazines (use DeepL or Google Translate (see Sec. 1) to translate German texts). Hundreds of additional interviews and news articles (mostly in English or German) can be found through search engines.

[ACM16] ACM interview by S. Ibaraki (2016). Chat with J. Schmidhuber: Artificial Intelligence & Deep Learning—Now & Future.

Link.

[INV16] J. Carmichael. AI gained consciousness in 1991... J. Schmidhuber is convinced the ultimate breakthrough already happened. Inverse, Dec 2016.

Link.

[SR18] JS interviewed by Swiss Re (2018): The intelligence behind artificial intelligence.

Link.

[CNNTV2] JS interviewed by CNNmoney (2019): Part 2 on a healthcare data market where every patient can become a micro-entrepreneur. (Part 1 is more general.)

[FA15] Intelligente Roboter werden vom Leben fasziniert sein.

(Intelligent robots will be fascinated by life.)

FAZ, 1 Dec 2015.

Link.

[SP16] JS interviewed by C. Stoecker:

KI wird das All erobern. (AI will conquer the universe.)

SPIEGEL, 6 Feb 2016.

Link.

[FA18] KI ist eine Riesenchance für Deutschland.

(AI is a huge opportunity for Germany.)

FAZ, 2018.

Link.

[SPE17] JS interviewed by P. Hummel: Ein Wettrüsten wird sich nicht verhindern lassen. (An AI arms race is inevitable.)

Spektrum, 28 Aug 2017.

Link.

[CAR2]

Interview with J. Schmidhuber at the Geneva Motor Show 2019: KI wird die Autobranche revolutionieren. (AI will revolutionise the car industry.) Blick, 11 March 2019.

Link.

[FATV] AI & Economy. Public Night Talk with J. Schmidhuber, organised by FAZ and Hertie Stiftung (2019, in German).

Youtube link.

[DL1] J. Schmidhuber, 2015.

Deep learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

Got the first Best Paper Award ever issued by the journal Neural Networks, founded in 1988.

[DL2] J. Schmidhuber, 2015.

Deep Learning.

Scholarpedia, 10(11):32832.

[DL4] J. Schmidhuber (AI Blog, 2017).

Our impact on the world's most valuable public companies: Apple, Google, Microsoft, Facebook, Amazon... By 2015-17, neural nets developed in my labs were on over 3 billion devices such as smartphones, and used many billions of times per day, consuming a significant fraction of the world's compute. Examples: greatly improved (CTC-based) speech recognition on all Android phones, greatly improved machine translation through Google Translate and Facebook (over 4 billion LSTM-based translations per day), Apple's Siri and QuickType on all iPhones, the answers of Amazon's Alexa, etc. Google's 2019 on-device speech recognition (on the phone, not the server) is still based on LSTM.

[DLC] J. Schmidhuber, 2015. Critique of Paper by self-proclaimed "Deep Learning Conspiracy" (Nature 521 p 436). June 2015.

HTML.

[DLH]

J. Schmidhuber (AI Blog, 2022).

Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Lugano, Switzerland, 2022.

Preprint arXiv:2212.11279.

Tweet of 2022.

[DLP]

J. Schmidhuber (AI Blog, 2023).

How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023.

Tweet of 2023.

[AV1] A. Vance. Google, Amazon and Facebook Owe Jürgen Schmidhuber a Fortune—This Man Is the Godfather the AI Community Wants to Forget. Business Week, Bloomberg, May 15, 2018.

[KO0]

J. Schmidhuber.

Discovering problem solutions with low Kolmogorov complexity and high generalization capability.

Technical Report FKI-194-94, Fakultät für Informatik, Technische Universität München, 1994.

PDF.

Also at ICML'95.

[KO2]

J. Schmidhuber.

Discovering neural nets with low Kolmogorov complexity and high generalization capability.

Neural Networks, 10(5):857-873, 1997.

PDF.

[CO1]

J. Koutnik, F. Gomez, J. Schmidhuber (2010). Evolving Neural Networks in Compressed Weight Space. Proceedings of the Genetic and Evolutionary Computation Conference (GECCO-2010), Portland, 2010.

PDF.

[CO2]

J. Koutnik, G. Cuccu, J. Schmidhuber, F. Gomez.

Evolving Large-Scale Neural Networks for Vision-Based Reinforcement Learning.

In Proceedings of the Genetic and Evolutionary Computation Conference (GECCO), Amsterdam, July 2013.

PDF.

[CO3]

R. K. Srivastava, J. Schmidhuber, F. Gomez.

Generalized Compressed Network Search.

Proc. GECCO 2012.

PDF.

[DM1]

V. Mnih, K. Kavukcuoglu, D. Silver, A. Graves, I. Antonoglou, D. Wierstra, M. Riedmiller. Playing Atari with Deep Reinforcement Learning. Tech Report, 19 Dec. 2013, arXiv:1312.5602.

[DM2] V. Mnih, K. Kavukcuoglu, D. Silver, A. A. Rusu, J. Veness, M. G. Bellemare, A. Graves, M. Riedmiller, A. K. Fidjeland, G. Ostrovski, S. Petersen, C. Beattie, A. Sadik, I. Antonoglou, H. King, D. Kumaran, D. Wierstra, S. Legg, D. Hassabis. Human-level control through deep reinforcement learning. Nature, vol. 518, pp. 529-533, 26 Feb. 2015.

Link.

DeepMind's first famous paper. Its abstract claims: "While reinforcement learning agents have achieved some successes in a variety of domains, their applicability has previously been limited to domains in which useful features can be handcrafted, or to domains with fully observed, low-dimensional state spaces." It also claims to bridge "the divide between high-dimensional sensory inputs and actions." Similarly, the first sentence of the abstract of the earlier tech report version[DM1] of [DM2] claims to "present the first deep learning model to successfully learn control policies directly from high-dimensional sensory input using reinforcement learning."

However, the first such system (requiring no unsupervised pre-training) was created earlier by Jan Koutnik et al. in Schmidhuber's lab.[CO2] DeepMind was co-founded by Shane Legg, a PhD student from this lab; he and Daan Wierstra (another PhD student of Schmidhuber and DeepMind's first employee) were the first people at DeepMind who had AI publications and PhDs in computer science. More.

[DM3]

S. Stanford. DeepMind's AI, AlphaStar Showcases Significant Progress Towards AGI. Medium ML Memoirs, 2019.

AlphaStar has a "deep LSTM core."

[OAI1]

G. Powell, J. Schneider, J. Tobin, W. Zaremba, A. Petron, M. Chociej, L. Weng, B. McGrew, S. Sidor, A. Ray, P. Welinder, R. Jozefowicz, M. Plappert, J. Pachocki, M. Andrychowicz, B. Baker.

Learning Dexterity. OpenAI Blog, 2018.

[OAI1a]

OpenAI, M. Andrychowicz, B. Baker, M. Chociej, R. Jozefowicz, B. McGrew, J. Pachocki, A. Petron, M. Plappert, G. Powell, A. Ray, J. Schneider, S. Sidor, J. Tobin, P. Welinder, L. Weng, W. Zaremba.

Learning Dexterous In-Hand Manipulation. arXiv:1808.00177 (PDF).

[OAI2]

OpenAI:

C. Berner, G. Brockman, B. Chan, V. Cheung, P. Debiak, C. Dennison, D. Farhi, Q. Fischer, S. Hashme, C. Hesse, R. Jozefowicz, S. Gray, C. Olsson, J. Pachocki, M. Petrov, H. P. de Oliveira Pinto, J. Raiman, T. Salimans, J. Schlatter, J. Schneider, S. Sidor, I. Sutskever, J. Tang, F. Wolski, S. Zhang (Dec 2019).

Dota 2 with Large Scale Deep Reinforcement Learning.

Preprint arXiv:1912.06680.

An LSTM accounts for 84% of the model's total parameter count.

[OAI2a]

J. Rodriguez. The Science Behind OpenAI Five that just Produced One of the Greatest Breakthrough in the History of AI. Towards Data Science, 2018. An LSTM with 84% of the model's total parameter count was the core of OpenAI Five.

[L20]

W. Lenz (1920). Beiträge zum Verständnis der magnetischen Eigenschaften in festen Körpern. (Contributions to the understanding of the magnetic properties of solid bodies.) Physikalische Zeitschrift, 21: 613-615.

[I25]

E. Ising (1925). Beitrag zur Theorie des Ferromagnetismus. (Contribution to the theory of ferromagnetism.) Z. Phys., 31 (1): 253-258, 1925.

First non-learning recurrent NN architecture: the Lenz-Ising model.

[K41]

H. A. Kramers and G. H. Wannier (1941). Statistics of the Two-Dimensional Ferromagnet. Phys. Rev. 60, 252 and 263, 1941.

[K56]

S.C. Kleene. Representation of Events in Nerve Nets and Finite Automata. Automata Studies, Editors: C.E. Shannon and J. McCarthy, Princeton University Press, p. 3-42, Princeton, N.J., 1956.

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of the Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[W45]

G. H. Wannier (1945).

The Statistical Problem in Cooperative Phenomena.

Rev. Mod. Phys. 17, 50.

[AMH1]

S. I. Amari (1972).

Learning patterns and pattern sequences by self-organizing nets of threshold elements. IEEE Transactions, C 21, 1197-1206, 1972.

PDF.

First published learning RNN.

First publication of what was later sometimes called the Hopfield network[AMH2] or Amari-Hopfield Network.

[AMH2]

J. J. Hopfield (1982). Neural networks and physical systems with emergent collective computational abilities. Proc. of the National Academy of Sciences, vol. 79, pages 2554-2558, 1982.

The basic equations of the Hopfield network or Amari-Hopfield Network were published in 1972 by Amari.[AMH1]

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. (Investigations on dynamic neural networks.) Diploma thesis, TUM, 1991 (advisor J. Schmidhuber). PDF.

More on the Fundamental Deep Learning Problem.

[VAN2] Y. Bengio, P. Simard, P. Frasconi. Learning long-term dependencies with gradient descent is difficult. IEEE TNN 5(2), p 157-166, 1994.

Results are essentially identical to those of Schmidhuber's diploma student Sepp Hochreiter (1991).[VAN1] Even after a common publication,[VAN3] the first author of [VAN2] published papers[VAN4] that cited only their own [VAN2] but not the original work.

[VAN3] S. Hochreiter, Y. Bengio, P. Frasconi, J. Schmidhuber. Gradient flow in recurrent nets: the difficulty of learning long-term dependencies. In S. C. Kremer and J. F. Kolen, eds., A Field Guide to Dynamical Recurrent Neural Networks. IEEE press, 2001.

PDF.

[LSTM0]

S. Hochreiter and J. Schmidhuber.

Long Short-Term Memory.

TR FKI-207-95, TUM, August 1995.

PDF.

[LSTM1] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF.

Based on [LSTM0]. More.

[LSTM2] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000.

PDF.

The "vanilla LSTM architecture" with forget gates that everybody is using today, e.g., in Google's TensorFlow.

[LSTM3] A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005.

PDF.

[LSTM4]

S. Fernandez, A. Graves, J. Schmidhuber. An application of recurrent neural networks to discriminative keyword spotting.

Intl. Conf. on Artificial Neural Networks ICANN'07, 2007.

PDF.

[LSTM5] A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009.

PDF.

[LSTM6] A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. Advances in Neural Information Processing Systems 22 (NIPS 2009), p 545-552, Vancouver, MIT Press, 2009.

PDF.

[LSTM7] J. Bayer, D. Wierstra, J. Togelius, J. Schmidhuber.

Evolving memory cell structures for sequence learning.

Proc. ICANN-09, Cyprus, 2009.

PDF.

[LSTM8] A. Graves, A. Mohamed, G. E. Hinton. Speech Recognition with Deep Recurrent Neural Networks. ICASSP 2013, Vancouver, 2013.

PDF.

[LSTM9]

O. Vinyals, L. Kaiser, T. Koo, S. Petrov, I. Sutskever, G. Hinton.

Grammar as a Foreign Language. Preprint arXiv:1412.7449 [cs.CL].

[LSTM10]

A. Graves, D. Eck, N. Beringer, J. Schmidhuber. Biologically Plausible Speech Recognition with LSTM Neural Nets. In J. Ijspeert (Ed.), First Intl. Workshop on Biologically Inspired Approaches to Advanced Information Technology, Bio-ADIT 2004, Lausanne, Switzerland, p. 175-184, 2004.

PDF.

[LSTM11]

N. Beringer, A. Graves, F. Schiel, J. Schmidhuber. Classifying unprompted speech by retraining LSTM Nets. In W. Duch et al. (Eds.): Proc. Intl. Conf. on Artificial Neural Networks ICANN'05, LNCS 3696, pp. 575-581, Springer-Verlag Berlin Heidelberg, 2005.

[LSTM12]

D. Wierstra, F. Gomez, J. Schmidhuber. Modeling systems with internal state using Evolino. In Proc. of the 2005 conference on genetic and evolutionary computation (GECCO), Washington, D. C., pp. 1795-1802, ACM Press, New York, NY, USA, 2005. Got a GECCO best paper award.

[LSTM13]

F. A. Gers and J. Schmidhuber.

LSTM Recurrent Networks Learn Simple Context-Free and Context-Sensitive Languages.

IEEE Transactions on Neural Networks 12(6):1333-1340, 2001.

PDF.

[LSTM14]

S. Fernandez, A. Graves, J. Schmidhuber.

Sequence labelling in structured domains with hierarchical recurrent neural networks. In Proc. IJCAI 07, p. 774-779, Hyderabad, India, 2007 (talk).

PDF.

[LSTM15]

A. Graves, J. Schmidhuber.

Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks.

Advances in Neural Information Processing Systems 22 (NIPS 2009), p 545-552, Vancouver, MIT Press, 2009.

PDF.

[LSTM16]

M. Stollenga, W. Byeon, M. Liwicki, J. Schmidhuber. Parallel Multi-Dimensional LSTM, With Application to Fast Biomedical Volumetric Image Segmentation. Advances in Neural Information Processing Systems (NIPS), 2015.

Preprint: arxiv:1506.07452.

[LSTM17]

J. A. Perez-Ortiz, F. A. Gers, D. Eck, J. Schmidhuber.

Kalman filters improve LSTM network performance in problems unsolvable by traditional recurrent nets.

Neural Networks 16(2):241-250, 2003.

PDF.

[NAS] B. Zoph, Q. V. Le. Neural Architecture Search with Reinforcement Learning.

Preprint arXiv:1611.01578 (PDF), 2017.

[S2S]

I. Sutskever, O. Vinyals, Q. V. Le. Sequence to sequence learning with neural networks. In: Advances in Neural Information Processing Systems (NIPS), 2014, 3104-3112.

[CTC] A. Graves, S. Fernandez, F. Gomez, J. Schmidhuber. Connectionist Temporal Classification: Labelling Unsegmented Sequence Data with Recurrent Neural Networks. ICML 06, Pittsburgh, 2006.

PDF.

[GSR15] Dramatic improvement of Google's speech recognition through LSTM: Alphr Technology, Jul 2015, or 9to5google, Jul 2015.

[META]

J. Schmidhuber (AI Blog, 2020). 1/3 century anniversary of the first publication on metalearning machines that learn to learn (1987).

For its cover I drew a robot that bootstraps itself.

1992-: gradient descent-based neural metalearning. 1994-: Meta-Reinforcement Learning with self-modifying policies. 1997: Meta-RL plus artificial curiosity and intrinsic motivation.

2002-: asymptotically optimal metalearning for curriculum learning. 2003-: mathematically optimal Gödel Machine. 2020: new stuff!

[META1]

J. Schmidhuber.

Evolutionary principles in self-referential learning, or on learning how to learn: The meta-meta-... hook. Diploma thesis, Institut für Informatik, Technische Universität München, 1987.

Searchable PDF scan (created by OCRmypdf, which uses LSTM).

HTML.

For example, Genetic Programming (GP) is applied to itself, to recursively evolve better GP methods through Meta-Evolution. More.

[META10]

T. Schaul and J. Schmidhuber. Metalearning. Scholarpedia, 5(6):4650, 2010.

[META17]

R. Miikkulainen, Q. Le, K. Stanley, C. Fernando. NIPS 2017 Metalearning Symposium.

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021, updated 2025).

26 March 1991: Neural nets learn to program neural nets with fast weights—like Transformer variants. 2021: New stuff!

30-year anniversary of a now popular alternative[FWP0-1] to recurrent NNs. A slow feedforward NN learns by gradient descent to program the changes of the fast weights[FAST,FASTa] of another NN, separating memory and control like in traditional computers. Such Fast Weight Programmers[FWP0-6,FWPMETA1-8] can learn to memorize past data, e.g., by computing fast weight changes through additive outer products of self-invented activation patterns[FWP0-1] (now often called keys and values for self-attention[TR1-6]).

The similar Transformers[TR1-2] combine this with projections and softmax and are now widely used in natural language processing. For long input sequences, their efficiency was improved through Transformers with linearized self-attention,[TR5-6] which are formally equivalent to Schmidhuber's 1991 outer product-based Fast Weight Programmers (apart from normalization), now called unnormalized linear Transformers.[ULTRA] In 1993, he introduced the attention terminology[FWP2] now used in this context,[ATT] and extended the approach to RNNs that program themselves.

See tweet of 2022.
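The outer-product mechanism described in this entry can be illustrated with a minimal Python sketch. It assumes keys, values, and queries are simply given, whereas in a Fast Weight Programmer they would be produced by the trained slow network; it also omits the projections, feature maps, and normalization used in practical linear Transformers. The helper names are hypothetical. Each step writes a value/key pair into a fast weight matrix W by an additive outer product (W += v k^T) and reads W with a query (y = W q); the second function computes causal attention without softmax or normalization, which gives the same outputs.

```python
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def fast_weight_outputs(keys: List[Vector], values: List[Vector],
                        queries: List[Vector]) -> List[Vector]:
    """Fast weight memory: at each step W += v k^T, then output y = W q."""
    W = [[0.0] * len(keys[0]) for _ in range(len(values[0]))]
    outputs = []
    for k, v, q in zip(keys, values, queries):
        for r, v_r in enumerate(v):
            for c, k_c in enumerate(k):
                W[r][c] += v_r * k_c  # additive outer-product write
        outputs.append([dot(row, q) for row in W])  # read-out with the query
    return outputs


def linear_attention_outputs(keys: List[Vector], values: List[Vector],
                             queries: List[Vector]) -> List[Vector]:
    """Causal attention without softmax or normalization:
    y_t = sum over i <= t of v_i * (k_i . q_t)."""
    outputs = []
    for t, q in enumerate(queries):
        y = [0.0] * len(values[0])
        for i in range(t + 1):
            score = dot(keys[i], q)
            y = [y_j + score * v_j for y_j, v_j in zip(y, values[i])]
        outputs.append(y)
    return outputs


# Hypothetical toy sequence of three steps (2-dim keys/queries, 3-dim values).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[2.0, 0.0, 1.0], [0.0, 3.0, -1.0], [1.0, 1.0, 1.0]]
queries = [[1.0, 0.0], [0.5, 0.5], [0.0, 2.0]]

print(fast_weight_outputs(keys, values, queries))
print(linear_attention_outputs(keys, values, queries))
# Both print [[2.0, 0.0, 1.0], [1.0, 1.5, 0.0], [2.0, 8.0, 0.0]]
```

The fast weight version stores the whole past in a fixed-size matrix, so each step costs the same regardless of sequence length, while the attention form revisits every earlier key; this is the efficiency argument behind linearized self-attention for long inputs.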

[FWP0]

J. Schmidhuber.

Learning to control fast-weight memories: An alternative to recurrent nets.

Technical Report FKI-147-91, Institut für Informatik, Technische Universität München, 26 March 1991.

PDF.

First paper on fast weight programmers that separate storage and control: a slow net learns by gradient descent to compute weight changes of a fast net. The outer product-based version (Eq. 5) is now known as an unnormalized linear Transformer or "Transformer with linearized self-attention."[FWP]

[FWP1] J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Neural Computation, 4(1):131-139, 1992. Based on [FWP0].

PDF.

HTML.

Pictures (German).

See tweet of 2022 for 30-year anniversary.

[FWP2] J. Schmidhuber. Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets. In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 460-463. Springer, 1993.

PDF.

First recurrent NN-based fast weight programmer using outer products (a recurrent extension of the 1991 unnormalized linear Transformer), introducing the terminology of learning "internal spotlights of attention."

[FWP3] I. Schlag, J. Schmidhuber. Gated Fast Weights for On-The-Fly Neural Program Generation. Workshop on Meta-Learning, @N(eur)IPS 2017, Long Beach, CA, USA.

[FWP3a] I. Schlag, J. Schmidhuber. Learning to Reason with Third Order Tensor Products. Advances in Neural Information Processing Systems (N(eur)IPS), Montreal, 2018.

Preprint: arXiv:1811.12143. PDF.

[FWP5]

F. J. Gomez and J. Schmidhuber.

Evolving modular fast-weight networks for control.

In W. Duch et al. (Eds.): Proc. ICANN'05, LNCS 3697, pp. 383-389, Springer-Verlag Berlin Heidelberg, 2005.

PDF.

HTML overview.

Reinforcement-learning fast weight programmer.

[FWP6] I. Schlag, K. Irie, J. Schmidhuber.

Linear Transformers Are Secretly Fast Weight Programmers. ICML 2021. Preprint: arXiv:2102.11174.

[FWP7] K. Irie, I. Schlag, R. Csordas, J. Schmidhuber.

Going Beyond Linear Transformers with Recurrent Fast Weight Programmers.

Preprint: arXiv:2106.06295 (June 2021).

[FWPMETA1] J. Schmidhuber. Steps towards 'self-referential' learning. Technical Report CU-CS-627-92, Dept. of Comp. Sci., University of Colorado at Boulder, November 1992.

PDF.

[FWPMETA2] J. Schmidhuber. A self-referential weight matrix.

In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 446-451. Springer, 1993.

PDF.

[FWPMETA3] J. Schmidhuber.

An introspective network that can learn to run its own weight change algorithm. In Proc. of the Intl. Conf. on Artificial Neural Networks, Brighton, pages 191-195. IEE, 1993.

[FWPMETA4]

J. Schmidhuber.

A neural network that embeds its own meta-levels.

In Proc. of the International Conference on Neural Networks '93, San Francisco. IEEE, 1993.

[FWPMETA5]

J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

A recurrent neural net with a self-referential, self-reading, self-modifying weight matrix can be found here.

[FWPMETA6]

L. Kirsch and J. Schmidhuber. Meta Learning Backpropagation & Improving It. Advances in Neural Information Processing Systems (NeurIPS), 2021. Preprint arXiv:2012.14905 [cs.LG], 2020.

[FWPMETA7]

I. Schlag, T. Munkhdalai, J. Schmidhuber.

Learning Associative Inference Using Fast Weight Memory.

Report arXiv:2011.07831 [cs.AI], 2020.

[FWPMETA8]

K. Irie, I. Schlag, R. Csordas, J. Schmidhuber.

A Modern Self-Referential Weight Matrix That Learns to Modify Itself.

International Conference on Machine Learning (ICML), 2022.

Preprint: arXiv:2202.05780.

[FWPMETA9]

L. Kirsch and J. Schmidhuber.

Self-Referential Meta Learning.

First Conference on Automated Machine Learning (Late-Breaking Workshop), 2022.

[DNC]

A. Graves, G. Wayne, M. Reynolds, T. Harley, I. Danihelka, A. Grabska-Barwinska, S. G. Colmenarejo, E. Grefenstette, T. Ramalho, J. Agapiou, A. P. Badia, K. M. Hermann, Y. Zwols, G. Ostrovski, A. Cain, H. King, C. Summerfield, P. Blunsom, K. Kavukcuoglu, D. Hassabis.

Hybrid computing using a neural network with dynamic external memory.

Nature, 538:7626, p 471, 2016.

This work of DeepMind did not cite the original work of the early 1990s on neural networks learning to control dynamic external memories.[PDA1-2][FWP0-1]

[PDA1]

G.Z. Sun, H.H. Chen, C.L. Giles, Y.C. Lee, D. Chen. Neural Networks with External Memory Stack that Learn Context-Free Grammars from Examples. Proceedings of the 1990 Conference on Information Science and Systems, Vol.II, pp. 649-653, Princeton University, Princeton, NJ, 1990.

[PDA2]

M. Mozer, S. Das. A connectionist symbol manipulator that discovers the structure of context-free languages. Proc. NIPS 1993.

[WU] Y. Wu et al. Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation.

Preprint arXiv:1609.08144 (PDF), 2016.

[GT16] The dramatically improved Google Translate of 2016 is based on LSTM; see, e.g., WIRED, Sep 2016, or siliconANGLE, Sep 2016.

[FB17]

By 2017, Facebook used LSTM to handle over 4 billion automatic translations per day (The Verge, August 4, 2017); see also the Facebook blog post by J.M. Pino, A. Sidorov, N.F. Ayan (August 3, 2017).

[LSTM-RL]

B. Bakker, F. Linaker, J. Schmidhuber.

Reinforcement Learning in Partially Observable Mobile Robot Domains Using Unsupervised Event Extraction.

In Proceedings of the 2002 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2002), Lausanne, 2002.

PDF.

[LSTMPG]

J. Schmidhuber (AI Blog, Dec 2020). 10-year anniversary of our journal paper on deep reinforcement learning with policy gradients for LSTM (2007-2010). Recent famous applications: DeepMind's Starcraft player (2019) and OpenAI's dextrous robot hand & Dota player (2018)—Bill Gates called this a huge milestone in advancing AI.

[RPG07]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber. Solving Deep Memory POMDPs with Recurrent Policy Gradients.

Intl. Conf. on Artificial Neural Networks ICANN'07, 2007.

PDF.

[RPG]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber (2010). Recurrent policy gradients. Logic Journal of the IGPL, 18(5), 620-634.

[HW1] R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks.

Preprints arXiv:1505.00387 (May 2015) and arXiv:1507.06228 (July 2015). Also at NIPS 2015. The first working very deep feedforward nets with over 100 layers (previous NNs had at most a few tens of layers). Let g, t, h denote non-linear differentiable functions. Each non-input layer of a highway net computes g(x)x + t(x)h(x), where x is the data from the previous layer. (This is like LSTM with forget gates[LSTM2] for RNNs.) ResNets[HW2] are a version of this where the gates are always open: g(x)=t(x)=const=1.

Highway Nets perform roughly as well as ResNets[HW2] on ImageNet.[HW3] Variants of highway gates are also used for certain algorithmic tasks, where the simpler residual layers do not work as well.[NDR]

More.
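A minimal pure-Python sketch of the layer formula g(x)x + t(x)h(x) from this entry, including the ResNet special case g(x)=t(x)=1. The concrete choices are assumptions for illustration only: h is a tanh dense layer, t is a sigmoid gate, and g(x)=1-t(x) couples the two gates (a common highway variant, not the only one); the helper names and the 2-unit weights are hypothetical and untrained.

```python
import math
from typing import Callable, List

Vector = List[float]
Matrix = List[List[float]]
Gate = Callable[[Vector], Vector]


def dense(W: Matrix, b: Vector, act: Callable[[float], float]) -> Gate:
    """Return the map x -> act(W x + b), with act applied elementwise."""
    def f(x: Vector) -> Vector:
        return [act(sum(w * x_j for w, x_j in zip(row, x)) + b_i)
                for row, b_i in zip(W, b)]
    return f


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def highway_layer(x: Vector, g: Gate, t: Gate, h: Gate) -> Vector:
    """y = g(x) * x + t(x) * h(x), with all products taken elementwise."""
    return [g_i * x_i + t_i * h_i
            for g_i, x_i, t_i, h_i in zip(g(x), x, t(x), h(x))]


# Hypothetical, untrained 2-unit weights chosen only for illustration.
eye = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
h = dense(eye, [0.0, 0.0], math.tanh)      # transform path h(x)
t = dense(zeros, [-50.0, -50.0], sigmoid)  # transform gate t(x), biased shut


def g(x: Vector) -> Vector:
    """Carry gate coupled to t (an assumption): g(x) = 1 - t(x)."""
    return [1.0 - t_i for t_i in t(x)]


def ones(x: Vector) -> Vector:
    """A gate that is always open, as in residual nets."""
    return [1.0] * len(x)


x = [1.0, -2.0]
print(highway_layer(x, g, t, h))        # nearly x: the layer carries its input
print(highway_layer(x, ones, ones, h))  # residual case: x + tanh(x)
```

With the transform gate nearly closed, the layer passes its input through almost unchanged, which is what lets very deep stacks of such layers still propagate signals and gradients; fixing both gates at 1 removes that choice and leaves the residual form x + h(x).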

[HW1a]

R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks. Presentation at the Deep Learning Workshop, ICML'15, July 10-11, 2015.

Link.

[HW2] He, K., Zhang, X., Ren, S., Sun, J. Deep residual learning for image recognition. Preprint arXiv:1512.03385 (Dec 2015). Residual nets are a version of Highway Nets[HW1] where the gates are always open: g(x)=1 (a typical highway net initialization) and t(x)=1.

More.

[HW3]

K. Greff, R. K. Srivastava, J. Schmidhuber. Highway and Residual Networks learn Unrolled Iterative Estimation. Preprint arxiv:1612.07771 (2016). Also at ICLR 2017.

[JOU17] Jouppi et al. (2017). In-Datacenter Performance Analysis of a Tensor Processing Unit.

Preprint arXiv:1704.04760

[CNN1] K. Fukushima: Neural network model for a mechanism of pattern recognition unaffected by shift in position—Neocognitron.

Trans. IECE, vol. J62-A, no. 10, pp. 658-665, 1979.

The first deep convolutional neural network architecture, with alternating convolutional layers and downsampling layers. In Japanese. English version: [CNN1+]. More in Scholarpedia.

[CNN1+]

K. Fukushima: Neocognitron: a self-organizing neural network model for a mechanism of pattern recognition unaffected by shift in position.

Biological Cybernetics, vol. 36, no. 4, pp. 193-202 (April 1980).

Link.

[CNN1a] A. Waibel. Phoneme Recognition Using Time-Delay Neural Networks. Meeting of IEICE, Tokyo, Japan, 1987. First application of backpropagation[BP1][BP2] and weight-sharing to a 1-dimensional convolutional architecture.

[CNN1a+]

W. Zhang, J. Tanida, K. Itoh, Y. Ichioka. Shift-invariant pattern recognition neural network and its optical architecture. Proc. Annual Conference of the Japan Society of Applied Physics, 1988.

First "modern" backpropagation-trained 2-dimensional CNN.

[CNN1b] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano and K. J. Lang. Phoneme recognition using time-delay neural networks. IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 37, no. 3, pp. 328-339, March 1989. Based on [CNN1a].

[CNN1c] Bower Award Ceremony 2021:

Jürgen Schmidhuber lauds Kunihiko Fukushima. YouTube video, 2021.

[CNN2] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, L. D. Jackel: Backpropagation Applied to Handwritten Zip Code Recognition, Neural Computation, 1(4):541-551, 1989.

PDF.

[CNN3a]

K. Yamaguchi, K. Sakamoto, A. Kenji, T. Akabane, Y. Fujimoto. A Neural Network for Speaker-Independent Isolated Word Recognition. First International Conference on Spoken Language Processing (ICSLP 90), Kobe, Japan, Nov 1990.

A 1-dimensional NN with convolutions using Max-Pooling instead of Fukushima's Spatial Averaging.[CNN1]

[CNN3] Weng, J., Ahuja, N., and Huang, T. S. (1993). Learning recognition and segmentation of 3-D objects from 2-D images. Proc. 4th Intl. Conf. Computer Vision, Berlin, Germany, pp. 121-128. A 2-dimensional CNN whose downsampling layers use Max-Pooling (which has become very popular) instead of Fukushima's Spatial Averaging.[CNN1]
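The difference between the two downsampling schemes mentioned in [CNN3a] and [CNN3] can be sketched in a few lines of Python for a 1-dimensional feature map. Here Fukushima's spatial averaging is approximated by a plain mean over non-overlapping windows; this is an assumption for illustration, not a model of the Neocognitron's actual downsampling cells, and the function name `pool1d` is hypothetical.

```python
from typing import List


def pool1d(xs: List[float], size: int, mode: str) -> List[float]:
    """Non-overlapping 1-D downsampling: each window of `size` inputs
    becomes one output, taken as the maximum or the mean of the window."""
    if mode not in ("max", "avg"):
        raise ValueError("mode must be 'max' or 'avg'")
    out = []
    for start in range(0, len(xs) - size + 1, size):
        window = xs[start:start + size]
        out.append(max(window) if mode == "max" else sum(window) / size)
    return out


feature_map = [0.0, 4.0, 1.0, 1.0, 0.0, 0.0, 2.0, 6.0]
print(pool1d(feature_map, 2, "max"))  # [4.0, 1.0, 0.0, 6.0]
print(pool1d(feature_map, 2, "avg"))  # [2.0, 1.0, 0.0, 4.0]
```

Max-pooling keeps only the strongest response in each window, so its output does not change when a peak moves within its window, while averaging dilutes a strong response with its weaker neighbours.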

[CNN4] M. A. Ranzato, Y. LeCun: A Sparse and Locally Shift Invariant Feature Extractor Applied to Document Images. Proc. ICDAR, 2007

[CNN5a]

S. Behnke. Learning iterative image reconstruction in the neural abstraction pyramid. International Journal of Computational Intelligence and Applications, 1(4):427-438, 1999.

[CNN5b]

S. Behnke. Hierarchical Neural Networks for Image Interpretation, volume LNCS 2766 of Lecture Notes in Computer Science. Springer, 2003.

[CNN5c]

D. Scherer, A. Mueller, S. Behnke. Evaluation of pooling operations in convolutional architectures for object recognition. In Proc. International Conference on Artificial Neural Networks (ICANN), pages 92-101, 2010.

[GPUNN]

Oh, K.-S. and Jung, K. (2004). GPU implementation of neural networks. Pattern Recognition, 37(6):1311-1314. Speeding up traditional NNs on GPU by a factor of 20.

[GPUCNN]

K. Chellapilla, S. Puri, P. Simard. High performance convolutional neural networks for document processing. International Workshop on Frontiers in Handwriting Recognition, 2006. Speeding up shallow CNNs on GPU by a factor of 4.

[DAN]

J. Schmidhuber (AI Blog, 2021).

10-year anniversary. In 2011, DanNet triggered the deep convolutional neural network (CNN) revolution. Named after my outstanding postdoc Dan Ciresan, it was the first deep and fast CNN to win international computer vision contests, and had a temporary monopoly on winning them, driven by a very fast implementation based on graphics processing units (GPUs).

1st superhuman result in 2011.[DAN1] Now everybody is using this approach.

[DAN1] J. Schmidhuber (AI Blog, 2011; updated 2021 for 10th birthday of DanNet): First superhuman visual pattern recognition. At the IJCNN 2011 computer vision competition in Silicon Valley, our artificial neural network called DanNet performed twice as well as humans, three times as well as the closest artificial competitor, and six times as well as the best non-neural method.

[Drop1] S. J. Hanson (1990). A Stochastic Version of the Delta Rule. Physica D, 42, 265-272. What's now called "dropout" is a variation of the stochastic delta rule; compare preprint arXiv:1808.03578, 2018.

[Drop2] N. Frazier-Logue, S. J. Hanson (2020). The Stochastic Delta Rule: Faster and More Accurate Deep Learning Through Adaptive Weight Noise. Neural Computation 32(5):1018-1032.

[Drop3] J. Hertz, A. Krogh, R. Palmer (1991). Introduction to the Theory of Neural Computation. Redwood City, California: Addison-Wesley Pub. Co., pp. 45-46.

[Drop4] N. Frazier-Logue, S. J. Hanson (2018). Dropout is a special case of the stochastic delta rule: faster and more accurate deep learning. Preprint arXiv:1808.03578, 2018.

[GPUCNN1] D. C. Ciresan, U. Meier, J. Masci, L. M. Gambardella, J. Schmidhuber. Flexible, High Performance Convolutional Neural Networks for Image Classification. International Joint Conference on Artificial Intelligence (IJCAI-2011, Barcelona), 2011. PDF. ArXiv preprint. Speeding up deep CNNs on GPU by a factor of 60. Used to win four important computer vision competitions in 2011-2012 before others won any with similar approaches.

[GPUCNN2] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber. A Committee of Neural Networks for Traffic Sign Classification. International Joint Conference on Neural Networks (IJCNN-2011, San Francisco), 2011. PDF. HTML overview. First superhuman performance in a computer vision contest, with half the error rate of humans, and one third the error rate of the closest competitor.[DAN1] This led to massive interest from industry.

[GPUCNN3] D. C. Ciresan, U. Meier, J. Schmidhuber. Multi-column Deep Neural Networks for Image Classification. Proc. IEEE Conf. on Computer Vision and Pattern Recognition CVPR 2012, pp. 3642-3649, July 2012. PDF. Longer TR of Feb 2012: arXiv:1202.2745v1 [cs.CV]. More.

[GPUCNN4] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NIPS 25, MIT Press, Dec 2012. PDF. This paper describes AlexNet, which is similar to the earlier DanNet,[DAN,DAN1][R6] the first pure deep CNN to win computer vision contests in 2011[GPUCNN2-3,5] (AlexNet and VGG Net[GPUCNN9] followed in 2012-2014). [GPUCNN4] emphasizes the benefits of Fukushima's ReLUs (1969)[RELU1] and dropout (a variant of Hanson's 1990 stochastic delta rule)[Drop1-4] but cites neither the original work[RELU1][Drop1] nor the basic CNN architecture (Fukushima, 1979).[CNN1]

[GPUCNN5] J. Schmidhuber (AI Blog, 2017; updated 2021 for 10th birthday of DanNet): History of computer vision contests won by deep CNNs since 2011. DanNet won 4 of them in a row before the similar AlexNet/VGG Net and the ResNet (a Highway Net with open gates) joined the party. Today, deep CNNs are standard in computer vision.

[GPUCNN6] J. Schmidhuber, D. Ciresan, U. Meier, J. Masci, A. Graves. On Fast Deep Nets for AGI Vision. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011. PDF.

[GPUCNN7] D. C. Ciresan, A. Giusti, L. M. Gambardella, J. Schmidhuber. Mitosis Detection in Breast Cancer Histology Images using Deep Neural Networks. MICCAI 2013. PDF.

[GPUCNN8] J. Schmidhuber (AI Blog, 2017; updated 2021 for 10th birthday of DanNet). First deep learner to win a contest on object detection in large images, and first deep learner to win a medical imaging contest (2012). Link. How the Swiss AI Lab IDSIA used GPU-based CNNs to win the ICPR 2012 Contest on Mitosis Detection and the MICCAI 2013 Grand Challenge.

[LEC] J. Schmidhuber (AI Blog, 2022). LeCun's 2022 paper on autonomous machine intelligence rehashes but does not cite essential work of 1990-2015. Years ago, Schmidhuber's team published most of what Y. LeCun calls his "main original contributions": neural nets that learn multiple time scales and levels of abstraction, generate subgoals, use intrinsic motivation to improve world models, and plan (1990); controllers that learn informative predictable representations (1997), etc. This was also discussed on Hacker News, reddit, and in the media. See tweet1. LeCun also listed the "5 best ideas 2012-2022" without mentioning that most of them are from Schmidhuber's lab, and older. See tweet2.

[SCAN] J. Masci, A. Giusti, D. Ciresan, G. Fricout, J. Schmidhuber. A Fast Learning Algorithm for Image Segmentation with Max-Pooling Convolutional Networks. ICIP 2013. Preprint arXiv:1302.1690.

[ST] J. Masci, U. Meier, D. Ciresan, G. Fricout, J. Schmidhuber. Steel Defect Classification with Max-Pooling Convolutional Neural Networks. Proc. IJCNN 2012, June 2012. PDF.

[MLP1] D. C. Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint. Showed that plain backprop for deep standard NNs is sufficient to break benchmark records, without any unsupervised pre-training.

[MLP2] J. Schmidhuber (AI Blog, Sep 2020). 10-year anniversary of supervised deep learning breakthrough (2010). No unsupervised pre-training. By 2010, when compute was 100 times more expensive than today, both the feedforward NNs[MLP1] and the earlier recurrent NNs of Schmidhuber's team were able to beat all competing algorithms on important problems of that time.

[MLP3] J. Schmidhuber (AI Blog, 2025). 2010: Breakthrough of end-to-end deep learning (no layer-by-layer training, no unsupervised pre-training). The rest is history. By 2010, when compute was 1000 times more expensive than in 2025, both our feedforward NNs[MLP1] and our earlier recurrent NNs were able to beat all competing algorithms on important problems of that time. This deep learning revolution quickly spread from Europe to North America and Asia. The rest is history.

[MOST] J. Schmidhuber (AI Blog, 2021, updated 2025). The most cited neural networks all build on work done in my labs: 1. Long Short-Term Memory (LSTM), the most cited AI of the 20th century. 2. ResNet (open-gated Highway Net), the most cited AI of the 21st century. 3. AlexNet & VGG Net (the similar but earlier DanNet of 2011 won 4 image recognition challenges before them). 4. GAN (an instance of Adversarial Artificial Curiosity of 1990). 5. Transformer variants: see the 1991 unnormalised linear Transformer (ULTRA). Foundations of Generative AI were published in 1991: the principles of GANs (now used for deepfakes), Transformers (the T in ChatGPT), Pre-training for deep NNs (the P in ChatGPT), NN distillation, and the famous DeepSeek; see the tweet.

[GAN0] O. Niemitalo. A method for training artificial neural networks to generate missing data within a variable context. Blog post, Internet Archive, 2010.

[GAN1] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, Y. Bengio. Generative adversarial nets. NIPS 2014, pp. 2672-2680, Dec 2014. A description of GANs that does not cite Schmidhuber's original GAN principle of 1990[AC][AC90,AC90b][AC20][R2][T22] (also containing wrong claims about Schmidhuber's adversarial NNs for Predictability Minimization[PM0-2][AC20][T22]).

[AC] J. Schmidhuber (AI Blog, 2021). 3 decades of artificial curiosity & creativity. Our artificial scientists not only answer given questions but also invent new questions. They achieve curiosity through: (1990) the principle of generative adversarial networks, (1991) neural nets that maximise learning progress, (1995) neural nets that maximise information gain (optimally since 2011), (1997) adversarial design of surprising computational experiments, (2006) maximizing compression progress like scientists/artists/comedians do, (2011) PowerPlay... Since 2012: applications to real robots.

[AC90] J. Schmidhuber. Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990. PDF. The first paper on planning with reinforcement learning recurrent neural networks (NNs) (more) and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game (more).

[AC90b] J. Schmidhuber. A possibility for implementing curiosity and boredom in model-building neural controllers. In J. A. Meyer and S. W. Wilson, editors, Proc. of the International Conference on Simulation of Adaptive Behavior: From Animals to Animats, pages 222-227. MIT Press/Bradford Books, 1991. PDF. More.

[AC91] J. Schmidhuber. Adaptive confidence and adaptive curiosity. Technical Report FKI-149-91, Inst. f. Informatik, Tech. Univ. Munich, April 1991. PDF.

[AC91b] J. Schmidhuber. Curious model-building control systems. In Proc. International Joint Conference on Neural Networks, Singapore, volume 2, pages 1458-1463. IEEE, 1991. PDF.

[AC06] J. Schmidhuber. Developmental Robotics, Optimal Artificial Curiosity, Creativity, Music, and the Fine Arts. Connection Science, 18(2): 173-187, 2006. PDF.

[AC09] J. Schmidhuber. Art & science as by-products of the search for novel patterns, or data compressible in unknown yet learnable ways. In M. Botta (ed.), Et al. Edizioni, 2009, pp. 98-112. PDF. (More on artificial scientists and artists.)

[AC10] J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010. IEEE link. PDF.

[AC18] Y. Burda, H. Edwards, D. Pathak, A. Storkey, T. Darrell, and A. A. Efros. Large-scale study of curiosity-driven learning. Preprint arXiv:1808.04355, 2018.

[AC20] J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991). Neural Networks, Volume 127, pp. 58-66, 2020. Preprint arXiv:1906.04493.

[PM1] J. Schmidhuber. Learning factorial codes by predictability minimization. Neural Computation, 4(6):863-879, 1992. Based on TR CU-CS-565-91, CU, 1991. PDF. More.