Abstract.

In 1991, I published my first very deep learning machine: the neural sequence chunker aka neural history compressor [UN0-UN2]. It uses unsupervised/self-supervised Pre-training (see the P in ChatGPT) and predictive coding in a deep hierarchy of recurrent neural networks (RNNs) to find compact internal representations of long sequences of data, across multiple time scales and levels of abstraction. Each RNN tries to solve the pretext task of predicting its next input, sending only unexpected inputs to the next RNN above. This greatly facilitates downstream supervised deep learning such as sequence classification. By 1993, the approach solved problems of depth 1000 (requiring 1000 subsequent computational stages/layers; the more such stages, the deeper the learning).

A variant "collapses" or "distills" the hierarchy into a single deep net. It uses a so-called conscious chunker RNN which attends to unexpected events that surprise a lower-level so-called subconscious automatiser RNN. The chunker learns to understand the surprising events by predicting them. The automatiser uses my neural knowledge distillation procedure of 1991 [UN0-UN2][DLP] to compress and absorb the formerly conscious insights and behaviours of the chunker, thus making them subconscious.

The systems of 1991 allowed for much deeper learning than previous methods.

Today's most powerful neural networks (NNs) tend to be very deep, that is, they have many layers of neurons or many subsequent computational stages. In the 1980s, however, gradient-based training did not work well for deep NNs, only for shallow ones [DL1-2].

This deep learning problem was perhaps most obvious for recurrent NNs (RNNs) studied since the 1920s [L20][I25][K41][MC43][W45][K56][AMH1-2]. Like the human brain, but unlike the more limited feedforward NNs (FNNs), RNNs have feedback connections. This makes RNNs powerful, general purpose, parallel-sequential computers that can process input sequences of arbitrary length (think of speech or videos). RNNs can in principle implement any program that can run on your laptop. Proving this is simple: since a few neurons can implement a NAND gate, a big network of neurons can implement a network of NAND gates. This is sufficient to emulate the microchip powering your laptop. Q.E.D.
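
The first step of this argument can be illustrated with a minimal Python sketch: a single threshold neuron that behaves like a NAND gate, and a tiny network of such neurons that computes XOR. The weights (-1, -1), the bias 1.5, and the function names are illustrative assumptions chosen for this sketch, not taken from the cited papers.

```python
def nand_neuron(a: int, b: int) -> int:
    """Threshold neuron that outputs 0 only when both inputs are 1."""
    weights = (-1.0, -1.0)  # illustrative choice, one of many that work
    bias = 1.5
    activation = weights[0] * a + weights[1] * b + bias
    return 1 if activation > 0 else 0


def xor_from_nands(a: int, b: int) -> int:
    """XOR built only from NAND neurons (standard four-gate construction)."""
    n1 = nand_neuron(a, b)
    n2 = nand_neuron(a, n1)
    n3 = nand_neuron(b, n1)
    return nand_neuron(n2, n3)


if __name__ == "__main__":
    # Print the truth tables of the neuron and of the small network.
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, nand_neuron(a, b), xor_from_nands(a, b))
```

Since NAND is functionally complete, larger circuits can be composed in the same way; feedback connections between such units are what additionally provide memory.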

If we want to build an artificial general intelligence (AGI), then its underlying computational substrate must be something like an RNN; standard FNNs are fundamentally insufficient.

In particular, unlike FNNs, RNNs can in principle deal with problems of arbitrary depth, that is, with data sequences of arbitrary length whose processing may require an a priori unknown number of subsequent computational steps [DL1]. Early RNNs of the 1980s, however, failed to learn very deep problems in practice (compare [DL1-2][MOZ]). I wanted to overcome this drawback, to achieve RNN-based general purpose deep learning.

In 1991, my first idea to solve the deep learning problem mentioned above was to facilitate supervised learning in deep RNNs through unsupervised pre-training of a hierarchical stack of RNNs. This led to the first very deep learner called the Neural Sequence Chunker [UN0] or Neural History Compressor [UN1][DLP][NOB]. In this architecture, each higher level RNN tries to reduce the description length (or negative log probability) of the data representations in the levels below. This is done using the Predictive Coding trick: while trying to predict the next input in the incoming data stream using the previous inputs, only update neural activations in the case of unpredictable data so that only what is not yet known is stored. In other words, given a training set of observation sequences, the chunker learns to compress typical data streams such that the deep learning problem becomes less severe, and can be solved by gradient descent through standard backpropagation, an old technique from 1970 [BP1-4][R7].
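
To make the link between description length and negative log probability concrete: under an ideal code, a symbol that the predictor expected with probability p costs -log2(p) bits, so well-predicted inputs are cheap and surprises are expensive. The following sketch computes this for two made-up lists of per-step probabilities; the numbers and the function name are assumptions for illustration only.

```python
import math


def description_length_bits(probs):
    """Total code length in bits, given the probability the predictor
    assigned to each symbol that actually occurred."""
    return sum(-math.log2(p) for p in probs)


if __name__ == "__main__":
    # Hypothetical untrained predictor: uniform over 32 symbols at every step.
    untrained = [1 / 32] * 6
    # Hypothetical pre-trained predictor: two surprises, then four good guesses.
    pretrained = [1 / 32, 1 / 32, 0.5, 0.5, 0.5, 0.5]
    print(description_length_bits(untrained))   # 30.0
    print(description_length_bits(pretrained))  # 14.0
```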

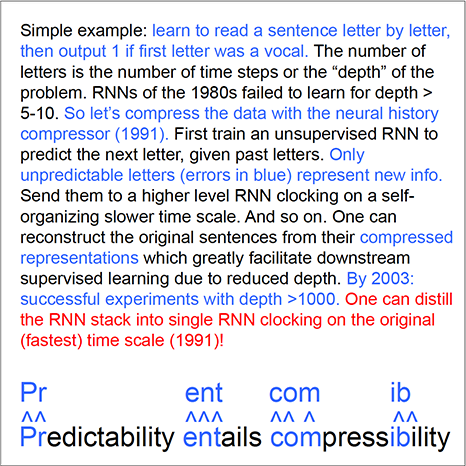

Let us consider an example. At the bottom of the text box, one can read the sentence "Predictability entails compressibility." That's what the lowest level RNN observes, one letter at a time. It is trained in unsupervised fashion to predict the next letter, given the previous letters. The first letter is a "P". It was not correctly predicted. So it is sent (as raw data) to the next level which sees only the unpredictable letters from the lower level. The second letter "r" is also unpredicted. But then, in this example, the lower RNN starts predicting well, because it was pre-trained (see the "P" in "ChatGPT") on a set of typical sentences. The higher level updates its activations only when there is an unexpected error on the lower level. That is, the higher level RNN is operating or "clocking" more slowly because it clocks only in response to unpredictable observations. What's predictable is compressible. Given the RNN stack and relative positional encodings of the time intervals between unexpected events [UN0-1], one can reconstruct the exact original sentence from its compressed representation. Finally, the reduced depth on the top level can greatly facilitate downstream learning, e.g., supervised sequence classification [UN0-2].
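
The mechanism in this example can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the 1991 system: a lookup table over the last three characters stands in for the pre-trained lowest-level RNN, only one level of the hierarchy is shown, and all names (`train_predictor`, `compress`, `reconstruct`, `ORDER`) are hypothetical. Each unexpected character is passed upward together with the time gap since the previous unexpected one, and the original text is rebuilt exactly from these events plus the predictor.

```python
from collections import Counter, defaultdict

ORDER = 3  # context length of the stand-in predictor (an assumption)


def train_predictor(corpus, order=ORDER):
    """'Pre-train' a lookup table: context -> most frequent next character."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        for t in range(len(sentence)):
            context = sentence[max(0, t - order):t]
            counts[context][sentence[t]] += 1
    return {ctx: c.most_common(1)[0][0] for ctx, c in counts.items()}


def compress(text, predictor, order=ORDER):
    """Keep only the unexpected characters, each with the number of
    time steps since the previous unexpected character."""
    events, last = [], -1
    for t, ch in enumerate(text):
        guess = predictor.get(text[max(0, t - order):t])
        if guess != ch:  # surprise: this is what the higher level sees
            events.append((t - last, ch))
            last = t
    return events, len(text)


def reconstruct(events, length, predictor, order=ORDER):
    """Rebuild the exact text from the surprises plus the predictor."""
    surprises, t = {}, -1
    for gap, ch in events:
        t += gap
        surprises[t] = ch
    out = []
    for t in range(length):
        if t in surprises:
            out.append(surprises[t])
        else:  # predictable step: replay the predictor's guess
            out.append(predictor["".join(out[max(0, t - order):t])])
    return "".join(out)


if __name__ == "__main__":
    corpus = ["Predictability entails compressibility.",
              "Compressibility entails predictability."]
    predictor = train_predictor(corpus)
    text = "Predictability entails compressibility."
    events, n = compress(text, predictor)
    assert reconstruct(events, n, predictor) == text
    print(len(events), "of", n, "characters were passed to the higher level")
```

The better the predictor, the fewer events reach the higher level, which therefore faces a much shorter sequence than the raw input.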

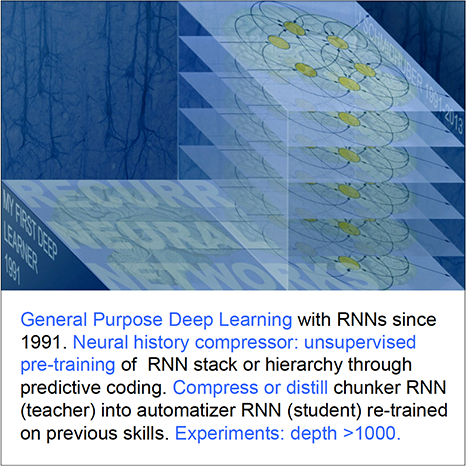

To my knowledge, the Neural Sequence Chunker [UN0] was also the first system made of RNNs operating on multiple (self-organizing) time scales [LEC][DLP][T22](Sec. XVII, item (B3)). Although computers back then were about a million times slower per dollar than today, by 1993, the neural history compressor was able to solve previously unsolvable very deep learning tasks of depth > 1000 [UN2], i.e., tasks requiring more than 1000 subsequent computational stages; the more such stages, the deeper the learning.

I also had a way of compressing or distilling all those RNNs down into a single deep RNN operating on the original, fastest time scale. This method is described in Section 4 of the 1991 reference [UN0] on a "conscious" chunker and a "subconscious" automatiser, which introduced a general principle for transferring the knowledge of one NN to another (compare Sec. 2 of [MIR]).

To understand this method, first suppose a teacher NN has learned to predict (conditional expectations of) data, given other data. Its knowledge can be compressed into a student NN, by training the student NN to imitate the behavior and internal representations of the teacher NN (while also re-training the student NN on previously learned skills such that it does not forget them). I called this collapsing or compressing the behavior of one net into another. Today, this is widely used, and also called distilling [DLP][HIN][T22][R4] or cloning the behavior of a teacher net into a student net.
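
The core of this teacher-student idea can be shown with a deliberately tiny Python sketch. It covers only imitation of the teacher's outputs; imitation of internal representations and the re-training on previously learned skills are left out. Scalar logistic models stand in for the two networks, and the teacher's weights, the learning rate, and all names are hypothetical choices made for this illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x):
    """Stand-in for an already trained teacher net (fixed, made-up weights)."""
    return sigmoid(3.0 * x - 1.0)


def distill(num_steps=5000, lr=0.5, seed=0):
    """Train a student sigmoid(w * x + b) to imitate the teacher's outputs
    on unlabeled inputs, using the teacher's predictions as soft targets."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(num_steps):
        x = rng.uniform(-2.0, 2.0)   # no ground-truth label is needed
        target = teacher(x)          # soft target from the teacher
        pred = sigmoid(w * x + b)
        err = pred - target          # cross-entropy gradient w.r.t. w * x + b
        w -= lr * err * x
        b -= lr * err
    return w, b


if __name__ == "__main__":
    w, b = distill()
    grid = [i / 10 for i in range(-20, 21)]
    gap = max(abs(sigmoid(w * x + b) - teacher(x)) for x in grid)
    print("student weights:", w, b, "max output gap:", gap)
```

Note that the student never sees the data the teacher was trained on; it only needs inputs and the teacher's responses to them.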

To summarize, one can compress or distill the RNN hierarchy down into the original RNN clocking on the fastest time scale. Then we get a single RNN which solves the entire deep, long time lag problem. To my knowledge, this was the first very deep learning compressed into a single NN, and the first distillation of the knowledge in a neural network.

In January 2025, the DeepSeek "Sputnik" [DS1] wiped out a trillion USD from the stock market. DeepSeek-R1 [DS1] uses elements of my 2015 reinforcement learning (RL) prompt engineer [PLAN4] and its 2018 refinement [PLAN5] which collapses the 2015 RL machine and its world model [PLAN4] into a single net through the neural net distillation procedure of 1991 [UN0-3][UN][DLP]: a distilled "chain of thought" system. See the popular tweet of 31 Jan 2025.

In 1993, we also published a Neural History Compressor without varying time scales where the time-varying "update strengths" of a higher level RNN depend on the magnitudes of the surprises in the level below [UN3].

Soon after the advent of the unsupervised pre-training-based very deep learner above, the fundamental deep learning problem (first analyzed in 1991 by my student Sepp Hochreiter [VAN1]; see Sec. 3 of [MIR] and item (B2) of Sec. XVII of [T22]) was also overcome through purely supervised Long Short-Term Memory or LSTM; see Sec. 4 of [MIR] (and Sec. A & B of [T22]). Subsequently, this new type of superior supervised learning made unsupervised pre-training [NOB] less important, and LSTM drove much of the supervised deep learning revolution [DL4][DEC].

Between 2006 and 2011, my lab also drove a very similar shift from unsupervised pre-training to pure supervised learning, this time for the simpler feedforward NNs (FNNs) [MLP1-3][R4] rather than recurrent NNs (RNNs). This led to revolutionary applications to image recognition [DAN] and cancer detection, among many other problems. See Sec. 19 of [MIR].

Of course, deep learning in feedforward NNs started much earlier, with Ivakhnenko & Lapa, who published the first general, working learning algorithms for deep multilayer perceptrons with arbitrarily many layers back in 1965 [DEEP1]. For example, Ivakhnenko's paper from 1971 [DEEP2] already described a deep learning net with 8 layers, trained by a highly cited method still popular in the new millennium [DL2][NOB]. But unlike the deep FNNs of Ivakhnenko and his successors of the 1970s and 80s, our deep RNNs had general purpose parallel-sequential computational architectures [UN0-3]. By the early 1990s, most NN research was still limited to rather shallow nets with fewer than 10 subsequent computational stages, while our methods already enabled over 1000 such stages.

Finally, let me emphasize that the above-mentioned supervised deep learning revolutions of the early 1990s (for recurrent NNs) [MIR] and of 2010 (for feedforward NNs) [MLP1-3] did not at all kill unsupervised learning and pre-training. For example, pre-trained language models based on Transformers (the T in ChatGPT) excel at the traditional LSTM domain of Natural Language Processing [TR1-6] (although there are still many language tasks that LSTM can rapidly learn to solve [LSTM13] while plain Transformers can't).

Remarkably, unnormalised linear Transformers [ULTRA] were also first published [FWP0-6] in our Annus Mirabilis of 1990-1991 [MIR][MOST], together with unsupervised pre-training (the P in ChatGPT) for deep learning [UN0-3][NOB] and NN distillation [UN1-3][DLP], now used by DeepSeek and others. And our unsupervised generative adversarial NNs since 1990 [AC90-AC20][PLAN] are still used to endow agents with artificial curiosity [MIR](Sec. 5 & Sec. 6); see also a version of our adversarial NNs [AC90b] called GANs [AC20][R2][PLAN][MOST][T22](Sec. XVII).

Unsupervised learning still has a bright future!

Acknowledgments

Thanks to several expert reviewers for useful comments. Since science is about self-correction, let me know at juergen@idsia.ch if you can spot any remaining error. The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites. This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

References

[AC]

J. Schmidhuber (AI Blog, 2021). 3 decades of artificial curiosity & creativity. Our artificial scientists not only answer given questions but also invent new questions. They achieve curiosity through: (1990) the principle of generative adversarial networks, (1991) neural nets that maximise learning progress, (1995) neural nets that maximise information gain (optimally since 2011), (1997) adversarial design of surprising computational experiments, (2006) maximizing compression progress like scientists/artists/comedians do, (2011) PowerPlay... Since 2012: applications to real robots.

[AC90]

J. Schmidhuber.

Making the world differentiable: On using fully recurrent

self-supervised neural networks for dynamic reinforcement learning and

planning in non-stationary environments.

Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990.

PDF.

The first paper on planning with reinforcement learning recurrent neural networks (NNs) (more) and on generative adversarial networks

where a generator NN is fighting a predictor NN in a minimax game

(more).

[AC90b]

J. Schmidhuber.

A possibility for implementing curiosity and boredom in

model-building neural controllers.

In J. A. Meyer and S. W. Wilson, editors, Proc. of the

International Conference on Simulation

of Adaptive Behavior: From Animals to

Animats, pages 222-227. MIT Press/Bradford Books, 1991.

PDF.

More.

[AC91]

J. Schmidhuber. Adaptive confidence and adaptive curiosity. Technical Report FKI-149-91, Inst. f. Informatik, Tech. Univ. Munich, April 1991.

PDF.

[AC91b]

J. Schmidhuber.

Curious model-building control systems.

Proc. International Joint Conference on Neural Networks,

Singapore, volume 2, pages 1458-1463. IEEE, 1991.

PDF.

[AC06]

J. Schmidhuber.

Developmental Robotics,

Optimal Artificial Curiosity, Creativity, Music, and the Fine Arts.

Connection Science, 18(2): 173-187, 2006.

PDF.

[AC09]

J. Schmidhuber. Art & science as by-products of the search for novel patterns, or data compressible in unknown yet learnable ways. In M. Botta (ed.), Et al. Edizioni, 2009, pp. 98-112.

PDF. (More on

artificial scientists and artists.)

[AC10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010.

IEEE link.

PDF.

With a brief summary of the generative adversarial neural networks of 1990[AC90,90b][AC20]

where a generator NN is fighting a predictor NN in a minimax game

(more).

[AC11]

Sun Yi, F. Gomez, J. Schmidhuber.

Planning to Be Surprised: Optimal Bayesian Exploration in Dynamic Environments.

In Proc. Fourth Conference on Artificial General Intelligence (AGI-11),

Google, Mountain View, California, 2011.

PDF.

[AC13]

J. Schmidhuber.

POWERPLAY: Training an Increasingly General Problem Solver by Continually Searching for the Simplest Still Unsolvable Problem.

Frontiers in Cognitive Science, 2013.

Preprint (2011):

arXiv:1112.5309 [cs.AI]

[AC20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991).

Neural Networks, Volume 127, p 58-66, 2020.

Preprint arXiv/1906.04493.

[AMH1]

S. I. Amari (1972).

Learning patterns and pattern sequences by self-organizing nets of threshold elements. IEEE Transactions, C 21, 1197-1206, 1972.

PDF.

First published learning RNN.

First publication of what was later sometimes called the Hopfield network[AMH2] or Amari-Hopfield Network.[AMH3]

[AMH2]

J. J. Hopfield (1982). Neural networks and physical systems with emergent

collective computational abilities. Proc. of the National Academy of Sciences,

vol. 79, pages 2554-2558, 1982.

The Hopfield network or Amari-Hopfield Network was published in 1972 by Amari.[AMH1]

[ATT] J. Schmidhuber (AI Blog, 2020). 30-year anniversary of end-to-end differentiable sequential neural attention. Plus goal-conditional reinforcement learning. We had both hard attention (1990) and soft attention (1991-93).[FWP][ULTRA] Today, both types are very popular.

[BPA]

H. J. Kelley. Gradient Theory of Optimal Flight Paths. ARS Journal, Vol. 30, No. 10, pp. 947-954, 1960.

Precursor of modern backpropagation.[BP1-4]

[BPB]

A. E. Bryson. A gradient method for optimizing multi-stage allocation processes. Proc. Harvard Univ. Symposium on digital computers and their applications, 1961.

[BPC]

S. E. Dreyfus. The numerical solution of variational problems. Journal of Mathematical Analysis and Applications, 5(1): 30-45, 1962.

[BP1] S. Linnainmaa. The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 1970.

See chapters 6-7 and FORTRAN code on pages 58-60.

PDF.

See also BIT 16, 146-160, 1976.

Link.

The first publication on "modern" backpropagation, also known as the reverse mode of automatic differentiation.

[BP2] P. J. Werbos. Applications of advances in nonlinear sensitivity analysis. In R. Drenick, F. Kozin, (eds): System Modeling and Optimization: Proc. IFIP,

Springer, 1982.

PDF.

First application of backpropagation[BP1] to NNs (concretizing thoughts in his 1974 thesis).

[BP4] J. Schmidhuber (AI Blog, 2014; updated 2020).

Who invented backpropagation?

More.[DL2]

[BP5]

A. Griewank (2012). Who invented the reverse mode of differentiation?

Documenta Mathematica, Extra Volume ISMP (2012): 389-400.

[CATCH]

J. Schmidhuber. Philosophers & Futurists, Catch Up! Response to The Singularity.

Journal of Consciousness Studies, Volume 19, Numbers 1-2, pp. 173-182(10), 2012.

PDF.

[CON16]

J. Carmichael (2016).

Artificial Intelligence Gained Consciousness in 1991.

Why A.I. pioneer Jürgen Schmidhuber is convinced the ultimate breakthrough already happened.

Inverse, 2016. Link.

[DEEP1]

Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation. First working Deep Learners with many layers, learning internal representations.

[DEEP1a]

Ivakhnenko, Alexey Grigorevich. The group method of data handling; a rival of the method of stochastic approximation. Soviet Automatic Control 13 (1968): 43-55.

[DEEP2]

Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[DAN]

J. Schmidhuber (AI Blog, 2021).

10-year anniversary. In 2011, DanNet triggered the deep convolutional neural network (CNN) revolution. Named after my outstanding postdoc Dan Ciresan, it was the first deep and fast CNN to win international computer vision contests, and had a temporary monopoly on winning them, driven by a very fast implementation based on graphics processing units (GPUs).

1st superhuman result in 2011.[DAN1]

Now everybody is using this approach.

[DAN1]

J. Schmidhuber (AI Blog, 2011; updated 2021 for 10th birthday of DanNet): First superhuman visual pattern recognition.

At the IJCNN 2011 computer vision competition in Silicon Valley,

our artificial neural network called DanNet performed twice better than humans, three times better than the closest artificial competitor, and six times better than the best non-neural method.

[DEC] J. Schmidhuber (AI Blog, 02/20/2020, revised 2021). The 2010s: Our Decade of Deep Learning / Outlook on the 2020s. The recent decade's most important developments and industrial applications based on our AI, with an outlook on the 2020s, also addressing privacy and data markets.

[DL1] J. Schmidhuber, 2015.

Deep Learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

[DL2] J. Schmidhuber, 2015.

Deep Learning.

Scholarpedia, 10(11):32832.

[DL4] J. Schmidhuber (AI Blog, 2017).

Our impact on the world's most valuable public companies: Apple, Google, Microsoft, Facebook, Amazon... By 2015-17, neural nets developed in my labs were on over 3 billion devices such as smartphones, and used many billions of times per day, consuming a significant fraction of the world's compute. Examples: greatly improved (CTC-based) speech recognition on all Android phones, greatly improved machine translation through Google Translate and Facebook (over 4 billion LSTM-based translations per day), Apple's Siri and Quicktype on all iPhones, the answers of Amazon's Alexa, etc. Google's 2019

on-device speech recognition

(on the phone, not the server)

is still based on LSTM.

[DLP]

J. Schmidhuber (AI Blog, 2023).

How 3 Turing awardees republished key methods and ideas whose creators they failed to credit.

Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023.

The piece is aimed at people who are not aware of the numerous AI priority disputes, but are willing to check the facts (see tweet).

[DS1]

DeepSeek-AI (2025).

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. Preprint arXiv:2501.12948. See the popular DeepSeek tweet of Jan 2025.

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021, updated 2023).

26 March 1991: Neural nets learn to program neural nets with fast weights—like Transformer variants. 2021: New stuff!

In 2022, ChatGPT took the world by storm, generating large volumes of text that are almost indistinguishable from what a human might write.[GPT3] ChatGPT and similar large language models (LLMs) are based on a family of artificial neural networks (NNs) called Transformers.[TR1-2] Already in 1991, when compute was a million times more expensive than today, Schmidhuber published the first Transformer variant, which is now called an unnormalised linear Transformer.[ULTRA][FWP0-1,6][TR5-6] That wasn't the name it got given at the time, but today the mathematical equivalence is obvious. In a sense, computational restrictions drove it to be even more efficient than later "quadratic" Transformer variants,[TR1-2] resulting in costs that scale linearly in input size, rather than quadratically. In the same year, Schmidhuber also introduced self-supervised pre-training for deep NNs, now used to train LLMs (the "P" in "GPT" stands for "pre-trained").[UN][UN0-3]

In 1993, he introduced

the attention terminology[FWP2] now used

in this context,[ATT] and

extended the approach to

recurrent NNs that program themselves.

See tweet of 2022.

[FWP0]

J. Schmidhuber.

Learning to control fast-weight memories: An alternative to recurrent nets.

Technical Report FKI-147-91, Institut für Informatik, Technische

Universität München, 26 March 1991.

PDF.

First paper on fast weight programmers that separate storage and control: a slow net learns by gradient descent to compute weight changes of a fast net. The outer product-based version (Eq. 5) is now known as the "unnormalized linear Transformer"[ULTRA] a.k.a. "Transformer with linearized self-attention."[FWP]

[FWP1] J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Neural Computation, 4(1):131-139, 1992. Based on [FWP0].

PDF.

HTML.

Pictures (German).

See tweet of 2022 for 30-year anniversary.

[FWP2] J. Schmidhuber. Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets. In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 460-463. Springer, 1993.

PDF.

First recurrent NN-based fast weight programmer using outer products, introducing the terminology of learning "internal spotlights of attention."

[FWP6] I. Schlag, K. Irie, J. Schmidhuber.

Linear Transformers Are Secretly Fast Weight Programmers. ICML 2021. Preprint: arXiv:2102.11174.

[HIN] J. Schmidhuber (AI Blog, 2020). Critique of Honda Prize for Dr. Hinton. Science must not allow corporate PR to distort the academic record.

[I25]

E. Ising (1925). Beitrag zur Theorie des Ferromagnetismus. Z. Phys., 31 (1): 253-258, 1925.

First non-learning recurrent NN architecture: the Lenz-Ising model.

[K41]

H. A. Kramers and G. H. Wannier (1941). Statistics of the Two-Dimensional Ferromagnet. Phys. Rev. 60, 252 and 263, 1941.

[K56]

S.C. Kleene. Representation of Events in Nerve Nets and Finite Automata. Automata Studies, Editors: C.E. Shannon and J. McCarthy, Princeton University Press, p. 3-42, Princeton, N.J., 1956.

[L20]

W. Lenz (1920). Beiträge zum Verständnis der magnetischen

Eigenschaften in festen Körpern. Physikalische Zeitschrift, 21:

613-615.

[LEC] J. Schmidhuber (AI Blog, 2022). LeCun's 2022 paper on autonomous machine intelligence rehashes but does not cite essential work of 1990-2015. Years ago we published most of what LeCun calls his "main original contributions:" neural nets that learn multiple time scales and levels of abstraction, generate subgoals, use intrinsic motivation to improve world models, and plan (1990); controllers that learn informative predictable representations (1997), etc. This was also discussed on Hacker News, reddit, and various media.

[LSTM1] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF.

More.

[LSTM2] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000.

PDF.

The "vanilla LSTM architecture" with forget gates that everybody is using today, e.g., in Google's Tensorflow.

[LSTM13]

F. A. Gers and J. Schmidhuber.

LSTM Recurrent Networks Learn Simple Context Free and

Context Sensitive Languages.

IEEE Transactions on Neural Networks 12(6):1333-1340, 2001.

PDF.

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[MIR] J. Schmidhuber (AI Blog, Oct 2019, updated 2025). Deep Learning: Our Miraculous Year 1990-1991. Preprint

arXiv:2005.05744. The deep learning neural networks (NNs) of our team have revolutionised pattern recognition & machine learning & AI. Many of the basic ideas behind this revolution were published within fewer than 12 months in our "Annus Mirabilis" 1990-1991 at TU Munich, including principles of (1)

LSTM, the most cited AI of the 20th century (based on constant error flow through residual connections); (2) ResNet, the most cited AI of the 21st century (based on our LSTM-inspired Highway Network, 10 times deeper than previous NNs); (3)

GAN (for artificial curiosity and creativity); (4) Transformer (the T in ChatGPT—see the 1991 Unnormalized Linear Transformer); (5) Pre-training for deep NNs (the P in ChatGPT); (6) NN distillation (see DeepSeek); (7) recurrent World Models, and more.

[MLP1] D. C. Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint.

Showed that plain backprop for deep standard NNs is sufficient to break benchmark records, without any unsupervised pre-training.

[MLP2] J. Schmidhuber

(AI Blog, Sep 2020). 10-year anniversary of supervised deep learning breakthrough (2010). No unsupervised pre-training. By 2010, when compute was 100 times more expensive than today, both the feedforward NNs[MLP1] and the earlier recurrent NNs of Schmidhuber's team were able to beat all competing algorithms on important problems of that time.

[MLP3] J. Schmidhuber

(AI Blog, 2025). 2010: Breakthrough of end-to-end deep learning (no layer-by-layer training, no unsupervised pre-training). The rest is history.

By 2010, when compute was 1000 times more expensive than in 2025, both our feedforward NNs[MLP1] and our earlier recurrent NNs were able to beat all competing algorithms on important problems of that time.

This deep learning revolution quickly spread from Europe to North America and Asia.

[MOST]

J. Schmidhuber (AI Blog, 2021, updated 2025). The most cited neural networks all build on work done in my labs: 1. Long Short-Term Memory (LSTM), the most cited AI of the 20th century. 2. ResNet (open-gated Highway Net), the most cited AI of the 21st century. 3. AlexNet & VGG Net (the similar but earlier DanNet of 2011 won 4 image recognition challenges before them). 4. GAN (an instance of Adversarial Artificial Curiosity of 1990). 5. Transformer variants—see the 1991 unnormalised linear Transformer (ULTRA). Foundations of Generative AI were published in 1991: the principles of GANs (now used for deepfakes), Transformers (the T in ChatGPT), Pre-training for deep NNs (the P in ChatGPT), NN distillation, and the famous DeepSeek—see the tweet.

[MOZ]

M. Mozer.

A Focused Backpropagation Algorithm for Temporal Pattern Recognition.

Complex Systems, vol 3(4), p. 349-381, 1989.

[NOB] J. Schmidhuber.

A Nobel Prize for Plagiarism.

Technical Report IDSIA-24-24.

Sadly, the Nobel Prize in Physics 2024 for Hopfield & Hinton is a Nobel Prize for plagiarism. They republished methodologies developed in Ukraine and Japan by Ivakhnenko and Amari in the 1960s & 1970s, as well as other techniques, without citing the original papers. Even in later surveys, they didn't credit the original inventors (thus turning what may have been unintentional plagiarism into a deliberate form). None of the important algorithms for modern Artificial Intelligence were created by Hopfield & Hinton.

See also popular tweet1, tweet2, and LinkedIn post.

[PLAN]

J. Schmidhuber (AI Blog, 2020). 30-year anniversary of planning & reinforcement learning with recurrent world models and artificial curiosity (1990). This work also introduced high-dimensional reward signals, deterministic policy gradients for RNNs, and the GAN principle (widely used today). Agents with adaptive recurrent world models even suggest a simple explanation of consciousness & self-awareness.

[PLAN4]

J. Schmidhuber.

On Learning to Think: Algorithmic Information Theory for Novel Combinations of Reinforcement Learning Controllers and Recurrent Neural World Models.

Report arXiv:1210.0118 [cs.AI], 2015.

[PLAN5]

J. Schmidhuber. One Big Net For Everything. Preprint arXiv:1802.08864 [cs.AI], Feb 2018. See also the DeepSeek tweet of Jan 2025.

[R2] Reddit/ML, 2019. J. Schmidhuber really had GANs in 1990.

[R4] Reddit/ML, 2019. Five major deep learning papers by G. Hinton did not cite similar earlier work by J. Schmidhuber.

[R7] Reddit/ML, 2019. J. Schmidhuber on Seppo Linnainmaa, inventor of backpropagation in 1970.

[SNT]

J. Schmidhuber, S. Heil (1996).

Sequential neural text compression.

IEEE Trans. Neural Networks, 1996.

PDF.

A probabilistic language model based on predictive coding;

an earlier version appeared at NIPS 1995.

[T22] J. Schmidhuber (AI Blog, 2022).

Scientific Integrity and the History of Deep Learning: The 2021 Turing Lecture, and the 2018 Turing Award. Technical Report IDSIA-77-21 (v3), IDSIA, Lugano, Switzerland, 2021-2022.

[TR1]

A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser, I. Polosukhin (2017). Attention is all you need. NIPS 2017, pp. 5998-6008.

[TR2]

J. Devlin, M. W. Chang, K. Lee, K. Toutanova (2018). BERT: Pre-training of deep bidirectional transformers for language understanding. Preprint arXiv:1810.04805.

[TR3] K. Tran, A. Bisazza, C. Monz. The Importance of Being Recurrent for Modeling Hierarchical Structure. EMNLP 2018, p. 4731-4736. ArXiv preprint 1803.03585.

[TR4]

M. Hahn. Theoretical Limitations of Self-Attention in Neural Sequence Models. Transactions of the Association for Computational Linguistics, Volume 8, p. 156-171, 2020.

[TR5]

A. Katharopoulos, A. Vyas, N. Pappas, F. Fleuret. Transformers are RNNs: Fast autoregressive Transformers with linear attention. In Proc. Int. Conf. on Machine Learning (ICML), July 2020.

[TR6]

K. Choromanski, V. Likhosherstov, D. Dohan, X. Song, A. Gane, T. Sarlos, P. Hawkins, J. Davis, A. Mohiuddin, L. Kaiser, et al. Rethinking attention with Performers. In Int. Conf. on Learning Representations (ICLR), 2021.

[ULTRA]

References on the 1991 unnormalised linear Transformer (ULTRA): original tech report (1991) [FWP0]. Journal publication (1992) [FWP1]. Recurrent ULTRA extension (1993) introducing the terminology of learning "internal spotlights of attention" [FWP2]. Modern "quadratic" Transformer (2017: "attention is all you need") scaling quadratically in input size [TR1]. Papers of 2020-21 using the terminology "linearized attention" for more efficient "linear Transformers" that scale linearly [TR5,TR6]. 2021 paper [FWP6] pointing out that ULTRA dates back to 1991 [FWP0] when compute was a million times more expensive. ULTRA overview (2021) [FWP]. See the T in ChatGPT! See also surveys [DLH][DLP], 2022 tweet for ULTRA's 30-year anniversary, and 2024 tweet.

[UN]

J. Schmidhuber (AI Blog, 2021). 30-year anniversary. 1991: First very deep learning with unsupervised or self-supervised pre-training. Unsupervised hierarchical predictive coding (with self-supervised target generation) finds compact internal representations of sequential data to facilitate downstream deep learning. The hierarchy can be distilled into a single deep neural network (suggesting a simple model of conscious and subconscious information processing). 1993: solving problems of depth >1000.

[UN0]

J. Schmidhuber.

Neural sequence chunkers.

Technical Report FKI-148-91, Institut für Informatik, Technische

Universität München, April 1991.

PDF.

Unsupervised/self-supervised learning and predictive coding are used

in a deep hierarchy of recurrent neural networks (RNNs)

to find compact internal

representations of long sequences of data,

across multiple time scales and levels of abstraction.

Each RNN tries to solve the pretext task of predicting its next input, sending only unexpected inputs to the next RNN above.

The resulting compressed sequence representations

greatly facilitate downstream supervised deep learning such as sequence classification.

By 1993, the approach solved problems of depth 1000 [UN2]

(requiring 1000 subsequent computational stages/layers—the more such stages, the deeper the learning).

A variant collapses the hierarchy into a single deep net.

It uses a so-called conscious chunker RNN

which attends to unexpected events that surprise

a lower-level so-called subconscious automatiser RNN.

The chunker learns to understand the surprising events by predicting them.

The automatiser uses a

neural knowledge distillation procedure

to compress and absorb the formerly conscious insights and

behaviours of the chunker, thus making them subconscious.

The systems of 1991 allowed for much deeper learning than previous methods.

[UN1] J. Schmidhuber. Learning complex, extended sequences using the principle of history compression. Neural Computation, 4(2):234-242, 1992. Based on TR FKI-148-91, TUM, 1991.[UN0] PDF.

First working Deep Learner based on a deep RNN hierarchy (with different self-organising time scales),

overcoming the vanishing gradient problem through unsupervised pre-training and predictive coding (with self-supervised target generation).

Also: compressing or distilling a teacher net (the chunker) into a student net (the automatiser) that does not forget its old skills; such approaches are now widely used. More.

[UN2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

An ancient experiment on "Very Deep Learning" with credit assignment across 1200 time steps or virtual layers and unsupervised / self-supervised pre-training for a stack of recurrent NNs can be found here (depth > 1000).

[UN3]

J. Schmidhuber, M. C. Mozer, and D. Prelinger.

Continuous history compression.

In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors,

Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95.

Augustinus, 1993.

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J. Schmidhuber). PDF.

More on the Fundamental Deep Learning Problem.

[W45]

G. H. Wannier (1945).

The Statistical Problem in Cooperative Phenomena.

Rev. Mod. Phys. 17, 50.
