Abstract.

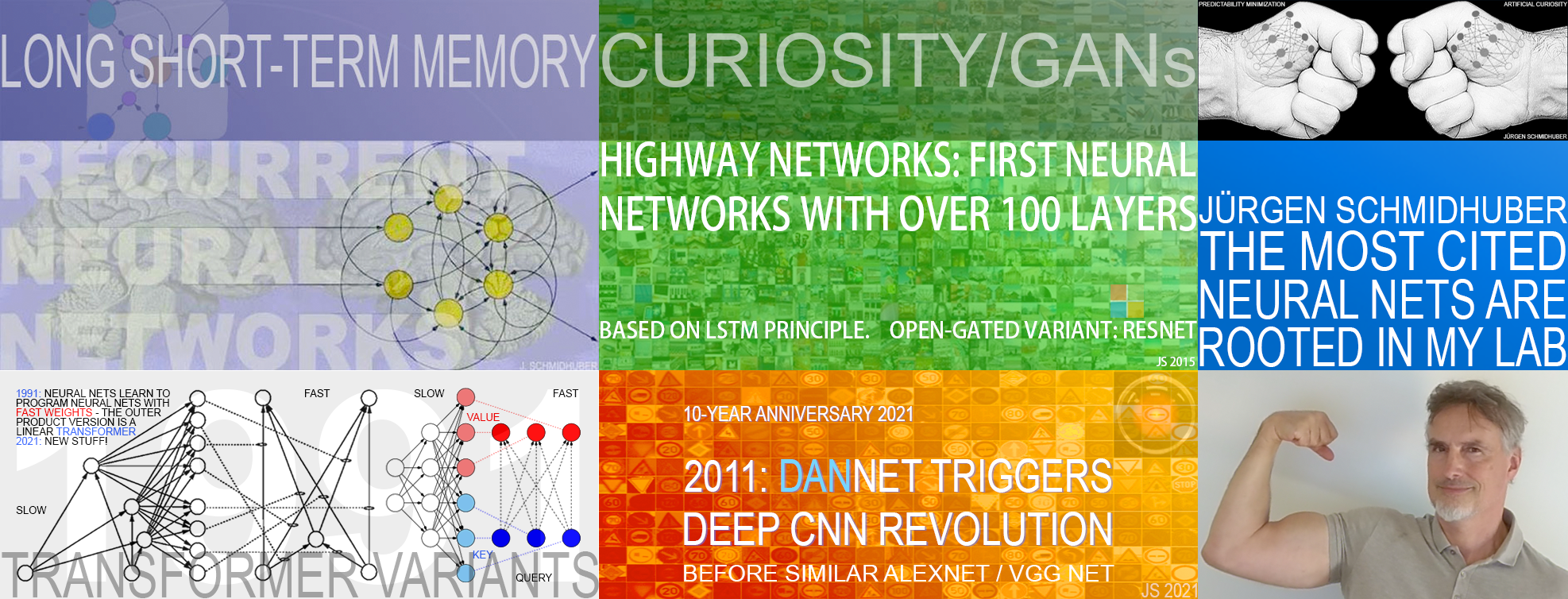

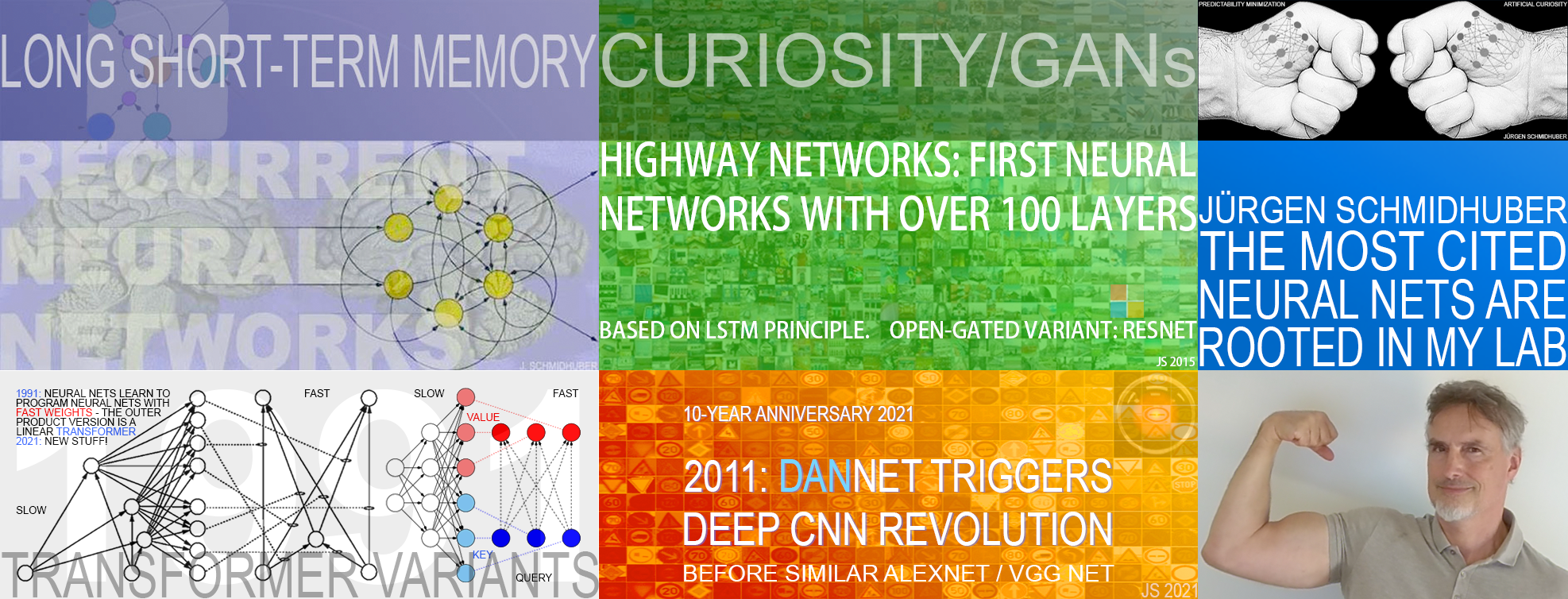

Modern Artificial Intelligence is dominated by artificial neural networks (NNs) and deep learning.[DL1-4][DLH][WHO4-11] Foundations of the most popular NNs originated in my labs at TU Munich and IDSIA. Here I discuss: (1) Long Short-Term Memory[LSTM0-17] (LSTM), the most cited AI of the 20th century, (2) the most cited AI of the 21st century, an open-gated variant of our earlier Highway Net[HW1-25b] (a gated ResNet) which was 10 times deeper than previous feedforward NNs, (3) AlexNet and VGG Net, two highly cited NNs building on our similar earlier DanNet:[GPUCNN1-9] the first deep convolutional NN[CN79-25b] to win image recognition competitions and achieve superhuman performance (2011), (4) Generative Adversarial Networks[GAN90-25] (an instance of my earlier Adversarial Artificial Curiosity[AC90-20][DLH]), and (5) Transformers (the 2nd most-cited AI of the 21st century; unnormalized linear Transformers are formally equivalent to my 1991 Fast Weight Programmers).[ULTRA][TR1-8][FWP0-1,6][DLH]

Most of this started with our Annus Mirabilis of 1990-1991,[MIR] when compute was millions of times more expensive than today.

Back then we laid foundations of Generative AI, publishing principles of GANs (now used for deepfakes),[GAN90-25][DLH] Transformers (the T in ChatGPT),[ULTRA][TR1-8][FWP0-1,6][DLH] pre-training for deep NNs (the P in ChatGPT),[UN][UN0-3] and NN distillation[UN1-3][DLP] (key for DeepSeek[DS1][UN0-3][PLAN4-5]).

As of 2025, three of the eight most-cited scientific articles of the 21st century, across all disciplines, are about NNs: #1, #7, and #8.[MOST25] They are based directly on the techniques published in our previous work mentioned above: (1, 2) → #1, (5) → #7, (3) → #8. In fact, as of 2025, the two most frequently cited scientific articles of all time (with the most Google Scholar citations within 3 years—manuals excluded) are both directly based on our 1991 work.[MOST26]

(1) LSTM

According to Google Scholar, the most cited AI paper of the 20th century is our 1997 journal publication on Long Short-Term Memory (LSTM).[LSTM1][DLH] To the best of my knowledge, it is also the most cited Computer Science paper of the 20th century (Shannon's information theory paper[SHA48] is about math, not CS).

LSTM NNs are now permeating the modern world, with innumerable applications, including healthcare,[DEC] learning robots,[LSTM-RL][LSTMPG][OAI1,1a] game playing,[LSTMPG][OAI2,2a][DM3] speech processing,[AM16][GSR][GSR15-19] and machine translation.[GT16][WU][FB17][DEC] They are used billions of times a day by countless people.[DL4] This led Bloomberg to say LSTM is arguably the most commercial AI achievement.[AV1][DL4][MIR](Sec. 4) LSTMs as we know them today go beyond earlier work[MOZ] and were made possible through my students Sepp Hochreiter, Felix Gers, Alex Graves, Daan Wierstra, and others.[LSTM0-17,PG]

(2) Highway Net to ResNet

The most cited paper of the 21st century is about deep residual learning (Dec 2015).[HW25][MOST25,25b] It cites our earlier Highway Net (May 2015) and describes a variant thereof: the ResNet.[HW1-25b][R5][DLH] Highway Nets were the first working gradient-based feedforward NNs (FNNs) with hundreds of layers, 10 times deeper than previous FNNs. They can be so deep because their gates are initialized to create residual connections, while ResNets are like Highway Nets whose gates remain always open.[HW1-25b] In turn, Highway Nets are gated ResNets. Highway Nets showed how very deep NNs can be trained by gradient descent, and perform roughly as well as ResNets on ImageNet.[HW3]

They were made possible through my students Rupesh Kumar Srivastava and Klaus Greff.

The USPTO granted a patent for this invention to NNAISENSE in 2021.

All of this was based on the residual connections introduced in 1991 by my student Sepp Hochreiter to solve the vanishing gradient problem.[VAN1][HW25]

Remarkably, the most cited AIs of the 20th and 21st century (1 & 2) are closely connected, because the Highway Net is actually the feedforward NN version of our recurrent LSTM.[LSTM2][HW25] Deep learning is all about NN depth.[DL1] LSTMs brought essentially unlimited depth to supervised recurrent NNs; Highway Nets brought it to feedforward NNs.
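
The relation between gated Highway layers and open-gated residual layers described above can be illustrated with a minimal NumPy sketch. It is not the original implementation: it assumes the commonly used formulation y = H(x) * T(x) + x * C(x) with a separate transform gate T and carry gate C, a tanh transform H, and sigmoid gates. All function and variable names are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def transform(x, W_h, b_h):
    """Nonlinear transform H(x), shared by both layer types (assumed tanh)."""
    return np.tanh(x @ W_h + b_h)

def highway_layer(x, W_h, b_h, W_t, b_t, W_c, b_c):
    """Gated layer: y = H(x) * T(x) + x * C(x) with learned gates T and C."""
    t = sigmoid(x @ W_t + b_t)  # transform gate, values in (0, 1)
    c = sigmoid(x @ W_c + b_c)  # carry gate, values in (0, 1)
    return transform(x, W_h, b_h) * t + x * c

def residual_layer(x, W_h, b_h):
    """Open-gated special case T(x) = C(x) = 1, i.e. y = H(x) + x."""
    return transform(x, W_h, b_h) + x

rng = np.random.default_rng(0)
d = 8                                  # layer width (illustrative)
x = rng.normal(size=(4, d))            # batch of 4 input vectors
W_h = rng.normal(scale=0.1, size=(d, d))
b_h = np.zeros(d)

# Zero gate weights plus large positive biases hold both gates (almost) fully open.
W_zero = np.zeros((d, d))
b_open = np.full(d, 50.0)

y_highway_open = highway_layer(x, W_h, b_h, W_zero, b_open, W_zero, b_open)
y_residual = residual_layer(x, W_h, b_h)
```

With both gates held open, the gated layer produces the same output as the residual layer. With trainable gates, each unit can instead learn how much of its input to carry through unchanged.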

(3) DanNet to AlexNet to VGG Net

The fourth most-cited AI paper of the 21st century[MOST25] describes AlexNet (2012),[GPUCNN4] a convolutional NN[CNN1-4] (CNN) similar to our earlier DanNet (2011).[GPUCNN1-3][DLH] DanNet had a temporary monopoly on winning computer vision contests and won 4 of them before AlexNet arrived on the scene.[GPUCNN5][R5-6][CN25b] At the IJCNN 2011 computer vision competition in Silicon Valley, DanNet crushed the competition, performing three times better than the closest competitor (by LeCun's team), and twice as well as humans.

AlexNet cited DanNet but also used ReLUs (1969)[RELU1-2] and stochastic delta rule/dropout (1990)[Drop1-3] without citation.[DLP][T22](Sec. XIV)

DanNet and AlexNet actually followed our earlier work on supervised deep NNs (2010),[MLP1-3] which abandoned the unsupervised pre-training for deep NNs introduced by myself in 1991[UN][UN0-3] and later championed by an AlexNet co-author.[UN4][VID1]

Another of the most cited NNs of the 21st century, the VGG network,[GPUCNN9] is also similar to DanNet (also using its trick of increasing NN depth through small convolution filters). Other highly cited CNNs[RCNN1-3] further extended the work of 2011. DanNet was made possible through my postdoc Dan Ciresan with the help of Ueli Meier and Jonathan Masci.[GPUCNN1-3,5-8]

(4) Curiosity to GANs

Another highly cited NN paper (2014) on Generative Adversarial Networks (GANs)[GAN14] describes a system similar to my adversarial NNs using Predictability Minimization for creating disentangled representations (1991).[PM0-2][GAN20][R2][MIR](Sec. 7) In fact, GANs are a simple application of my even earlier popular Adversarial Curiosity Principle from 1990,[AC90-20][MIR](Sec. 5) where two dueling NNs (a generator and a predictor that sees the generator's output) are trying to maximize each other's loss in a minimax game:[AC](Sec. 1) the first NNs that were both generative and adversarial. GANs are an instance of this where the trials are constrained such that they remain very short, like in bandit problems.[GAN20][GAN25][AC][DLH][DLP]

(5) Linear Transformers to Quadratic Transformers

The third most-cited AI paper of the 21st century[MOST25] is about Transformer NNs (2017).[TR1][GPT3] In March 1991, when compute was a million times more expensive than in 2022, even before the LSTM, I published the first Transformer variant, which is now called the unnormalized linear Transformer (ULTRA).[ULTRA][FWP0] It had to be more efficient than Google's 2017 quadratic Transformer:[TR1][TR25] ULTRA's computational costs scale linearly in input size, rather than quadratically (in 1991, no journal would have accepted an NN that scales quadratically). My 1993 paper on recurrent ULTRA extensions[FWP2] talked about learning "internal spotlights of attention"; compare the recent attention terminology, e.g., "attention is all you need,"[TR1] and tweets of 2022 and 2023.
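
The linear versus quadratic scaling mentioned above can be made concrete with a small NumPy sketch. It is an illustration under stated assumptions, not the 1991 system. Keys, values, and queries are random arrays here, whereas in a Fast Weight Programmer a slow net would generate them. Inputs are processed causally, and all names and sizes are hypothetical. The first function accumulates additive outer products into a fast weight matrix, at a cost linear in the sequence length n. The second computes the same outputs through an n x n score matrix without softmax, at a cost quadratic in n.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d_k, d_v = 6, 4, 3            # sequence length, key size, value size
K = rng.normal(size=(n, d_k))    # keys
V = rng.normal(size=(n, d_v))    # values
Q = rng.normal(size=(n, d_k))    # queries

def fast_weight_outputs(K, V, Q):
    """Linear in n: add the outer product v_t k_t^T to W, then apply W to q_t."""
    W = np.zeros((V.shape[1], K.shape[1]))   # fast weight matrix
    outputs = []
    for k_t, v_t, q_t in zip(K, V, Q):
        W += np.outer(v_t, k_t)              # additive outer-product update
        outputs.append(W @ q_t)              # read out with the current query
    return np.stack(outputs)

def unnormalized_attention_outputs(K, V, Q):
    """Quadratic in n: causal score matrix without softmax, then weighted sum of values."""
    scores = np.tril(Q @ K.T)                # scores[t, i] = q_t . k_i for i <= t, else 0
    return scores @ V

y_fast = fast_weight_outputs(K, V, Q)
y_attn = unnormalized_attention_outputs(K, V, Q)
```

Both functions return the same array up to floating-point rounding. The fast weight version never materializes the n x n score matrix, which is where the linear scaling in input size comes from.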

As of 2025, everybody is talking about Generative AI. As shown above, in 1990-91, I laid foundations of this field, publishing principles of (4) GANs (1990, now used for deepfakes), (5) Transformers (1991, now used in ChatGPT and similar large language models or LLMs), and (6) self-supervised pre-training for deep NNs (1991, now used to pre-train LLMs).[UN][UN0-3]

The world's most valuable companies (NVIDIA, Microsoft, Apple, Google, Meta, others) were deeply influenced by our contributions (1-5) above.[DL4][DEC][MLP3] The paper on ResNet, the open-gated variant of our earlier Highway Net[HW1-25] (2), was published by Microsoft, and its first author was hired by Facebook. Most of the AlexNet/VGG Net authors,[GPUCNN4,9] who built on our 2011 DanNet[GPUCNN1-3,5-8] (3), went to Google. Google also published the 2017 quadratic Transformer[TR1] closely related to my unnormalized linear Transformer of 1991[ULTRA][FWP0-1,6] (5), and bought the company DeepMind co-founded by a student from my lab (now the core of Google).[MIR] The second author of the DanNet papers[GPUCNN1-3] (3) and the first author of a 2014 paper on GANs[GAN14] (an instance of my ancient Adversarial Curiosity[GAN90-25]) (4) were hired by Apple. All of these companies have also made extensive use of our LSTM[DL4][DEC] (1).

In January 2025, the DeepSeek Sputnik[DS1] wiped out a trillion USD from the stock market. DeepSeek-R1[DS1] used elements of my 2015 reinforcement learning (RL) prompt engineer[PLAN4] and its 2018 refinement,[PLAN5] which collapses the 2015 RL machine and its world model[PLAN4] into a single net through my neural net distillation procedure of 1991:[UN0-3][UN][DLP] a distilled chain of thought system. See the popular tweet of 31 Jan 2025.
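
The general idea behind NN distillation, training one net to imitate the outputs of another, can be sketched as follows. This is a generic teacher-student toy example under stated assumptions, not the 1991 procedure and not DeepSeek's pipeline. The "teacher" is a small fixed net with random weights, the "student" is a smaller single-layer net, and all names, sizes, and the learning rate are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(256, 4))                 # unlabeled inputs

# Hypothetical fixed teacher: a two-layer tanh net with random weights.
W1 = rng.normal(size=(4, 16))
W2 = rng.normal(size=(16, 1)) / 4.0
teacher_out = np.tanh(np.tanh(X @ W1) @ W2)   # targets the student should imitate

def student_forward(X, w):
    """Smaller student: a single tanh layer."""
    return np.tanh(X @ w)

def imitation_loss(w):
    """Mean squared difference between student and teacher outputs."""
    return float(np.mean((student_forward(X, w) - teacher_out) ** 2))

w = np.zeros((4, 1))                          # student weights
initial_loss = imitation_loss(w)
for _ in range(300):
    s = student_forward(X, w)
    # Gradient of the mean squared imitation error w.r.t. the student weights.
    grad = 2.0 * X.T @ ((s - teacher_out) * (1.0 - s ** 2)) / len(X)
    w -= 0.1 * grad                           # plain gradient descent step
final_loss = imitation_loss(w)
```

The student never sees any labels, only the teacher's outputs on the same inputs. Comparing `initial_loss` with `final_loss` shows how much of the teacher's behavior the smaller net has absorbed.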

Concluding remarks

Disclaimer. Of course, citation counts are poor indicators of truly pioneering work. As I pointed out in Nature (2011):

"like the less-than-worthless collateralized debt obligations that drove the 2008 financial bubble, citations are easy to print and inflate, providing an incentive for professors to maximize citation counts instead of scientific progress—witness how relatively unknown scientists can now collect more citations than the most influential founders of their fields."[NAT1]

Deep Learning History. As mentioned earlier,[MIR](Sec. 21) when only consulting surveys from the Anglosphere, it is not always clear[NOB][DLP] that deep learning was first conceived outside of it. Deep learning was, in fact, born in 1965 in Ukraine (back then the USSR) with the first nets of arbitrary depth that really learned,[DEEP1-2][R8][NOB][WHO5] going beyond the "shallow learning" (linear regression or 1-layer neural networks) of Gauss and Legendre (1795-1805).[DL1][DLH] Soon afterwards, multilayer perceptrons learned internal representations through stochastic gradient descent in Japan.[GD1-2][DLH] A few years later, modern backpropagation[BP1-6][BPA-C] (the reverse mode of automatic differentiation) was published in Finland (1970).[BP1] The basic deep convolutional NN architecture (now widely used) was invented in the 1970s in Japan,[CN79][CN25] where NNs with convolutions were later also combined with weight sharing and backpropagation (1987);[CN87] the first backpropagation-trained 2D CNNs emerged there in 1988.[CN88] We are standing on the shoulders of these works and many others (see the 888 references in my award-winning survey[DL1] if you want to understand just how much we borrow from these).

Gradient-based unsupervised or self-supervised adversarial networks that duel each other in a minimax game originated in Munich[GAN90-25] (also the birthplace of the first self-driving cars in traffic[AUT]). The principles of linear Transformers,[ULTRA][FWP,FWP0][WHO10] NN distillation,[UN][UN0-3][WHO9] and the fundamental problem of backpropagation-based Deep Learning[VAN1][WHO11] were also discovered in Munich (1991). So were the first "modern" Deep Learners to overcome this problem, through (1) unsupervised or self-supervised pre-training[UN-UN2][NOB] (1991), and (2) Long Short-Term Memory.[LSTM0-7][VAN1] LSTM was further developed in Switzerland, which is also home of the first image recognition contest-winning deep GPU-based CNNs,[DAN][DAN1][GPUCNN3,5] the first superhuman visual pattern recognition (2011),[GPUCNN3,5][DAN] and the first very deep, working feedforward NNs with hundreds of layers.[HW1-25]

In the 2010s and 2020s, all of this work was feverishly built on by an outstanding community of machine learning researchers, engineers, and practitioners to create amazing things that have impacted the lives of billions of people worldwide.[DL4][DEC]

Acknowledgments

Thanks for useful comments to Dylan Ashley, Kazuki Irie, Sjoerd van Steenkiste, Aleksandar Stanic, Cesare Alippi, Róbert Csordás, Sepp Hochreiter, Mike Mozer, Michael Bronstein, Christoph von der Malsburg, David Ha, and Stephen J. Hanson. Since science is about self-correction, let me know under juergen@idsia.ch if you can spot any remaining error. The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites. This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.


References

[AC]

J. Schmidhuber (AI Blog, 2021). 3 decades of artificial curiosity & creativity. Schmidhuber's artificial scientists not only answer given questions but also invent new questions. They achieve curiosity through: (1990) the principle of generative adversarial networks, (1991) neural nets that maximise learning progress, (1995) neural nets that maximise information gain (optimally since 2011), (1997) adversarial design of surprising computational experiments, (2006) maximizing compression progress like scientists/artists/comedians do, (2011) PowerPlay... Since 2012: applications to real robots.

[AC90]

J. Schmidhuber. Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990. PDF. The first paper on online planning with reinforcement learning recurrent neural networks (NNs) and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game.

[AC90b]

J. Schmidhuber. A possibility for implementing curiosity and boredom in model-building neural controllers. In J. A. Meyer and S. W. Wilson, editors, Proc. of the International Conference on Simulation of Adaptive Behavior: From Animals to Animats, pages 222-227. MIT Press/Bradford Books, 1991. PDF. More.

[AC09]

J. Schmidhuber. Art & science as by-products of the search for novel patterns, or data compressible in unknown yet learnable ways. In M. Botta (ed.), Et al. Edizioni, 2009, pp. 98-112. PDF. (More on artificial scientists and artists.)

[AC10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010.

IEEE link.

PDF.

[AC20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991).

Neural Networks, Volume 127, p 58-66, 2020.

Preprint arXiv/1906.04493.

[AM16]

Blog of Werner Vogels, CTO of Amazon (Nov 2016): Amazon's Alexa "takes advantage of bidirectional long short-term memory (LSTM) networks using a massive amount of data to train models that convert letters to sounds and predict the intonation contour. This technology enables high naturalness, consistent intonation, and accurate processing of texts."

[ATT] J. Schmidhuber (AI Blog, 2020, updated 2025). 30-year anniversary of end-to-end differentiable sequential neural attention. Plus goal-conditional reinforcement learning. Schmidhuber had both hard attention for foveas (1990) and soft attention in form of Transformers with linearized self-attention (1991-93).[FWP] Today, both types are very popular.

[ATT0] J. Schmidhuber and R. Huber. Learning to generate focus trajectories for attentive vision. Technical Report FKI-128-90, Institut für Informatik, Technische Universität München, 1990. PDF.

[ATT1] J. Schmidhuber and R. Huber. Learning to generate artificial fovea trajectories for target detection. International Journal of Neural Systems, 2(1 & 2):135-141, 1991. Based on TR FKI-128-90, TUM, 1990.

PDF.

More.

[ATT2]

J. Schmidhuber. Learning algorithms for networks with internal and external feedback. In D. S. Touretzky, J. L. Elman, T. J. Sejnowski, and G. E. Hinton, editors, Proc. of the 1990 Connectionist Models Summer School, pages 52-61. San Mateo, CA: Morgan Kaufmann, 1990. PS. (PDF.)

[ATT3]

H. Larochelle, G. E. Hinton. Learning to combine foveal glimpses with a third-order Boltzmann machine. NIPS 2010. This work is very similar to [ATT0-2] which the authors did not cite. In fact, the 2nd author was the reviewer of a 1990 paper[ATT2] which summarised in its Section 5 Schmidhuber's early work on attention: the first implemented neural system for combining glimpses that jointly trains a recognition & prediction component with an attentional component (the fixation controller). Two decades later, he wrote about his own work:[ATT3] "To our knowledge, this is the first implemented system for combining glimpses that jointly trains a recognition component ... with an attentional component (the fixation controller)." See [MIR](Sec. 9)[R4].

[AUT]

J. Schmidhuber (AI Blog, 2005). Highlights of robot car history. Around 1986, Ernst Dickmanns and his group at Univ. Bundeswehr Munich built the world's first real autonomous robot cars, using saccadic vision, probabilistic approaches such as Kalman filters, and parallel computers. By 1994, they were in highway traffic, at up to 180 km/h, automatically passing other cars.

[AV1] A. Vance. Google Amazon and Facebook Owe Jürgen Schmidhuber a Fortune—This Man Is the Godfather the AI Community Wants to Forget. Business Week,

Bloomberg, May 15, 2018.

[BPA]

H. J. Kelley. Gradient Theory of Optimal Flight Paths. ARS Journal, Vol. 30, No. 10, pp. 947-954, 1960.

Precursor of modern backpropagation.[BP1-4]

[BPB]

A. E. Bryson. A gradient method for optimizing multi-stage allocation processes. Proc. Harvard Univ. Symposium on digital computers and their applications, 1961.

[BPC]

S. E. Dreyfus. The numerical solution of variational problems. Journal of Mathematical Analysis and Applications, 5(1): 30-45, 1962.

[BP1] S. Linnainmaa. The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 1970.

See chapters 6-7 and FORTRAN code on pages 58-60.

PDF.

See also BIT 16, 146-160, 1976.

Link.

The first publication on "modern" backpropagation, also known as the reverse mode of automatic differentiation.

[BP2] P. J. Werbos. Applications of advances in nonlinear sensitivity analysis. In R. Drenick, F. Kozin, (eds): System Modeling and Optimization: Proc. IFIP, Springer, 1982. PDF. First application of backpropagation[BP1] to NNs (concretizing thoughts in Werbos' 1974 thesis).

[BP4] J. Schmidhuber (AI Blog, 2014; updated 2025).

Who invented backpropagation?

See also LinkedIn post (2025).

[BP5]

A. Griewank (2012). Who invented the reverse mode of differentiation?

Documenta Mathematica, Extra Volume ISMP (2012): 389-400.

[BP6]

S. I. Amari (1977).

Neural Theory of Association and Concept Formation.

Biological Cybernetics, vol. 26, p. 175-185, 1977.

See Section 3.1 on using gradient descent for learning in multilayer networks.

[CN69]

K. Fukushima (1969). Visual feature extraction by a multilayered network of analog threshold elements. IEEE Transactions on Systems Science and Cybernetics. 5 (4): 322-333. doi:10.1109/TSSC.1969.300225. This work described rectified linear units or ReLUs, now widely used in CNNs and other neural nets.

[CN79] K. Fukushima (1979). Neural network model for a mechanism of pattern

recognition unaffected by shift in position—Neocognitron.

Trans. IECE, vol. J62-A, no. 10, pp. 658-665, 1979.

The first deep convolutional neural network architecture, with alternating convolutional layers and downsampling layers. In Japanese. English version: [CN80]. More in Scholarpedia.

[CN80]

K. Fukushima: Neocognitron: a self-organizing neural network model for a mechanism of pattern recognition unaffected by shift in position.

Biological Cybernetics, vol. 36, no. 4, pp. 193-202 (April 1980).

Link.

[CN86]

Movie produced by K. Fukushima, S. Miyake and T. Ito (NHK Science and Technical Research Laboratories), in 1986.

YouTube Link. See a popular video tweet by J. Schmidhuber (2025).

[CN87] A. Waibel. Phoneme Recognition Using Time-Delay Neural Networks. Meeting of IEICE, Tokyo, Japan, 1987. Application of backpropagation[BP1][BP2] and weight sharing to a 1-dimensional convolutional architecture.

[CN87b]

T. Homma, L. Atlas; R. Marks II (1987). An Artificial Neural Network for Spatio-Temporal Bipolar Patterns: Application to Phoneme Classification. Advances in Neural Information Processing Systems (N(eur)IPS), 1:31-40.

[CN88]

W. Zhang, J. Tanida, K. Itoh, Y. Ichioka. Shift-invariant pattern recognition neural network and its optical architecture. Proc. Annual Conference of the Japan Society of Applied Physics, 1988.

PDF.

First "modern" backpropagation-trained 2-dimensional CNN, applied to character recognition.

[CN89]

W. Zhang, J. Tanida, K. Itoh, Y. Ichioka (received 13 April 1989). Parallel distributed processing model with local space-invariant interconnections and its optical architecture. Applied Optics / Vol. 29, No. 32, 1990. PDF.

First journal submission on a "modern" backpropagation-trained 2-dimensional CNN (applied to character recognition).

[CN89b] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, L. D. Jackel (received July 1989). Backpropagation Applied to Handwritten Zip Code Recognition, Neural Computation, 1(4):541-551, 1989.

Second journal submission on a "modern" backpropagation-trained 2-dimensional CNN (applied to character recognition). Compare [CN88][CN89].

[CN89c] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano and K. J. Lang. Phoneme recognition using time-delay neural networks. IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 37, no. 3, pp. 328-339, March 1989. Based on [CN87] (1-dimensional convolutions).

[CN89d]

J. Hampshire, A. Waibel (1989). Connectionist architectures for multi-speaker phoneme recognition. In Advances in Neural Information Processing Systems, N(eur)IPS'2. Conference publication on 2D-TDNNs or 2D-CNNs for speech recognition.

[CN90]

K. Yamaguchi, K. Sakamoto, A. Kenji, T. Akabane, Y. Fujimoto. A Neural Network for Speaker-Independent Isolated Word Recognition. First International Conference on Spoken Language Processing (ICSLP 90), Kobe, Japan, Nov 1990. A 1-dimensional NN with convolutions using Max-Pooling instead of Fukushima's Spatial Averaging.[CN79]

[CN93] Weng, J., Ahuja, N., and Huang, T. S. (1993). Learning recognition and segmentation of 3-D objects from 2-D images. Proc. 4th Intl. Conf. Computer Vision, Berlin, Germany, pp. 121-128. A 2-dimensional CNN whose downsampling layers use Max-Pooling (which has become very popular) instead of Fukushima's Spatial Averaging.[CN79]

[CN91]

W. Zhang, A. Hasegawa, K. Itoh, Y. Ichioka. Image processing of human corneal endothelium based on a learning network. Applied Optics, Vol. 30, No. 29, 1991. First published CNN-based image segmentation.

[CN91b]

W. Zhang, A. Hasegawa, K. Itoh, Y. Ichioka. Error Back Propagation With Minimum-Entropy Weights: A Technique for Better Generalization of 2-D Shift-Invariant NNs. IJCNN, 1991. Got an IJCNN student paper award.

[CN92]

W. Zhang, A. Hasegawa, O. Matoba, K. Itoh, Y. Ichioka, K. Doi. Shift-invariant Neural Network for Image Processing: Learning and Generalization. SPIE Vol. 1709, Application of Artificial Neural Networks III, Orlando, 1992.

[CN94]

W. Zhang, K. Doi, M. Giger, Y. Wu, R. Nishikawa, R. Schmidt. Computerized detection of cluster microcalcifications in digital mammogram using a shift-invariant neural network. Medical Physics, 21(4), 1994. First CNN for object detection, commercialised by R2 Technology, which processed over 30 million mammography exams annually to aid radiologists in breast cancer detection.

[CN98]

Y. LeCun, L. Bottou, Y. Bengio, P. Haffner (1998). Gradient-based learning applied to document recognition. Proceedings of the IEEE. 86 (11): 2278-2324. This work about backpropagation-trained 2-dimensional CNNs for character recognition failed to cite the original work on this by Zhang et al. (1988).[CN88][CN89]

[CN99]

S. Behnke. Learning iterative image reconstruction in the neural abstraction pyramid. International Journal of Computational Intelligence and Applications, 1(4):427-438, 1999.

[CN03]

S. Behnke. Hierarchical Neural Networks for Image Interpretation, volume LNCS 2766 of Lecture Notes in Computer Science. Springer, 2003.

[CN07] M. A. Ranzato, Y. LeCun: A Sparse and Locally Shift Invariant Feature Extractor Applied to Document Images. Proc. ICDAR, 2007.

[CN08]

D. Ciresan. Recognition of handwritten numerical strings (in Romanian). UPT Doctoral Theses, Series 10, No. 10, Politehnica Publishing House, 2008. ISBN: 978-973-625-777-3. GPU-based convolutional neural networks recognise partially overlapping digits, without prior segmentation. 20 times faster than the CPU version.

[CN10]

D. Scherer, A. Mueller, S. Behnke. Evaluation of pooling operations in convolutional architectures for object recognition. In Proc. International Conference on Artificial Neural Networks (ICANN), pages 92-101, 2010.

[CN21] Bower Award Ceremony 2021: Jürgen Schmidhuber lauds Kunihiko Fukushima. YouTube video, 2021.

[CN25]

J. Schmidhuber.

Who invented convolutional neural networks? Technical Note IDSIA-17-25, IDSIA, 2025. See popular tweet.

[CN25b]

F. Chollet, ex-Google, creator of Keras (tweets, 3 Aug 2025): "The big breakthrough for convnets was the first GPU-accelerated CUDA implementation, which immediately started winning first place in image classification competitions. Remember when that happened? I do. That was Dan Ciresan in 2011 [...] it was an actual system that won 2 competitions and even got significant media coverage at the time. Most computer vision researchers knew about it in late 2011 / early 2012."

[DAN]

J. Schmidhuber (AI Blog, 2021).

10-year anniversary. In 2011, DanNet triggered the deep convolutional neural network (CNN) revolution. Named after Schmidhuber's outstanding postdoc Dan Ciresan, it was the first deep and fast CNN to win international computer vision contests, and had a temporary monopoly on winning them, driven by a very fast implementation based on graphics processing units (GPUs).

1st superhuman result in 2011.[DAN1] Now everybody is using this approach.

[DAN1]

J. Schmidhuber (AI Blog, 2011; updated 2021 for 10th birthday of DanNet): First superhuman visual pattern recognition. At the IJCNN 2011 computer vision competition in Silicon Valley, the artificial neural network called DanNet performed twice as well as humans, three times better than the closest artificial competitor (from LeCun's team), and six times better than the best non-neural method.

[DEC] J. Schmidhuber (AI Blog, 02/20/2020, updated 2025). The 2010s: Our Decade of Deep Learning / Outlook on the 2020s. The recent decade's most important developments and industrial applications based on our AI, with an outlook on the 2020s, also addressing privacy and data markets.

[DEEP1]

Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation. First working Deep Learners with many layers, learning internal representations.

[DEEP1a]

Ivakhnenko, Alexey Grigorevich. The group method of data handling; a rival of the method of stochastic approximation. Soviet Automatic Control 13 (1968): 43-55.

[DEEP2]

Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[DL1] J. Schmidhuber, 2015.

Deep learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

Got the first Best Paper Award ever issued by the journal Neural Networks, founded in 1988.

[DL2] J. Schmidhuber, 2015.

Deep Learning.

Scholarpedia, 10(11):32832.

[DL3] Y. LeCun, Y. Bengio, G. Hinton (2015). Deep Learning. Nature 521, 436-444.

HTML.

A "survey" of deep learning that does not mention the pioneering works of deep learning [T22][DLP].

[DL4] J. Schmidhuber (AI Blog, 2017). Our impact on the world's most valuable public companies: Apple, Google, Microsoft, Facebook, Amazon... By 2015-17, neural nets developed in my labs were on over 3 billion devices such as smartphones, and used many billions of times per day, consuming a significant fraction of the world's compute. Examples: greatly improved (CTC-based) speech recognition on all Android phones, greatly improved machine translation through Google Translate and Facebook (over 4 billion LSTM-based translations per day), Apple's Siri and Quicktype on all iPhones, the answers of Amazon's Alexa, etc. Google's 2019 on-device speech recognition (on the phone, not the server) is still based on LSTM.

[DLH]

J. Schmidhuber.

Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Switzerland, 2022, updated 2025.

Preprint arXiv:2212.11279.

Tweet of 2022.

[DLP]

J. Schmidhuber.

How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023, updated 2025.

Tweet of 2023.

[DM3]

S. Stanford. DeepMind's AI, AlphaStar Showcases Significant Progress Towards AGI. Medium ML Memoirs, 2019. AlphaStar has a "deep LSTM core."

[DS1]

DeepSeek-AI (2025).

DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. Preprint arXiv:2501.12948. See the popular DeepSeek tweet of Jan 2025.

[DNC]

A. Graves, G. Wayne, M. Reynolds, T. Harley, I. Danihelka, A. Grabska-Barwinska, S. G. Colmenarejo, E. Grefenstette, T. Ramalho, J. Agapiou, A. P. Badia, K. M. Hermann, Y. Zwols, G. Ostrovski, A. Cain, H. King, C. Summerfield, P. Blunsom, K. Kavukcuoglu, D. Hassabis. Hybrid computing using a neural network with dynamic external memory. Nature, 538:7626, p 471, 2016. This work of DeepMind did not cite the original work of the early 1990s on neural networks learning to control dynamic external memories.[PDA1-2][FWP0-1]

[Drop1] S. J. Hanson (1990). A Stochastic Version of the Delta Rule. Physica D, 42, 265-272. What's now called "dropout" is a variation of the stochastic delta rule; compare preprint arXiv:1808.03578, 2018.

[Drop2]

N. Frazier-Logue, S. J. Hanson (2020). The Stochastic Delta Rule: Faster and More Accurate Deep Learning Through Adaptive Weight Noise. Neural Computation 32(5):1018-1032.

[Drop3]

J. Hertz, A. Krogh, R. Palmer (1991). Introduction to the Theory of Neural Computation. Redwood City, California: Addison-Wesley Pub. Co., pp. 45-46.

[FAST] C. v.d. Malsburg. Tech Report 81-2, Abteilung f. Neurobiologie, Max-Planck Institut f. Biophysik und Chemie, Goettingen, 1981. First paper on fast weights or dynamic links.

[FASTa]

J. A. Feldman. Dynamic connections in neural networks.

Biological Cybernetics, 46(1):27-39, 1982.

2nd paper on fast weights.

[FB17]

By 2017, Facebook used LSTM to handle over 4 billion automatic translations per day (The Verge, August 4, 2017); see also Facebook blog by J.M. Pino, A. Sidorov, N.F. Ayan (August 3, 2017).

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021, updated 2025).

26 March 1991: Neural nets learn to program neural nets with fast weights—like Transformer variants. 2021: New stuff!

30-year anniversary of a now popular alternative[FWP0-1] to recurrent NNs. A slow feedforward NN learns by gradient descent to program the changes of the fast weights[FAST,FASTa] of another NN, separating memory and control as in traditional computers. Such Fast Weight Programmers[FWP0-6,FWPMETA1-8] can learn to memorize past data, e.g., by computing fast weight changes through additive outer products of self-invented activation patterns[FWP0-1] (now often called keys and values for self-attention[TR1-6]).

The similar Transformers[TR1-2] combine this with projections and softmax and are now widely used in natural language processing. For long input sequences, their efficiency was improved through Transformers with linearized self-attention[TR5-6] which are formally equivalent to Schmidhuber's 1991 outer product-based Fast Weight Programmers (apart from normalization), now called unnormalized linear Transformers.[ULTRA] In 1993, he introduced the attention terminology[FWP2] now used in this context,[ATT] and extended the approach to RNNs that program themselves.

See tweet of 2022.
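The equivalence described in this entry can be checked numerically on a toy example. The following is only a minimal sketch under stated assumptions: the keys, values, and queries are hand-picked numbers here (in a Fast Weight Programmer they would be produced by the slow net), and all function names are illustrative rather than taken from any of the cited papers. It compares (a) a fast weight matrix programmed by additive outer products and then queried with (b) causal attention without softmax or normalization.

```python
# Hedged sketch: outer-product fast weights vs. unnormalized linear attention.
# All names and numbers below are illustrative assumptions.

def outer(v, k):
    """Outer product v k^T, returned as a list of rows."""
    return [[vi * kj for kj in k] for vi in v]

def matvec(W, q):
    """Matrix-vector product W q."""
    return [sum(wij * qj for wij, qj in zip(row, q)) for row in W]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def fast_weight_readout(keys, values, queries):
    """Add v_t k_t^T to the fast weight matrix at each step, then read out W q_t."""
    d_v, d_k = len(values[0]), len(keys[0])
    W = [[0.0] * d_k for _ in range(d_v)]
    outputs = []
    for k, v, q in zip(keys, values, queries):
        update = outer(v, k)
        W = [[w + u for w, u in zip(w_row, u_row)] for w_row, u_row in zip(W, update)]
        outputs.append(matvec(W, q))
    return outputs

def linear_attention_readout(keys, values, queries):
    """Causal attention without softmax: y_t = sum over i <= t of (k_i . q_t) v_i."""
    outputs = []
    for t, q in enumerate(queries):
        y = [0.0] * len(values[0])
        for k, v in zip(keys[: t + 1], values[: t + 1]):
            score = dot(k, q)
            y = [yi + score * vi for yi, vi in zip(y, v)]
        outputs.append(y)
    return outputs

keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[2.0, -1.0, 0.5], [0.0, 3.0, 1.0], [1.0, 1.0, 1.0]]
queries = [[0.5, 0.5], [1.0, -1.0], [0.0, 2.0]]

fw = fast_weight_readout(keys, values, queries)
la = linear_attention_readout(keys, values, queries)
```

Both functions return the same outputs up to floating-point rounding, because W q_t expands to the sum of (k_i . q_t) v_i over all steps i up to t. The fast weight version stores only one matrix of fixed size, whereas the attention version revisits all past keys and values at every step.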

[FWP0]

J. Schmidhuber.

Learning to control fast-weight memories: An alternative to recurrent nets.

Technical Report FKI-147-91, Institut für Informatik, Technische

Universität München, 26 March 1991.

PDF.

First paper on neural fast weight programmers that separate storage and control: a slow net learns by gradient descent to compute weight changes of a fast net. The outer product-based version (Eq. 5) is now known as the unnormalized linear Transformer or the "Transformer with linearized self-attention."[ULTRA][FWP]

[FWP1] J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Neural Computation, 4(1):131-139, 1992. Based on [FWP0].

PDF.

HTML.

Pictures (German).

See tweet of 2022 for 30-year anniversary.

[FWP2] J. Schmidhuber. Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets. In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 460-463. Springer, 1993.

PDF.

A recurrent extension of the unnormalized linear Transformer,[ULTRA] introducing the terminology of learning "internal spotlights of attention." First recurrent NN-based fast weight programmer using outer products to program weight matrices.

[FWP3] I. Schlag, J. Schmidhuber. Gated Fast Weights for On-The-Fly Neural Program Generation. Workshop on Meta-Learning, @N(eur)IPS 2017, Long Beach, CA, USA.

[FWP3a] I. Schlag, J. Schmidhuber. Learning to Reason with Third Order Tensor Products. Advances in Neural Information Processing Systems (N(eur)IPS), Montreal, 2018.

Preprint: arXiv:1811.12143. PDF.

[FWP6] I. Schlag, K. Irie, J. Schmidhuber.

Linear Transformers Are Secretly Fast Weight Programmers. ICML 2021. Preprint: arXiv:2102.11174.

[FWP7] K. Irie, I. Schlag, R. Csordas, J. Schmidhuber.

Going Beyond Linear Transformers with Recurrent Fast Weight Programmers.

NeurIPS 2021.

Preprint: arXiv:2106.06295 (June 2021).

[FWP8] K. Irie, F. Faccio, J. Schmidhuber.

Neural Differential Equations for Learning to Program Neural Nets Through Continuous Learning Rules.

NeurIPS 2022.

[FWP9] K. Irie, J. Schmidhuber.

Images as Weight Matrices: Sequential Image Generation Through Synaptic Learning Rules.

ICLR 2023.

[FWP23]

J.von Oswald, E. Niklasson, E. Randazzo, J. Sacramento, A. Mordvintsev, A. Zhmoginov, M. Vladymyrov.

Transformers learn in-context by gradient descent.

ICML 2023. The core FWP principle of "NNs that learn to program the fast weight changes of other NNs"[FWP0][FWP6] provides an intuitive conception of what's now called "in-context learning."

[FWP24]

A. Behrouz, P. Zhong, V. Mirrokni.

Titans: Learning to Memorize at Test Time.

Arxiv preprint 2501.00663, 2024.

[FWP25]

J. von Oswald, N. Scherrer, S. Kobayashi, L. Versari, S. Yang, M. Schlegel, K. Maile, Y. Schimpf, O. Sieberling, A. Meulemans, R. A. Saurous, G. Lajoie, C. Frenkel, R. Pascanu, B. Aguera y Arcas, J. Sacramento.

MesaNet: Sequence Modeling by Locally Optimal Test-Time Training.

Arxiv preprint 2506.05233, 2025.

[FWP25b]

Y. Sun, X. Li, K. Dalal, J. Xu, A. Vikram, G. Zhang, Y. Dubois, X. Chen, X. Wang, S. Koyejo, T. Hashimoto, C. Guestrin.

Learning to (Learn at Test Time): RNNs with Expressive Hidden States.

ICML 2025.

[FWP25c]

K. Irie, S. J. Gershman.

Fast weight programming and linear Transformers: from machine learning to neurobiology.

Arxiv preprint 2508.08435, 2025.

[FWPMETA1] J. Schmidhuber. Steps towards `self-referential' learning. Technical Report CU-CS-627-92, Dept. of Comp. Sci., University of Colorado at Boulder, November 1992.

PDF.

[FWPMETA2] J. Schmidhuber. A self-referential weight matrix.

In Proceedings of the International Conference on Artificial

Neural Networks, Amsterdam, pages 446-451. Springer, 1993.

PDF.

[FWPMETA3] J. Schmidhuber.

An introspective network that can learn to run its own weight change algorithm. In Proc. of the Intl. Conf. on Artificial Neural Networks,

Brighton, pages 191-195. IEE, 1993.

[FWPMETA4]

J. Schmidhuber.

A neural network that embeds its own meta-levels.

In Proc. of the International Conference on Neural Networks '93,

San Francisco. IEEE, 1993.

[FWPMETA5]

J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

A recurrent neural net with a self-referential, self-reading, self-modifying weight matrix

can be found here.

[FWPMETA6]

L. Kirsch and J. Schmidhuber. Meta Learning Backpropagation & Improving It. Advances in Neural Information Processing Systems (NeurIPS), 2021.

Preprint arXiv:2012.14905 [cs.LG], 2020.

[FWPMETA7]

I. Schlag, T. Munkhdalai, J. Schmidhuber.

Learning Associative Inference Using Fast Weight Memory.

Report arXiv:2011.07831 [cs.AI], 2020.

[FWPMETA8]

K. Irie, I. Schlag, R. Csordas, J. Schmidhuber.

A Modern Self-Referential Weight Matrix That Learns to Modify Itself.

International Conference on Machine Learning (ICML), 2022.

Preprint: arXiv:2202.05780.

[FWPMETA9]

L. Kirsch and J. Schmidhuber.

Self-Referential Meta Learning.

First Conference on Automated Machine Learning (Late-Breaking Workshop), 2022.

[FWPMETA10] K. Irie, R. Csordas, J. Schmidhuber.

Metalearning Continual Learning Algorithms.

TMLR 2025.

[GAN90]

J. Schmidhuber.

Making the world differentiable: On using fully recurrent

self-supervised neural networks for dynamic reinforcement learning and

planning in non-stationary environments.

Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990.

PDF.

The first paper on planning with reinforcement learning recurrent neural networks (NNs) and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game.

See [AC90].

[GAN91]

J. Schmidhuber.

A possibility for implementing curiosity and boredom in

model-building neural controllers.

In J. A. Meyer and S. W. Wilson, editors, Proc. of the

International Conference on Simulation

of Adaptive Behavior: From Animals to

Animats, pages 222-227. MIT Press/Bradford Books, 1991.

PDF.

More.

Based on [GAN90].

See [AC90b].

[GAN10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010.

IEEE link.

PDF.

This well-known 2010 survey summarised the generative adversarial NNs of 1990 as follows: a "neural network as a predictive world model is used to maximize the controller's intrinsic reward, which is proportional to the model's prediction errors" (which are minimized).

See [AC10].
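The minimax relationship in the quoted summary can be written compactly. The notation below is an assumption made purely for illustration and does not appear in the quoted text: a controller C with weights θ_C emits an output a_t from the history x_{≤t}, a world model M with weights θ_M predicts the next observation x_{t+1}, and squared error stands in for whatever prediction error measure is actually used.

```latex
\[
\min_{\theta_M}\;\max_{\theta_C}\;
\mathbb{E}\Big[\,\big\| M_{\theta_M}(x_{\le t}, a_t) - x_{t+1} \big\|^2\Big],
\qquad a_t = C_{\theta_C}(x_{\le t}),
\]
\[
r^{\mathrm{int}}_t \;\propto\; \big\| M_{\theta_M}(x_{\le t}, a_t) - x_{t+1} \big\|^2 .
\]
```

In this sketch the model minimizes the same quantity that the controller, through its intrinsic reward, tries to maximize.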

[GAN10b]

O. Niemitalo. A method for training artificial neural networks to generate missing data within a variable context.

Blog post, Internet Archive, 2010.

A blog post describing the basic ideas[GAN90-91][GAN20][AC] of GANs.

[GAN14]

I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair,

A. Courville, Y. Bengio.

Generative adversarial nets. NIPS 2014, 2672-2680, Dec 2014.

A description of GANs that does not cite Schmidhuber's original GAN principle of 1990[GAN90-91][GAN20][AC][R2][DLP] and contains wrong claims about Schmidhuber's adversarial NNs for

Predictability Minimization.[PM0-2][GAN20][DLP]

[GAN19]

T. Karras, S. Laine, T. Aila. A style-based generator architecture for generative adversarial

networks. In Proc. IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), pages

4401-4410, 2019.

[GAN19b]

D. Fallis. The epistemic threat of deepfakes. Philosophy & Technology 34.4 (2021):623-643.

[GAN20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991).

Neural Networks, Volume 127, p 58-66, 2020.

Preprint arXiv/1906.04493. See [AC20].

[GAN25]

J. Schmidhuber. Who Invented Generative Adversarial Networks? Technical Note IDSIA-14-25, IDSIA, December 2025.

[GD1]

S. I. Amari (1967).

A theory of adaptive pattern classifier, IEEE Trans, EC-16, 279-307 (Japanese version published in 1965).

PDF.

Probably the first paper on using stochastic gradient descent for learning in multilayer neural networks

(without specifying the specific gradient descent method now known as reverse mode of automatic differentiation or backpropagation[BP1]).

[GD2]

S. I. Amari (1968).

Information Theory—Geometric Theory of Information, Kyoritsu Publ., 1968 (in Japanese).

PDF.

Contains computer simulation results for a five layer network (with 2 modifiable layers) which learns internal representations to classify non-linearly separable pattern classes.

[GPT3]

T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell, S. Agarwal, A. Herbert-Voss, G. Krueger, T. Henighan, R. Child, A. Ramesh, D. M. Ziegler, J. Wu, C. Winter, C. Hesse, M. Chen, E. Sigler, M. Litwin, S. Gray, B. Chess, J. Clark, C. Berner, S. McCandlish, A. Radford, I. Sutskever, D. Amodei.

Language Models are Few-Shot Learners (2020).

Preprint arXiv/2005.14165.

[GPUNN]

Oh, K.-S. and Jung, K. (2004). GPU implementation of neural networks. Pattern Recognition, 37(6):1311-1314. Speeding up traditional NNs on GPU by a factor of 20.

[GPUCNN]

K. Chellapilla, S. Puri, P. Simard. High performance convolutional neural networks for document processing. International Workshop on Frontiers in Handwriting Recognition, 2006. Speeding up shallow CNNs on GPU by a factor of 4.

[GPUCNN1] D. C. Ciresan, U. Meier, J. Masci, L. M. Gambardella, J. Schmidhuber. Flexible, High Performance Convolutional Neural Networks for Image Classification. International Joint Conference on Artificial Intelligence (IJCAI-2011, Barcelona), 2011. PDF. ArXiv preprint.

Speeding up deep CNNs on GPU by a factor of 60. Used to win four important computer vision competitions 2011-2012 before others won any with similar approaches.

[GPUCNN2] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber.

A Committee of Neural Networks for Traffic Sign Classification.

International Joint Conference on Neural Networks (IJCNN-2011, San Francisco), 2011.

PDF.

HTML overview.

First superhuman performance in a computer vision contest, with half the error rate of humans, and one third the error rate of the closest competitor.[DAN1] This led to massive interest from industry.

[GPUCNN3] D. C. Ciresan, U. Meier, J. Schmidhuber. Multi-column Deep Neural Networks for Image Classification. Proc. IEEE Conf. on Computer Vision and Pattern Recognition CVPR 2012, p 3642-3649, July 2012. PDF. Longer TR of Feb 2012: arXiv:1202.2745v1 [cs.CV]. More.

[GPUCNN4] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NIPS 25, MIT Press, Dec 2012.

PDF.

The paper describes AlexNet, which is similar to the earlier DanNet,[DAN,DAN1][R6] which was the first pure deep CNN to win computer vision contests in 2011.[GPUCNN2-3,5] AlexNet and VGG Net[GPUCNN9] followed in 2012-2014 (using stochastic delta rule/dropout[Drop1-3] and ReLUs[RELU1] without citation).

[GPUCNN5]

J. Schmidhuber (AI Blog, 2017; updated 2021 for 10th birthday of DanNet): History of computer vision contests won by deep CNNs since 2011. DanNet was the first CNN to win one, and won 4 of them in a row before the similar AlexNet/VGG Net and the Resnet (a Highway Net with open gates) joined the party. Today, deep CNNs are standard in computer vision.

[GPUCNN6] J. Schmidhuber, D. Ciresan, U. Meier, J. Masci, A. Graves. On Fast Deep Nets for AGI Vision. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011.

PDF.

[GPUCNN7] D. C. Ciresan, A. Giusti, L. M. Gambardella, J. Schmidhuber. Mitosis Detection in Breast Cancer Histology Images using Deep Neural Networks. MICCAI 2013.

PDF.

[GPUCNN8] J. Schmidhuber (AI Blog, 2017; updated 2021 for 10th birthday of DanNet).

First deep learner to win a contest on object detection in large images—

first deep learner to win a medical imaging contest (2012). Link.

How the Swiss AI Lab IDSIA used GPU-based CNNs to win the

ICPR 2012 Contest on Mitosis Detection

and the MICCAI 2013 Grand Challenge.

[GPUCNN9]

K. Simonyan, A. Zisserman. Very deep convolutional networks for large-scale image recognition. Preprint arXiv:1409.1556 (2014).

[GSR]

H. Sak, A. Senior, K. Rao, F. Beaufays, J. Schalkwyk—Google Speech Team.

Google voice search: faster and more accurate.

Google Research Blog, Sep 2015, see also

Aug 2015 Google's speech recognition based on CTC and LSTM.

[GSR15] Dramatic improvement of Google's speech recognition through LSTM: Alphr Technology, Jul 2015, or 9to5google, Jul 2015.

[GSR19]

Y. He, T. N. Sainath, R. Prabhavalkar, I. McGraw, R. Alvarez, D. Zhao, D. Rybach, A. Kannan, Y. Wu, R. Pang, Q. Liang, D. Bhatia, Y. Shangguan, B. Li, G. Pundak, K. Chai Sim, T. Bagby, S. Chang, K. Rao, A. Gruenstein.

Streaming end-to-end speech recognition for mobile devices. Proc. IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2019). IEEE, 2019.

[GT16] Google's dramatically improved Google Translate of 2016 is based on LSTM, e.g., WIRED, Sep 2016, or siliconANGLE, Sep 2016.

[HIN] J. Schmidhuber (AI Blog, 2020). Critique of Honda Prize for Dr. Hinton. Science must not allow corporate PR to distort the academic record. See also [T22][DLP].

[HW]

J. Schmidhuber

(AI Blog, 2015, updated 2025 for 10-year anniversary).

Overview of Highway Networks: First working really deep feedforward neural networks with hundreds of layers.

[HW1] R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks.

Preprints arXiv:1505.00387 (May 2015) and arXiv:1507.06228 (Training Very Deep Networks; July 2015). Also at NeurIPS 2015. The first working very deep gradient-based feedforward neural nets (FNNs) with hundreds of layers, ten times deeper than previous gradient-based FNNs. Let g, t, h denote non-linear differentiable functions. Each non-input layer of a Highway Net computes g(x)x + t(x)h(x), where x is the data from the previous layer. The gates g(x) are typically initialised to 1.0, to obtain plain residual connections (weight 1.0) [VAN1][HW25]. This allows for very deep error propagation, which is what lets Highway NNs be so deep. The later ResNet (Dec 2015) [HW2] adopted this principle. It is like a Highway Net variant whose gates are always open: g(x)=t(x)=const=1. That is, Highway Nets are gated ResNets: setting the gates to 1.0 yields a ResNet.

The residual parts of a Highway Net are like those of an unfolded 1999 LSTM [LSTM2a], while the residual parts of a ResNet are like those of an unfolded 1997 LSTM [LSTM1][HW25].

Highway Nets perform roughly as well as ResNets on ImageNet [HW3]. Variants of Highway gates are also used for certain algorithmic tasks, where plain residual layers do not work as well [NDR]. See also [HW25]: who invented deep residual learning?

More.
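The layer formula in this entry, g(x)x + t(x)h(x), can be illustrated with a small sketch. It is not the authors' implementation: the transform `example_h` and the sigmoid gate `example_gate` are hypothetical stand-ins chosen only so the example runs, and real Highway Nets use learned weight matrices inside g, t, and h.

```python
import math

def highway_layer(x, h, g, t):
    """Compute g(x)*x + t(x)*h(x) elementwise for a vector x."""
    hx, gx, tx = h(x), g(x), t(x)
    return [gi * xi + ti * hi for gi, xi, ti, hi in zip(gx, x, tx, hx)]

def open_gate(x):
    """A gate that is always open: constant 1.0 for every unit."""
    return [1.0] * len(x)

def example_h(x):
    # Hypothetical non-linear transform; a real layer would use learned weights.
    return [math.tanh(0.5 * xi - 0.1) for xi in x]

def example_gate(x):
    # Hypothetical sigmoid gate; a real gate would also use learned weights.
    return [1.0 / (1.0 + math.exp(-xi)) for xi in x]

x = [0.2, -1.0, 3.0]

# Gated Highway layer.
gated = highway_layer(x, example_h, example_gate, example_gate)

# Same layer with both gates fixed open, g(x) = t(x) = 1.
resnet_like = highway_layer(x, example_h, open_gate, open_gate)

# Plain residual layer x + h(x), computed directly for comparison.
plain_residual = [xi + hi for xi, hi in zip(x, example_h(x))]
```

With both gates held at 1.0, `resnet_like` coincides with `plain_residual`, which is the sense in which the entry describes a ResNet as a Highway Net with open gates.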

[HW1a]

R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks. Presentation at the Deep Learning Workshop, ICML'15, July 10-11, 2015.

Link.

[HW2] K. He, X. Zhang, S. Ren, J. Sun. Deep residual learning for image recognition. Preprint arXiv:1512.03385 (Dec 2015).

Microsoft's ResNet paper refers to the Highway Net (May 2015) [HW1] as 'concurrent'. However, this is incorrect: ResNet was published seven months later. Although the ResNet paper acknowledges the problem of vanishing/exploding gradients, it fails to recognise that S. Hochreiter first identified the issue in 1991 and developed the residual connection solution (weight 1.0) [VAN1][HW25]. The ResNet paper cites the earlier Highway Net in a way that does not make it clear that ResNets are essentially open-gated Highway Nets and that Highway Nets are gated ResNets. It also fails to mention that the gates of residual connections in Highway Nets are initially open (1.0), meaning that Highway Nets start out with standard residual connections, to achieve deep residual learning (Highway Nets were ten times deeper than previous gradient-based feedforward nets). The residual parts of a Highway Net are like those of an unfolded 1999 LSTM [LSTM2a], while the residual parts of a ResNet are like those of an unfolded 1997 LSTM [LSTM1][HW25].

A follow-up paper by the ResNet authors was flawed in its design, leading to incorrect conclusions about gated residual connections [HW25b]. See also [HW25]: who invented deep residual learning?

More.

[HW3]

K. Greff, R. K. Srivastava, J. Schmidhuber. Highway and Residual Networks learn Unrolled Iterative Estimation. Preprint arXiv:1612.07771 (2016). Also at ICLR 2017.

[HW25]

J. Schmidhuber. Who Invented Deep Residual Learning? Technical Report IDSIA-09-25, IDSIA, 2025. Preprint arXiv:2509.24732.

[HW25b]

R. K. Srivastava (January 2025). Weighted Skip Connections are Not Harmful for Deep Nets.

Shows that a follow-up paper by the authors of [HW2] suffered from

design flaws leading to incorrect conclusions about gated residual connections.

[LSTM0]

S. Hochreiter and J. Schmidhuber.

Long Short-Term Memory.

TR FKI-207-95, TUM, August 1995.

PDF.

[LSTM1] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF.

Based on [LSTM0]. More.

[LSTM2] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000.

PDF.

The "vanilla LSTM architecture" with forget gates

that everybody is using today, e.g., in Google's Tensorflow.

[LSTM3] A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005.

PDF.

[LSTM4]

S. Fernandez, A. Graves, J. Schmidhuber. An application of

recurrent neural networks to discriminative keyword

spotting.

Intl. Conf. on Artificial Neural Networks ICANN'07,

2007.

PDF.

[LSTM5] A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009.

PDF.

[LSTM6] A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. NIPS'22, p 545-552, Vancouver, MIT Press, 2009.

PDF.

[LSTM7] J. Bayer, D. Wierstra, J. Togelius, J. Schmidhuber.

Evolving memory cell structures for sequence learning.

Proc. ICANN-09, Cyprus, 2009.

PDF.

[LSTM10]

A. Graves, D. Eck, N. Beringer, J. Schmidhuber. Biologically Plausible Speech Recognition with LSTM Neural Nets. In J. Ijspeert (Ed.), First Intl. Workshop on Biologically Inspired Approaches to Advanced Information Technology, Bio-ADIT 2004, Lausanne, Switzerland, p. 175-184, 2004.

PDF.

[LSTM11]

N. Beringer, A. Graves, F. Schiel, J. Schmidhuber. Classifying unprompted speech by retraining LSTM Nets. In W. Duch et al. (Eds.): Proc. Intl. Conf. on Artificial Neural Networks ICANN'05, LNCS 3696, pp. 575-581, Springer-Verlag Berlin Heidelberg, 2005.

[LSTM12]

D. Wierstra, F. Gomez, J. Schmidhuber. Modeling systems with internal state using Evolino. In Proc. of the 2005 conference on genetic and evolutionary computation (GECCO), Washington, D. C., pp. 1795-1802, ACM Press, New York, NY, USA, 2005. Got a GECCO best paper award.

[LSTM13]

F. A. Gers and J. Schmidhuber.

LSTM Recurrent Networks Learn Simple Context Free and

Context Sensitive Languages.

IEEE Transactions on Neural Networks 12(6):1333-1340, 2001.

PDF.

[LSTM14]

S. Fernandez, A. Graves, J. Schmidhuber.

Sequence labelling in structured domains with

hierarchical recurrent neural networks. In Proc.

IJCAI 07, p. 774-779, Hyderabad, India, 2007 (talk).

PDF.

[LSTM15]

A. Graves, J. Schmidhuber.

Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks.

Advances in Neural Information Processing Systems 22, NIPS'22, p 545-552,

Vancouver, MIT Press, 2009.

PDF.

[LSTM16]

M. Stollenga, W. Byeon, M. Liwicki, J. Schmidhuber. Parallel Multi-Dimensional LSTM, With Application to Fast Biomedical Volumetric Image Segmentation. Advances in Neural Information Processing Systems (NIPS), 2015.

Preprint: arxiv:1506.07452.

[LSTM17]

J. A. Perez-Ortiz, F. A. Gers, D. Eck, J. Schmidhuber.

Kalman filters improve LSTM network performance in

problems unsolvable by traditional recurrent nets.

Neural Networks 16(2):241-250, 2003.

PDF.

[LSTM-RL]

B. Bakker, F. Linaker, J. Schmidhuber.

Reinforcement Learning in Partially Observable Mobile Robot

Domains Using Unsupervised Event Extraction.

In Proceedings of the 2002

IEEE/RSJ International Conference on

Intelligent Robots and Systems (IROS 2002), Lausanne, 2002.

PDF.

[LSTMPG]

J. Schmidhuber (AI Blog, Dec 2020). 10-year anniversary of our journal paper on deep reinforcement learning with policy gradients for LSTM (2007-2010). Recent famous applications: DeepMind's Starcraft player (2019) and OpenAI's dextrous robot hand & Dota player (2018)—Bill Gates called this a huge milestone in advancing AI.

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[MIR] J. Schmidhuber (Oct 2019, updated '21, '22, '25, '26). Deep Learning: Our Miraculous Year 1990-1991. Preprint arXiv:2005.05744. The Deep Learning Artificial Neural Networks (NNs) of our team have revolutionised Machine Learning & AI. Many of the basic ideas behind this revolution were published within the 12 months of our "Annus Mirabilis" 1990-1991 at our lab in TU Munich. Back then, few people were interested. But a quarter century later, NNs based on our "Miraculous Year" were on over 3 billion devices, and used many billions of times per day, consuming a significant fraction of the world's compute.

In particular, in 1990-91, we laid foundations of Generative AI, publishing principles of

(1) Transformers (the T in ChatGPT—see the 1991 Unnormalized Linear Transformer),

(2) Pre-training for deep NNs (see the P in ChatGPT),

(3) NN distillation (key for DeepSeek etc.),

(4) Generative Adversarial Networks for Artificial Curiosity and Creativity (now used for deepfakes),

and (5) recurrent World Models for

Reinforcement Learning and Planning in partially observable environments. The year 1991 also marks the emergence of the defining features of (6)

LSTM, the most cited AI paper of the 20th century (based on deep residual learning through residual NN connections), and (7) the most cited paper of the 21st century, based on our LSTM-inspired Highway Net that was

10 times deeper than previous feedforward NNs.

As of 2025, the two most frequently cited scientific articles of all time (with the most Google Scholar citations within 3 years—manuals excluded) are both directly based on our 1991 work. See also the 2019 inaugural tweet!

[MLP1] D. C. Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint.

Showed that plain backprop for deep standard NNs is sufficient to break benchmark records, without any unsupervised pre-training.

[MLP2] J. Schmidhuber

(AI Blog, Sep 2020). 10-year anniversary of supervised deep learning breakthrough (2010). No unsupervised pre-training. By 2010, when compute was 100 times more expensive than today, both the feedforward NNs[MLP1] and the earlier recurrent NNs of Schmidhuber's team were able to beat all competing algorithms on important problems of that time.

[MLP3] J. Schmidhuber

(AI Blog, 2025). 2010: Breakthrough of end-to-end deep learning (no layer-by-layer training, no unsupervised pre-training). The rest is history.

By 2010, when compute was 1000 times more expensive than in 2025, both our feedforward NNs[MLP1] and our earlier recurrent NNs were able to beat all competing algorithms on important problems of that time.

This deep learning revolution quickly spread from Europe to North America and Asia.

[MOST]

J. Schmidhuber (AI Blog, 2021, updated 2025). The most cited neural networks all build on work done in my labs: 1. Long Short-Term Memory (LSTM), the most cited AI of the 20th century. 2. ResNet (open-gated Highway Net), the most cited AI of the 21st century. 3. AlexNet & VGG Net (the similar but earlier DanNet of 2011 won 4 image recognition challenges before them). 4. GAN (an instance of Adversarial Artificial Curiosity of 1990). 5. Transformer variants—see the 1991 unnormalised linear Transformer (ULTRA). Foundations of Generative AI were published in 1991: the principles of GANs (now used for deepfakes), Transformers (the T in ChatGPT), Pre-training for deep NNs (the P in ChatGPT), NN distillation (key for DeepSeek and other LLMs—see the tweet). As of 2025, the two most frequently cited scientific articles of all time (with the most Google Scholar citations within 3 years—manuals excluded) are both directly based on our 1991 work.

[MOST25]

H. Pearson, H. Ledford, M. Hutson, R. Van Noorden.

Exclusive: the most-cited papers of the twenty-first century.

Nature, 15 April 2025.

[MOST25b]

R. Van Noorden.

Science’s golden oldies: the decades-old research papers still heavily cited today.

Nature, 15 April 2025.

[MOST26]

J. Schmidhuber. The two most frequently cited papers of all time are based on our 1991 work. Technical Note IDSIA-1-26, January 2026.

[NDR]

R. Csordas, K. Irie, J. Schmidhuber.

The Neural Data Router: Adaptive Control Flow in Transformers Improves Systematic Generalization. Proc. ICLR 2022. Preprint arXiv/2110.07732.

[NAT1] J. Schmidhuber. Citation bubble about to burst? Nature, vol. 469, p. 34, 6 January 2011.

HTML.

[MOZ]

M. Mozer. A Focused Backpropagation Algorithm for Temporal Pattern Recognition.

Complex Systems, 1989.

[NYT1]

NY Times article

by J. Markoff, Nov. 27, 2016: When A.I. Matures, It May Call Jürgen Schmidhuber 'Dad'

[NOB] J. Schmidhuber.

A Nobel Prize for Plagiarism.

Technical Report IDSIA-24-24 (7 Dec 2024, updated Oct 2025).

The 2024 Nobel Prize in Physics was awarded to Hopfield & Hinton. They republished foundational methodologies for artificial neural networks developed by Ivakhnenko, Amari and others in Ukraine and Japan during the 1960s and 1970s, as well as other techniques, without citing the original papers. Even in their subsequent surveys and recent 2025 articles, they failed to acknowledge the original inventors. This apparently turned what may have been unintentional plagiarism into a deliberate act. Hopfield and Hinton did not invent any of the key algorithms that underpin modern artificial intelligence.

See also popular

tweet1,

tweet2, and

LinkedIn post.

See also [PLAG1-6][FAKE1-3][DLH].

[NOB25a]

G. Hinton. Nobel Lecture: Boltzmann machines. Rev. Mod. Phys. 97, 030502, 25 August 2025. One of the many problematic statements in this lecture is this: "Boltzmann machines are no longer used, but they were historically important [...] In the 1980s, they demonstrated that it was possible to learn appropriate weights for hidden neurons using only locally available information without requiring a biologically implausible backward pass." Again, Hinton fails to mention Ivakhnenko who had shown this 2 decades earlier in the 1960s [DEEP1-2]. He has plagiarized Ivakhnenko and others in many additional ways [NOB][DLP].

[OAI1]

G. Powell, J. Schneider, J. Tobin, W. Zaremba, A. Petron, M. Chociej, L. Weng, B. McGrew, S. Sidor, A. Ray, P. Welinder, R. Jozefowicz, M. Plappert, J. Pachocki, M. Andrychowicz, B. Baker.

Learning Dexterity. OpenAI Blog, 2018.

[OAI1a]

OpenAI, M. Andrychowicz, B. Baker, M. Chociej, R. Jozefowicz, B. McGrew, J. Pachocki, A. Petron, M. Plappert, G. Powell, A. Ray, J. Schneider, S. Sidor, J. Tobin, P. Welinder, L. Weng, W. Zaremba.

Learning Dexterous In-Hand Manipulation. arXiv:1808.00177 (PDF).

[OAI2]

OpenAI:

C. Berner, G. Brockman, B. Chan, V. Cheung, P. Debiak, C. Dennison, D. Farhi, Q. Fischer, S. Hashme, C. Hesse, R. Jozefowicz, S. Gray, C. Olsson, J. Pachocki, M. Petrov, H. P. de Oliveira Pinto, J. Raiman, T. Salimans, J. Schlatter, J. Schneider, S. Sidor, I. Sutskever, J. Tang, F. Wolski, S. Zhang (Dec 2019).

Dota 2 with Large Scale Deep Reinforcement Learning.

Preprint

arxiv:1912.06680.

An LSTM composes 84% of the model's total parameter count.

[OAI2a]

J. Rodriguez. The Science Behind OpenAI Five that just Produced One of the Greatest Breakthrough in the History of AI. Towards Data Science, 2018. An LSTM with 84% of the model's total parameter count was the core of OpenAI Five.

[PDA1]

G.Z. Sun, H.H. Chen, C.L. Giles, Y.C. Lee, D. Chen. Neural Networks with External Memory Stack that Learn Context-Free Grammars from Examples. Proceedings of the 1990 Conference on Information Science and Systems, Vol. II, pp. 649-653, Princeton University, Princeton, NJ, 1990.

[PDA2]

M. Mozer, S. Das. A connectionist symbol manipulator that discovers the structure of context-free languages. Proc. NIPS 1993.

[PLAN4]

J. Schmidhuber.

On Learning to Think: Algorithmic Information Theory for Novel Combinations of Reinforcement Learning Controllers and Recurrent Neural World Models.

Report arXiv:1210.0118 [cs.AI], 2015.

[PLAN5]

One Big Net For Everything. Preprint arXiv:1802.08864 [cs.AI], Feb 2018. See also

the DeepSeek tweet of Jan 2025.

[PM0] J. Schmidhuber. Learning factorial codes by predictability minimization. TR CU-CS-565-91, Univ. Colorado at Boulder, 1991. PDF.

More.

[PM1] J. Schmidhuber. Learning factorial codes by predictability minimization. Neural Computation, 4(6):863-879, 1992. Based on [PM0], 1991. PDF.

More.

[PM2] J. Schmidhuber, M. Eldracher, B. Foltin. Semilinear predictability minimization produces well-known feature detectors. Neural Computation, 8(4):773-786, 1996.

PDF. More.

Relevant threads with many comments at reddit.com/r/MachineLearning, the largest machine learning forum with over 800k subscribers in 2019 (note that my name is often misspelled):

[R2] Reddit/ML, 2019. J. Schmidhuber really had GANs in 1990.

[R3] Reddit/ML, 2019. NeurIPS 2019 Bengio Schmidhuber Meta-Learning Fiasco.

Schmidhuber started metalearning (learning to learn, now a hot topic) in 1987[META1][META] long before Bengio, who suggested in public at N(eur)IPS 2019 that he did it before Schmidhuber.

[R4] Reddit/ML, 2019. Five major deep learning papers by G. Hinton did not cite similar earlier work by J. Schmidhuber.

[R5] Reddit/ML, 2019. The 1997 LSTM paper by Hochreiter & Schmidhuber has become the most cited deep learning research paper of the 20th century.

[R6] Reddit/ML, 2019. DanNet, the CUDA CNN of Dan Ciresan in J. Schmidhuber's team, won 4 image recognition challenges prior to AlexNet.

[R7] Reddit/ML, 2019. J. Schmidhuber on Seppo Linnainmaa, inventor of backpropagation in 1970.

[R8] Reddit/ML, 2019. J. Schmidhuber on Alexey Ivakhnenko, godfather of deep learning 1965.

[RCNN]

R. Girshick, J. Donahue, T. Darrell, J. Malik.

Rich feature hierarchies for accurate object detection and semantic segmentation.

Preprint arXiv/1311.2524, Nov 2013.

[RCNN2]

R. Girshick.

Fast R-CNN. Proc. of the IEEE international conference on computer vision, p. 1440-1448, 2015.

[RCNN3]

K. He, G. Gkioxari, P. Dollar, R. Girshick.

Mask R-CNN.

Preprint arXiv/1703.06870, 2017.

[RELU1]

K. Fukushima (1969). Visual feature extraction by a multilayered network of analog threshold elements. IEEE Transactions on Systems Science and Cybernetics. 5 (4): 322-333. doi:10.1109/TSSC.1969.300225.

This work introduced rectified linear units or ReLUs.

[RELU2]

C. v. d. Malsburg (1973).

Self-Organization of Orientation Sensitive Cells in the Striate Cortex. Kybernetik, 14:85-100, 1973. See Table 1 for rectified linear units or ReLUs. Possibly this was also the first work on applying an EM algorithm to neural nets.

[RPG]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber (2010). Recurrent policy gradients. Logic Journal of the IGPL, 18(5), 620-634.

[RPG07]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber. Solving Deep Memory POMDPs with Recurrent Policy Gradients. Intl. Conf. on Artificial Neural Networks ICANN'07, 2007. PDF.

[SHA48]

C. E. Shannon.

A mathematical theory of communication (parts I and II).

Bell System Technical Journal, XXVII:379-423, 1948.

Boltzmann's entropy formula (1877) seen from the perspective of information transmission.

[T22] J. Schmidhuber (AI Blog, 2022).

Scientific Integrity and the History of Deep Learning: The 2021 Turing Lecture, and the 2018 Turing Award. Technical Report IDSIA-77-21, IDSIA, Lugano, Switzerland, 2022. See also [DLP].

[TR1]

A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser, I. Polosukhin (2017). Attention is all you need. NIPS 2017, pp. 5998-6008.

This paper introduced the name Transformer for a now widely used NN type.[TR25] It did not cite the 1991 publication on what's now called unnormalized "linear Transformers" with "linearized self-attention."[ULTRA] Schmidhuber also introduced the now popular attention terminology in 1993.[ATT][FWP2][R4] See tweet of 2022 for 30-year anniversary.

[TR2]

J. Devlin, M. W. Chang, K. Lee, K. Toutanova (2018). BERT: Pre-training of deep bidirectional Transformers for language understanding. Preprint arXiv:1810.04805.

[TR3] K. Tran, A. Bisazza, C. Monz. The Importance of Being Recurrent for Modeling Hierarchical Structure. EMNLP 2018, p. 4731-4736. ArXiv preprint 1803.03585.

[TR4]

M. Hahn. Theoretical Limitations of Self-Attention in Neural Sequence Models. Transactions of the Association for Computational Linguistics, Volume 8, p.156-171, 2020.

[TR5]

A. Katharopoulos, A. Vyas, N. Pappas, F. Fleuret. Transformers are RNNs: Fast autoregressive Transformers with linear attention. In Proc. Int. Conf. on Machine Learning (ICML), July 2020.

[TR5a] Z. Shen, M. Zhang, H. Zhao, S. Yi, H. Li.

Efficient Attention: Attention with Linear Complexities.

WACV 2021.

[TR6]

K. Choromanski, V. Likhosherstov, D. Dohan, X. Song, A. Gane, T. Sarlos, P. Hawkins, J. Davis, A. Mohiuddin, L. Kaiser, et al. Rethinking attention with Performers. In Int. Conf. on Learning Representations (ICLR), 2021.

[TR6a] H. Peng, N. Pappas, D. Yogatama, R. Schwartz, N. A. Smith, L. Kong.

Random Feature Attention.

ICLR 2021.

[TR7]

S. Bhattamishra, K. Ahuja, N. Goyal.

On the Ability and Limitations of Transformers to Recognize Formal Languages.

EMNLP 2020.

[TR8]

W. Merrill, A. Sabharwal.

The Parallelism Tradeoff: Limitations of Log-Precision Transformers.

TACL 2023.

[TR25]

J. Schmidhuber. Who Invented Transformer Neural Networks? Technical Note IDSIA-11-25, IDSIA, Switzerland, Nov 2025.

[ULTRA]

References on the 1991 unnormalized linear Transformer (ULTRA): original tech report (March 1991) [FWP0]. Journal publication (1992) [FWP1]. Recurrent ULTRA extension (1993) introducing the terminology of learning "internal spotlights of attention" [FWP2]. Modern "quadratic" Transformer (2017: "attention is all you need") scaling quadratically in input size [TR1]. 2020 paper [TR5] using the terminology "linear Transformer" for a more efficient Transformer variant that scales linearly, leveraging linearized attention [TR5a]. 2021 paper [FWP6] pointing out that ULTRA dates back to 1991 [FWP0] when compute was a million times more expensive. Overview of ULTRA and other Fast Weight Programmers (2021) [FWP]. Who Invented Transformer Neural Networks? [TR25]. See the T in ChatGPT! See also surveys [DLH][DLP], 2022 tweet for ULTRA's 30-year anniversary, and 2024 tweet.
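As a minimal illustration of the relation between unnormalized linear attention and fast weights mentioned in this entry, the following Python sketch computes the same outputs in two ways: as a sum over all previous key/value pairs, and with a weight matrix updated by additive outer products. It is not code from [FWP0] or [TR5]; the function names, the causal (prefix-only) formulation, and the toy data are assumptions made for illustration.

```python
import random


def attention_form(keys, values, queries):
    """y_t = sum over i <= t of values[i] * (keys[i] . queries[t]).

    Revisits every earlier step, so total work grows quadratically
    with the sequence length.
    """
    outputs = []
    for t, q in enumerate(queries):
        y = [0.0] * len(values[0])
        for i in range(t + 1):
            score = sum(k_j * q_j for k_j, q_j in zip(keys[i], q))
            for d in range(len(y)):
                y[d] += score * values[i][d]
        outputs.append(y)
    return outputs


def fast_weight_form(keys, values, queries):
    """Same outputs via a fast weight matrix W += outer(v_t, k_t).

    Each step costs a fixed amount of work, so total work grows
    linearly with the sequence length.
    """
    d_v, d_k = len(values[0]), len(keys[0])
    W = [[0.0] * d_k for _ in range(d_v)]
    outputs = []
    for k, v, q in zip(keys, values, queries):
        for r in range(d_v):
            for c in range(d_k):
                W[r][c] += v[r] * k[c]  # additive outer-product update
        outputs.append([sum(W[r][c] * q[c] for c in range(d_k)) for r in range(d_v)])
    return outputs


def max_abs_difference(a, b):
    """Largest elementwise gap between two lists of vectors."""
    return max(abs(x - y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))


# Toy data (hypothetical sizes): 6 time steps, 4-dim keys/queries, 3-dim values.
rng = random.Random(0)
T, d_k, d_v = 6, 4, 3
keys = [[rng.uniform(-1, 1) for _ in range(d_k)] for _ in range(T)]
values = [[rng.uniform(-1, 1) for _ in range(d_v)] for _ in range(T)]
queries = [[rng.uniform(-1, 1) for _ in range(d_k)] for _ in range(T)]

gap = max_abs_difference(
    attention_form(keys, values, queries),
    fast_weight_form(keys, values, queries),
)
print(f"largest difference between the two forms: {gap:.2e}")
```

The two functions agree up to floating-point rounding, because multiplying the accumulated outer products by a query is the same sum, merely regrouped.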

[UN]

J. Schmidhuber (AI Blog, 2021, updated 2025). 1991: First very deep learning with unsupervised pre-training (see the P in ChatGPT). First neural network distillation (key for DeepSeek). Unsupervised hierarchical predictive coding (with self-supervised target generation) finds compact internal representations of sequential data to facilitate downstream deep learning. The hierarchy can be distilled into a single deep neural network (suggesting a simple model of conscious and subconscious information processing). 1993: solving problems of depth >1000.

[UN0]

J. Schmidhuber. Neural sequence chunkers. Technical Report FKI-148-91, Institut für Informatik, Technische Universität München, April 1991. PDF.

Unsupervised/self-supervised pre-training for deep neural networks (see the P in ChatGPT) and predictive coding are used in a deep hierarchy of recurrent nets (RNNs) to find compact internal representations of long sequences of data, across multiple time scales and levels of abstraction. Each RNN tries to solve the pretext task of predicting its next input, sending only unexpected inputs to the next RNN above. The resulting compressed sequence representations greatly facilitate downstream supervised deep learning such as sequence classification. By 1993, the approach solved problems of depth 1000 [UN2] (requiring 1000 subsequent computational stages/layers; the more such stages, the deeper the learning). A variant collapses the hierarchy into a single deep net. It uses a so-called conscious chunker RNN which attends to unexpected events that surprise a lower-level so-called subconscious automatiser RNN. The chunker learns to understand the surprising events by predicting them. The automatiser uses a neural knowledge distillation procedure (key for the famous 2025 DeepSeek) to compress and absorb the formerly conscious insights and behaviours of the chunker, thus making them subconscious. The systems of 1991 allowed for much deeper learning than previous methods.
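The idea of forwarding only unexpected inputs to the next level can be illustrated with a toy Python sketch. It is not the method of the 1991 report: the RNN predictor is replaced by a simple successor lookup table, and all names (`fit_predictor`, `compress`, `decompress`) are hypothetical. The sketch only shows that a sequence can be recovered from its predictor plus the few time-stamped inputs the predictor got wrong.

```python
def fit_predictor(sequence):
    """Stand-in for a trained lower-level RNN (an assumption of this sketch):
    map each symbol to its most frequent successor in the sequence."""
    counts = {}
    for prev, nxt in zip(sequence, sequence[1:]):
        successors = counts.setdefault(prev, {})
        successors[nxt] = successors.get(nxt, 0) + 1
    return {prev: max(succ, key=succ.get) for prev, succ in counts.items()}


def compress(sequence, predictor):
    """Keep only the (time step, symbol) pairs the predictor failed to predict.
    These are the inputs a higher level would receive."""
    unexpected = []
    prev = None
    for t, symbol in enumerate(sequence):
        if prev is None or predictor.get(prev) != symbol:
            unexpected.append((t, symbol))
        prev = symbol
    return unexpected


def decompress(unexpected, predictor, length):
    """Rebuild the full sequence from the predictor and the unexpected inputs."""
    surprises = dict(unexpected)
    out = []
    for t in range(length):
        if t in surprises:
            out.append(surprises[t])
        else:
            out.append(predictor[out[-1]])  # the prediction was correct here
    return out


sequence = list("abcabcabcxabcabc")
predictor = fit_predictor(sequence)
unexpected = compress(sequence, predictor)

print(unexpected)  # [(0, 'a'), (9, 'x')]
print(decompress(unexpected, predictor, len(sequence)) == sequence)  # True
```

Here 16 inputs shrink to two unexpected ones, so a higher level would see a much shorter sequence; no information is lost because the predictable inputs can be regenerated from the predictor.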

[UN1] J. Schmidhuber. Learning complex, extended sequences using the principle of history compression. Neural Computation, 4(2):234-242, 1992. Based on TR FKI-148-91, TUM, 1991.[UN0] PDF.

First working Deep Learner based on a deep RNN hierarchy (with different self-organising time scales),

overcoming the vanishing gradient problem through unsupervised pre-training of deep NNs (see the P in ChatGPT) and predictive coding (with self-supervised target generation).

Also: compressing or distilling a teacher net (the chunker) into a student net (the automatiser) that does not forget its old skills; such approaches are now widely used, e.g., by DeepSeek. See also this tweet. More.

[UN2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

An ancient experiment on "Very Deep Learning" with credit assignment across 1200 time steps or virtual layers and unsupervised / self-supervised pre-training for a stack of recurrent NNs can be found here (depth > 1000).

[UN3]

J. Schmidhuber, M. C. Mozer, and D. Prelinger. Continuous history compression. In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors, Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95. Augustinus, 1993.

[UN4] G. E. Hinton, R. R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, Vol. 313, no. 5786, pp. 504-507, 2006. PDF.

This work describes unsupervised layer-wise pre-training of stacks of feedforward NNs (FNNs) called Deep Belief Networks (DBNs). It cited neither the original layer-wise training of deep NNs by Ivakhnenko & Lapa (1965)[DEEP1-2][NOB] nor the 1991 unsupervised pre-training of stacks of more general recurrent NNs (RNNs),[UN0-3] which introduced the first NNs shown to solve very deep problems. The 2006 justification of the authors was essentially the one Schmidhuber used for the 1991 RNN stack: each higher level tries to reduce the description length (or negative log probability) of the data representation in the level below.[HIN][T22][MIR] This can greatly facilitate very deep downstream learning.[UN0-3]

[UN5]

Y. Bengio, P. Lamblin, D. Popovici, H. Larochelle.

Greedy layer-wise training of deep networks.

Proc. NIPS 06, pages 153-160, Dec. 2006.

The comment under reference [UN4] applies here as well.

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J. Schmidhuber). PDF.

More on the Fundamental Deep Learning Problem.

[VID1] G. Hinton.

The Next Generation of Neural Networks.

YouTube video [see 28:16].

GoogleTechTalk, 2007.

Quote: "Nobody in their right mind would ever suggest" to use plain backpropagation for training deep networks. However, in 2010, Schmidhuber's team in Switzerland showed[MLP1-3] that unsupervised pre-training is not necessary to train deep feedforward NNs by backpropagation.

[WHO4]

J. Schmidhuber. Who invented artificial neural networks? Technical Note IDSIA-15-25, IDSIA, Switzerland, Nov 2025.

[WHO5]

J. Schmidhuber. Who invented deep learning? Technical Note IDSIA-16-25, IDSIA, Switzerland, Nov 2025.

[WHO6] J. Schmidhuber (AI Blog, 2014; updated 2025).

Who invented backpropagation?

See also LinkedIn post.

[WHO7]

J. Schmidhuber.

Who invented convolutional neural networks? Technical Note IDSIA-17-25, IDSIA, Switzerland, 2025. See popular tweet.

[WHO8]

J. Schmidhuber. Who Invented Generative Adversarial Networks? Technical Note IDSIA-14-25, IDSIA, Switzerland, Dec 2025.

[WHO9]

J. Schmidhuber. Who invented knowledge distillation with artificial neural networks? Technical Note IDSIA-12-25, IDSIA, Nov 2025.

[WHO10]

J. Schmidhuber. Who Invented Transformer Neural Networks? Technical Note IDSIA-11-25, IDSIA, Switzerland, Nov 2025.

[WHO11]

J. Schmidhuber. Who Invented Deep Residual Learning? Technical Report IDSIA-09-25, IDSIA, Switzerland, Sept 2025. Preprint arXiv:2509.24732.

[WU] Y. Wu et al. Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation.

Preprint arXiv:1609.08144 (PDF), 2016. Based on LSTM which it mentions at least 50 times.
