The concept of a mental model of the world—a world model—dates back millennia. Plato [PLA1] suggested that we recognize objects by recollecting internal blueprints or templates, today often called internal representations. Aristotle wrote that phantasia or mental images allow humans to imagine the future and to plan action sequences by mentally manipulating images in the absence of the actual objects [ARI1].

Only 2,370 years later, a mere blink of an eye by cosmic standards, we are witnessing a boom in world models based on artificial neural networks (NNs) [DLH][WHO4-11] for artificial intelligence (AI) in the physical world. New startups in this area are emerging. To explain what's going on, I'll take you on a little journey through the history of general purpose neural world models, based on my opening keynote for the World Modeling Workshop (Agora, Mila - Quebec AI Institute, 4-6 Feb 2026) [WM26b][WM26] (see video tweet).

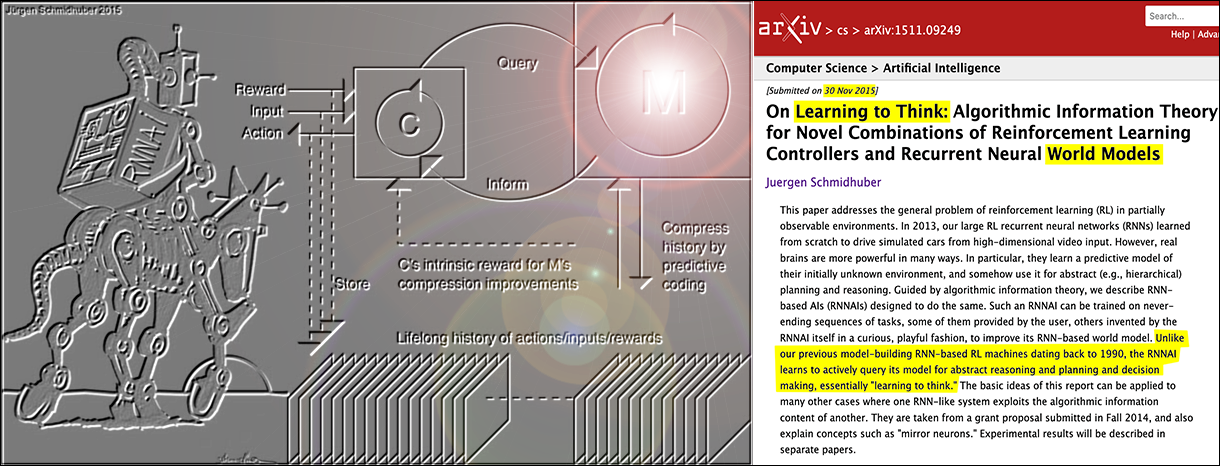

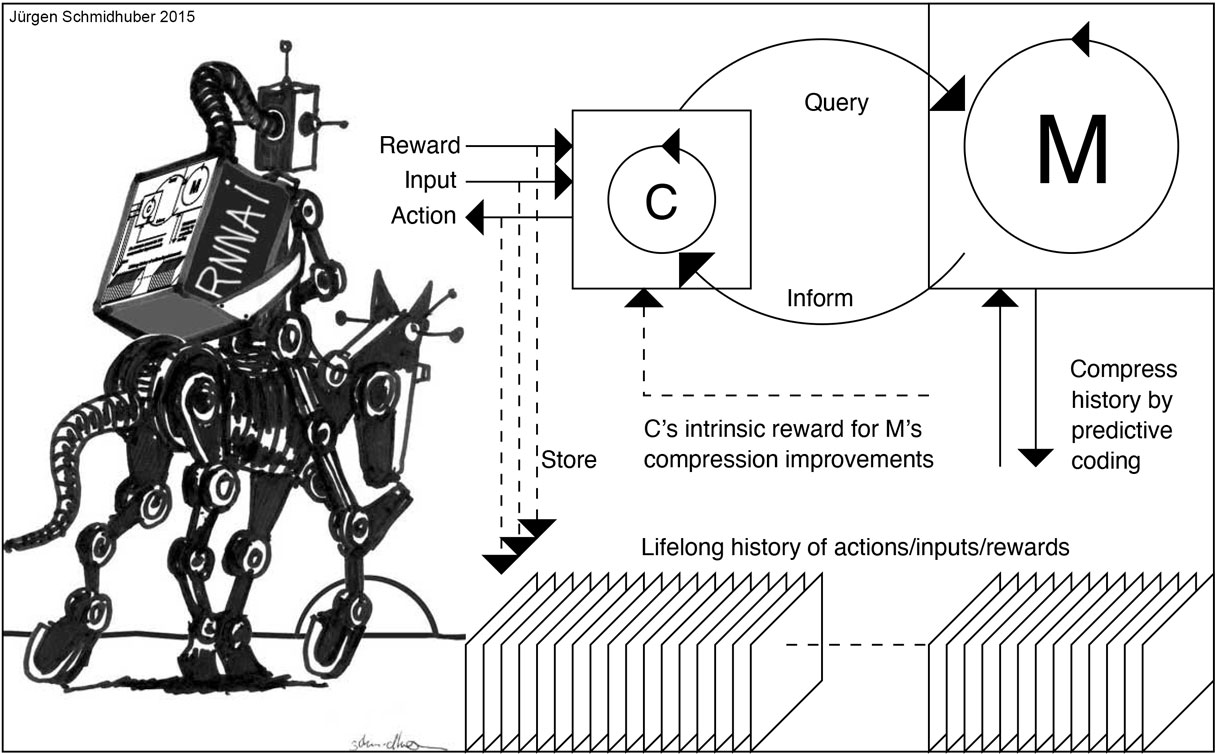

★ 1990: recurrent NNs as general purpose world models. In 1990, I studied adaptive agents living in partially observable environments where non-trivial kinds of memory are required to act successfully [AC90][PLAN2]. I used the term world model for a recurrent NN (RNN) that learns to predict the agent's sensory inputs (including pain and reward signals) reflecting the consequences of the actions of a separate controller RNN steering the agent. The controller C used the world model M to plan its action sequences through mental experiments [PLAN]. Compute was 10 million times more expensive than today (2026).

Since RNNs are general purpose computers, this approach went beyond previous, less general, feedforward NN-based systems (since 1987) for fully observable environments (which weren't called "world models" by their authors) [WER87-89][MUN87][NGU89]—in the 1980s, Werbos connected this work to even earlier work in control theory, e.g., [WER87-89].
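The division of labor between C and M can be illustrated with a deliberately tiny sketch. It is not the 1990 system: M here is a lookup table rather than a recurrent NN, C is a brute-force search over short action sequences rather than a learned controller, and the corridor environment and all names (`env_step`, `learn_model`, `plan_with_model`) are assumptions made for illustration. What it does show is the core loop: M is built from observed transitions, and C evaluates candidate plans inside M without touching the environment.

```python
from itertools import product

GOAL, ACTIONS = 5, (-1, 1)

def env_step(pos, action):
    """Hypothetical toy corridor: positions 0..GOAL, reward 1 at the goal."""
    new_pos = min(max(pos + action, 0), GOAL)
    return new_pos, (1.0 if new_pos == GOAL else 0.0)

def learn_model():
    """M: a table of observed transitions, standing in for a trained RNN."""
    model = {}
    for pos in range(GOAL + 1):
        for action in ACTIONS:
            model[(pos, action)] = env_step(pos, action)
    return model

def imagined_return(model, pos, plan):
    """Roll a candidate action sequence through M only (a mental experiment)."""
    total = 0.0
    for action in plan:
        pos, reward = model[(pos, action)]
        total += reward
    return total

def plan_with_model(model, start, horizon):
    """C: pick the action sequence with the highest predicted return."""
    return max(product(ACTIONS, repeat=horizon),
               key=lambda plan: imagined_return(model, start, plan))

M = learn_model()
best_plan = plan_with_model(M, start=2, horizon=3)
print(best_plan)
```

Exhaustive search only works for toy horizons like this one; it is used here because it keeps the example short.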

★ 1990: artificial curiosity for NNs. In the beginning, my 1990 world model M knew nothing. That's why my 1990 controller C (a generative model with stochastic neurons) was intrinsically motivated through adversarial artificial curiosity [AC90][AC90b][AC] to invent action sequences or experiments that yield data from which M can learn something: C simply tried to maximize the prediction error minimized by M. Today, they call this a generative adversarial network (GAN) [GAN90-25].

The 1990 system didn't learn like today's foundation models and large language models (LLMs) by downloading and imitating the web. No, it generated its own self-invented experiments to collect limited but relevant data from the environment, like a physicist, or a baby [AC90,AC90b][AC]. It was a simple kind of artificial scientist.

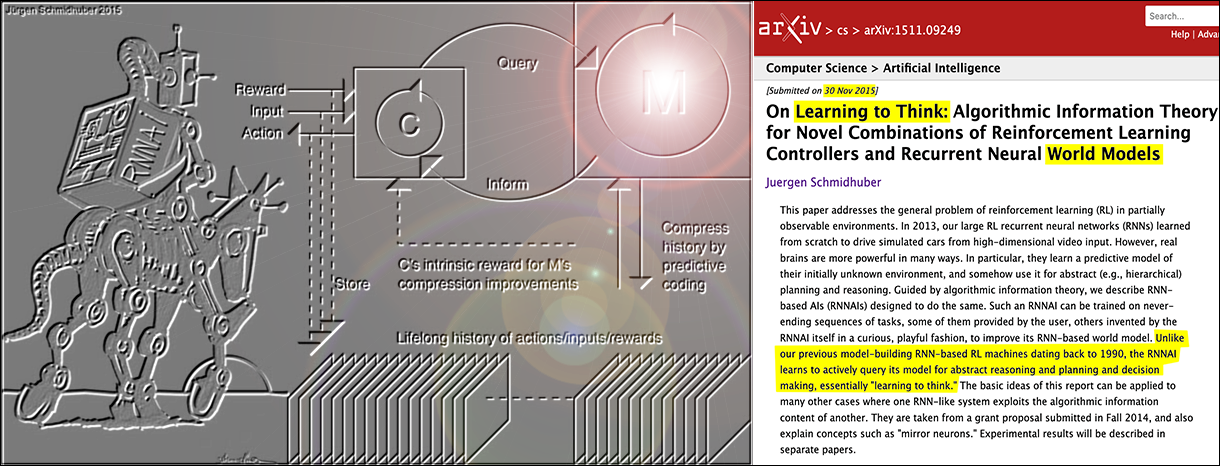

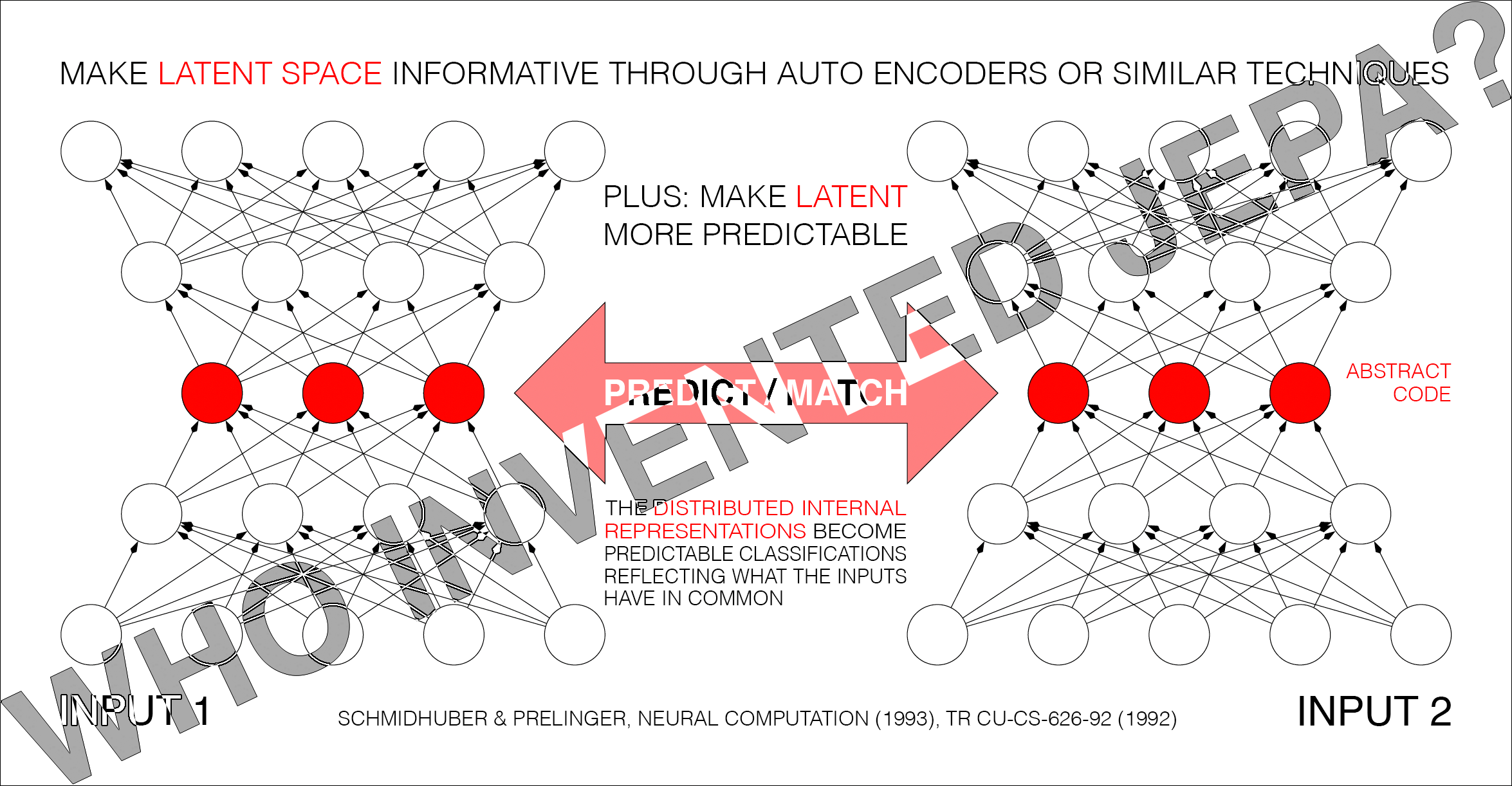

★ March-June 1991: linear Transformers and deep residual learning. The above-mentioned gradient-based RNN world models of 1990 did not work well for long time lags between relevant input events—they were not very deep. To overcome this, my little AI lab at TU Munich came up with various innovations, in the process laying the foundations of today's foundation models and LLMs. We published the first Transformer variants (see the T in ChatGPT) including the now-so-called unnormalized linear Transformer [ULTRA][FWP0-6][WHO10], Pre-training for deep NNs (see the P in ChatGPT) [UN0][UN1][DLH][MIR], NN distillation (central to the famous 2025 DeepSeek and other LLMs) [WHO9][DLH], as well as deep residual learning [VAN1][WHO11] for very deep NNs such as Long Short-Term Memory [LSTM1], the most cited AI of the 20th century, basis of the first LLMs. In fact, as of 2026, the two most frequently cited papers of all time (with the most citations within 3 years—manuals excluded) are directly based on this work of 1991 [MOST26].
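A minimal sketch of the outer-product fast weight idea behind the unnormalized linear Transformer, under simplifying assumptions: the keys, values and query are fixed toy vectors here, whereas in a fast weight programmer a slow net learns to generate them, and the function names are hypothetical. The sketch only checks the equivalence reported in [FWP6]: summing outer products into a fast weight matrix and then applying it to a query gives the same result as attention without softmax.

```python
def fast_weight_readout(keys, values, query):
    """Accumulate W += v k^T for each (k, v) pair, then return W q."""
    d_out, d_in = len(values[0]), len(keys[0])
    W = [[0.0] * d_in for _ in range(d_out)]
    for k, v in zip(keys, values):
        for i, vi in enumerate(v):
            for j, kj in enumerate(k):
                W[i][j] += vi * kj          # outer-product weight change
    return [sum(w * q for w, q in zip(row, query)) for row in W]

def linear_attention_readout(keys, values, query):
    """The same quantity written as sum_i (k_i . q) v_i, with no softmax."""
    out = [0.0] * len(values[0])
    for k, v in zip(keys, values):
        score = sum(ki * qi for ki, qi in zip(k, query))
        for i, vi in enumerate(v):
            out[i] += score * vi
    return out

keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[2.0, 0.0, 1.0], [0.0, 3.0, 1.0], [1.0, 1.0, 0.0]]
query = [1.0, 2.0]

y_fast = fast_weight_readout(keys, values, query)
y_attn = linear_attention_readout(keys, values, query)
print(y_fast, y_attn)
```

The fast weight form keeps a fixed-size matrix no matter how many key/value pairs have been seen, which is why its cost grows linearly with sequence length.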

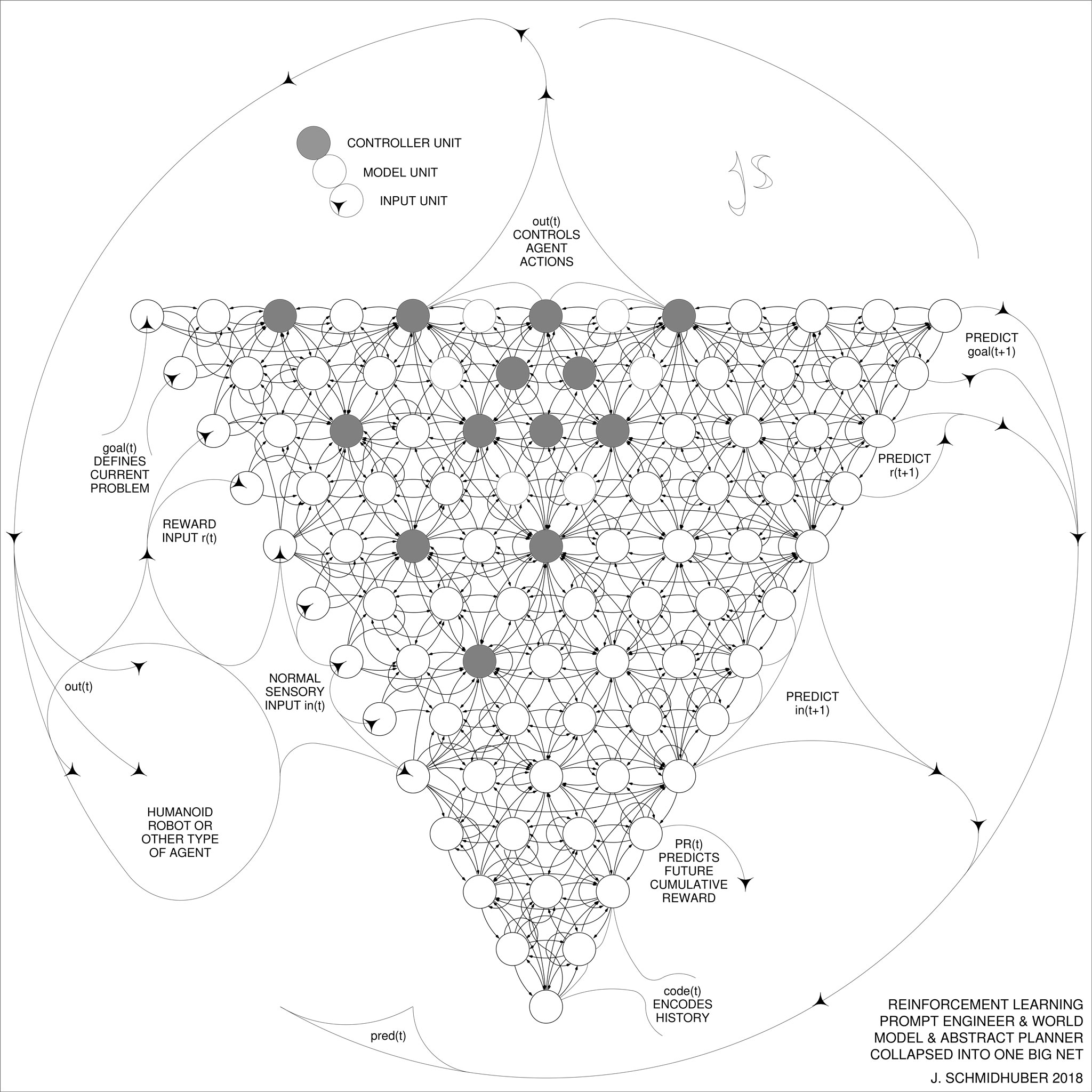

Back then, however, it was already totally obvious that LLM-type NNs alone are not enough to achieve Artificial General Intelligence (AGI). No AGI without mastery of the real world [95-25][DLH]! True AGI in the physical world must somehow learn a model of its changing environment, and use the model to plan action sequences that solve its goals. Sure, one can train a foundation model to become a world model M, but additional elements are needed for decision making and planning. In particular, some sort of controller C must learn to use M to achieve its goals.

★ 1991-: reward C for M's improvements, not M's errors. Many things are fundamentally unpredictable by M, e.g., white noise on a screen (the noisy TV problem) [AC10]. To deal with this problem, in 1991, I used M's improvements rather than M's errors as C's intrinsic curiosity reward [AC91][AC91b]. In 1995, we used the information gain [AC95] (optimally since 2011 [AC11]).
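The difference between the two reward signals can be sketched with a toy experiment. Everything here is an assumption made for illustration: M is a single-number online predictor, the "noisy TV" is a fair coin emitting -1 or +1, the learnable source always emits 1, and "improvement" is approximated by the drop in mean squared error between an early and a late window. This is a simple stand-in, not the measure used in [AC91] or the information gain of [AC95].

```python
import random

def squared_errors(source, steps=1000, lr=0.01):
    """Train a one-parameter predictor online; return its per-step squared errors."""
    prediction, errors = 0.0, []
    for _ in range(steps):
        x = source()
        errors.append((x - prediction) ** 2)
        prediction += lr * (x - prediction)   # gradient step on the squared error
    return errors

def mean(xs):
    return sum(xs) / len(xs)

def error_reward(errors, window=100):
    """Reward based on M's current error (the 1990 style)."""
    return mean(errors[-window:])

def progress_reward(errors, window=100):
    """Reward based on how much M's error has dropped (improvement style)."""
    return mean(errors[:window]) - mean(errors[-window:])

rng = random.Random(0)
noisy_tv = squared_errors(lambda: rng.choice((-1.0, 1.0)))
learnable = squared_errors(lambda: 1.0)

for name, errs in (("noisy TV", noisy_tv), ("learnable", learnable)):
    print(name, round(error_reward(errs), 3), round(progress_reward(errs), 3))
```

In this toy setting the error-based reward stays high on the noisy source forever, while the improvement-based reward is close to zero there and clearly positive on the source that M can actually learn.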

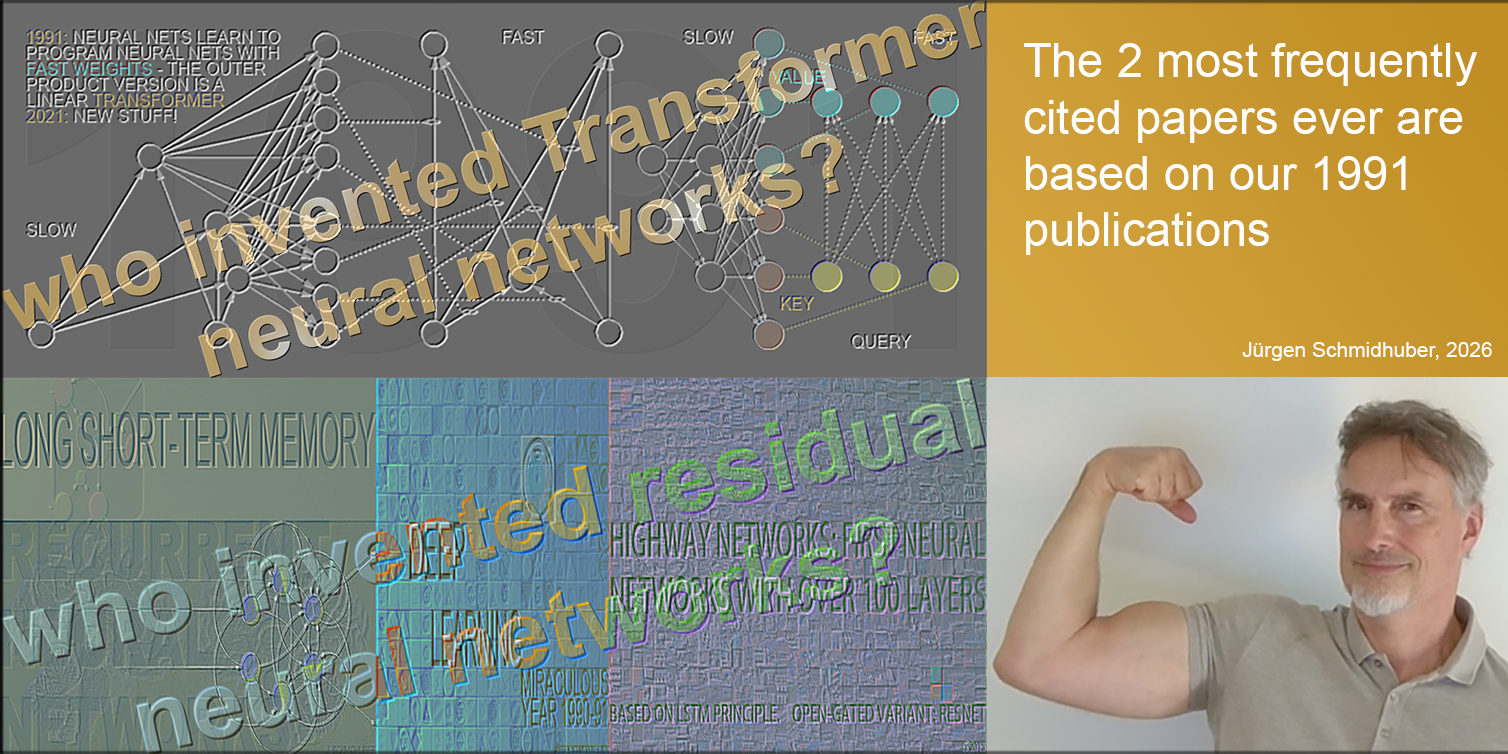

★ 1991-: predicting latent space. My NNs also started to predict latent space and hidden units rather than raw pixels. For example, I had a hierarchical architecture for predictive models that learn representations at multiple levels of abstraction and multiple time scales [UN0][UN1][UN][DLH][LEC][DLP]. Here an automatizer NN learns to predict the informative hidden units of a chunker NN, thus collapsing or distilling the chunker's knowledge into the automatizer [WHO9]. This can greatly facilitate downstream deep learning [UN0-2].

In 1992, my other combination of two NNs also learned to create informative yet predictable internal representations in latent space [PMAX]. Both NNs saw different but related inputs which they tried to represent internally. For example, the first NN tried to predict the hidden units of an autoencoder NN, which in turn tried to make its hidden units more predictable, while leaving them as informative as possible. This was called Predictability Maximization [PMAX][UN2], complementing my earlier 1991 work on Predictability Minimization (PMIN): adversarial NNs learning to create informative yet unpredictable internal representations [PM0-2].

30 years later, Dr. LeCun called this "JEPA" without citing PMAX [LEC22a][LEC][WHO12]. See also tweets [LE3-9].

★ 1997-: predicting in latent space for reinforcement learning (RL) and control. I applied the above concepts of hidden state prediction to RL, building controllers that follow a self-supervised learning paradigm that produces informative yet predictable internal abstractions of complex spatio-temporal events [AC97][AC99][AC02]. Instead of predicting all details of future inputs (e.g., raw pixels), the 1997 system could ask arbitrary abstract questions with computable answers encoded in representation space. It could even focus its attention on small relevant parts of its latent space, and ignore the rest. Two learning, reward-maximizing adversaries called left brain and right brain played a zero-sum game, trying to surprise each other, occasionally betting on different yes/no outcomes of computational experiments, until the outcomes became predictable and boring. Remarkably, this type of self-guided learning and exploration can accelerate external reward intake [AC02].

★ Early 2000s: theoretically optimal controllers and universal world models. My postdoc Marcus Hutter, working under my SNF grant at IDSIA, even had a mathematically optimal (yet computationally infeasible) way of learning a world model and exploiting it to plan optimal action sequences: the famous AIXI model [HUT4].

★ 2006: Formal theory of fun & creativity. C's intrinsic reward or curiosity reward was redefined as M's compression progress [AC06][AC07][AC09] (rather than M's traditional information gain [AC95]). This led to the formal theory of fun & creativity [AC10].

The basic insight was: interestingness is the first derivative of subjective beauty or compressibility (in space and time) of the lifelong sensory input stream [AC07], and curiosity & creativity is the drive to maximize it [AC06][AC10]. I think this is the essence of what scientists and artists do.
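Compression progress can be sketched as the number of bits saved on the same data after the model has learned from it. The sketch below makes strong simplifying assumptions that are not from [AC06] or [AC10]: M is an order-0 symbol-frequency model with Laplace-style counts, and the code length is the ideal one, minus log2 of the modeled probability per symbol. The names `code_length` and `compression_progress` are hypothetical.

```python
import math

def code_length(data, counts):
    """Ideal code length in bits of `data` under a symbol-frequency model."""
    total = sum(counts.values())
    return -sum(math.log2(counts[s] / total) for s in data)

def compression_progress(data, counts):
    """Bits saved on `data` once the model has learned from it (updates counts in place)."""
    before = code_length(data, counts)
    for s in data:
        counts[s] += 1
    after = code_length(data, counts)
    return before - after

regular = "aaaaaaaa"    # a regularity this model can pick up
balanced = "abababab"   # looks incompressible to an order-0 model

model = {"a": 1, "b": 1}
first_look = compression_progress(regular, model)    # large: something new was learned
second_look = compression_progress(regular, model)   # small: the pattern is getting boring
no_pattern = compression_progress(balanced, {"a": 1, "b": 1})

print(round(first_look, 3), round(second_look, 3), round(no_pattern, 3))
```

The first look at the regular string saves roughly 6.8 bits, the second look well under one bit, and the balanced string yields no progress at all for this particular model. A more powerful model would find the alternating string interesting too, which is the point of calling the measure subjective.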

★ 2014: we founded an AGI company for Physical AI in the real world, based on neural world models [NAI]. It achieved lots of remarkable milestones in collaboration with world-famous companies. Alas, like some of our projects, the company may have been a bit ahead of its time, because real world robots and hardware are so challenging. Nevertheless, it's great that in the 2020s, new world model startups have been created!

★ 2015: Planning with spatio-temporal abstractions in world models / RL prompt engineer / chain of thought. The 2015 paper [PLAN4] went beyond the inefficient millisecond by millisecond planning of 1990 [AC90][PLAN2], addressing planning and reasoning in abstract concept spaces and learning to think [PLAN4] (including ways of learning to act largely by observation), going beyond our hierarchical neural subgoal generators and planners of 1990-92 [HRL0-2]. The controller C became an RL prompt engineer that learns to create a chain of thought: to speed up RL, C learns to query its world model M for abstract reasoning and decision making. This has become popular.

★ 2018: The paper [PLAN5] finally collapsed C and M into a single One Big Net for everything, using my NN distillation procedure of 1991 [UN0-1]. Apparently, this is what DeepSeek [DS1] used to shock the stock market in 2025.

And the other 2018 paper [PLAN6] with David Ha was the one that finally made world models popular :-)

Please have a look at the overviews [PLAN][AC][AIB] for additional, more recent work.

★ What's next? As compute keeps getting 10 times cheaper every 5 years [RAW], the Machine Learning community will combine the puzzle pieces above into one simple, coherent whole, and scale it up.
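As a quick arithmetic check, the rate quoted above can be compounded over the period from 1990 to 2026. The function name and its parameters are hypothetical; the only inputs taken from the text are the factor of 10 per 5 years and the two years.

```python
def cost_ratio(year_then, year_now, factor=10.0, period_years=5.0):
    """How many times more expensive compute was in year_then than in year_now."""
    return factor ** ((year_now - year_then) / period_years)

ratio_1990_vs_2026 = cost_ratio(1990, 2026)
print(f"{ratio_1990_vs_2026:.2e}")
```

This gives 10^7.2, roughly 1.6 × 10^7, the same order of magnitude as the "10 million times" stated for 1990.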

Acknowledgments

Thanks to several expert reviewers for useful comments. (Let me know under juergen@idsia.ch if you can spot any remaining error.)

The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

References

[95-25]

J. Schmidhuber (AI Blog, 2025). 1995-2025: The Decline of Germany & Japan vs US & China. Can All-Purpose Robots Fuel a Comeback? In 1995, in terms of nominal gross domestic product (GDP), a combined Germany and Japan were almost 1:1 economically with a combined USA and China, according to IMF. Only 3 decades later, this ratio is now down to 1:5! Self-replicating AI-driven all-purpose robots may be the answer. Based on a 2024 F.A.Z. guest article.

[AC]

J. Schmidhuber (AI Blog, 2021, updated 2025). 3 decades of artificial curiosity & creativity. Schmidhuber's artificial scientists not only answer given questions but also invent new questions. They achieve curiosity through: (1990) the principle of generative adversarial networks, (1991) neural nets that maximise learning progress, (1995) neural nets that maximise information gain (optimally since 2011), (1997) adversarial design of surprising computational experiments, (2006) maximizing compression progress like scientists/artists/comedians do, (2011) PowerPlay... Since 2012: applications to real robots.

[AC90]

J. Schmidhuber. Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990. PDF. The first paper on planning with reinforcement learning recurrent neural networks (NNs) and recurrent world models (more), and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game (more). Apparently, it was also the first paper of this kind to use the term "world model" for the predictor NN (although the basic concept of a world model is much older than that).

[AC90b]

J. Schmidhuber. A possibility for implementing curiosity and boredom in model-building neural controllers. In J. A. Meyer and S. W. Wilson, editors, Proc. of the International Conference on Simulation of Adaptive Behavior: From Animals to Animats, pages 222-227. MIT Press/Bradford Books, 1991. Based on [AC90]. PDF. More.

[AC91]

J. Schmidhuber. Adaptive confidence and adaptive curiosity. Technical Report FKI-149-91, Inst. f. Informatik, Tech. Univ. Munich, April 1991. PDF.

[AC91b]

J. Schmidhuber. Curious model-building control systems. Proc. International Joint Conference on Neural Networks, Singapore, volume 2, pages 1458-1463. IEEE, 1991. PDF.

[AC95]

J. Storck, S. Hochreiter, and J. Schmidhuber. Reinforcement-driven information acquisition in non-deterministic environments. In Proc. ICANN'95, vol. 2, pages 159-164. EC2 & CIE, Paris, 1995. PDF.

[AC97]

J. Schmidhuber. What's interesting? Technical Report IDSIA-35-97, IDSIA, July 1997. Focus on automatic creation of predictable internal abstractions of complex spatio-temporal events: two competing, intrinsically motivated agents agree on essentially arbitrary algorithmic experiments and bet on their possibly surprising (not yet predictable) outcomes in zero-sum games, each agent potentially profiting from outwitting / surprising the other by inventing experimental protocols where both modules disagree on the predicted outcome. The focus is on exploring the space of general algorithms (as opposed to traditional simple mappings from inputs to outputs); the general system focuses on the interesting things by losing interest in both predictable and unpredictable aspects of the world. Unlike Schmidhuber et al.'s previous systems with intrinsic motivation,[AC90-AC95] the system also takes into account the computational cost of learning new skills, learning when to learn and what to learn. See later publications.[AC99][AC02]

[AC98]

M. Wiering and J. Schmidhuber. Efficient model-based exploration. In R. Pfeiffer, B. Blumberg, J. Meyer, S. W. Wilson, eds., From Animals to Animats 5: Proceedings of the Fifth International Conference on Simulation of Adaptive Behavior, p. 223-228, MIT Press, 1998.

[AC98b]

M. Wiering and J. Schmidhuber. Learning exploration policies with models. In Proc. CONALD, 1998.

[AC99]

J. Schmidhuber. Artificial Curiosity Based on Discovering Novel Algorithmic Predictability Through Coevolution. In P. Angeline, Z. Michalewicz, M. Schoenauer, X. Yao, Z. Zalzala, eds., Congress on Evolutionary Computation, p. 1612-1618, IEEE Press, Piscataway, NJ, 1999.

[AC02]

J. Schmidhuber. Exploring the Predictable. In Ghosh, S. Tsutsui, eds., Advances in Evolutionary Computing, p. 579-612, Springer, 2002. PDF.

[AC06]

J. Schmidhuber. Developmental Robotics, Optimal Artificial Curiosity, Creativity, Music, and the Fine Arts. Connection Science, 18(2): 173-187, 2006. PDF.

[AC07]

J. Schmidhuber. Simple Algorithmic Principles of Discovery, Subjective Beauty, Selective Attention, Curiosity & Creativity. In V. Corruble, M. Takeda, E. Suzuki, eds., Proc. 10th Intl. Conf. on Discovery Science (DS 2007) p. 26-38, LNAI 4755, Springer, 2007. Also in M. Hutter, R. A. Servedio, E. Takimoto, eds., Proc. 18th Intl. Conf. on Algorithmic Learning Theory (ALT 2007) p. 32, LNAI 4754, Springer, 2007. (Joint invited lecture for DS 2007 and ALT 2007, Sendai, Japan, 2007.) Preprint: arxiv:0709.0674. PDF. Curiosity as the drive to improve the compression of the lifelong sensory input stream: interestingness as the first derivative of subjective "beauty" or compressibility.

[AC09]

J. Schmidhuber. Art & science as by-products of the search for novel patterns, or data compressible in unknown yet learnable ways. In M. Botta (ed.), Et al. Edizioni, 2009, pp. 98-112. PDF. (More on artificial scientists and artists.)

[AC10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010. IEEE link. PDF. With a brief summary of the generative adversarial neural networks of 1990[AC90,90b][AC20] where a generator NN is fighting a predictor NN in a minimax game (more).

[AC11]

Sun Yi, F. Gomez, J. Schmidhuber. Planning to Be Surprised: Optimal Bayesian Exploration in Dynamic Environments. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011. PDF.

[AC11a]

V. Graziano, T. Glasmachers, T. Schaul, L. Pape, G. Cuccu, J. Leitner, J. Schmidhuber. Artificial Curiosity for Autonomous Space Exploration. Acta Futura 4:41-51, 2011 (DOI: 10.2420/AF04.2011.41). PDF.

[AC11b]

G. Cuccu, M. Luciw, J. Schmidhuber, F. Gomez. Intrinsically Motivated Evolutionary Search for Vision-Based Reinforcement Learning. In Proc. Joint IEEE International Conference on Development and Learning (ICDL) and on Epigenetic Robotics (ICDL-EpiRob 2011), Frankfurt, 2011. PDF.

[AC11c]

M. Luciw, V. Graziano, M. Ring, J. Schmidhuber. Artificial Curiosity with Planning for Autonomous Visual and Perceptual Development. In Proc. Joint IEEE International Conference on Development and Learning (ICDL) and on Epigenetic Robotics (ICDL-EpiRob 2011), Frankfurt, 2011. PDF.

[AC11d]

T. Schaul, L. Pape, T. Glasmachers, V. Graziano, J. Schmidhuber. Coherence Progress: A Measure of Interestingness Based on Fixed Compressors. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011. PDF.

[AC11e]

T. Schaul, Yi Sun, D. Wierstra, F. Gomez, J. Schmidhuber. Curiosity-Driven Optimization. IEEE Congress on Evolutionary Computation (CEC-2011), 2011. PDF.

[AC11f]

H. Ngo, M. Ring, J. Schmidhuber. Curiosity Drive based on Compression Progress for Learning Environment Regularities. In Proc. Joint IEEE International Conference on Development and Learning (ICDL) and on Epigenetic Robotics (ICDL-EpiRob 2011), Frankfurt, 2011.

[AC12]

L. Pape, C. M. Oddo, M. Controzzi, C. Cipriani, A. Foerster, M. C. Carrozza, J. Schmidhuber. Learning tactile skills through curious exploration. Frontiers in Neurorobotics 6:6, 2012, doi: 10.3389/fnbot.2012.00006.

[AC12a]

H. Ngo, M. Luciw, A. Foerster, J. Schmidhuber. Learning Skills from Play: Artificial Curiosity on a Katana Robot Arm. Proc. IJCNN 2012. PDF. Video.

[AC12b]

V. R. Kompella, M. Luciw, M. Stollenga, L. Pape, J. Schmidhuber. Autonomous Learning of Abstractions using Curiosity-Driven Modular Incremental Slow Feature Analysis. Proc. IEEE Conference on Development and Learning / EpiRob 2012 (ICDL-EpiRob'12), San Diego, 2012.

[AC12c]

J. Schmidhuber. Maximizing Fun By Creating Data With Easily Reducible Subjective Complexity. In G. Baldassarre and M. Mirolli (eds.), Roadmap for Intrinsically Motivated Learning. Springer, 2012.

[AC20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991). Neural Networks, Volume 127, p 58-66, 2020. Preprint arXiv/1906.04493.

[AC22]

A. Ramesh, L. Kirsch, S. van Steenkiste, J. Schmidhuber. Exploring through Random Curiosity with General Value Functions. Advances in Neural Information Processing Systems (NeurIPS), New Orleans, 2022. Preprint arXiv:2211.10282.

[AC22b]

V. Herrmann, L. Kirsch, J. Schmidhuber. Learning One Abstract Bit at a Time Through Self-Invented Experiments Encoded as Neural Networks. Preprint arXiv:2212.14374, 2022.

[AIB]

J. Schmidhuber's AI Blog.

With lessons on the history of AI & computing, e.g.:

Who invented deep learning?

Who invented backpropagation?

Who invented convolutional neural networks?

Who invented artificial neural networks?

Who invented generative adversarial networks?

Who invented Transformer neural networks?

Who invented deep residual learning?

Who invented neural knowledge distillation?

Who invented the computer?

Who invented the transistor?

Who invented the integrated circuit?

...

[ARI1]

Aristotle (circa 350 BC). De Anima (On the Soul).

[DLH]

J. Schmidhuber. Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Switzerland, 2022, updated 2025. Preprint arXiv:2212.11279. Tweet.

[DLP]

J. Schmidhuber. How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023, updated 2025. Tweet of 2023.

[DS1]

DeepSeek-AI (2025). DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. Preprint arXiv:2501.12948. See the popular DeepSeek tweet of Jan 2025.

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021, updated 2025).

26 March 1991: Neural nets learn to program neural nets with fast weights—like Transformer variants. 2021: New stuff!

See tweet of 2022.

[FWP0]

J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Technical Report FKI-147-91, Institut für Informatik, Technische Universität München, 26 March 1991. PDF. First paper on neural fast weight programmers that separate storage and control: a slow net learns by gradient descent to compute weight changes of a fast net. The outer product-based version (Eq. 5) is now known as the unnormalized linear Transformer or the "Transformer with linearized self-attention."[ULTRA][FWP]

[FWP1]

J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Neural Computation, 4(1):131-139, 1992. Based on [FWP0]. PDF. HTML. Pictures (German). See tweet of 2022 for 30-year anniversary.

[FWP2]

J. Schmidhuber. Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets. In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 460-463. Springer, 1993. PDF. A recurrent extension of the unnormalized linear Transformer,[ULTRA] introducing the terminology of learning "internal spotlights of attention." First recurrent NN-based fast weight programmer using outer products to program weight matrices.

[FWP3a]

I. Schlag, J. Schmidhuber. Learning to Reason with Third Order Tensor Products. Advances in Neural Information Processing Systems (N(eur)IPS), Montreal, 2018. Preprint: arXiv:1811.12143. PDF.

[FWP6]

I. Schlag, K. Irie, J. Schmidhuber. Linear Transformers Are Secretly Fast Weight Programmers. ICML 2021. Preprint: arXiv:2102.11174.

[GAN90]

J. Schmidhuber. Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990. PDF. The first paper on planning with reinforcement learning recurrent neural networks (NNs) and recurrent world models (more), and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game (more). Apparently, it was also the first paper of this kind to use the term "world model" for the predictor NN (although the basic concept of a world model is much older than that).

[GAN91]

J. Schmidhuber. A possibility for implementing curiosity and boredom in model-building neural controllers. In J. A. Meyer and S. W. Wilson, editors, Proc. of the International Conference on Simulation of Adaptive Behavior: From Animals to Animats, pages 222-227. MIT Press/Bradford Books, 1991. PDF. More. Based on [GAN90]. See [AC90b].

[GAN10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010. IEEE link. PDF. This well-known 2010 survey summarised the generative adversarial NNs of 1990 as follows: a "neural network as a predictive world model is used to maximize the controller's intrinsic reward, which is proportional to the model's prediction errors" (which are minimized). See [AC10].

[GAN10b]

O. Niemitalo. A method for training artificial neural networks to generate missing data within a variable context. Blog post, Internet Archive, 2010. A blog post describing the basic ideas[GAN90-91][GAN20][AC] of GANs.

[GAN14]

I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, Y. Bengio. Generative adversarial nets. NIPS 2014, 2672-2680, Dec 2014. A description of GANs that does not cite Schmidhuber's original GAN principle of 1990[GAN90-91][GAN20][AC][R2][DLP] and contains wrong claims about Schmidhuber's adversarial NNs for Predictability Minimization.[PM0-2][GAN20][DLP]

[GAN19]

T. Karras, S. Laine, T. Aila. A style-based generator architecture for generative adversarial networks. In Proc. IEEE Conf. on Computer Vision and Pattern Recognition (CVPR), pages 4401-4410, 2019.

[GAN19b]

D. Fallis. The epistemic threat of deepfakes. Philosophy & Technology 34.4 (2021):623-643.

[GAN20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991). Neural Networks, Volume 127, p 58-66, 2020. Preprint arXiv/1906.04493. See [AC20].

[GAN25]

J. Schmidhuber. Who Invented Generative Adversarial Networks? Technical Note IDSIA-14-25, IDSIA, December 2025.

[PLA1]

M. Uehleke (2022). Plato's Theory of Forms: How Ancient Philosophy Still Shapes Modern Thinking. The Philosopher's Shirt, 2022.

[HRL0]

J. Schmidhuber. Towards compositional learning with dynamic neural networks. Technical Report FKI-129-90, Institut für Informatik, Technische Universität München, 1990. PDF. An RL machine gets extra command inputs of the form (start, goal). An evaluator NN learns to predict the current rewards/costs of going from start to goal. An (R)NN-based subgoal generator also sees (start, goal), and uses (copies of) the evaluator NN to learn by gradient descent a sequence of cost-minimising intermediate subgoals. The RL machine tries to use such subgoal sequences to achieve final goals. The system is learning action plans at multiple levels of abstraction and multiple time scales and solves what Y. LeCun called an "open problem" in 2022.[LEC]

[HRL1]

J. Schmidhuber. Learning to generate sub-goals for action sequences. In T. Kohonen, K. Mäkisara, O. Simula, and J. Kangas, editors, Artificial Neural Networks, pages 967-972. Elsevier Science Publishers B.V., North-Holland, 1991. PDF. Extending TR FKI-129-90, TUM, 1990.

[HRL2]

J. Schmidhuber and R. Wahnsiedler. Planning simple trajectories using neural subgoal generators. In J. A. Meyer, H. L. Roitblat, and S. W. Wilson, editors, Proc. of the 2nd International Conference on Simulation of Adaptive Behavior, pages 196-202. MIT Press, 1992. PDF.

[HRL4]

M. Wiering and J. Schmidhuber. HQ-Learning. Adaptive Behavior 6(2):219-246, 1997. PDF.

[HRLW]

C. Watkins (1989). Learning from delayed rewards.

[HUT4]

M. Hutter. Universal Artificial Intelligence: Sequential Decisions based on Algorithmic Probability. Springer, Berlin, 2004. (Based on work done under J. Schmidhuber's SNF grant 20-61847: unification of universal induction and sequential decision theory, 2000).

[LE1]

J. Schmidhuber. Who invented convolutional neural networks (CNNs)? Tweet of 3 Aug 2025. See also LinkedIn post. See also F. Chollet's tweet of 3 Dec 2025.

[LE2]

J. Schmidhuber. Fukushima's video (1986) shows a CNN that recognises handwritten digits, three years before LeCun's video (1989). Tweet of 2 Dec 2025. See also L. Bayer's tweet of 3 Dec 2025.

[LE3]

J. Schmidhuber. LeCun's 2022 paper on autonomous machine intelligence rehashes but doesn't cite essential work of 1990-2015. Tweet of 7 Jul 2022.

[LE4]

J. Schmidhuber. LeCun's "5 best ideas 2012-22" are mostly from my lab, and older: 1 Self-supervised 1991 RNN stack; 2 ResNet = open-gated 2015 Highway Net; 3&4 Key/Value-based fast weights 1991; 5 Transformers with linearized self-attention 1991. (Also GAN 1990.) Tweet of 15 Sep 2022.

[LE5]

J. Schmidhuber. Quote tweet (19 Nov 2025) of "The False Glorification ..." by G. Marcus.

[LE6]

J. Schmidhuber. How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Tweet of 14 Dec 2023.

[LE7]

J. Schmidhuber. LeCun's 2025 company on physical AI with world models looks a lot like our 2014 company on physical AI with world models. Tweet of 21 Dec 2025. LinkedIn Post.

[LE8]

J. Schmidhuber. Dr. LeCun's heavily promoted Joint Embedding Predictive Architecture (JEPA, 2022) is the heart of his new company. However, the core ideas are not original to LeCun. Instead, JEPA is essentially identical to our 1992 Predictability Maximization system (PMAX). Tweet of 31 Mar 2026. LinkedIn Post.

[LE9]

Calc Consulting. PMAX tweet of 6 April 2026. Quote: "In that sense, Juergen Schmidhuber made the real and original conceptual breakthrough: non-generative, latent-to-latent predictive learning."

[LEC]

J. Schmidhuber (AI Blog, 2022). LeCun's 2022 paper on autonomous machine intelligence rehashes but does not cite essential work of 1990-2015. Years ago, Schmidhuber's team published most of what Y. LeCun calls his "main original contributions:" neural nets that learn multiple time scales and levels of abstraction, generate subgoals, use intrinsic motivation to improve world models, and plan (1990); controllers that learn informative predictable representations (1997), etc. This was also discussed on Hacker News, reddit, and in the media. See tweet1. LeCun also listed the "5 best ideas 2012-2022" without mentioning that most of them are from Schmidhuber's lab, and older. See tweet2.

[LEC22a]

Y. LeCun (27 June 2022). A Path Towards Autonomous Machine Intelligence. OpenReview Archive. Link. See critique [LEC].

[LSTM0]

S. Hochreiter and J. Schmidhuber. Long Short-Term Memory. TR FKI-207-95, TUM, August 1995. PDF.

[LSTM1a]

S. Hochreiter and J. Schmidhuber. LSTM can solve hard long time lag problems. Proceedings of the 9th International Conference on Neural Information Processing Systems (NIPS'96). Cambridge, MA, USA, MIT Press, p. 473-479.

[LSTM1]

S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF. Based on [LSTM0]. More.

[LSTM2]

F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000. PDF. The "vanilla LSTM architecture" with forget gates that everybody is using today, e.g., in Google's Tensorflow.

[LSTM3]

A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005. PDF.

[LSTM4]

S. Fernandez, A. Graves, J. Schmidhuber. An application of recurrent neural networks to discriminative keyword spotting. Intl. Conf. on Artificial Neural Networks ICANN'07, 2007. PDF.

[LSTM5]

A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009. PDF.

[LSTM6]

A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. NIPS'22, p 545-552, Vancouver, MIT Press, 2009. PDF.

[LSTM7]

J. Bayer, D. Wierstra, J. Togelius, J. Schmidhuber. Evolving memory cell structures for sequence learning. Proc. ICANN-09, Cyprus, 2009. PDF.

[MIR] J. Schmidhuber (Oct 2019, updated 2021, 2022, 2025). Deep Learning: Our Miraculous Year 1990-1991. Preprint arXiv:2005.05744. The Deep Learning Artificial Neural Networks (NNs) of our team have revolutionised Machine Learning & AI. Many of the basic ideas behind this revolution were published within the 12 months of our "Annus Mirabilis" 1990-1991 at our lab in TU Munich. Back then, few people were interested. But a quarter century later, NNs based on our "Miraculous Year" were on over 3 billion devices, and used many billions of times per day, consuming a significant fraction of the world's compute. In particular, in 1990-91, we laid foundations of Generative AI, publishing principles of (1) Generative Adversarial Networks for Artificial Curiosity and Creativity (now used for deepfakes), (2) Transformers (the T in ChatGPT; see the 1991 Unnormalized Linear Transformer), (3) Pre-training for deep NNs (see the P in ChatGPT), (4) NN distillation (key for DeepSeek), and (5) recurrent World Models for Reinforcement Learning and Planning in partially observable environments. The year 1991 also marks the emergence of the defining features of (6) LSTM, the most cited AI paper of the 20th century (based on constant error flow through residual NN connections), and (7) ResNet, the most cited AI paper of the 21st century, based on our LSTM-inspired Highway Net that was 10 times deeper than previous feedforward NNs.

[MOST] J. Schmidhuber (AI Blog, 2021, updated 2025). The most cited neural networks all build on work done in my labs: 1. Long Short-Term Memory (LSTM), the most cited AI of the 20th century. 2. ResNet (open-gated Highway Net), the most cited AI of the 21st century. 3. AlexNet & VGG Net (the similar but earlier DanNet of 2011 won 4 image recognition challenges before them). 4. GAN (an instance of Adversarial Artificial Curiosity of 1990). 5. Transformer variants (see the 1991 unnormalised linear Transformer, ULTRA). Foundations of Generative AI were published in 1991: the principles of GANs (now used for deepfakes), Transformers (the T in ChatGPT), Pre-training for deep NNs (the P in ChatGPT), and NN distillation (key for the famous DeepSeek). See the tweet.

[MOST25] H. Pearson, H. Ledford, M. Hutson, R. Van Noorden. Exclusive: the most-cited papers of the twenty-first century. Nature, 15 April 2025.

[MOST25b] R. Van Noorden. Science’s golden oldies: the decades-old research papers still heavily cited today. Nature, 15 April 2025.

[MOST26] J. Schmidhuber. The two most frequently cited papers of all time are based on our 1991 work. Technical Note IDSIA-1-26, January 2026.

[MUN87] P. W. Munro. A dual back-propagation scheme for scalar reinforcement learning. Proceedings of the Ninth Annual Conference of the Cognitive Science Society, Seattle, WA, pages 165-176, 1987.

[NAI] NNAISENSE, the AGI company for AI in the physical world, founded in 2014, based on neural network world models. J. Schmidhuber was its President and Chief Scientist. See the 2020 NNAISENSE web page in the Internet Archive. (Lately, however, NNAISENSE has become less AGI-focused and more specialised, with a focus on asset management.)

[NGU89] D. Nguyen and B. Widrow. The truck backer-upper: An example of self learning in neural networks. In IEEE/INNS International Joint Conference on Neural Networks, Washington, D.C., volume 1, pages 357-364, 1989.

[PLAN] J. Schmidhuber (AI Blog, 2020). 30-year anniversary of planning & reinforcement learning with recurrent world models and artificial curiosity (1990). This work also introduced high-dimensional reward signals, deterministic policy gradients for RNNs, and the GAN principle (widely used today). Agents with adaptive recurrent world models even suggest a simple explanation of consciousness & self-awareness.

[PLAN1] J. Schmidhuber. Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990. PDF. The first paper on long-term planning with self-supervised reinforcement learning recurrent neural networks (NNs) and recurrent predictive world models (more), and on generative adversarial networks where a generator NN is fighting a predictor NN in a minimax game (more). Apparently, it was also the first paper of this kind to use the term "world model" for the predictor NN (although the basic concept of a world model is much older than that).

[PLAN2] J. Schmidhuber. An on-line algorithm for dynamic reinforcement learning and planning in reactive environments. Proc. IEEE/INNS International Joint Conference on Neural Networks, San Diego, volume 2, pages 253-258, June 17-21, 1990. Based on TR FKI-126-90 (1990) [PLAN1]. More.

[PLAN3] J. Schmidhuber. Reinforcement learning in Markovian and non-Markovian environments. In R. P. Lippmann, J. E. Moody, and D. S. Touretzky, editors, Advances in Neural Information Processing Systems 3, NIPS'3, pages 500-506. San Mateo, CA: Morgan Kaufmann, 1991. PDF. Partially based on [PLAN1].

[PLAN4] J. Schmidhuber. On Learning to Think: Algorithmic Information Theory for Novel Combinations of Reinforcement Learning Controllers and Recurrent Neural World Models. Report arXiv:1511.09249 [cs.AI], 2015. This paper went beyond the inefficient millisecond by millisecond planning of 1990 [PLAN1], addressing planning and reasoning in abstract concept spaces. The controller C became an RL prompt engineer that learns to create a chain of thought: to speed up RL, C learns to query its world model for abstract reasoning and decision making.

[PLAN5] J. Schmidhuber. One Big Net For Everything. Preprint arXiv:1802.08864 [cs.AI], Feb 2018. This paper collapsed the control network and the world model network of [PLAN4] into a single One Big Net for everything, using my neural distillation procedure of 1991 [UN0-1]. Apparently, this is what DeepSeek used to shock the stock market in 2025.

[PLAN6] D. Ha, J. Schmidhuber. Recurrent World Models Facilitate Policy Evolution. Advances in Neural Information Processing Systems (NIPS), Montreal, 2018. (Talk.) Preprint: arXiv:1809.01999. Github: World Models.

[PM0] J. Schmidhuber. Learning factorial codes by predictability minimization. TR CU-CS-565-91, Univ. Colorado at Boulder, 1991. PDF.

More.

[PM1] J. Schmidhuber. Learning factorial codes by predictability minimization. Neural Computation, 4(6):863-879, 1992. PDF.

More.

[PM2] J. Schmidhuber, M. Eldracher, B. Foltin. Semilinear predictability minimization produces well-known feature detectors. Neural Computation, 8(4):773-786, 1996.

PDF. More.

[PMAX] J. Schmidhuber and D. Prelinger. Discovering predictable classifications. Neural Computation, 5(4):625-635, 1993. Based on TR CU-CS-626-92 (1992). PDF.

[RAW] J. Schmidhuber (AI Blog, 2001). Raw Computing Power.

[ULTRA] References on the 1991 unnormalized linear Transformer (ULTRA): original tech report (March 1991) [FWP0]. Journal publication (1992) [FWP1]. Recurrent ULTRA extension (1993) introducing the terminology of learning "internal spotlights of attention" [FWP2]. Modern "quadratic" Transformer (2017: "attention is all you need") scaling quadratically in input size [TR1]. 2020 paper [TR5] using the terminology "linear Transformer" for a more efficient Transformer variant that scales linearly, leveraging linearized attention [TR5a]. 2021 paper [FWP6] pointing out that ULTRA dates back to 1991 [FWP0] when compute was a million times more expensive. Overview of ULTRA and other Fast Weight Programmers (2021) [FWP]. See the T in ChatGPT! See also surveys [DLH][DLP], 2022 tweet for ULTRA's 30-year anniversary, and 2024 tweet.

[UN] J. Schmidhuber (AI Blog, 2021, updated 2025). 1991: First very deep learning with unsupervised pre-training (see the P in ChatGPT). First neural network distillation (key for DeepSeek). Unsupervised hierarchical predictive coding (with self-supervised target generation) finds compact internal representations of sequential data to facilitate downstream deep learning. The hierarchy can be distilled into a single deep neural network (suggesting a simple model of conscious and subconscious information processing). 1993: solving problems of depth >1000.

[UN0] J. Schmidhuber. Neural sequence chunkers. Technical Report FKI-148-91, Institut für Informatik, Technische Universität München, April 1991. PDF. Unsupervised/self-supervised pre-training for deep neural networks (see the P in ChatGPT) and predictive coding are used in a deep hierarchy of recurrent nets (RNNs) to find compact internal representations of long sequences of data, across multiple time scales and levels of abstraction. Each RNN tries to solve the pretext task of predicting its next input, sending only unexpected inputs to the next RNN above. The resulting compressed sequence representations greatly facilitate downstream supervised deep learning such as sequence classification. By 1993, the approach solved problems of depth 1000 [UN2] (requiring 1000 subsequent computational stages/layers; the more such stages, the deeper the learning). A variant collapses the hierarchy into a single deep net. It uses a so-called conscious chunker RNN which attends to unexpected events that surprise a lower-level so-called subconscious automatiser RNN. The chunker learns to understand the surprising events by predicting them. The automatiser uses a neural knowledge distillation procedure (key for the famous 2025 DeepSeek) to compress and absorb the formerly conscious insights and behaviours of the chunker, thus making them subconscious. The systems of 1991 allowed for much deeper learning than previous methods.

[UN1] J. Schmidhuber. Learning complex, extended sequences using the principle of history compression. Neural Computation, 4(2):234-242, 1992. Based on TR FKI-148-91, TUM, 1991 [UN0]. PDF. First working Deep Learner based on a deep RNN hierarchy (with different self-organising time scales), overcoming the vanishing gradient problem through unsupervised pre-training of deep NNs (see the P in ChatGPT) and predictive coding (with self-supervised target generation). Also: compressing or distilling a teacher net (the chunker) into a student net (the automatiser) that does not forget its old skills; such approaches are now widely used, e.g., by DeepSeek. See also this tweet. More.

[UN2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF. An ancient experiment on "Very Deep Learning" with credit assignment across 1200 time steps or virtual layers and unsupervised / self-supervised pre-training for a stack of recurrent NNs can be found here (depth > 1000). See also Sec. 5.5 on "Vorhersagbarkeitsmaximierung" (Predictability Maximization).

[UN3] J. Schmidhuber, M. C. Mozer, and D. Prelinger. Continuous history compression. In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors, Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95. Augustinus, 1993.

[UNI] Theory of Universal Learning Machines & Universal AI. Work of Marcus Hutter (in the early 2000s) on J. Schmidhuber's SNF project 20-61847: Unification of universal induction and sequential decision theory.

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J. Schmidhuber). PDF. More on the Fundamental Deep Learning Problem.

[WER87] P. J. Werbos. Building and understanding adaptive systems: A statistical/numerical approach to factory automation and brain research. IEEE Transactions on Systems, Man, and Cybernetics, 17, 1987.

[WER89] P. J. Werbos. Backpropagation and neurocontrol: A review and prospectus. In IEEE/INNS International Joint Conference on Neural Networks, Washington, D.C., volume 1, pages 209-216, 1989.

[WHO4] J. Schmidhuber. Who invented artificial neural networks? Technical Note IDSIA-15-25, IDSIA, Switzerland, Nov 2025.

[WHO5] J. Schmidhuber. Who invented deep learning? Technical Note IDSIA-16-25, IDSIA, Switzerland, Nov 2025.

[WHO6] J. Schmidhuber (AI Blog, 2014; updated 2025). Who invented backpropagation? See also LinkedIn post.

[WHO7] J. Schmidhuber. Who invented convolutional neural networks? Technical Note IDSIA-17-25, IDSIA, Switzerland, 2025. See popular tweet.

[WHO8] J. Schmidhuber. Who Invented Generative Adversarial Networks? Technical Note IDSIA-14-25, IDSIA, Switzerland, Dec 2025.

[WHO9] J. Schmidhuber. Who invented knowledge distillation with artificial neural networks? Technical Note IDSIA-12-25, IDSIA, Nov 2025.

[WHO10] J. Schmidhuber. Who Invented Transformer Neural Networks? Technical Note IDSIA-11-25, IDSIA, Switzerland, Nov 2025.

[WHO11] J. Schmidhuber. Who Invented Deep Residual Learning? Technical Report IDSIA-09-25, IDSIA, Switzerland, Sept 2025. Preprint arXiv:2509.24732.

[WHO12] J. Schmidhuber. Who invented JEPA? Technical Note IDSIA-3-26, IDSIA, Switzerland, March 2026.

[WM26] J. Schmidhuber. The Neural World Model Boom. Technical Note IDSIA-2-26, 4 Feb 2026 (updated April 2026).

[WM26b] J. Schmidhuber. Simple but powerful ways of using world models and their latent space. Opening Keynote at the World Modeling Workshop, Agora, Mila - Quebec AI Institute, 4 Feb 2026. Also on YouTube (starts around 10:44). See video tweet.