There are tens of millions of research papers spanning many scientific disciplines.

According to Google Scholar (2025), the two most frequently cited papers of all time (measured by the most citations received within 3 years, manuals excluded) are both about deep artificial neural networks (NNs).[DLH][WHO4-11] At the current growth rate, perhaps they will soon be the two most-cited papers ever, period. (See Footnote 1.)

Both articles are directly based on what Juergen Schmidhuber's team published 35 years ago in their Miraculous Year[MIR] at TU Munich between March and June of 1991, when compute was about 10 million times more expensive than today.

1.

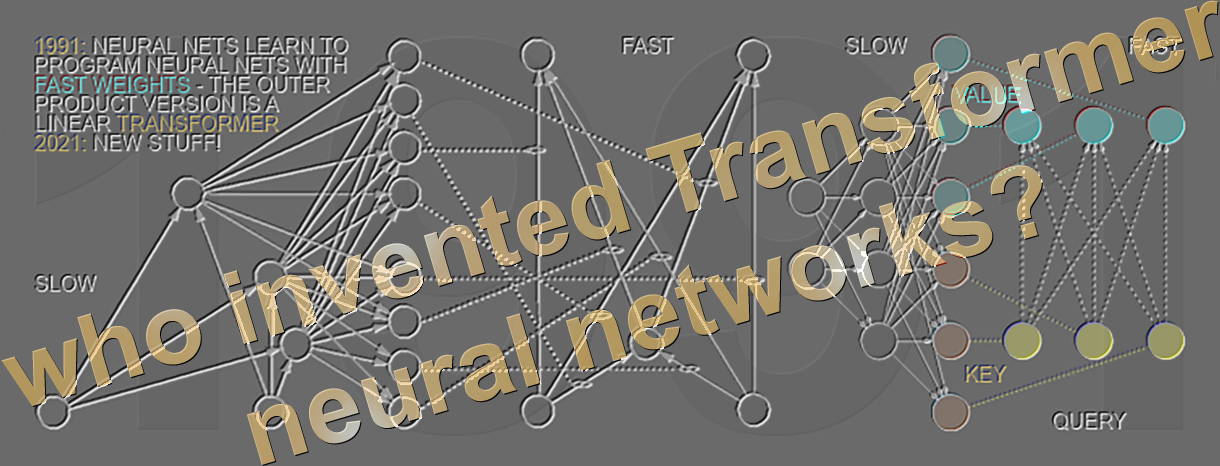

One of the two papers is about a neural network (NN) called Transformer (see the T in ChatGPT). From 2023 to 2025, it received over 157,000 Google Scholar citations. It's a type of Fast Weight Programmer based on principles of Schmidhuber's Unnormalized Linear Transformer (ULTRA) published in March 1991 (using different terminology, e.g., KEY/VALUE was called FROM/TO).

See details in the technical report: Who invented Transformer neural networks?[WHO10][DLH]
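The link between fast weights and attention without softmax can be illustrated with a minimal sketch. It is not code from the 1991 report; the function names are hypothetical, and it only assumes the standard formulation in which a fast weight matrix is built from outer products of value (TO) and key (FROM) vectors. Under that assumption, applying the fast weight matrix to a query gives the same result as summing the values weighted by unnormalized key-query dot products.

```python
def fast_weight_output(keys, values, query):
    """Build a fast weight matrix W = sum_i outer(v_i, k_i), then return W @ query."""
    d_v, d_k = len(values[0]), len(keys[0])
    W = [[0.0] * d_k for _ in range(d_v)]
    for k, v in zip(keys, values):
        for i in range(d_v):
            for j in range(d_k):
                W[i][j] += v[i] * k[j]  # additive outer-product update
    return [sum(w * q for w, q in zip(row, query)) for row in W]


def linear_attention_output(keys, values, query):
    """Unnormalized linear attention: sum_i (k_i . query) * v_i, with no softmax."""
    out = [0.0] * len(values[0])
    for k, v in zip(keys, values):
        score = sum(a * b for a, b in zip(k, query))  # raw dot-product score
        for i in range(len(out)):
            out[i] += score * v[i]
    return out


# Toy data (made up for illustration only).
keys = [[1, 0], [0, 1], [1, 1]]
values = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
query = [2, 3]

print(fast_weight_output(keys, values, query))
print(linear_attention_output(keys, values, query))
```

Both calls print the same vector, because the two functions compute the same sum in a different order.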

2. The other paper is about deep residual learning with NNs. From 2023 to 2025, it received over 150,000 Google Scholar citations. Deep residual learning with residual networks was invented and further developed between June 1991 and May 2015, for both recurrent NNs (RNNs) and feedforward NNs (FNNs), by Schmidhuber's students Sepp Hochreiter, Felix Gers, Alex Graves, Rupesh Kumar Srivastava & Klaus Greff.

The deep residual RNN called LSTM became the most-cited AI of the 20th century, a deep residual FNN variant the most-cited AI of the 21st.[MOST]

See details in the technical report: Who invented deep residual learning?[WHO11]

What are the odds of the two most frequently cited papers ever being both rooted in the same place and time at TUM? One in a quadrillion or so? Much higher than that! Why? In 1990, very deep NNs couldn't learn much. I desperately wanted to solve this problem, even though few researchers cared about it; in other words, there wasn't much international competition. We tried several approaches, some of them conceptually related, and in fact, the successful methods of March 1991 and June 1991 underpinning the two papers are not totally independent, as pointed out in Sec. 5 of [FWP]: they represent dual ways of overcoming the fundamental deep learning problem of vanishing gradients identified in June 1991. In retrospect, given the goal, we had almost no choice but to stumble across such related solutions!
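Why a residual connection helps against vanishing gradients can be seen in a toy scalar example. This is only a sketch under simplifying assumptions (one unit per layer, a shared weight `w`, tanh activations, hypothetical function names); it is not the LSTM or Highway Net. In a plain chain, each layer multiplies the backpropagated gradient by a factor of at most `|w|`, so with `|w| < 1` the gradient shrinks exponentially with depth. With an identity skip connection and `w > 0`, each factor is at least 1, so the gradient cannot vanish.

```python
import math


def plain_chain_gradient(w, h0, depth):
    """Gradient d h_depth / d h_0 for the plain chain h <- tanh(w * h)."""
    h, grad = h0, 1.0
    for _ in range(depth):
        t = math.tanh(w * h)
        grad *= w * (1.0 - t * t)  # local derivative of tanh(w * h)
        h = t
    return grad


def residual_chain_gradient(w, h0, depth):
    """Gradient d h_depth / d h_0 for the residual chain h <- h + tanh(w * h)."""
    h, grad = h0, 1.0
    for _ in range(depth):
        t = math.tanh(w * h)
        grad *= 1.0 + w * (1.0 - t * t)  # identity path contributes the 1.0
        h = h + t
    return grad


# Illustrative settings (assumptions, not taken from the article).
print(plain_chain_gradient(0.5, 1.0, 50))     # shrinks towards zero with depth
print(residual_chain_gradient(0.5, 1.0, 50))  # stays at or above 1
```

The example uses a positive weight so that every residual factor is at least 1; with arbitrary weights the residual gradient can still grow or shrink, but the identity path always offers a route along which the error signal is not attenuated.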

Other milestones of 1991:

April: pre-training for deep NNs[DLH] (the P in ChatGPT), yet another way of overcoming the vanishing gradient problem (identified a few months later).

April: NN distillation (central to the famous 2025 DeepSeek).[WHO9][DLH]

February: first peer-reviewed paper on generative adversarial networks (GANs) for world models and artificial curiosity.[WHO8][DLH] Much later, a 2014 paper on GANs became the most-cited paper of the "most-cited living scientist."

In 1991, few people expected these ideas to shape modern AI and the world's most valuable companies.[DLH] Many didn't even notice that 1991 was the only palindromic year of the 20th century.

Footnote 1

The two papers have competition in the form of a highly cited psychology paper.[PSY06]

It should also be mentioned that various databases tracking academic citations look at different document sets and differ in citation numbers.[MOST25,25b] Some include highly cited manuals.[RMAN][PSY13]

Some papers have numerous co-authors, and many researchers have pointed out that citation rankings should be normalized to take this into account. The paper with the most Google Scholar citations per co-author (over 300,000) is still the one by Swiss biologist Ulrich Laemmli (1970).[LAE]

Generally speaking, however, be skeptical about citation rankings!

In 2011, Schmidhuber wrote in "Citation bubble about to burst?":[NAT1]

"Like the less-than-worthless collateralized debt obligations that drove the recent financial bubble, and unlike concrete goods and real exports, citations are easy to print and inflate. Financial deregulation led to short-term incentives for bankers and rating agencies to overvalue their CDOs, bringing down entire economies. Likewise, today's academic rankings provide an incentive for professors to maximize citation counts instead of scientific progress [...] We may already be in the middle of a citation bubble—witness how relatively unknown scientists can now collect more citations than the most influential founders of their fields [...] Note that I write from the country with the most citations per capita and per scientist."[NAT1]

Finally, one should not cite certain papers just because many others are citing them! Don't fall prey to the ancient idiom: “Eat shit—a billion flies can’t be wrong!”

Acknowledgments

Thanks to several expert reviewers (including neural network pioneers) for useful comments. (Let me know under juergen@idsia.ch if you can spot any remaining error.)

The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.


References

[DLH]

J. Schmidhuber. Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Switzerland, 2022, updated 2025. Preprint arXiv:2212.11279.

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021, updated 2025). 26 March 1991: Neural nets learn to program neural nets with fast weights, like Transformer variants. 2021: New stuff!

[LAE]

U. K. Laemmli. Cleavage of structural proteins during the assembly of the head of bacteriophage T4. Nature, 227 (5259):680-685, 1970.

[MIR]

J. Schmidhuber (Oct 2019, updated 2021, 2022, 2025). Deep Learning: Our Miraculous Year 1990-1991. Preprint arXiv:2005.05744. The Deep Learning Artificial Neural Networks (NNs) of our team have revolutionised Machine Learning & AI. Many of the basic ideas behind this revolution were published within the 12 months of our "Annus Mirabilis" 1990-1991 at our lab in TU Munich. Back then, few people were interested. But a quarter century later, NNs based on our "Miraculous Year" were on over 3 billion devices, and used many billions of times per day, consuming a significant fraction of the world's compute. In particular, in 1990-91, we laid foundations of Generative AI, publishing principles of (1) Generative Adversarial Networks for Artificial Curiosity and Creativity (now used for deepfakes), (2) Transformers (the T in ChatGPT; see the 1991 Unnormalized Linear Transformer), (3) Pre-training for deep NNs (see the P in ChatGPT), (4) NN distillation (key for DeepSeek), and (5) recurrent World Models for Reinforcement Learning and Planning in partially observable environments. The year 1991 also marks the emergence of the defining features of (6) LSTM, the most cited AI paper of the 20th century (based on constant error flow through residual NN connections), and (7) ResNet, the most cited AI paper of the 21st century, based on our LSTM-inspired Highway Net that was 10 times deeper than previous feedforward NNs.

[MOST]

J. Schmidhuber (AI Blog, 2021, updated 2025). The most cited neural networks all build on work done in my labs: 1. Long Short-Term Memory (LSTM), the most cited AI of the 20th century. 2. ResNet (open-gated Highway Net), the most cited AI of the 21st century. 3. AlexNet & VGG Net (the similar but earlier DanNet of 2011 won 4 image recognition challenges before them). 4. GAN (an instance of Adversarial Artificial Curiosity of 1990). 5. Transformer variants—see the 1991 unnormalised linear Transformer (ULTRA). Foundations of Generative AI were published in 1991: the principles of GANs (now used for deepfakes), Transformers (the T in ChatGPT), Pre-training for deep NNs (the P in ChatGPT), NN distillation, and the famous DeepSeek—see the tweet.

[MOST25]

H. Pearson, H. Ledford, M. Hutson, R. Van Noorden. Exclusive: the most-cited papers of the twenty-first century. Nature, 15 April 2025.

[MOST25b]

R. Van Noorden. Science’s golden oldies: the decades-old research papers still heavily cited today. Nature, 15 April 2025.

[MOST26]

J. Schmidhuber. The two most frequently cited papers of all time are based on our 1991 work. Technical Note IDSIA-1-26, January 2026.

[NAT1]

J. Schmidhuber. Citation bubble about to burst? Nature, vol. 469, p. 34, 6 January 2011.

[PSY06]

V. Braun & V. Clarke. Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2):77-101, 2006.

[PSY13]

American Psychiatric Association (2013). Diagnostic and statistical manual of mental disorders.

[RMAN]

R Core Team. R: A Language and Environment for Statistical Computing. Manual of the R Foundation for Statistical Computing, Vienna, Austria, https://www.R-project.org

[WHO4]

J. Schmidhuber. Who invented artificial neural networks? Technical Note IDSIA-15-25, IDSIA, Switzerland, Nov 2025.

[WHO5]

J. Schmidhuber. Who invented deep learning? Technical Note IDSIA-16-25, IDSIA, Switzerland, Nov 2025.

[WHO6]

J. Schmidhuber (AI Blog, 2014; updated 2025). Who invented backpropagation?

[WHO7]

J. Schmidhuber. Who invented convolutional neural networks? Technical Note IDSIA-17-25, IDSIA, Switzerland, 2025.

[WHO8]

J. Schmidhuber. Who Invented Generative Adversarial Networks? Technical Note IDSIA-14-25, IDSIA, Switzerland, Dec 2025.

[WHO9]

J. Schmidhuber. Who invented knowledge distillation with artificial neural networks? Technical Note IDSIA-12-25, IDSIA, Nov 2025.

[WHO10]

J. Schmidhuber. Who Invented Transformer Neural Networks? Technical Note IDSIA-11-25, IDSIA, Switzerland, Nov 2025.

[WHO11]

J. Schmidhuber. Who Invented Deep Residual Learning? Technical Report IDSIA-09-25, IDSIA, Switzerland, Sept 2025. Preprint arXiv:2509.24732.