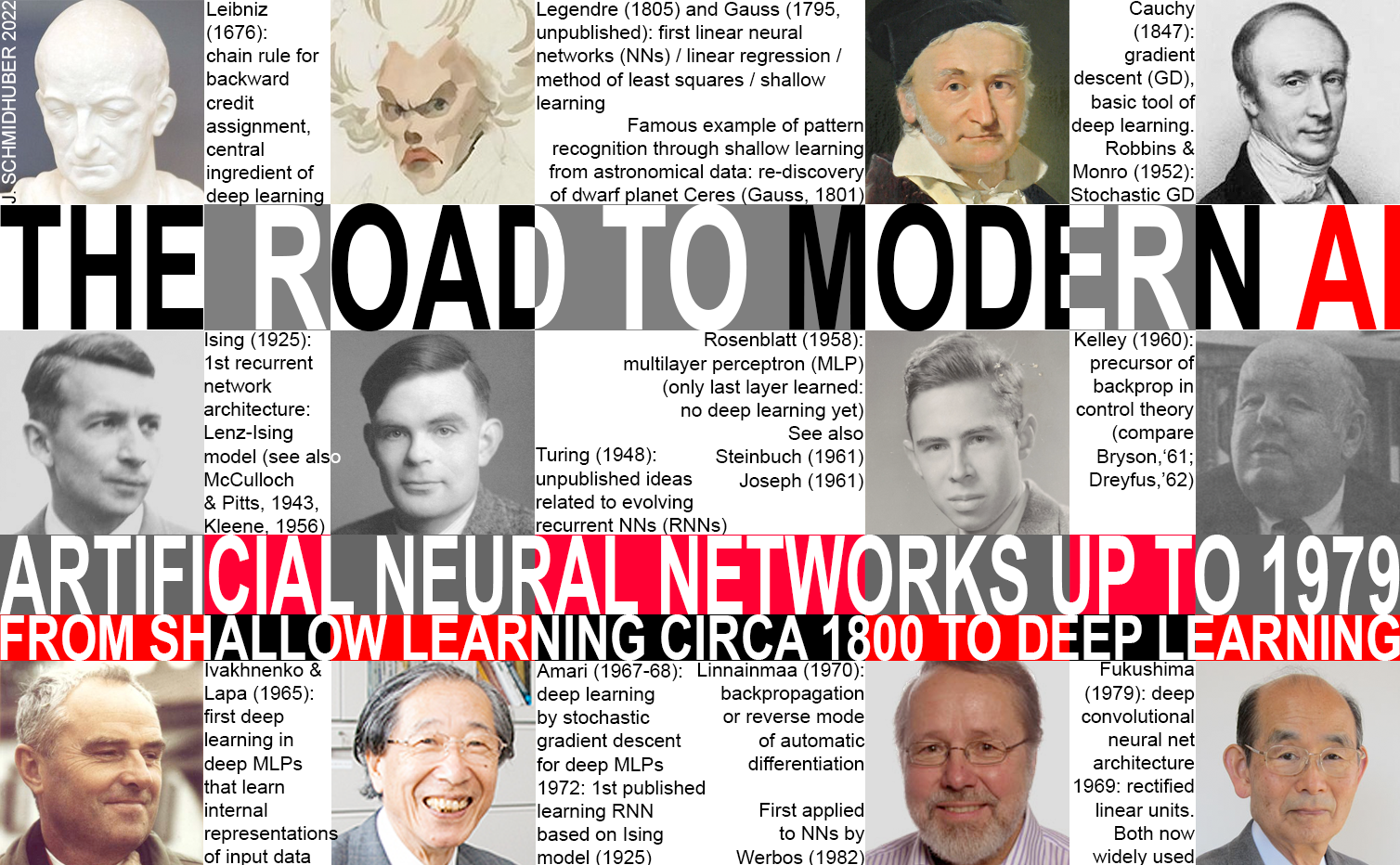

Modern Artificial Intelligence (AI) is based on learning Artificial Neural Networks[DLH] (NNs).

Who invented them?

Biological neural nets were discovered in the 1880s.[CAJ88-06] The term "neuron" was coined in 1891.[CAJ06]

Many think that artificial neural nets were developed after that. But that's not the case: the first "modern" NNs with 2 layers of units were invented over 2 centuries ago (1795-1805) by Adrien-Marie Legendre (1805) and Johann Carl Friedrich Gauss (1795, unpublished), [STI81] when compute was many trillions of times more expensive than in 2025.

True, the terminology of artificial neural nets was introduced only much later in the 1900s. For example, certain non-learning NNs were discussed in 1943[MC43] and formally analyzed in 1956.[K56] Informal thoughts about a simple NN learning rule were published in 1948[HEB48] and 1949.[HEB49]

Evolutionary computation[EVO1-7] for NNs[EVONN1-3] was mentioned in an unpublished 1948 report.[TUR1]

Various concrete learning NNs were published in 1958,[R58] 1961,[R61][ST61-95]

and 1962.[WID62]

See also the 1959 Pandemonium.[SE59]

True, the terminology of artificial neural nets was introduced only much later in the 1900s. For example, certain non-learning NNs were discussed in 1943[MC43] and formally analyzed in 1956.[K56] Informal thoughts about a simple NN learning rule were published in 1948[HEB48] and 1949.[HEB49]

Evolutionary computation[EVO1-7] for NNs[EVONN1-3] was mentioned in an unpublished 1948 report.[TUR1]

Various concrete learning NNs were published in 1958,[R58] 1961,[R61][ST61-95]

and 1962.[WID62]

See also the 1959 Pandemonium.[SE59]

However, while these NN papers of the mid 1900s are of historical interest, they have actually less to do with modern AI than the much older adaptive NN by Gauss & Legendre, still heavily used today, the very foundation of all NNs, including the recent

deeper NNs.

The Gauss-Legendre NN from over 2 centuries ago[NN25][DLH] has an input layer with several input units, and an output layer. For simplicity, let's assume the latter consists of a single output unit. Each input unit can hold a real-valued number and is connected to the output unit by a connection with a real-valued weight. The NN's output is the sum of the products of the inputs and their weights. Given a training set of input vectors and desired target values for each of them, the NN weights are adjusted such that the sum of the squared errors between the NN outputs and the corresponding targets is minimized.[DLH] Now the NN can be used to process previously unseen test data.

Of course, back then this was not called an NN, because people didn't even know about biological neurons yet—the first microscopic image of a nerve cell was created decades later by Valentin in 1836, and the term "neuron" was coined by Waldeyer in 1891.[CAJ06] Instead, the technique was called the Method of Least Squares, also widely known in statistics as Linear Regression. But it is mathematically identical to today's linear 2-layer NNs: same basic algorithm, same error function, same adaptive parameters/weights. Such simple NNs perform "shallow learning," as opposed to "deep learning" with many nonlinear layers.[DL25] In fact, many modern NN courses start by introducing this method, then move on to more complex, deeper NNs.[DLH]

Even the applications of the early 1800s were similar to today's: learn to predict the next element of a sequence, given previous elements. That's what ChatGPT does!

The first famous example of pattern recognition through an NN dates back over 200 years: the rediscovery of the dwarf planet Ceres in 1801 through Gauss, who collected noisy data points from previous astronomical observations, then used them to adjust the parameters of a predictor, which essentially learned to generalise from the training data to correctly predict the new location of Ceres. That's what made the young Gauss famous.[DLH]

The old Gauss-Legendre NNs are still being used today in innumerable applications. What's the main difference to the NNs used in some of the impressive AI applications since the 2010s? The latter are typically much deeper and have many intermediate layers of learning "hidden" units. Who invented this? Short answer: Ivakhnenko & Lapa (1965).[DEEP1-2] Others refined this.[DLH]

See also: who invented deep learning?[DL25]

Some people still believe that modern NNs were somehow inspired by the biological brain. But that's simply not true: decades before biological nerve cells were discovered, plain engineering and mathematical problem solving already led to what's now called NNs. In fact, in the past 2 centuries, not so much has changed in AI research: as of 2025, NN progress is still mostly driven by engineering, not by neurophysiological insights. (Certain exceptions dating back many decadese.g.,[CN25] confirm the rule.)

Footnote 1.

In 1958, simple NNs in the style of Gauss & Legendre were combined with an output threshold function to obtain pattern classifiers called Perceptrons.[R58][R61][DLH] Astonishingly, the authors[R58][R61] seemed unaware of the much earlier NN (1795-1805) famously known in the field of statistics as "method of least squares" or "linear regression."

Remarkably, today's most frequently used 2-layer NNs are those of Gauss & Legendre, not those of the 1940s[MC43] and 1950s[R58] (which were not even differentiable)!

Footnote 2. Today, students of all technical disciplines are required to take math classes, in particular, analysis, linear algebra, and statistics. In all of these fields, essential results and methods are (at least partially) due to Gauss: the fundamental theorem of algebra, Gauss elimination, the Gaussian distribution of statistics, etc. The so-called "greatest mathematician since antiquity" also pioneered differential geometry, number theory (his favorite subject), and non-Euclidean geometry. Furthermore, he made major contributions to astronomy and physics. Modern engineering including AI would be unthinkable without his results.

Acknowledgments

Thanks to several expert reviewers for useful comments. (Let me know under juergen@idsia.ch if you can spot any remaining error.)

The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Thanks to several expert reviewers for useful comments. (Let me know under juergen@idsia.ch if you can spot any remaining error.)

The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

References

[BP4] J. Schmidhuber (AI Blog, 2014; updated 2025).

Who invented backpropagation?

See also LinkedIn post (2025).

[CAJ88]

S. R. Cajal.

Estructura de los centros nerviosos de las aves.

Rev. Trim. Histol. Norm. Patol., 1 (1888), pp. 1-10.

[CAJ88b]

S. R. Cajal.

Sobre las fibras nerviosas de la capa molecular del cerebelo.

Rev. Trim. Histol. Norm. Patol., 1 (1888), pp. 33-49.

[CAJ89]

Conexión general de los elementos nerviosos.

Med. Práct., 2 (1889), pp. 341-346.

[CAJ06]

F. López-Muñoz, J. Boya b, C. Alamo (2006).

Neuron theory, the cornerstone of neuroscience, on the centenary of the Nobel Prize award to Santiago Ramón y Cajal.

Brain Research Bulletin,

Volume 70, Issues 4–6, 16 October 2006, Pages 391-405.

[CN25]

J. Schmidhuber (AI Blog, 2025).

Who invented convolutional neural networks? See popular tweet.

[DEEP1]

Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation. First working Deep Learners with many layers, learning internal representations.

[DEEP1a]

Ivakhnenko, Alexey Grigorevich. The group method of data of handling; a rival of the method of stochastic approximation. Soviet Automatic Control 13 (1968): 43-55.

[DEEP2]

Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[DL1] J. Schmidhuber, 2015.

Deep learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

Got the first Best Paper Award ever issued by the journal Neural Networks, founded in 1988.

[DL25]

J. Schmidhuber (AI Blog, 2025). Who invented deep learning? Technical Note IDSIA-16-25, IDSIA, November 2025.

[DLH]

J. Schmidhuber.

Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Lugano, Switzerland, 2022.

Preprint arXiv:2212.11279.

Tweet of 2022.

[DLP]

J. Schmidhuber.

How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023.

Tweet of 2023.

[ELM1]

G.-B. Huang, Q.-Y. Zhu, and C.-K. Siew. Extreme learning machine: A new learning scheme of feedforward neural networks. Proc. IEEE Int. Joint Conf. on Neural Networks, Vol. 2, 2004, pp. 985-990. This paper does not mention that the "ELM" concept goes back to Rosenblatt's work in the 1950s.[R62][DLP]

[ELM2]

ELM-ORIGIN, 2004.

The Official Homepage on Origins of Extreme Learning Machines (ELM).

"Extreme Learning Machine Duplicates Others' Papers from 1988-2007."

Local copy.

This overview does not mention that the "ELM" concept goes back to Rosenblatt's work in the 1950s.[R62][DLP]

[EVO1]

N. A. Barricelli. Esempi numerici di processi di evoluzione. Methodos: 45-68, 1954.

Possibly the first publication on artificial evolution.

[EVO2]

L. Fogel, A. Owens, M. Walsh. Artificial Intelligence through Simulated Evolution.

Wiley, New York, 1966.

[EVO3] I. Rechenberg. Evolutionsstrategie—Optimierung technischer Systeme nach Prinzipien der biologischen Evolution. Dissertation, 1971.

[EVO4] H. P. Schwefel. Numerische Optimierung von Computer-Modellen. Dissertation, 1974.

[EVO5] J. H. Holland. Adaptation in Natural and Artificial Systems. University of Michigan Press, Ann Arbor, 1975.

[EVO6] S. F. Smith. A Learning System Based on Genetic Adaptive Algorithms, PhD Thesis, Univ. Pittsburgh, 1980

[EVO7] N. L. Cramer. A representation for the adaptive generation of simple sequential programs. In J. J. Grefenstette, editor, Proceedings of an International Conference on Genetic Algorithms and Their Applications, Carnegie-Mellon University, July 24-26, 1985, Hillsdale NJ, 1985. Lawrence Erlbaum Associates.

[EVONN1]

Montana, D. J. and Davis, L. (1989). Training feedforward neural networks using genetic algorithms.

In Proceedings of the 11th International Joint Conference on Artificial Intelligence (IJCAI)—Volume

1, IJCAI'89, pages 762–767, San Francisco, CA, USA. Morgan Kaufmann Publishers Inc.

[EVONN2]

Miller, G., Todd, P., and Hedge, S. (1989). Designing neural networks using genetic algorithms. In

Proceedings of the 3rd International Conference on Genetic Algorithms, pages 379–384. Morgan

Kauffman.

[EVONN3] H. Kitano. Designing neural networks using genetic algorithms with graph generation system. Complex Systems, 4:461-476, 1990.

[GD']

C. Lemarechal. Cauchy and the Gradient Method. Doc Math Extra, pp. 251-254, 2012.

[GD'']

J. Hadamard. Memoire sur le probleme d'analyse relatif a Vequilibre des plaques elastiques encastrees. Memoires presentes par divers savants estrangers à l'Academie des Sciences de l'Institut de France, 33, 1908.

[GDa]

Y. Z. Tsypkin (1966). Adaptation, training and self-organization automatic control systems,

Avtomatika I Telemekhanika, 27, 23-61.

On gradient descent-based on-line learning for non-linear systems.

[GDb]

Y. Z. Tsypkin (1971). Adaptation and Learning in Automatic Systems, Academic Press, 1971.

On gradient descent-based on-line learning for non-linear systems.

[GD1]

S. I. Amari (1967).

A theory of adaptive pattern classifier, IEEE Trans, EC-16, 279-307 (Japanese version published in 1965).

PDF.

Probably the first paper on using stochastic gradient descent[STO51-52] for learning in multilayer neural networks

(without specifying the specific gradient descent method now known as reverse mode of automatic differentiation or backpropagation[BP1]).

[GD2]

S. I. Amari (1968).

Information Theory—Geometric Theory of Information, Kyoritsu Publ., 1968 (in Japanese).

OCR-based PDF scan of pages 94-135 (see pages 119-120).

Contains computer simulation results for a five layer network (with 2 modifiable layers) which learns internal representations to classify

non-linearily separable pattern classes.

[GD2a]

S. Saito (1967). Master's thesis, Graduate School of Engineering, Kyushu University, Japan.

Implementation of Amari's 1967 stochastic gradient descent method for multilayer perceptrons.[GD1] (S. Amari, personal communication, 2021.)

[GD3]

S. I. Amari (1977).

Neural Theory of Association and Concept Formation.

Biological Cybernetics, vol. 26, p. 175-185, 1977.

See Section 3.1 on using gradient descent for learning in multilayer networks.

[HEB48]

J. Konorski (1948). Conditioned reflexes and neuron organization. Translation from the Polish manuscript under the author's supervision. Cambridge University Press, 1948. Konorski published the so-called "Hebb rule" before Hebb [HEB49].

[HEB49]

D. O. Hebb. The Organization of Behavior. Wiley, New York, 1949.

Konorski [HEB48] published the so-called "Hebb rule" before Hebb.

[K56]

S.C. Kleene. Representation of Events in Nerve Nets and Finite Automata. Automata Studies, Editors: C.E. Shannon and J. McCarthy, Princeton University Press, p. 3-42, Princeton, N.J., 1956.

[L84]

G. Leibniz (1684).

Nova Methodus pro Maximis et Minimis.

First publication of "modern" infinitesimal calculus.

[LEI07]

J. M. Child (translator), G. W. Leibniz (Author). The Early Mathematical Manuscripts of Leibniz. Merchant Books, 2007. See p. 126: the chain rule appeared in a 1676 memoir by Leibniz.

[LEI10]

O. H. Rodriguez, J. M. Lopez Fernandez (2010). A semiotic reflection on the didactics of the Chain rule. The Mathematics Enthusiast: Vol. 7 : No. 2 , Article 10. DOI: https://doi.org/10.54870/1551-3440.1191.

[LEI21] J. Schmidhuber (AI Blog, 2021). 375th birthday of Leibniz, founder of computer science.

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[NN25]

J. Schmidhuber

(AI Blog, 2025). Who invented artificial neural networks? Technical Note IDSIA-15-25, IDSIA, November 2025.

[R58]

Rosenblatt, F. (1958). The perceptron: a probabilistic model for information storage and organization

in the brain. Psychological review, 65(6):386.

This paper not only described single layer perceptrons, but also deeper multilayer perceptrons (MLPs).

Although these MLPs did not yet have deep learning, because only the last layer learned,[DL1]

Rosenblatt basically had what much later was rebranded as Extreme Learning Machines (ELMs) without proper attribution.[ELM1-2][DLP]

[R61]

Joseph, R. D. (1961). Contributions to perceptron theory. PhD thesis, Cornell Univ.

[R62]

Rosenblatt, F. (1962). Principles of Neurodynamics. Spartan, New York.

[SE59]

O. G. Selfridge (1959). Pandemonium: a paradigm for learning. In D. V. Blake and A. M. Uttley, editors, Proc. Symposium on Mechanisation of Thought Processes, p 511-529, London, 1959.

[ST61]

K. Steinbuch. Die Lernmatrix. (The learning matrix.) Kybernetik, 1(1):36-45, 1961.

[ST95]

W. Hilberg (1995). Karl Steinbuch, ein zu Unrecht vergessener Pionier

der künstlichen neuronalen Systeme. (Karl Steinbuch, an unjustly forgotten pioneer of artificial neural systems.) Frequenz, 49(1995)1-2.

[STI81]

S. M. Stigler. Gauss and the Invention of Least Squares. Ann. Stat. 9(3):465-474, 1981.

[STI83]

S. M. Stigler. Who Discovered Bayes' Theorem? The American Statistician. 37(4):290-296, 1983.

Bayes' theorem is actually Laplace's theorem or possibly Saunderson's theorem.

[STI85]

S. M. Stigler (1986). Inverse Probability. The History of Statistics: The Measurement of Uncertainty Before 1900. Harvard University Press, 1986.

[STO51]

H. Robbins, S. Monro (1951). A Stochastic Approximation Method. The Annals of Mathematical Statistics. 22(3):400, 1951.

[STO52]

J. Kiefer, J. Wolfowitz (1952). Stochastic Estimation of the Maximum of a Regression Function.

The Annals of Mathematical Statistics. 23(3):462, 1952.

[TUR1]

A. M. Turing. Intelligent Machinery. Unpublished Technical Report, 1948.

Link.

In: Ince DC, editor. Collected works of AM Turing—Mechanical Intelligence. Elsevier Science Publishers, 1992.

[TUR21] J. Schmidhuber (AI Blog, Sep 2021). Turing Oversold. It's not Turing's fault, though.

[WID62]

Widrow, B. and Hoff, M. (1962). Associative storage and retrieval of digital information in networks

of adaptive neurons. Biological Prototypes and Synthetic Systems, 1:160, 1962.