Recently a famous entrepreneur and author visited us here at KAUST and told me: "You are working in paradise!"

In 2016, King Abdullah University of Science and Technology (KAUST) was named the university with the highest impact per faculty, ahead of the usual suspects such as Caltech.[ATL18][CHA17] Since then, KAUST has topped the citations per faculty sorting of the QS World University Rankings.[QS21] This may be not surprising as KAUST also boasts one of the world's highest endowments. In terms of endowment per faculty it is far ahead of the usual suspects such as Harvard and Yale.[ATL18]

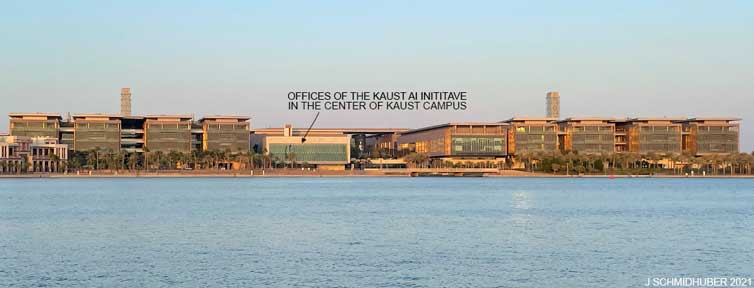

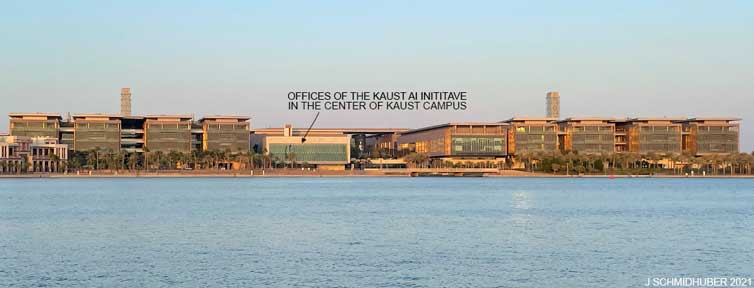

The rapidly growing KAUST on the coast of the Red Sea—not far from the origins of human civilization—is packed full of brilliant academics from all over the world, enjoying excellent working conditions and a high quality of life.

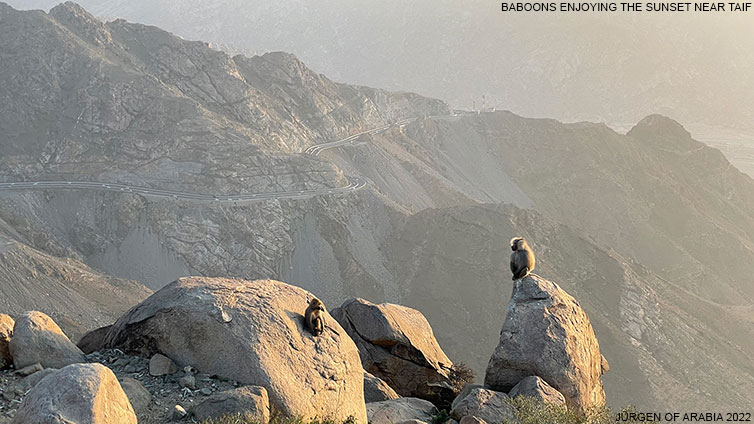

I photographed the Dolphin above on a snorkeling trip off the coast of KAUST, and took many additional photos, some of which you'll find below.

On October 1, 2021, I started directing the ambitious KAUST Artificial Intelligence Initiative. The goal is to advance fundamental AI research on all fronts, in line with my old objective of the 1970s:

"build an AI smarter than myself such that I can retire."

I am still Chief Scientist of NNAISENSE and

Scientific Director of the Swiss AI Lab, IDSIA, with a part-time adjunct professorship at the University of Lugano, Switzerland,

supervising my research team co-funded by grants from ERC and SNSF.

At KAUST,

we are now hiring outstanding professors, postdocs, and PhD students.

For student admissions, please use this link.

Are you an AI expert interested in working with KAUST faculty on X+AI,

where X is some other field of your expertise?

If so, then this website is for you.

Some of our current co-workers are shown above. Supported by excellent hardware and KAUST's outstanding interdisciplinary

expertise in many different areas of science (see the KAUST Discovery Magazines), the AI Initiative supports AI applications in all fields, including

healthcare, drug design, chemistry, materials science, speech recognition and natural language processing, automation, robotics, soft robotics, and other areas.

KAUST's outstanding

visual computing team has regularly been among the top groups at leading conferences in computer vision, computer graphics, and visualization.

I am especially proud of my incredible colleagues Bernard Ghanem, Peter Richtarik, and their awesome teams at our KAUST AI Initiative. For example, Bernard & team had 7 papers at CVPR 2022, the world's most impactful scientific conference, and 8 at CVPR 2023. Peter & team had 12 papers at NeurIPS 2022, a leading conference on AI and neural networks (KAUST as a whole had 24).

KAUST's Shaheen 2 supercomputer entered the world at #7 on the Top 500 list in June 2015, and is scheduled to be replaced with a brand new, much faster Shaheen 3: very few universities (if any) can boast their own supercomputer with over 2800 top-end GPUs for AI applications.

My own research team will continue to focus on the deepest and most popular artificial neural networks (NNs)[MOST][DLH] including the

LSTM family,[LSTM1-17] the closely related Highway Net/ResNet family,[HW1-3][R5] large language models and

variants of our attention-based[ATT,0-3] linear

Transformers and

other Fast Weight Programmers since 1991,[FWP,0-7][TR1-6]

fast and deep[MLP1-2][DAN,1][GPUCNN1-9] convolutional NNs[CNN1-4] for

image recognition,

Unsupervised/Self-Supervised Learning,[UN,0-3]

and combinations thereof. We are especially interested in

Reinforcement Learning and Planning,[PLAN,2-6]

Policy Gradients,[RPG][LSTMPG]

Artificial Curiosity and GANs,[AC,90-20]

automatic discovery of (hierarchically) structured

concept spaces based on abstract objects,[AC02][OBJ2-5][PLAN4-5]

Metalearning Machines that Learn to Learn,[META,1][FWPMETA1-7][METARL2-10] and Soft Robotics.

We hope that our work will continue[DEC] to impact the lives of billions of people as well as all of the world's most valuable companies.[DL4] In fact, the planet's most profitable company is located next door: Saudi Aramco. For many decades, it was by far the world's most valuable company (and was once estimated to have a value of 10 trillion USD[ARA1-3]). Even in today's age of oil's declining importance, Aramco's market cap is still close to 2 trillion USD,[ARA4] with a profit of over 160 billion USD in 2022. It keeps investing into AI and computing[ARA5,b] as well as renewable energy[ARA7-8] including Energy Vault in Lugano, Switzerland.[ARA9]

We hope the new AI initiative will contribute to a new Golden Age for science, analogous to the Islamic Golden Age that started over a millennium ago when the Middle East was leading the world in science and technology—notably, in automatic information processing. Recall that the word "algorithm" derives from the work of al-Khwarizmi around 800 AD. Also remember that the 9th century music automaton

by the Banu Musa brothers

was perhaps the world's first machine with a stored program.[BAN][KOE1][GOD21,a,b][ZUS21,a,b] It used pins on

a revolving cylinder to store programs controlling a steam-driven

flute—compare Al-Jazari's programmable drum machine of 1206.[SHA7b] Many other pioneers of computing contributed to that legendary Golden Age.

Let us now turn the power of modern understanding to extending their work!

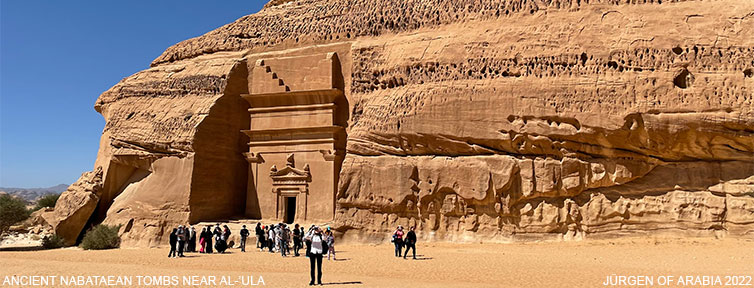

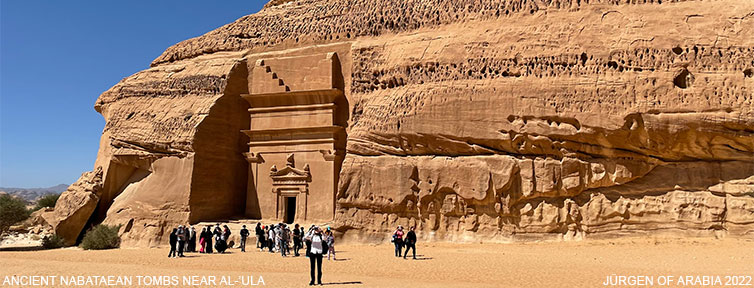

Can you spot the baby camel in the above image which I took in Al-'Ula? Click to enlarge!

References

[AC]

J. Schmidhuber (AI Blog, 2021). 3 decades of artificial curiosity & creativity. Our artificial scientists not only answer given questions but also invent new questions. They achieve curiosity through: (1990) the principle of generative adversarial networks, (1991) neural nets that maximise learning progress, (1995) neural nets that maximise information gain (optimally since 2011), (1997) adversarial design of surprising computational experiments, (2006) maximizing compression progress like scientists/artists/comedians do, (2011) PowerPlay... Since 2012: applications to real robots.

[AC90]

J. Schmidhuber.

Making the world differentiable: On using fully recurrent

self-supervised neural networks for dynamic reinforcement learning and

planning in non-stationary environments.

Technical Report FKI-126-90, TUM, Feb 1990, revised Nov 1990.

PDF.

More.

[AC90b]

J. Schmidhuber.

A possibility for implementing curiosity and boredom in

model-building neural controllers.

In J. A. Meyer and S. W. Wilson, editors, Proc. of the

International Conference on Simulation

of Adaptive Behavior: From Animals to

Animats, pages 222-227. MIT Press/Bradford Books, 1991.

PDF.

More.

[AC02]

J. Schmidhuber.

Exploring the Predictable.

In Ghosh, S. Tsutsui, eds., Advances in Evolutionary Computing,

p. 579-612, Springer, 2002.

PDF.

[AC09]

J. Schmidhuber. Art & science as by-products of the search for novel patterns, or data compressible in unknown yet learnable ways. In M. Botta (ed.), Et al. Edizioni, 2009, pp. 98-112.

PDF. (More on

artificial scientists and artists.)

[AC10]

J. Schmidhuber. Formal Theory of Creativity, Fun, and Intrinsic Motivation (1990-2010). IEEE Transactions on Autonomous Mental Development, 2(3):230-247, 2010.

IEEE link.

PDF.

[AC20]

J. Schmidhuber. Generative Adversarial Networks are Special Cases of Artificial Curiosity (1990) and also Closely Related to Predictability Minimization (1991).

Neural Networks, Volume 127, p 58-66, 2020.

Preprint arXiv/1906.04493.

[ARA1] I. Black, T. Macalister (2016). Saudi Aramco—the $10tn mystery at the heart of the Gulf state. The Guardian, 16 Jan 2016.

[ARA2] K. Baxter, S. Said (2016). Could Saudi Aramco Be Worth 20 Times Exxon? The Wall Street Journal, 8 Jan 2016.

[ARA3] T. Heath (2019). For oil watchers, a first look at Saudi Aramco's $110 billion annual profit gusher. The Washington Post, 5 April 2019.

[ARA4] Saudi Arabia Insisted Aramco Was Worth $2 Trillion. Now It Is. The New York Times, 16 Dec 2019.

[ARA5] A. Sharma (2021). Aramco and Saudi Telecom Company roll out Dammam 7 supercomputer. In Business, 26 Jan 2021.

[ARA5b] Tabot Network (2021). Saudi Aramco Unveils Dammam 7, Its New Top Ten Supercomputer. HPCWire, 21 Jan 2021.

[ARA6] D. Saadi (2021). Saudi Aramco joins local 1.5 GW solar project with a 30% stake in renewables push. S & P Global, 15 Aug 2021.

[ARA6a] A. Di Paola (2021). Aramco Joins Group Building Giant Solar Plant in Saudi Arabia. Bloomberg, 15 Aug 2021.

[ARA7] A. Saiyid (2021). Saudi Arabia recommits to 50% renewable power by 2030. IHS Markit, 29 Mar 2021.

[ARA8] A. Cohen (2021). Saudi Energy Giants Join The Green Revolution. Forbes, 23 Apr 2021.

[ARA9] Business Wire (2021). Saudi Aramco Energy Ventures Invests in Energy Vault. 2 June 2021.

[ATL18] W. Al-Shobakky (2018). The University the King Built. A Saudi experiment in education aims to solve the West's science malaise—and become a global research powerhouse. The New Atlantis, Number 54, Winter 2018, pp. 51-65.

[ATT] J. Schmidhuber (AI Blog, 2020). 30-year anniversary of end-to-end differentiable sequential neural attention. Plus goal-conditional reinforcement learning. We had both hard attention[ATT0-2] (1990) and soft attention (1991-93).[FWP] Today, both types are very popular.

[ATT0] J. Schmidhuber and R. Huber.

Learning to generate focus trajectories for attentive vision.

Technical Report FKI-128-90, Institut für Informatik, Technische

Universität München, 1990.

PDF.

[ATT1] J. Schmidhuber and R. Huber. Learning to generate artificial fovea trajectories for target detection. International Journal of Neural Systems, 2(1 & 2):135-141, 1991. Based on TR FKI-128-90, TUM, 1990.

PDF.

More.

[ATT2]

J. Schmidhuber.

Learning algorithms for networks with internal and external feedback.

In D. S. Touretzky, J. L. Elman, T. J. Sejnowski, and G. E. Hinton,

editors, Proc. of the 1990 Connectionist Models Summer School, pages

52-61. San Mateo, CA: Morgan Kaufmann, 1990.

PS. (PDF.)

[BAN]

Banu Musa brothers (9th century). The book of ingenious devices (Kitab al-hiyal). Translated by D. R. Hill (1979), Springer, p. 44, ISBN 90-277-0833-9.

[Perhaps the Banu Musa music automaton was the world's first machine with a stored program.]

[CHA17] Jean-Lou Chameau (2017). Celebrating Global Recognition. KAUST Administration, Office of the President,

News, 2017.

[CNN1] K. Fukushima: Neural network model for a mechanism of pattern

recognition unaffected by shift in position—Neocognitron.

Trans. IECE, vol. J62-A, no. 10, pp. 658-665, 1979.

The first deep convolutional neural network architecture, with alternating convolutional layers and downsampling layers. In Japanese. English version: [CNN1+]. More in Scholarpedia.

[CNN1+]

K. Fukushima: Neocognitron: a self-organizing neural network model for a mechanism of pattern recognition unaffected by shift in position.

Biological Cybernetics, vol. 36, no. 4, pp. 193-202 (April 1980).

Link.

[CNN1a] A. Waibel. Phoneme Recognition Using Time-Delay Neural Networks. Meeting of IEICE, Tokyo, Japan, 1987. First application of backpropagation[BP1][BP2] and weight-sharing

to a convolutional architecture.

[CNN1b] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano and K. J. Lang. Phoneme recognition using time-delay neural networks. IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 37, no. 3, pp. 328-339, March 1989.

[CNN2] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, L. D. Jackel: Backpropagation Applied to Handwritten Zip Code Recognition, Neural Computation, 1(4):541-551, 1989.

PDF.

[CNN3] Weng, J.,

Ahuja, N., and Huang, T. S. (1993). Learning recognition and segmentation of 3-D objects from 2-D images. Proc. 4th Intl. Conf. Computer Vision, Berlin, Germany, pp. 121-128. A CNN whose downsampling layers use Max-Pooling

(which has become very popular) instead of Fukushima's

Spatial Averaging.[CNN1]

[CNN4] M. A. Ranzato, Y. LeCun: A Sparse and Locally Shift Invariant Feature Extractor Applied to Document Images. Proc. ICDAR, 2007

[DAN]

J. Schmidhuber (AI Blog, 2021).

10-year anniversary. In 2011, DanNet triggered the deep convolutional neural network (CNN) revolution. Named after my outstanding postdoc Dan Ciresan, it was the first deep and fast CNN to win international computer vision contests, and had a temporary monopoly on winning them, driven by a very fast implementation based on graphics processing units (GPUs).

1st superhuman result in 2011.[DAN1]

Now everybody is using this approach.

[DAN1]

J. Schmidhuber (AI Blog, 2011; updated 2021 for 10th birthday of DanNet): First superhuman visual pattern recognition.

At the IJCNN 2011 computer vision competition in Silicon Valley,

our artificial neural network called DanNet performed twice better than humans, three times better than the closest artificial competitor, and six times better than the best non-neural method.

[DEC] J. Schmidhuber (AI Blog, 02/20/2020; revised 2021). The 2010s: Our Decade of Deep Learning / Outlook on the 2020s. The recent decade's most important developments and industrial applications based on our AI, with an outlook on the 2020s, also addressing privacy and data markets.

[DEEP1]

Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation. First working Deep Learners with many layers, learning internal representations.

[DEEP1a]

Ivakhnenko, Alexey Grigorevich. The group method of data of handling; a rival of the method of stochastic approximation. Soviet Automatic Control 13 (1968): 43-55.

[DEEP2]

Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[DL1] J. Schmidhuber, 2015.

Deep learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

Got the first Best Paper Award ever issued by the journal Neural Networks, founded in 1988.

[DL2] J. Schmidhuber, 2015.

Deep Learning.

Scholarpedia, 10(11):32832.

[DL4] J. Schmidhuber (AI Blog, 2017).

Our impact on the world's most valuable public companies: Apple, Google, Microsoft, Facebook, Amazon... By 2015-17, neural nets developed in my labs were on over 3 billion devices such as smartphones, and used many billions of times per day, consuming a significant fraction of the world's compute. Examples: greatly improved (CTC-based) speech recognition on all Android phones, greatly improved machine translation through Google Translate and Facebook (over 4 billion LSTM-based translations per day), Apple's Siri and Quicktype on all iPhones, the answers of Amazon's Alexa, etc. Google's 2019

on-device speech recognition

(on the phone, not the server)

is still based on

LSTM.

[DLH]

J. Schmidhuber (AI Blog, 2022).

Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, IDSIA, Lugano, Switzerland, 2022.

Preprint arXiv:2212.11279.

Tweet of 2022.

[FAST] C. v.d. Malsburg. Tech Report 81-2, Abteilung f. Neurobiologie,

Max-Planck Institut f. Biophysik und Chemie, Goettingen, 1981.

First paper on fast weights or dynamic links.

[FASTa]

J. A. Feldman. Dynamic connections in neural networks.

Biological Cybernetics, 46(1):27-39, 1982.

2nd paper on fast weights.

[FWP]

J. Schmidhuber (AI Blog, 26 March 2021).

26 March 1991: Neural nets learn to program neural nets with fast weights—like Transformer variants. 2021: New stuff!

30-year anniversary of a now popular

alternative[FWP0-1] to recurrent NNs.

A slow feedforward NN learns by gradient descent to program the changes of

the fast weights[FAST,FASTa] of

another NN.

Such Fast Weight Programmers[FWP0-6,FWPMETA1-7] can learn to memorize past data, e.g.,

by computing fast weight changes through additive outer products of self-invented activation patterns[FWP0-1]

(now often called keys and values for self-attention[TR1-6]).

The similar Transformers[TR1-2] combine this with projections

and softmax and

are now widely used in natural language processing.

For long input sequences, their efficiency was improved through

linear Transformers or Performers[TR5-6]

which are formally equivalent to the 1991 Fast Weight Programmers (apart from normalization).

In 1993, I introduced

the attention terminology[FWP2] now used

in this context,[ATT] and

extended the approach to

RNNs that program themselves.

[FWP0]

J. Schmidhuber.

Learning to control fast-weight memories: An alternative to recurrent nets.

Technical Report FKI-147-91, Institut für Informatik, Technische

Universität München, 26 March 1991.

PDF.

First paper on fast weight programmers: a slow net learns by gradient descent to compute weight changes of a fast net.

[FWP1] J. Schmidhuber. Learning to control fast-weight memories: An alternative to recurrent nets. Neural Computation, 4(1):131-139, 1992.

PDF.

HTML.

Pictures (German).

[FWP2] J. Schmidhuber. Reducing the ratio between learning complexity and number of time-varying variables in fully recurrent nets. In Proceedings of the International Conference on Artificial Neural Networks, Amsterdam, pages 460-463. Springer, 1993.

PDF.

First recurrent fast weight programmer based on outer products. Introduced the terminology of learning "internal spotlights of attention."

[FWP3] I. Schlag, J. Schmidhuber. Gated Fast Weights for On-The-Fly Neural Program Generation. Workshop on Meta-Learning, @N(eur)IPS 2017, Long Beach, CA, USA.

[FWP3a] I. Schlag, J. Schmidhuber. Learning to Reason with Third Order Tensor Products. Advances in Neural Information Processing Systems (N(eur)IPS), Montreal, 2018.

Preprint: arXiv:1811.12143. PDF.

[FWP4a] J. Ba, G. Hinton, V. Mnih, J. Z. Leibo, C. Ionescu. Using Fast Weights to Attend to the Recent Past. NIPS 2016.

PDF. Like [FWP0-2].

[FWP4b]

D. Bahdanau, K. Cho, Y. Bengio (2014).

Neural Machine Translation by Jointly Learning to Align and Translate. Preprint arXiv:1409.0473 [cs.CL].

[FWP4d]

Y. Tang, D. Nguyen, D. Ha (2020).

Neuroevolution of Self-Interpretable Agents.

Preprint: arXiv:2003.08165.

[FWP5]

F. J. Gomez and J. Schmidhuber.

Evolving modular fast-weight networks for control.

In W. Duch et al. (Eds.):

Proc. ICANN'05,

LNCS 3697, pp. 383-389, Springer-Verlag Berlin Heidelberg, 2005.

PDF.

HTML overview.

Reinforcement-learning fast weight programmer.

[FWP6] I. Schlag, K. Irie, J. Schmidhuber.

Linear Transformers Are Secretly Fast Weight Programmers. ICML 2021. Preprint: arXiv:2102.11174.

[FWP7] K. Irie, I. Schlag, R. Csordas, J. Schmidhuber.

Going Beyond Linear Transformers with Recurrent Fast Weight Programmers.

Preprint: arXiv:2106.06295 (June 2021).

[FWPMETA1] J. Schmidhuber. Steps towards `self-referential' learning. Technical Report CU-CS-627-92, Dept. of Comp. Sci., University of Colorado at Boulder, November 1992.

First recurrent fast weight programmer that can learn

to run a learning algorithm or weight change algorithm on itself.

[FWPMETA2] J. Schmidhuber. A self-referential weight matrix.

In Proceedings of the International Conference on Artificial

Neural Networks, Amsterdam, pages 446-451. Springer, 1993.

PDF.

[FWPMETA3] J. Schmidhuber.

An introspective network that can learn to run its own weight change algorithm. In Proc. of the Intl. Conf. on Artificial Neural Networks,

Brighton, pages 191-195. IEE, 1993.

[FWPMETA4]

J. Schmidhuber.

A neural network that embeds its own meta-levels.

In Proc. of the International Conference on Neural Networks '93,

San Francisco. IEEE, 1993.

[FWPMETA5]

J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

A recurrent neural net with a self-referential, self-reading, self-modifying weight matrix

can be found here.

[FWPMETA6]

L. Kirsch and J. Schmidhuber. Meta Learning Backpropagation & Improving It. Metalearning Workshop at NeurIPS, 2020.

Preprint arXiv:2012.14905 [cs.LG], 2020.

[FWPMETA7]

I. Schlag, T. Munkhdalai, J. Schmidhuber.

Learning Associative Inference Using Fast Weight Memory.

ICLR 2021.

Report arXiv:2011.07831 [cs.AI], 2020.

[GOD21] J. Schmidhuber (AI Blog, 2021). 90th anniversary celebrations: 1931: Kurt Gödel, founder of theoretical computer science, shows limits of math, logic, computing, and artificial intelligence. This was number 1 on Hacker News.

[GOD21a]

J. Schmidhuber (2021). Als Kurt Gödel die Grenzen des Berechenbaren entdeckte.

(When Kurt Gödel discovered the limits of computability.)

Frankfurter Allgemeine Zeitung, 16/6/2021.

[GOD21b]

J. Schmidhuber (AI Blog, 2021). 80. Jahrestag: 1931: Kurt Gödel, Vater der theoretischen Informatik, entdeckt die Grenzen des Berechenbaren und der künstlichen Intelligenz.

[GPT3]

T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell, S. Agarwal, A. Herbert-Voss, G. Krueger, T. Henighan, R. Child, A. Ramesh, D. M. Ziegler, J. Wu, C. Winter, C. Hesse, M. Chen, E. Sigler, M. Litwin, S. Gray, B. Chess, J. Clark, C. Berner, S. McCandlish, A. Radford, I. Sutskever, D. Amodei.

Language Models are Few-Shot Learners (2020).

Preprint arXiv/2005.14165.

[GPUNN]

Oh, K.-S. and Jung, K. (2004). GPU implementation of neural networks. Pattern Recognition, 37(6):1311-1314. Speeding up traditional NNs on GPU by a factor of 20.

[GPUCNN]

K. Chellapilla, S. Puri, P. Simard. High performance convolutional neural networks for document processing. International Workshop on Frontiers in Handwriting Recognition, 2006. Speeding up shallow CNNs on GPU by a factor of 4.

[GPUCNN1] D. C. Ciresan, U. Meier, J. Masci, L. M. Gambardella, J. Schmidhuber. Flexible, High Performance Convolutional Neural Networks for Image Classification. International Joint Conference on Artificial Intelligence (IJCAI-2011, Barcelona), 2011. PDF. ArXiv preprint.

Speeding up deep CNNs on GPU by a factor of 60.

Used to

win four important computer vision competitions 2011-2012 before others won any

with similar approaches.

[GPUCNN2] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber.

A Committee of Neural Networks for Traffic Sign Classification.

International Joint Conference on Neural Networks (IJCNN-2011, San Francisco), 2011.

PDF.

HTML overview.

First superhuman performance in a computer vision contest, with half the error rate of humans, and one third the error rate of the closest competitor.[DAN1] This led to massive interest from industry.

[GPUCNN3] D. C. Ciresan, U. Meier, J. Schmidhuber. Multi-column Deep Neural Networks for Image Classification. Proc. IEEE Conf. on Computer Vision and Pattern Recognition CVPR 2012, p 3642-3649, July 2012. PDF. Longer TR of Feb 2012: arXiv:1202.2745v1 [cs.CV]. More.

[GPUCNN4] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NIPS 25, MIT Press, Dec 2012.

PDF.

[GPUCNN5]

J. Schmidhuber (AI Blog, 2017; updated 2021 for 10th birthday of DanNet): History of computer vision contests won by deep CNNs since 2011. DanNet won 4 of them in a row before the similar AlexNet/VGG Net and the Resnet (a Highway Net with open gates) joined the party. Today, deep CNNs are standard in computer vision.

[GPUCNN6] J. Schmidhuber, D. Ciresan, U. Meier, J. Masci, A. Graves. On Fast Deep Nets for AGI Vision. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011.

PDF.

[GPUCNN7] D. C. Ciresan, A. Giusti, L. M. Gambardella, J. Schmidhuber. Mitosis Detection in Breast Cancer Histology Images using Deep Neural Networks. MICCAI 2013.

PDF.

[GPUCNN8] J. Schmidhuber. First deep learner to win a contest on object detection in large images—

first deep learner to win a medical imaging contest (AI Blog, 2012). HTML.

How IDSIA used GPU-based CNNs to win the

ICPR 2012 Contest on Mitosis Detection

and the

MICCAI 2013 Grand Challenge.

[GPUCNN9]

K. Simonyan, A. Zisserman. Very deep convolutional networks for large-scale image recognition. Preprint arXiv:1409.1556 (2014).

[HW1] R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks.

Preprints arXiv:1505.00387 (May 2015) and arXiv:1507.06228 (July 2015). Also at NIPS 2015. The first working very deep feedforward nets with over 100 layers (previous NNs had at most a few tens of layers). Let g, t, h, denote non-linear differentiable functions. Each non-input layer of a highway net computes g(x)x + t(x)h(x), where x is the data from the previous layer. (Like LSTM with forget gates[LSTM2] for RNNs.) Resnets[HW2] are a version of this where the gates are always open: g(x)=t(x)=const=1.

Highway Nets perform roughly as well as ResNets[HW2] on ImageNet.[HW3] Highway layers are also often used for natural language processing, where the simpler residual layers do not work as well.[HW3]

More.

[HW1a]

R. K. Srivastava, K. Greff, J. Schmidhuber. Highway networks. Presentation at the Deep Learning Workshop, ICML'15, July 10-11, 2015.

Link.

[HW2] He, K., Zhang,

X., Ren, S., Sun, J. Deep residual learning for image recognition. Preprint

arXiv:1512.03385

(Dec 2015). Residual nets are a version of Highway Nets[HW1]

where the gates are always open:

g(x)=1 (a typical highway net initialization) and t(x)=1.

More.

[HW3]

K. Greff, R. K. Srivastava, J. Schmidhuber. Highway and Residual Networks learn Unrolled Iterative Estimation. Preprint

arxiv:1612.07771 (2016). Also at ICLR 2017.

[KOE1]

T. Koetsier (2001). On the prehistory of programmable machines: musical automata, looms, calculators. Mechanism and Machine Theory, Elsevier, 36 (5): 589-603, 2001.

[LSTM0]

S. Hochreiter and J. Schmidhuber.

Long Short-Term Memory.

TR FKI-207-95, TUM, August 1995.

PDF.

[LSTM1] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF.

Based on [LSTM0]. More.

[LSTM2] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000.

PDF.

The "vanilla LSTM architecture" with forget gates

that everybody is using today, e.g., in Google's Tensorflow.

[LSTM3] A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005.

PDF.

[LSTM4]

S. Fernandez, A. Graves, J. Schmidhuber. An application of

recurrent neural networks to discriminative keyword

spotting.

Intl. Conf. on Artificial Neural Networks ICANN'07,

2007.

PDF.

[LSTM5] A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009.

PDF.

[LSTM6] A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. NIPS'22, p 545-552, Vancouver, MIT Press, 2009.

PDF.

[LSTM7] J. Bayer, D. Wierstra, J. Togelius, J. Schmidhuber.

Evolving memory cell structures for sequence learning.

Proc. ICANN-09, Cyprus, 2009.

PDF.

[LSTM8] A. Graves, A. Mohamed, G. E. Hinton. Speech Recognition with Deep Recurrent Neural Networks. ICASSP 2013, Vancouver, 2013.

PDF.

[LSTM9]

O. Vinyals, L. Kaiser, T. Koo, S. Petrov, I. Sutskever, G. Hinton.

Grammar as a Foreign Language. Preprint arXiv:1412.7449 [cs.CL].

[LSTM10]

A. Graves, D. Eck and N. Beringer, J. Schmidhuber. Biologically Plausible Speech Recognition with LSTM Neural Nets. In J. Ijspeert (Ed.), First Intl. Workshop on Biologically Inspired Approaches to Advanced Information Technology, Bio-ADIT 2004, Lausanne, Switzerland, p. 175-184, 2004.

PDF.

[LSTM11]

N. Beringer and A. Graves and F. Schiel and J. Schmidhuber. Classifying unprompted speech by retraining LSTM Nets. In W. Duch et al. (Eds.): Proc. Intl. Conf. on Artificial Neural Networks ICANN'05, LNCS 3696, pp. 575-581, Springer-Verlag Berlin Heidelberg, 2005.

[LSTM12]

D. Wierstra, F. Gomez, J. Schmidhuber. Modeling systems with internal state using Evolino. In Proc. of the 2005 conference on genetic and evolutionary computation (GECCO), Washington, D. C., pp. 1795-1802, ACM Press, New York, NY, USA, 2005. Got a GECCO best paper award.

[LSTM13]

F. A. Gers and J. Schmidhuber.

LSTM Recurrent Networks Learn Simple Context Free and

Context Sensitive Languages.

IEEE Transactions on Neural Networks 12(6):1333-1340, 2001.

PDF.

[LSTM14]

S. Fernandez, A. Graves, J. Schmidhuber.

Sequence labelling in structured domains with

hierarchical recurrent neural networks. In Proc.

IJCAI 07, p. 774-779, Hyderabad, India, 2007 (talk).

PDF.

[LSTM15]

A. Graves, J. Schmidhuber.

Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks.

Advances in Neural Information Processing Systems 22, NIPS'22, p 545-552,

Vancouver, MIT Press, 2009.

PDF.

[LSTM16]

M. Stollenga, W. Byeon, M. Liwicki, J. Schmidhuber. Parallel Multi-Dimensional LSTM, With Application to Fast Biomedical Volumetric Image Segmentation. Advances in Neural Information Processing Systems (NIPS), 2015.

Preprint: arxiv:1506.07452.

[LSTM17]

J. A. Perez-Ortiz, F. A. Gers, D. Eck, J. Schmidhuber.

Kalman filters improve LSTM network performance in

problems unsolvable by traditional recurrent nets.

Neural Networks 16(2):241-250, 2003.

PDF.

[LSTM-RL]

B. Bakker, F. Linaker, J. Schmidhuber.

Reinforcement Learning in Partially Observable Mobile Robot

Domains Using Unsupervised Event Extraction.

In Proceedings of the 2002

IEEE/RSJ International Conference on

Intelligent Robots and Systems (IROS 2002), Lausanne, 2002.

PDF.

[LSTMPG]

J. Schmidhuber (AI Blog, Dec 2020). 10-year anniversary of our journal paper on deep reinforcement learning with policy gradients for LSTM (2007-2010). Recent famous applications of policy gradients to LSTM: DeepMind's Starcraft player (2019) and OpenAI's dextrous robot hand & Dota player (2018)—Bill Gates called this a huge milestone in advancing AI.

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[META]

J. Schmidhuber (AI Blog, 2020). 1/3 century anniversary of

first publication on metalearning machines that learn to learn (1987).

For its cover I drew a robot that bootstraps itself.

1992-: gradient descent-based neural metalearning. 1994-: Meta-Reinforcement Learning with self-modifying policies. 1997: Meta-RL plus artificial curiosity and intrinsic motivation.

2002-: asymptotically optimal metalearning for curriculum learning. 2003-: mathematically optimal Gödel Machine. 2020: new stuff!

[META1]

J. Schmidhuber.

Evolutionary principles in self-referential learning, or on learning

how to learn: The meta-meta-... hook. Diploma thesis,

Institut für Informatik, Technische Universität München, 1987.

Searchable PDF scan (created by OCRmypdf which uses

LSTM).

HTML.

For example,

Genetic Programming

(GP) is applied to itself, to recursively evolve

better GP methods through Meta-Evolution. More.

[METARL2]

J. Schmidhuber.

On learning how to learn learning strategies.

Technical Report FKI-198-94, Fakultät für Informatik,

Technische Universität München, November 1994.

PDF.

On Single Life Meta-Reinforcement Learning.

[METARL4]

M. Wiering and J. Schmidhuber.

Solving POMDPs using Levin search and EIRA.

In L. Saitta, ed.,

Machine Learning:

Proceedings of the 13th International Conference (ICML 1996),

pages 534-542,

Morgan Kaufmann Publishers, San Francisco, CA, 1996.

PDF.

HTML.

[METARL5]

J. Schmidhuber and J. Zhao and M. Wiering.

Simple principles of metalearning.

Technical Report IDSIA-69-96, IDSIA, June 1996.

PDF.

[METARL7]

Shifting inductive bias with success-story algorithm,

adaptive Levin search, and incremental self-improvement.

Machine Learning 28:105-130, 1997.

PDF.

[METARL8]

J. Schmidhuber, J. Zhao, N. Schraudolph.

Reinforcement learning with self-modifying policies.

In S. Thrun and L. Pratt, eds.,

Learning to learn, Kluwer, pages 293-309, 1997.

PDF;

HTML.

[METARL10]

L. Kirsch, S. van Steenkiste, J. Schmidhuber. Improving Generalization in Meta Reinforcement Learning using Neural Objectives. International Conference on Learning Representations (ICLR), 2020.

Preprint arXiv:1910.04098 [cs.LG], 2020.

[MIR] J. Schmidhuber (AI Blog, Oct 2019, revised 2021). Deep Learning: Our Miraculous Year 1990-1991. Preprint

arXiv:2005.05744, 2020.

The deep learning neural networks of our team have revolutionised pattern recognition and machine learning, and are now heavily used in academia and industry. In 2020-21, we celebrate that many of the basic ideas behind this revolution were published within fewer than 12 months in our "Annus Mirabilis" 1990-1991 at TU Munich.

[MLP1] D. C. Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint.

Showed that plain backprop for deep standard NNs is sufficient to break benchmark records, without any unsupervised pre-training.

[MLP2] J. Schmidhuber

(AI Blog, Sep 2020). 10-year anniversary of supervised deep learning breakthrough (2010). No unsupervised pre-training.

By 2010, when compute was 100 times more expensive than today, both our feedforward NNs[MLP1] and our earlier recurrent NNs were able to beat all competing algorithms on important problems of that time. This deep learning revolution quickly spread from Europe to North America and Asia. The rest is history.

[MOST]

J. Schmidhuber (AI Blog, 2021). The most cited neural networks all build on work done in my labs. Foundations of the most popular NNs originated in Schmidhuber's labs at TU Munich and IDSIA. (1) Long Short-Term Memory (LSTM), (2) ResNet (which is the earlier Highway Net with open gates), (3) AlexNet and VGG Net (both building on the similar earlier DanNet: the first deep convolutional NN to win

image recognition competitions),

(4) Generative Adversarial Networks (an instance of the much earlier

Adversarial Artificial Curiosity), and (5) variants of Transformers (Transformers with linearized self-attention are formally equivalent to the much earlier Fast Weight Programmers).

Most of this started with the

Annus Mirabilis of 1990-1991.[MIR]

[OBJ1] K. Greff, A. Rasmus, M. Berglund, T. Hao, H. Valpola, J. Schmidhuber (2016). Tagger: Deep unsupervised perceptual grouping. NIPS 2016, pp. 4484-4492.

[OBJ2] K. Greff, S. van Steenkiste, J. Schmidhuber (2017). Neural expectation maximization. NIPS 2017, pp. 6691-6701.

[OBJ3] S. van Steenkiste, M. Chang, K. Greff, J. Schmidhuber (2018). Relational neural expectation maximization: Unsupervised discovery of objects and their interactions. ICLR 2018.

[OBJ4]

A. Stanic, S. van Steenkiste, J. Schmidhuber (2021). Hierarchical Relational Inference. AAAI 2021.

[OBJ5]

A. Gopalakrishnan, S. van Steenkiste, J. Schmidhuber (2020). Unsupervised Object Keypoint Learning using Local Spatial Predictability.

In Proc. ICLR 2021.

Preprint arXiv/2011.12930.

[PLAN]

J. Schmidhuber (AI Blog, 2020). 30-year anniversary of planning & reinforcement learning with recurrent world models and artificial curiosity (1990). This work also introduced high-dimensional reward signals, deterministic policy gradients for RNNs,

the GAN principle (widely used today). Agents with adaptive recurrent world models even suggest a simple explanation of consciousness & self-awareness.

[PLAN2]

J. Schmidhuber.

An on-line algorithm for dynamic reinforcement learning and planning

in reactive environments.

Proc. IEEE/INNS International Joint Conference on Neural

Networks, San Diego, volume 2, pages 253-258, 1990.

Based on TR FKI-126-90 (1990).[AC90]

More.

[PLAN3]

J. Schmidhuber.

Reinforcement learning in Markovian and non-Markovian environments.

In D. S. Lippman, J. E. Moody, and D. S. Touretzky, editors,

Advances in Neural Information Processing Systems 3, NIPS'3, pages 500-506. San

Mateo, CA: Morgan Kaufmann, 1991.

PDF.

Partially based on TR FKI-126-90 (1990).[AC90]

[PLAN4]

J. Schmidhuber.

On Learning to Think: Algorithmic Information Theory for Novel Combinations of Reinforcement Learning Controllers and Recurrent Neural World Models.

Report arXiv:1210.0118 [cs.AI], 2015.

[PLAN5]

One Big Net For Everything. Preprint arXiv:1802.08864 [cs.AI], Feb 2018.

[PLAN6]

D. Ha, J. Schmidhuber. Recurrent World Models Facilitate Policy Evolution. Advances in Neural Information Processing Systems (NIPS), Montreal, 2018. (Talk.)

Preprint: arXiv:1809.01999.

Github: World Models.

[PM0] J. Schmidhuber. Learning factorial codes by predictability minimization. TR CU-CS-565-91, Univ. Colorado at Boulder, 1991. PDF.

More.

[PM1] J. Schmidhuber. Learning factorial codes by predictability minimization. Neural Computation, 4(6):863-879, 1992. Based on [PM0], 1991. PDF.

More.

[PM2] J. Schmidhuber, M. Eldracher, B. Foltin. Semilinear predictability minimzation produces well-known feature detectors. Neural Computation, 8(4):773-786, 1996.

PDF. More.

[QS21] Citations per faculty sorting of the QS World University Rankings. From 2016 through 2021 inclusive, KAUST has come out on top (#1) of this metric.

[R2] Reddit/ML, 2019. J. Schmidhuber really had GANs in 1990.

[R5] Reddit/ML, 2019. The 1997 LSTM paper by Hochreiter & Schmidhuber has become the most cited deep learning research paper of the 20th century.

[RPG]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber (2010). Recurrent policy gradients. Logic Journal of the IGPL, 18(5), 620-634.

[RPG07]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber. Solving Deep Memory POMDPs

with Recurrent Policy Gradients.

Intl. Conf. on Artificial Neural Networks ICANN'07,

2007.

PDF.

[SHA7b]

N. Sharkey (2007). A 13th Century Programmable Robot. Univ. of Sheffield, 2007.

[On a programmable drum machine of 1206 by Al-Jazari.]

[TR1]

A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, L. Kaiser, I. Polosukhin (2017). Attention is all you need. NIPS 2017, pp. 5998-6008.

[TR2]

J. Devlin, M. W. Chang, K. Lee, K. Toutanova (2018). Bert: Pre-training of deep bidirectional transformers for language understanding. Preprint arXiv:1810.04805.

[TR3] K. Tran, A. Bisazza, C. Monz. The Importance of Being Recurrent for Modeling Hierarchical Structure. EMNLP 2018, p 4731-4736. ArXiv preprint 1803.03585.

[TR4]

M. Hahn. Theoretical Limitations of Self-Attention in Neural Sequence Models. Transactions of the Association for Computational Linguistics, Volume 8, p.156-171, 2020.

[TR5]

A. Katharopoulos, A. Vyas, N. Pappas, F. Fleuret.

Transformers are RNNs: Fast autoregressive Transformers

with linear attention. In Proc. Int. Conf. on Machine

Learning (ICML), July 2020.

[TR6]

K. Choromanski, V. Likhosherstov, D. Dohan, X. Song,

A. Gane, T. Sarlos, P. Hawkins, J. Davis, A. Mohiuddin,

L. Kaiser, et al. Rethinking attention with Performers.

In Int. Conf. on Learning Representations (ICLR), 2021.

[UN]

J. Schmidhuber (AI Blog, 2021). 30-year anniversary. 1991: First very deep learning with unsupervised pre-training. Unsupervised hierarchical predictive coding finds compact internal representations of sequential data to facilitate downstream learning. The hierarchy can be distilled into a single deep neural network (suggesting a simple model of conscious and subconscious information processing). 1993: solving problems of depth >1000.

[UN0]

J. Schmidhuber.

Neural sequence chunkers.

Technical Report FKI-148-91, Institut für Informatik, Technische

Universität München, April 1991.

PDF.

[UN1] J. Schmidhuber. Learning complex, extended sequences using the principle of history compression. Neural Computation, 4(2):234-242, 1992. Based on TR FKI-148-91, TUM, 1991.[UN0] PDF.

First working Deep Learner based on a deep RNN hierarchy (with different self-organising time scales),

overcoming the vanishing gradient problem through unsupervised pre-training and predictive coding.

Also: compressing or distilling a teacher net (the chunker) into a student net (the automatizer) that does not forget its old skills—such approaches are now widely used. More.

[UN2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

An ancient experiment on "Very Deep Learning" with credit assignment across 1200 time steps or virtual layers and unsupervised pre-training for a stack of recurrent NN

can be found here (depth > 1000).

[UN3]

J. Schmidhuber, M. C. Mozer, and D. Prelinger.

Continuous history compression.

In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors,

Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95.

Augustinus, 1993.

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J. Schmidhuber). PDF.

More on the Fundamental Deep Learning Problem.

[ZUS21]

J. Schmidhuber (AI Blog, 2021). 80th anniversary celebrations: 1941: Konrad Zuse completes the first working general computer, based on his 1936 patent application.

[ZUS21a]

J. Schmidhuber (AI Blog, 2021). 80. Jahrestag: 1941: Konrad Zuse baut ersten funktionalen Allzweckrechner, basierend auf der Patentanmeldung von 1936.

[ZUS21b]

J. Schmidhuber (2021).

Der Mann, der den Computer

erfunden hat. (The man who invented the computer.)

Weltwoche, Nr. 33.21, 19 August 2021.

PDF.

.