Edit of 4/24/2020: Reply to Dr. Hinton's Reply

Dr. Hinton's response to a relevant post on Reddit/ML [R11] is copied below. I am inserting answers marked by "Reply." Summary: The facts presented in Sec. I, II, III, IV, V, VI still stand.

Dr. Hinton: Having a public debate with Schmidhuber about academic credit is not advisable because it just encourages him and there is no limit to the time and effort that he is willing to put into trying to discredit his perceived rivals.

Reply: This is apparently an ad hominem argument [AH3] [AH2] true to the motto: "If you cannot dispute a fact-based message, attack the messenger himself." Obviously I am not "discrediting" others (e.g., popularisers) by crediting the inventors.

Dr. Hinton: He has even resorted to tricks like having multiple aliases in Wikipedia to make it look as if other people are agreeing with what he says.

Reply: Another ad hominem attack which I reject.

(Many of my web pages do encourage others, though, through this statement: "The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.")

Dr. Hinton: The page on his website about Alan Turing is a nice example of how he goes about trying to diminish other people's contributions.

Reply: This is yet another fact-free comment that has nothing to do with the contents of my post. Nevertheless, I'll take the bait and respond (skip this reply if you are not interested in this deviation from the topic). I believe that my web pages on Kurt Gödel (the founder of theoretical computer science in 1931 [GOD]) and Alan Turing paint an accurate picture of the origins of our field (also crediting important pioneers ignored by certain movies about Turing). As always, in the interest of self-correcting science [SV20], I'll be happy to correct the pages based on evidence.

But what exactly should I correct? Here is a brief summary:

Both Gödel and the American computer science pioneer Alonzo Church (1935) [CHU] were cited by Turing who published later (1936) [TUR]. Gödel introduced the first universal coding language (based on the integers). He used it to represent both data (such as axioms and theorems) and programs (such as proof-generating sequences of operations on the data). He famously constructed formal statements that talk about the computation of other formal statements, especially self-referential statements which state that they are not provable by any computational theorem prover. Thus he exhibited the fundamental limits of mathematics and computing and Artificial Intelligence [GOD]. Compare [MIR] (Sec. 18). Church (1935) extended Gödel's result to the famous Entscheidungsproblem (decision problem) [CHU], using his alternative universal language called Lambda Calculus, basis of LISP. Later, Turing introduced yet another universal model (the Turing Machine) to do the same (1936) [TUR]. Nevertheless, although he was standing on the shoulders of others, Turing was certainly one of the most important computer science pioneers.

Dr. Hinton: Despite my own best judgement, I feel that I cannot leave his charges completely unanswered so I am going to respond once and only once. I have never claimed that I invented backpropagation. David Rumelhart invented it independently long after people in other fields had invented it. It is true that when we first published we did not know the history so there were previous inventors that we failed to cite. What I have claimed is that I was the person to clearly demonstrate that backpropagation could learn interesting internal representations and that this is what made it popular. I did this by forcing a neural net to learn vector representations for words such that it could predict the next word in a sequence from the vector representations of the previous words. It was this example that convinced the Nature referees to publish the 1986 paper.

It is true that many people in the press have said I invented backpropagation and I have spent a lot of time correcting them. Here is an excerpt from the 2018 book by Michael Ford entitled "Architects of Intelligence": "Lots of different people invented different versions of backpropagation before David Rumelhart. They were mainly independent inventions and it's something I feel I have got too much credit for. I've seen things in the press that say that I invented backpropagation, and that is completely wrong. It's one of these rare cases where an academic feels he has got too much credit for something! My main contribution was to show how you can use it for learning distributed representations, so I'd like to set the record straight on that."

Reply: This is finally a response related to my post. However, it does not at all contradict what I wrote in the relevant Sec. I. It is true that in 2018 Dr. Hinton credited his co-author Rumelhart [RUM] with the "invention" of backpropagation [AOI]. But neither in [AOI] nor in his 2015 survey [DL3] did he mention Linnainmaa (1970) [BP1], the true inventor of this efficient algorithm for applying the chain rule to networks with differentiable nodes [BP4]. It should be mentioned that [DL3] does cite Werbos (1974), who however described the method correctly only later in 1982 [BP2] and also failed to cite [BP1]. Linnainmaa's method was well-known, e.g., [BP5] [DL1] [DL2] [DLC]. It wasn't created by "lots of different people" but by exactly one person who published first [BP1] and therefore should get the credit. (Sec. I above also mentions the method's precursors [BPA] [BPB] [BPC].) Dr. Hinton accepted the Honda Prize although he apparently agrees that Honda's claims (e.g., Sec. I) are false. He should ask Honda to correct their statements.

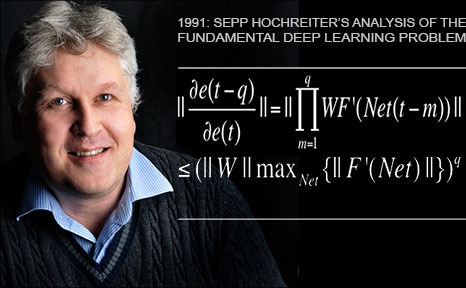

Dr. Hinton: Maybe Juergen would like to set the record straight on who invented LSTMs?

Reply: This question is again deviating from what's in my post. Nevertheless, I'll happily respond: See [MIR], Sec. 3 and Sec. 4 on the fundamental contributions of my former student Sepp Hochreiter in his 1991 diploma thesis [VAN1] which I called "one of the most important documents in the history of machine learning." (Sec. 4 also mentions later great contributions by other students including Felix Gers, Alex Graves, and others.)

To summarize, Dr. Hinton's comments and ad hominem arguments diverge from the contents of my post and do not challenge any of the facts presented in Sec. I, II, III, IV, V, VI. The facts still stand.

Acknowledgments

Thanks to several expert reviewers for useful comments. Since science is about self-correction, let me know at juergen@idsia.ch if you can spot any remaining error. The contents of this article may be used for educational and non-commercial purposes, including articles for Wikipedia and similar sites.

References (mostly from [DEC])

[DEC] J. Schmidhuber (02/20/2020). The 2010s: Our Decade of Deep Learning / Outlook on the 2020s. (Containing most references cited above. For convenience also appended below.)

[SV20] S. Vazire (2020). A toast to the error detectors. Let 2020 be the year in which we value those who ensure that science is self-correcting. Nature, vol 577, p 9, 2/2/2020.

[HON]

Honda Prize, Sept 20, 2019. WWW link. PDF. Local copy.

[Drop1] Hanson, S. J. (1990). A Stochastic Version of the Delta Rule. Physica D, 42, 265-272. (Compare preprint arXiv:1808.03578 on dropout as a special case, 2018.)

[CMB]

C. v. d. Malsburg (1973).

Self-Organization of Orientation Sensitive Cells in the Striate Cortex. Kybernetik, 14:85-100, 1973. [See Table 1 for rectified linear units or ReLUs. Possibly this was also the first work on applying an EM algorithm to neural nets.]

[HAH] Hahnloser et al. (2000). Digital selection and analogue amplification coexist in a cortex-inspired silicon circuit. Nature, 405, 2000.

[MAL]

Malik, J. and Perona, P. (1990). Preattentive texture discrimination with early vision mechanisms.

Journal of the Optical Society of America A, 7(5):923-932.

[BM]

D. Ackley, G. Hinton, T. Sejnowski (1985). A Learning Algorithm for Boltzmann Machines. Cognitive Science, 9(1):147-169.

[CDI]

G. E. Hinton. Training products of experts by minimizing contrastive divergence. Neural computation 14.8 (2002): 1771-1800.

[RMSP]

T. Tieleman, G. E. Hinton. Lecture 6.5-rmsprop: Divide the gradient by a running average of its recent magnitude. COURSERA: Neural networks for machine learning 4.2 (2012): 26-31.

[TSNE]

L. V. D. Maaten, G. E. Hinton (2008). Visualizing data using t-SNE. Journal of Machine Learning research, 9, 2579-2605.

[CAPS]

S. Sabour, N. Frosst, G. E. Hinton (2017).

Dynamic routing between capsules. Proc. NIPS 2017, pp. 3856-3866.

[RUM] DE Rumelhart, GE Hinton, RJ Williams (1985). Learning Internal Representations by Error Propagation. TR No. ICS-8506, California Univ San Diego La Jolla Inst for Cognitive Science. Later version published as:

Learning representations by back-propagating errors. Nature, 323, p. 533-536 (1986).

[MC43]

W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity.

Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[K56]

S.C. Kleene. Representation of Events in Nerve Nets and Finite Automata. Automata Studies, Editors: C.E. Shannon and J. McCarthy, Princeton University Press, p. 3-42, Princeton, N.J., 1956.

[R58]

Rosenblatt, F. (1958). The perceptron: a probabilistic model for information storage and organization

in the brain. Psychological review, 65(6):386.

[R61]

Joseph, R. D. (1961). Contributions to perceptron theory. PhD thesis, Cornell Univ.

[R62]

Rosenblatt, F. (1962). Principles of Neurodynamics. Spartan, New York.

[M69] M. Minsky, S. Papert. Perceptrons (MIT Press, Cambridge, MA, 1969).

[S20]

T. Sejnowski. The unreasonable effectiveness of deep learning in artificial intelligence. PNAS, January 28, 2020.

Link.

[NASC1] J. Schmidhuber. First Pow(d)ered flight / plane truth. Correspondence, Nature, 421 p 689, Feb 2003.

[NASC2]

J. Schmidhuber. Zooming in on aviation history.

Correspondence, Nature, vol 566, p 39, 7 Feb 2019.

[NASC3] J. Schmidhuber. The last inventor of the telephone. Letter, Science, 319, no. 5871, p. 1759, March 2008.

[NASC4] J. Schmidhuber. Turing: Keep his work in perspective.

Correspondence, Nature, vol 483, p 541, March 2012, doi:10.1038/483541b.

[NASC5] J. Schmidhuber. Turing in Context.

Letter, Science, vol 336, p 1639, June 2012.

(On Gödel, Zuse, Turing.)

See also comment on response by A. Hodges (DOI:10.1126/science.336.6089.1639-a)

[NASC6] J. Schmidhuber. Colossus was the first electronic digital computer. Correspondence, Nature, 441 p 25, May 2006.

[NASC7] J. Schmidhuber. Turing's impact. Correspondence, Nature, 429 p 501, June 2004

[NASC8] J. Schmidhuber. Prototype resilient, self-modeling robots. Correspondence, Science, 316, no. 5825 p 688, May 2007.

[NASC9] J. Schmidhuber. Comparing the legacies of Gauss, Pasteur, Darwin. Correspondence, Nature, vol 452, p 530, April 2008.

[MIR] J. Schmidhuber (2019). Deep Learning: Our Miraculous Year 1990-1991. (Containing most references cited above and in [DEC]. For convenience also appended below.)

[BW] H. Bourlard, C. J. Wellekens (1989).

Links between Markov models and multilayer perceptrons. NIPS 1989, p. 502-510.

[BRI] Bridle, J.S. (1990). Alpha-Nets: A Recurrent "Neural" Network Architecture with a Hidden Markov Model Interpretation, Speech Communication, vol. 9, no. 1, pp. 83-92.

[BOU] H Bourlard, N Morgan (1993). Connectionist speech recognition. Kluwer, 1993.

[HYB12]

Hinton, G. E., Deng, L., Yu, D., Dahl, G. E., Mohamed, A., Jaitly, N., Senior, A., Vanhoucke, V., Nguyen, P., Sainath, T. N., and Kingsbury, B. (2012). Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups. IEEE Signal Process. Mag., 29(6):82-97.

[R2] Reddit/ML, 2019. J. Schmidhuber really had GANs in 1990.

[R3] Reddit/ML, 2019. NeurIPS 2019 Bengio Schmidhuber Meta-Learning Fiasco.

[R11] Reddit/ML, 2020. Schmidhuber: Critique of Honda Prize for Dr. Hinton

[R4] Reddit/ML, 2019. Five major deep learning papers by G. Hinton did not cite similar earlier work by J. Schmidhuber.

[R5] Reddit/ML, 2019. The 1997 LSTM paper by Hochreiter & Schmidhuber has become the most cited deep learning research paper of the 20th century.

[R6] Reddit/ML, 2019. DanNet, the CUDA CNN of Dan Ciresan in J. Schmidhuber's team, won 4 image recognition challenges prior to AlexNet.

[R7] Reddit/ML, 2019. J. Schmidhuber on Seppo Linnainmaa, inventor of backpropagation in 1970.

[R8] Reddit/ML, 2019. J. Schmidhuber on Alexey Ivakhnenko, godfather of deep learning 1965.

[DL1] J. Schmidhuber, 2015.

Deep Learning in neural networks: An overview. Neural Networks, 61, 85-117.

More.

[DL2] J. Schmidhuber, 2015.

Deep Learning.

Scholarpedia, 10(11):32832.

[DL3] Y. LeCun, Y. Bengio, G. Hinton (2015). Deep Learning. Nature 521, 436-444.

HTML.

[DL4] J. Schmidhuber, 2017. Our impact on the world's most valuable public companies: 1. Apple, 2. Alphabet (Google), 3. Microsoft, 4. Facebook, 5. Amazon ...

HTML.

[DLC] J. Schmidhuber, 2015. Critique of Paper by "Deep Learning Conspiracy" (Nature 521 p 436). June 2015.

HTML.

[DM2] V. Mnih, K. Kavukcuoglu, D. Silver, A. A. Rusu, J. Veness, M. G. Bellemare, A. Graves, M. Riedmiller, A. K. Fidjeland, G. Ostrovski, S. Petersen, C. Beattie, A. Sadik, I. Antonoglou, H. King, D. Kumaran, D. Wierstra, S. Legg, D. Hassabis. Human-level control through deep reinforcement learning. Nature, vol. 518, p 529, 26 Feb. 2015.

Link.

[DM3]

S. Stanford. DeepMind's AI, AlphaStar Showcases Significant Progress Towards AGI. Medium ML Memoirs, 2019.

[Alphastar has a "deep LSTM core."]

[OAI1]

G. Powell, J. Schneider, J. Tobin, W. Zaremba, A. Petron, M. Chociej, L. Weng, B. McGrew, S. Sidor, A. Ray, P. Welinder, R. Jozefowicz, M. Plappert, J. Pachocki, M. Andrychowicz, B. Baker.

Learning Dexterity. OpenAI Blog, 2018.

[OAI1a]

OpenAI, M. Andrychowicz, B. Baker, M. Chociej, R. Jozefowicz, B. McGrew, J. Pachocki, A. Petron, M. Plappert, G. Powell, A. Ray, J. Schneider, S. Sidor, J. Tobin, P. Welinder, L. Weng, W. Zaremba.

Learning Dexterous In-Hand Manipulation. arxiv:1808.00177 (PDF).

[OAI2]

OpenAI et al. (Dec 2019).

Dota 2 with Large Scale Deep Reinforcement Learning.

Preprint

arxiv:1912.06680.

[An LSTM composes 84% of the model's total parameter count.]

[OAI2a]

J. Rodriguez. The Science Behind OpenAI Five that just Produced One of the Greatest Breakthrough in the History of AI. Towards Data Science, 2018. [An LSTM was the core of OpenAI Five.]

[VAN1] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J.S.) PDF.

[More on the Fundamental Deep Learning Problem.]

[VAN2] Y. Bengio, P. Simard, P. Frasconi. Learning long-term dependencies with gradient descent is difficult. IEEE TNN 5(2), p 157-166, 1994

[VAN3] S. Hochreiter, Y. Bengio, P. Frasconi, J. Schmidhuber. Gradient flow in recurrent nets: the difficulty of learning long-term dependencies. In S. C. Kremer and J. F. Kolen, eds., A Field Guide to Dynamical Recurrent Neural Networks. IEEE press, 2001.

PDF.

[LSTM0]

S. Hochreiter and J. Schmidhuber.

Long Short-Term Memory.

TR FKI-207-95, TUM, August 1995.

PDF.

[LSTM1] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF.

Based on [LSTM0]. More.

[LSTM2] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451-2471, 2000.

PDF.

[The "vanilla LSTM architecture" that everybody is using today, e.g., in Google's Tensorflow.]

[LSTM3] A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005.

PDF.

[LSTM4]

S. Fernandez, A. Graves, J. Schmidhuber. An application of recurrent neural networks to discriminative keyword spotting. Intl. Conf. on Artificial Neural Networks ICANN'07, 2007.

PDF.

[LSTM5] A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009.

PDF.

[LSTM6] A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. NIPS'22, p 545-552, Vancouver, MIT Press, 2009.

PDF.

[LSTM7] J. Bayer, D. Wierstra, J. Togelius, J. Schmidhuber.

Evolving memory cell structures for sequence learning.

Proc. ICANN-09, Cyprus, 2009.

PDF.

[LSTM8] A. Graves, A. Mohamed, G. E. Hinton. Speech Recognition with Deep Recurrent Neural Networks. ICASSP 2013, Vancouver, 2013.

PDF.

[LSTM9]

O. Vinyals, L. Kaiser, T. Koo, S. Petrov, I. Sutskever, G. Hinton.

Grammar as a Foreign Language. Preprint arXiv:1412.7449 [cs.CL].

[LSTM10]

A. Graves, D. Eck and N. Beringer, J. Schmidhuber. Biologically Plausible Speech Recognition with LSTM Neural Nets. In J. Ijspeert (Ed.), First Intl. Workshop on Biologically Inspired Approaches to Advanced Information Technology, Bio-ADIT 2004, Lausanne, Switzerland, p. 175-184, 2004.

PDF.

[LSTM11]

N. Beringer and A. Graves and F. Schiel and J. Schmidhuber. Classifying unprompted speech by retraining LSTM Nets. In W. Duch et al. (Eds.): Proc. Intl. Conf. on Artificial Neural Networks ICANN'05, LNCS 3696, pp. 575-581, Springer-Verlag Berlin Heidelberg, 2005.

[LSTM12]

D. Wierstra, F. Gomez, J. Schmidhuber. Modeling systems with internal state using Evolino. In Proc. of the 2005 conference on genetic and evolutionary computation (GECCO), Washington, D. C., pp. 1795-1802, ACM Press, New York, NY, USA, 2005. Got a GECCO best paper award.

[LSTM13]

F. A. Gers and J. Schmidhuber.

LSTM Recurrent Networks Learn Simple Context Free and

Context Sensitive Languages.

IEEE Transactions on Neural Networks 12(6):1333-1340, 2001.

PDF.

[LSTM14]

S. Fernandez, A. Graves, J. Schmidhuber.

Sequence labelling in structured domains with

hierarchical recurrent neural networks. In Proc.

IJCAI 07, p. 774-779, Hyderabad, India, 2007 (talk).

PDF.

[LSTM15]

A. Graves, J. Schmidhuber.

Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks.

Advances in Neural Information Processing Systems 22, NIPS'22, p 545-552,

Vancouver, MIT Press, 2009.

PDF.

[S2S]

I. Sutskever, O. Vinyals, Quoc V. Le. Sequence to sequence learning with neural networks. In: Advances in Neural Information Processing Systems (NIPS), 2014, 3104-3112.

[CTC] A. Graves, S. Fernandez, F. Gomez, J. Schmidhuber. Connectionist Temporal Classification: Labelling Unsegmented Sequence Data with Recurrent Neural Networks. ICML 06, Pittsburgh, 2006.

PDF.

[GSR]

H. Sak, A. Senior, K. Rao, F. Beaufays, J. Schalkwyk - Google Speech Team.

Google voice search: faster and more accurate.

Google Research Blog, Sep 2015, see also

Aug 2015

[GSR15] Dramatic

improvement of Google's speech recognition through LSTM:

Alphr Technology, Jul 2015, or 9to5google, Jul 2015

[WU] Y. Wu et al. Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation.

Preprint arXiv:1609.08144 (PDF), 2016.

[GT16] Google's dramatically improved Google Translate of 2016 is based on LSTM, e.g., WIRED, Sep 2016, or siliconANGLE, Sep 2016

[FB17]

By 2017, Facebook used LSTM to handle over 4 billion automatic translations per day (The Verge, August 4, 2017); see also Facebook blog by J.M. Pino, A. Sidorov, N.F. Ayan (August 3, 2017)

[LSTM-RL]

B. Bakker, F. Linaker, J. Schmidhuber. Reinforcement Learning in Partially Observable Mobile Robot Domains Using Unsupervised Event Extraction. In Proceedings of the 2002 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2002), Lausanne, 2002.

PDF.

[RPG]

D. Wierstra, A. Foerster, J. Peters, J. Schmidhuber (2010). Recurrent policy gradients. Logic Journal of the IGPL, 18(5), 620-634.

[HW1] Srivastava, R. K., Greff, K., Schmidhuber, J. Highway networks.

Preprints arXiv:1505.00387 (May 2015) and arXiv:1507.06228 (July 2015). Also at NIPS'2015. The first working very deep feedforward nets with over 100 layers. Let g, t, h, denote non-linear differentiable functions. Each non-input layer of a highway net computes g(x)x + t(x)h(x), where x is the data from the previous layer. (Like LSTM with forget gates [LSTM2] for RNNs.) Resnets [HW2] are a special case of this where g(x)=t(x)=const=1.

More.

[HW2] He, K., Zhang, X., Ren, S., Sun, J. Deep residual learning for image recognition. Preprint arXiv:1512.03385 (Dec 2015). Residual nets are a special case of highway nets [HW1], with g(x)=1 (a typical highway net initialization) and t(x)=1.

More.

[HW3]

K. Greff, R. K. Srivastava, J. Schmidhuber. Highway and Residual Networks learn Unrolled Iterative Estimation. Preprint

arxiv:1612.07771 (2016). Also at ICLR 2017.

[JOU17] Jouppi et al. (2017). In-Datacenter Performance Analysis of a Tensor Processing Unit.

Preprint arXiv:1704.04760

[CNN1] K. Fukushima: Neural network model for a mechanism of pattern

recognition unaffected by shift in position - Neocognitron.

Trans. IECE, vol. J62-A, no. 10, pp. 658-665, 1979.

[The first deep convolutional neural network architecture, with alternating convolutional layers and downsampling layers. More in Scholarpedia.]

[CNN1a] A. Waibel. Phoneme Recognition Using Time-Delay Neural Networks. Meeting of IEICE, Tokyo, Japan, 1987. [First application of backpropagation [BP1][BP2] and weight-sharing

to a convolutional architecture.]

[CNN1b] A. Waibel, T. Hanazawa, G. Hinton, K. Shikano and K. J. Lang. Phoneme recognition using time-delay neural networks. IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 37, no. 3, pp. 328-339, March 1989.

[CNN2] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, L. D. Jackel: Backpropagation Applied to Handwritten Zip Code Recognition, Neural Computation, 1(4):541-551, 1989.

PDF.

[CNN3] Weng, J., Ahuja, N., and Huang, T. S. (1993). Learning recognition and segmentation of 3-D objects from 2-D images. Proc. 4th Intl. Conf. Computer Vision, Berlin, Germany, pp. 121-128. [A CNN whose downsampling layers use Max-Pooling (which has become very popular) instead of Fukushima's Spatial Averaging [CNN1].]

[CNN4] M. A. Ranzato, Y. LeCun: A Sparse and Locally Shift Invariant Feature Extractor Applied to Document Images. Proc. ICDAR, 2007

[GPUCNN]

K. Chellapilla, S. Puri, P. Simard. High performance convolutional neural networks for document processing. International Workshop on Frontiers in Handwriting Recognition, 2006. [Speeding up shallow CNNs on GPU by a factor of 4.]

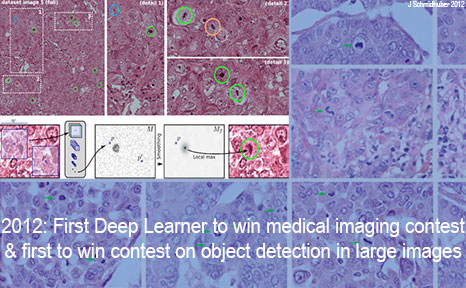

[GPUCNN1] D. C. Ciresan, U. Meier, J. Masci, L. M. Gambardella, J. Schmidhuber. Flexible, High Performance Convolutional Neural Networks for Image Classification. International Joint Conference on Artificial Intelligence (IJCAI-2011, Barcelona), 2011. PDF. ArXiv preprint.

[Speeding up deep CNNs on GPU by a factor of 60.

Used to

win four important computer vision competitions 2011-2012 before others won any

with similar approaches.]

[GPUCNN2] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber.

A Committee of Neural Networks for Traffic Sign Classification.

International Joint Conference on Neural Networks (IJCNN-2011, San Francisco), 2011.

PDF.

HTML overview.

[First superhuman performance in a computer vision contest, with half the error rate of humans, and one third the error rate of the closest competitor. This led to massive interest from industry.]

[GPUCNN3] D. C. Ciresan, U. Meier, J. Schmidhuber. Multi-column Deep Neural Networks for Image Classification. Proc. IEEE Conf. on Computer Vision and Pattern Recognition CVPR 2012, p 3642-3649, July 2012. PDF. Longer TR of Feb 2012: arXiv:1202.2745v1 [cs.CV]. More.

[GPUCNN4] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NIPS 25, MIT Press, Dec 2012.

PDF.

[GPUCNN5] J. Schmidhuber. History of computer vision contests won by deep CNNs on GPU. March 2017. HTML.

[How IDSIA used GPU-based CNNs to win four important computer vision competitions 2011-2012 before others started using similar approaches.]

[GPUCNN6] J. Schmidhuber, D. Ciresan, U. Meier, J. Masci, A. Graves. On Fast Deep Nets for AGI Vision. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011.

PDF.

[SCAN] J. Masci,

A. Giusti, D. Ciresan, G. Fricout, J. Schmidhuber. A Fast Learning Algorithm for Image Segmentation with Max-Pooling Convolutional Networks. ICIP 2013. Preprint arXiv:1302.1690.

[ST]

J. Masci, U. Meier, D. Ciresan, G. Fricout, J. Schmidhuber. Steel Defect Classification with Max-Pooling Convolutional Neural Networks. Proc. IJCNN 2012.

PDF.

[MLP1] D. C. Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint.

[Showed that plain backprop for deep standard NNs is sufficient to break benchmark records, without any unsupervised pre-training.]

[BPA]

H. J. Kelley. Gradient Theory of Optimal Flight Paths. ARS Journal, Vol. 30, No. 10, pp. 947-954, 1960.

[BPB]

A. E. Bryson. A gradient method for optimizing multi-stage allocation processes. Proc. Harvard Univ. Symposium on digital computers and their applications, 1961.

[BPC]

S. E. Dreyfus. The numerical solution of variational problems. Journal of Mathematical Analysis and Applications, 5(1): 30-45, 1962.

[BP1] S. Linnainmaa. The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 1970.

See chapters 6-7 and FORTRAN code on pages 58-60.

PDF.

See also BIT 16, 146-160, 1976.

Link.

[BP2] P. J. Werbos. Applications of advances in nonlinear sensitivity analysis. In R. Drenick, F. Kozin, (eds): System Modeling and Optimization: Proc. IFIP,

Springer, 1982.

PDF.

[Extending thoughts in his 1974 thesis.]

[BP4] J. Schmidhuber.

Who invented backpropagation?

More in [DL2].

[BP5]

A. Griewank (2012). Who invented the reverse mode of differentiation?

Documenta Mathematica, Extra Volume ISMP (2012): 389-400.

[UN0]

J. Schmidhuber.

Neural sequence chunkers.

Technical Report FKI-148-91, Institut für Informatik, Technische

Universität München, April 1991.

PDF.

[UN1]

J. Schmidhuber. Learning complex, extended sequences using the principle of history compression. Neural Computation, 4(2):234-242, 1992. Based on TR FKI-148-91, TUM, 1991 [UN0]. PDF.

[First working Deep Learner based on a deep RNN hierarchy, overcoming the vanishing gradient problem. Also: compressing or distilling a teacher net (the chunker) into a student net (the automatizer) that does not forget its old skills - such approaches are now widely used. More.]

[UN2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF.

[An ancient experiment on "Very Deep Learning" with credit assignment across 1200 time steps or virtual layers and unsupervised pre-training for a stack of recurrent NN

can be found here. Plus lots of additional material and images related to other refs in the present page.]

[UN3]

J. Schmidhuber, M. C. Mozer, and D. Prelinger.

Continuous history compression.

In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors,

Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95.

Augustinus, 1993.

[UN4] G. E. Hinton, R. R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, Vol. 313. no. 5786, pp. 504 - 507, 2006. PDF.

[PM2] J. Schmidhuber, M. Eldracher, B. Foltin. Semilinear predictability minimization produces well-known feature detectors. Neural Computation, 8(4):773-786, 1996.

PDF. More.

[SNT]

J. Schmidhuber, S. Heil (1996).

Sequential neural text compression.

IEEE Trans. Neural Networks, 1996.

PDF.

(An earlier version appeared at NIPS 1995.)

[DEEP1]

Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation. [First working Deep Learners with many layers, learning internal representations.]

[DEEP1a]

Ivakhnenko, Alexey Grigorevich. The group method of data handling; a rival of the method of stochastic approximation. Soviet Automatic Control 13 (1968): 43-55.

[DEEP2]

Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[NAT1] J. Schmidhuber. Citation bubble about to burst? Nature, vol. 469, p. 34, 6 January 2011.

HTML.

[GOD]

Kurt Gödel. Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I. Monatshefte für Mathematik und Physik, 38:173-198, 1931.

[CHU]

A. Church (1935). An unsolvable problem of elementary number theory. Bulletin of the American Mathematical Society, 41: 332-333. Abstract of a talk given on 19 April 1935, to the American Mathematical Society.

Also in American Journal of Mathematics, 58(2), 345-363 (1 Apr 1936).

[TUR]

A. M. Turing. On computable numbers, with an application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, Series 2, 41:230-267. Received 28 May 1936. Errata appeared in Series 2, 43, pp 544-546 (1937).

[AOI] M. Ford. Architects of Intelligence: The truth about AI from the people building it. Packt Publishing, 2018.

(Preface to German edition by J. Schmidhuber.)

[AH2]

F. H. van Eemeren, B. Garssen & B. Meuffels.

The disguised abusive ad hominem empirically investigated: Strategic manoeuvring with direct personal attacks.

Journal Thinking & Reasoning, Vol. 18, 2012, Issue 3, p. 344-364.

Link.

[AH3]

D. Walton (PhD Univ. Toronto, 1972), 1998. Ad hominem arguments. University of Alabama Press.
