My First Deep Learning System of 1991

+ Deep Learning Timeline 1960-2013

Jürgen Schmidhuber

Pronounce: You_again Shmidhoobuh

Note: This text went through massive open online peer review in 2013.

(As a machine learning researcher I am obsessed with proper credit assignment.)

On 19 Dec 2013 a snapshot was stored as Technical Report

arXiv:1312.5548v1 [cs.NE]

(minor updates added up to 2019).

The comprehensive deep learning survey (2015, 888 references) can be found here.

In 2009, our Deep Learning Artificial Neural Networks became the first Deep Learners to win official international pattern recognition competitions [A9] (with secret test set known only to the organisers); by 2012 they had won eight of them [A12], including the first contests on object detection in large images [54] (at ICPR 2012) and image segmentation [53] (at ISBI 2012). In 2011, they achieved the world's first superhuman visual pattern recognition results [A11]. Others implemented variants and have won additional contests since 2012, e.g., [A12,A13].

The field of Deep Learning research is far older though (see timeline further down).

In 1965, Ivakhnenko and Lapa [71] published the first general, working learning algorithm for supervised deep feedforward multilayer perceptrons [A0] with arbitrarily many layers of neuron-like elements, using nonlinear activation functions based on additions (i.e., linear perceptrons) and multiplications (i.e., gates). They incrementally trained and pruned their network layer by layer to learn internal representations, using regression and a separate validation set. (They did not call this a neural network, but that's what it was.) For example, Ivakhnenko's 1971 paper [72] already described a deep learning net with 8 layers, trained by their highly cited method (the "Group Method of Data Handling") which was still popular in the new millennium, especially in Eastern Europe, where much of Machine Learning was born.

That is, Minsky & Papert's later 1969 book about the limitations of shallow nets with a single layer ("Perceptrons") addressed a "problem" that had already been solved for 4 years :-) Maybe Minsky did not even know, but he should have. Some claim that Minsky's book killed NN-related research, but of course it didn't, at least not outside the US.

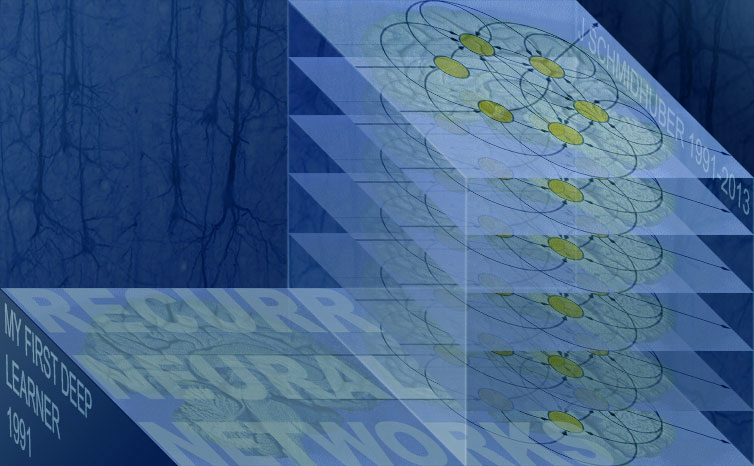

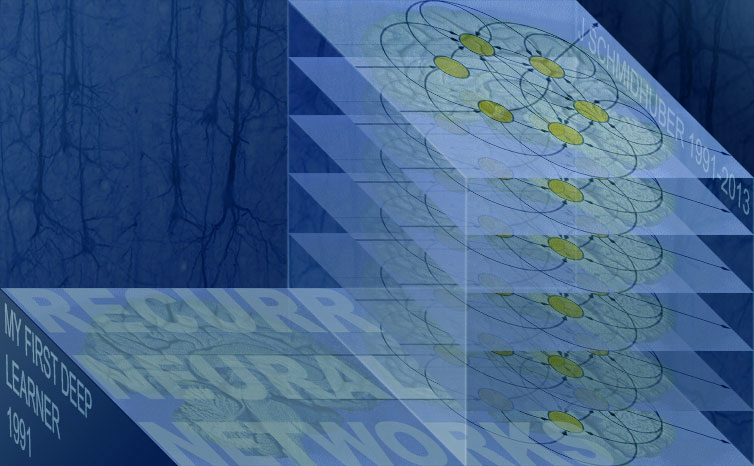

My own first Deep Learner dates back to 1991 [1,2]. To my knowledge, it also was the first "Very Deep Learner," much deeper than those of Ivakhnenko, the father of Deep Learning: it was able to perform credit assignment across hundreds of nonlinear operators or neural layers, by using unsupervised pre-training for a stack of recurrent neural networks (RNN) (deep by nature) as in the figure above. (RNN architectures were first informally proposed in 1943 [75], then formalised in 1956 [76]. Such RNNs are general computers more powerful than normal feedforward NNs (FNNs), and can encode entire sequences of inputs. RNNs relate to FNNs like general computers relate to mere calculators. In particular, unlike FNNs, RNNs can in principle deal with problems of arbitrary depth.)

The basic idea of my first Deep Learner is still relevant today. Each RNN is trained for a while in unsupervised fashion to predict its next input. From then on, only unexpected inputs (errors) convey new information and get fed to the next higher RNN which thus ticks on a slower, self-organising time scale. It can easily be shown that no information gets lost. It just gets compressed (note that much of machine learning is essentially about compression). We get less and less redundant input sequence encodings in deeper and deeper levels of this hierarchical temporal memory, which compresses data in both space (like feedforward NN) and time. There also is a continuous variant [47].
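The principle of passing on only unexpected inputs can be illustrated with a toy sketch. Everything in it is an assumption made for brevity: a bigram lookup table stands in for the lower-level RNN predictor, and the names `train_predictor`, `compress` and `decompress` are hypothetical. It is not the 1991 implementation; it only shows that the surprises plus the predictor suffice to rebuild the full sequence.

```python
from collections import Counter, defaultdict


def train_predictor(seq):
    """Stand-in for the lower-level predictor: guess the next symbol
    from the current one via bigram counts (not an RNN)."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(seq, seq[1:]):
        counts[prev][nxt] += 1
    return {prev: c.most_common(1)[0][0] for prev, c in counts.items()}


def compress(seq, predictor):
    """Keep only the unexpected inputs, each tagged with its time step."""
    surprises = [(0, seq[0])]  # the first symbol cannot be predicted
    for t in range(1, len(seq)):
        if predictor.get(seq[t - 1]) != seq[t]:
            surprises.append((t, seq[t]))
    return surprises


def decompress(surprises, length, predictor):
    """Rebuild the full sequence from the surprises plus the predictor."""
    known = dict(surprises)
    out = []
    for t in range(length):
        out.append(known[t] if t in known else predictor[out[-1]])
    return out


seq = list("abcabcabcabxabcabc")
predictor = train_predictor(seq)
surprises = compress(seq, predictor)  # what the next level would see
assert decompress(surprises, len(seq), predictor) == seq  # nothing is lost
```

In this toy run the higher level receives far fewer items than the lower level, which is why it ticks on a slower time scale.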

One ancient illustrative Deep Learning experiment of 1993 [2] required credit assignment across 1200 time steps, or through 1200 subsequent nonlinear virtual layers. The top level code of the initially unsupervised RNN stack, however, got so compact that (previously infeasible) sequence classification through additional supervised learning became possible.

There is a way of compressing or distilling higher levels down into lower levels, thus partially collapsing the hierarchical temporal memory. The trick is to retrain lower-level RNN to continually imitate (predict) the hidden units of already trained, slower, higher-level RNN, through additional predictive output neurons [1,2]. This helps the lower RNN to develop appropriate, rarely changing memories that may bridge very long time lags.

The Deep Learner of 1991 was a first way of overcoming the Fundamental Deep Learning Problem identified and analysed in 1991 by my very first student (now professor) Sepp Hochreiter: the problem of vanishing or exploding gradients [3,4,4a,A5]. The latter motivated all our subsequent Deep Learning research of the 1990s and 2000s.
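A rough numerical illustration of vanishing and exploding gradients, under strong simplifying assumptions: a chain of one-unit layers that all share a single weight, with the hypothetical helper `chain_gradient` multiplying the local derivatives via the chain rule. This is a toy, not the analysis of [3].

```python
import math


def chain_gradient(weight, depth, squash=True, x0=0.5):
    """Return d(output)/d(input) for `depth` stacked one-unit layers.

    squash=True uses tanh units, squash=False uses linear units.
    """
    x, grad = x0, 1.0
    for _ in range(depth):
        z = weight * x
        if squash:
            x = math.tanh(z)
            grad *= weight * (1.0 - x * x)  # tanh'(z) = 1 - tanh(z)^2
        else:
            x = z
            grad *= weight
    return grad


vanishing = chain_gradient(0.5, depth=50)                # shrinks towards zero
exploding = chain_gradient(1.5, depth=50, squash=False)  # grows like 1.5**50
```

Each layer contributes one multiplicative factor, so the backpropagated signal scales roughly exponentially with depth: it decays when the factors are below one in magnitude and blows up when they exceed one.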

Through supervised LSTM RNN (1997) (e.g., [5,6,7,A7]) we could eventually perform similar feats as with the 1991 system [1,2], overcoming the Fundamental Deep Learning Problem without any unsupervised pre-training. Moreover, LSTM could also learn tasks unlearnable by the partially unsupervised 1991 chunker [1,2].

Particularly successful are stacks of LSTM RNNs [10] trained by Connectionist Temporal Classification (CTC) [8]. On faster computers of 2009, this became the first RNN system ever to win an official international pattern recognition competition [A9], through the work of my PhD student and postdoc Alex Graves, e.g., [10]. To my knowledge, this also was the first Deep Learning system ever (recurrent or not) to win such a contest. (In fact, it won three different ICDAR 2009 contests on connected handwriting in three different languages, e.g., [11,A9,A13].) Alex later moved on to Geoffrey Hinton's lab (Univ. Toronto), where a stack [10] of our bidirectional LSTM RNNs [7] also broke a famous TIMIT speech recognition record [12,A13], despite thousands of man years previously spent on HMM-based speech recognition research.

CTC-LSTM also helped to score first at NIST's OpenHaRT2013 evaluation [12a].

As of 2015, the large IT companies (Google, Microsoft, IBM, Baidu, many others) have used our recurrent neural networks (especially LSTM) to greatly improve speech recognition, machine translation, image caption generation, syntactic parsing, text-to-speech synthesis, photo-real talking heads, prosody detection, video-to-text translation, and many other important applications. For example, this Google blog describes how our CTC-based LSTM greatly improved Google Voice (by 49%); now it is on billions of smartphones.

Well-known entrepreneurs also got interested in such hierarchical temporal memories [13,14].

The ancient term Deep Learning was actually first introduced to Machine Learning by Dechter (1986) [73], and to Artificial Neural Networks (NNs) by Aizenberg et al. (2000) [74]. Subsequently it became especially popular in the context of deep NNs, the most successful Deep Learners, which are much older though, dating back half a century.

Around 2006, in the context of unsupervised pre-training for less general feedforward networks [15,A8], a Deep Learner reached 1.2% error rate [15] on the MNIST handwritten digits [16], back then the most famous benchmark of Machine Learning. Our team then showed that good old backpropagation [A1] on GPUs (with training pattern distortions [42,43] but without any unsupervised pre-training) can actually achieve a three times better result of 0.35% [17,A10] - back then, a world record (a previous standard net achieved 0.7% [43]; a backprop-trained [16] Convolutional NN (CNN) [19a,19,16,16a] got 0.39% [49,A8]; plain backprop without distortions except for small saccadic eye movement-like translations already got 0.95%).

Then we replaced our standard net by a biologically rather plausible architecture inspired by early neuroscience-related work [19a,18,19,16]: Deep and Wide GPU-based Multi-Column Max-Pooling CNNs (MCMPCNNs) [21,22,A11] with alternating backprop-based [16,16a,50] weight-sharing convolutional layers [19,16,23] and winner-take-all [19a,19] max-pooling [20,24,50,46] layers (see [55] for early GPU-based CNNs). MCMPCNNs are committees of MPCNNs [25a] with simple democratic output averaging (compare earlier more sophisticated ensemble methods [48]). Object detection [54,54c,54a,A12] and image segmentation [53,A12] profit from fast MPCNN-based image scans [28,28a].

Our supervised GPU-MCMPCNN was the first method to achieve superhuman performance in an official international competition (with secret test set known only to the organisers) [25,25a-c,A11] (compare [51]), and the first with human-competitive performance (around 0.2%) on MNIST [22]. Since 2011, it has won numerous additional competitions on a routine basis [A11-A13].
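Two of the ingredients named above, max-pooling and democratic output averaging across columns, are simple enough to sketch in a few lines. The function names, the 2x2 non-overlapping pooling window and the list-of-lists image format are assumptions chosen for illustration; the convolutional layers, the GPU code and the training procedure of [21,22] are not reproduced here.

```python
def max_pool_2x2(image):
    """Non-overlapping 2x2 max-pooling over a list-of-lists image
    whose height and width are both even."""
    return [
        [
            max(image[r][c], image[r][c + 1],
                image[r + 1][c], image[r + 1][c + 1])
            for c in range(0, len(image[0]), 2)
        ]
        for r in range(0, len(image), 2)
    ]


def committee_average(column_outputs):
    """Average the per-class scores of several columns, one vote each."""
    n = len(column_outputs)
    return [sum(scores) / n for scores in zip(*column_outputs)]


def committee_predict(column_outputs):
    """Return the index of the class with the highest averaged score."""
    avg = committee_average(column_outputs)
    return max(range(len(avg)), key=avg.__getitem__)


pooled = max_pool_2x2([[1, 2, 0, 1],
                       [3, 4, 1, 0],
                       [0, 0, 5, 6],
                       [1, 0, 7, 8]])
label = committee_predict([[0.6, 0.4], [0.2, 0.8], [0.3, 0.7]])
```

Here the first column votes for class 0 and is outvoted by the other two, so the committee settles on class 1.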

Our GPU-MPCNNs [21,A11] were adopted by the groups of Univ. Toronto/Stanford/Google, e.g., [26,27,A12,A13].

Apple Inc., the most profitable smartphone maker, hired Ueli Meier, member of our Deep Learning team that won the ICDAR 2011 Chinese handwriting contest [11,22].

ArcelorMittal, the world's top steel producer, is using our methods for material defect detection, e.g., [28]. One of the most important applications of our techniques is biomedical imaging [54], e.g., for cancer prognosis or plaque detection in CT heart scans.

Other users include a leading automotive supplier, and companies such as DeepMind, whose first PhDs in Machine Learning & AI (one of them co-founder) were trained in my lab where they first met.

Remarkably, the most successful Deep Learning algorithms in most international contests since 2009 [A9-A13] are adaptations and extensions of an over 40-year-old algorithm, namely, Linnainmaa's (1970) supervised efficient backpropagation [A1,60,29a] (compare [30,31,58,59,61]) or BPTT/RTRL for RNNs, e.g., [32-34,37-39]. (Exceptions include two 2011 contests specialised on transfer learning [44] - but compare [45]). In particular, as of 2013, state-of-the-art feedforward nets [A11-A13] are GPU-based [21] multi-column [22] combinations of two ancient concepts: Backpropagation [A1] applied [16a] to Neocognitron-like convolutional architectures [A2] (with max-pooling layers [20,50,46] instead of alternative [19a,19,40,20a] local winner-take-all methods). (Plus additional tricks from the 1990s and 2000s, e.g., [41a,41b,41c].) In the quite different deep recurrent case, supervised systems also dominate, e.g., [5,8,10,9,39,12,A9,A13].

In particular, most competition-winning or benchmark record-setting Deep Learners [A9-A13] now use one of two supervised techniques developed in my lab: (1) recurrent LSTM (1997) [A7] trained by CTC (2006) [8], or (2) feedforward GPU-MPCNNs (2011) [21,A11] (building on earlier work since the 1960s mentioned in the text above).

Nevertheless, in many applications it can still be advantageous to combine the best of both worlds - supervised learning and unsupervised pre-training, like in my 1991 system described above [1,2,A6].

Acknowledgments: Thanks for valuable comments to Geoffrey Hinton, Kunihiko Fukushima, Yoshua Bengio, Sven Behnke, Yann LeCun, Sepp Hochreiter, Mike Mozer, Marc'Aurelio Ranzato, Andreas Griewank, Paul Werbos, Shun-ichi Amari, Seppo Linnainmaa, Peter Norvig, Yu-Chi Ho, Alex Graves, Dan Ciresan, Jonathan Masci, Stuart Dreyfus, and others. Graphics: Fibonacci Web Design

Timeline of Deep Learning Highlights 1960-2013

(compare references below)

[A] 1962: Neurobiological Inspiration Through Simple Cells and Complex Cells

Hubel and Wiesel described simple cells and complex cells in the visual cortex [18], inspiration for later deep artificial neural network (NN) architectures [A2] used in certain modern award-winning Deep Learners [A11-A12]

(I was conceived in 1962)

[A0] 1965: First Deep Learners

Ivakhnenko and Lapa published the first general, working learning algorithm for supervised deep feedforward multilayer perceptrons [71]. A paper from 1971 already described a deep network with 8 layers [72] trained by the "Group Method of Data Handling," still popular in the new millennium. Given a training set of input vectors with corresponding target output vectors, layers are incrementally grown and trained by regression analysis, then pruned with the help of a separate validation set. Regularisation is used to weed out superfluous units, thus learning better and better internal representations of the data. The numbers of layers and units per layer can be learned in problem-dependent fashion.
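The grow, regress and prune loop described above can be sketched as follows. This is a heavily simplified illustration and not the procedure of [71,72]: it assumes that each candidate unit is a quadratic polynomial of two inputs, that pruning simply keeps the `keep` units with the lowest validation error, and that growth stops once the validation error no longer improves. All function names are hypothetical, and NumPy is assumed to be available.

```python
import itertools

import numpy as np


def features(a, b):
    """Quadratic polynomial terms of two inputs for one candidate unit."""
    return np.column_stack([np.ones_like(a), a, b, a * b, a * a, b * b])


def grow_layer(X_tr, y_tr, X_va, y_va, keep):
    """Fit one unit per input pair by regression, then keep the units
    that do best on the separate validation set."""
    units = []
    for i, j in itertools.combinations(range(X_tr.shape[1]), 2):
        coef, *_ = np.linalg.lstsq(
            features(X_tr[:, i], X_tr[:, j]), y_tr, rcond=None)
        pred = features(X_va[:, i], X_va[:, j]) @ coef
        units.append((float(np.mean((pred - y_va) ** 2)), i, j, coef))
    units.sort(key=lambda u: u[0])
    return units[:keep]


def apply_layer(units, X):
    """Outputs of the surviving units become inputs of the next layer."""
    return np.column_stack(
        [features(X[:, i], X[:, j]) @ coef for _, i, j, coef in units])


def gmdh_fit(X_tr, y_tr, X_va, y_va, keep=4, max_layers=5):
    """Add layers while the best validation error keeps improving."""
    layers, best_err = [], float("inf")
    for _ in range(max_layers):
        units = grow_layer(X_tr, y_tr, X_va, y_va, keep)
        if not units or units[0][0] >= best_err:
            break
        best_err = units[0][0]
        layers.append(units)
        X_tr, X_va = apply_layer(units, X_tr), apply_layer(units, X_va)
    return layers, best_err
```

With this stopping rule, both the number of layers and the number of units per layer depend on the data rather than being fixed in advance.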

[A1] 1970 ± a Decade or so: Backpropagation

Error functions and their gradients for complex, nonlinear, multi-stage, differentiable, NN-related systems have been discussed at least since the early 1960s, e.g., [56-58,64-66]. Gradient descent [70] in such systems can be performed [57a,57,58] by iterating the ancient chain rule [68,69] in dynamic programming style [67] (compare simplified derivation using chain rule only [57b]). However, efficient error backpropagation (BP) in arbitrary, possibly sparse, NN-like networks apparently was first described by Linnainmaa in 1970 [60-61]. This is also known as the reverse mode of automatic differentiation [56], where the costs of forward activation spreading essentially equal the costs of backward derivative calculation. See early FORTRAN code [60]. Compare [62,29c] and some NN-related discussion [29] (section 5.5.1), and the first NN-specific efficient BP of 1982 by Werbos [29a,29b]. Compare [30,31,59] and generalisations for sequence-processing recurrent NNs, e.g., [32-34,37-39]. See also natural gradients [63]. As of 2013, BP is still the central Deep Learning algorithm.
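The reverse mode mentioned above can be illustrated with a minimal sketch. The `Var` class and the helper functions are hypothetical names introduced here, and the code is a modern toy, not a rendering of the FORTRAN code in [60]. The forward pass records each operation together with its local partial derivatives; a single backward sweep then applies the chain rule from the output towards the inputs, visiting each recorded operation once.

```python
import math


class Var:
    """A value plus links to the values it was computed from."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents  # pairs (parent Var, local partial derivative)
        self.grad = 0.0

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))


def tanh(v):
    t = math.tanh(v.value)
    return Var(t, ((v, 1.0 - t * t),))


def backward(output):
    """Propagate d(output)/d(node) to every node in one backward sweep."""
    order, seen = [], set()

    def visit(v):  # depth-first search yields a topological order
        if id(v) in seen:
            return
        seen.add(id(v))
        for parent, _ in v.parents:
            visit(parent)
        order.append(v)

    visit(output)
    output.grad = 1.0
    for v in reversed(order):  # each node is handled before its parents
        for parent, local in v.parents:
            parent.grad += local * v.grad


x, w, b = Var(2.0), Var(3.0), Var(1.0)
y = x * w + b
backward(y)  # fills x.grad, w.grad and b.grad
```

Because the backward sweep touches each recorded operation exactly once, its cost is of the same order as that of the forward pass.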

[A2] 1979: Deep Neocognitron, Weight Sharing, Convolution

Fukushima's Deep Neocognitron Architecture [19a,19,40] incorporated neurophysiological insights [A,18] and introduced weight-sharing convolutional neural layers as well as winner-take-all layers. It is very similar to the architecture of modern, feedforward, competition-winning, purely supervised, gradient-based Deep Learners [A11-A12] (but uses local unsupervised learning rules instead).

[A3] 1987: Autoencoder Hierarchies

Ideas published by Ballard on unsupervised autoencoder hierarchies [35], related to post-2000 feedforward Deep Learners based on unsupervised pre-training, e.g., [15,A8]; compare survey [36] and somewhat related RAAMs [52]

[A4] 1987-89: Backpropagation for CNNs

In 1987, backprop [A1] was applied by Waibel [19b] to NNs with convolutions and weight sharing.

In 1989, LeCun et al. [16,16a] applied it to Fukushima's 2D convolutional systems [A2,19a,19,16] - this combination has become an essential ingredient of many modern, feedforward, computer vision contest-winning Deep Learners [A11-A12]

[A5] 1991: Fundamental Deep Learning Problem

By the early 1990s, experiments had shown that deep feedforward or recurrent networks are hard to train by backpropagation [A1]. My student Hochreiter discovered and analyzed the reason, namely, the Fundamental Deep Learning Problem due to vanishing or exploding gradients [3]. Compare [4]

[A6] 1991: Deep Hierarchy of Recurrent NNs

My first recurrent Deep Learning system (present page) [1,2] partially overcame the fundamental problem [A5] through a deep RNN stack pre-trained in unsupervised fashion [1,2] to accelerate subsequent supervised learning. This was a working Deep Learner in the modern post-2000 sense, and also the first Neural Hierarchical Temporal Memory, and also the first "Very Deep Learner."

[A7] 1997: Supervised Very Deep Learner (LSTM)

Long Short-Term Memory (LSTM) RNN became the first purely supervised Very Deep Learner, e.g., [5-10,12,A9]. LSTM RNNs were able to learn solutions to many previously unlearnable problems.

[A8] 2006: Deep Belief Networks / CNN Results

A paper by Hinton and Salakhutdinov [15] focused on unsupervised pre-training of feedforward NNs to accelerate subsequent supervised learning (compare [A6]) (keywords: restricted Boltzmann machines, Deep Belief Networks). In the same year, a supervised BP-trained [A1,A4] CNN [A2,A4] by Ranzato et al. set a new record [49] on the famous MNIST handwritten digit recognition benchmark [16], using training pattern deformations [42,43].

[A9] 2009: First Competitions Won by Deep Learning

First official international pattern recognition contests (with secret test sets) won by Deep Learning: Several connected handwriting competitions at ICDAR 2009 were won by LSTM RNNs [A7] performing simultaneous segmentation and recognition [10,11].

[A10] 2010: Plain Backpropagation on GPUs Yields Excellent Results

New MNIST record [17] set by good old backpropagation [A1] in deep but otherwise standard NNs (no unsupervised pre-training, no convolution, but training pattern deformations), through a fast GPU implementation [17]. (A year later, the first human-competitive performance on MNIST was achieved by a deep MCMPCNN [22,A11].)

[A11] 2011: MPCNNs on GPU - First Superhuman Visual Pattern Recognition

Ciresan et al. introduced supervised GPU-based Max-Pooling CNNs or convnets (GPU-MPCNNs) [21], today used by most if not all feedforward competition-winning deep NNs [A12-A13]. The first superhuman visual pattern recognition (on a secret test set) was achieved [25,25a-c] (twice better than humans, three times better than the closest artificial NN competitor, six times better than the best non-neural method), through deep and wide Multi-Column (MC) [25a,48] GPU-MPCNN [21], the current gold standard for deep feedforward NNs, now used in many applications [A12-A13].

[A12] 2012: First Contests Won on Object Detection and Image Segmentation

An image-scanning [28,28a] GPU-MPCNN [21,A11] became the first Deep Learner to win a contest on visual object detection in large images [54,54c,54d,54a] (as opposed to mere recognition/classification): the ICPR 2012 contest on mitosis detection.

New record [26] set on the ImageNet classification benchmark with the help of an MC [A11] GPU-MPCNN variant popular in the computer vision community.

First pure image segmentation contest (ISBI 2012) won by a Deep Learner (again an image-scanning GPU-MPCNN) [53,53a,53b] - the 8th international pattern recognition contest won by my team since 2009 (interview).

[A13] 2013: More Contests and Benchmark Records

New TIMIT phoneme recognition record set by deep LSTM RNNs [12].

New record (almost human performance) [45a] on the ICDAR Chinese handwriting recognition benchmark (over 3700 classes) set on a desktop machine by a deep GPU-MCMPCNN.

MICCAI 2013 Grand Challenge on Mitosis Detection won by a GPU-MPCNN [54-54b].

GPU-MPCNNs [21] also help to achieve new best results on ImageNet classification [26a] and PASCAL object detection [54e].

Additional contests mentioned in the web pages of J.S. at the Swiss AI Lab IDSIA and G.H. at the University of Toronto.

References

[1] J. Schmidhuber. Learning complex, extended sequences using the principle of history compression, Neural Computation, 4(2):234-242, 1992 (based on TR FKI-148-91, 1991). PDF.

[2] J. Schmidhuber. Habilitation thesis, TUM, 1993. PDF. An ancient experiment with credit assignment across 1200 time steps or virtual layers and unsupervised pre-training for a stack of recurrent NNs can be found here - try Google Translate in your mother tongue.

[3] S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, TUM, 1991 (advisor J.S.) PDF.

[4] S. Hochreiter, Y. Bengio, P. Frasconi, J. Schmidhuber. Gradient flow in recurrent nets: the difficulty of learning long-term dependencies. In S. C. Kremer and J. F. Kolen, eds., A Field Guide to Dynamical Recurrent Neural Networks. IEEE press, 2001.

PDF.

[4a] Y. Bengio, P. Simard, P. Frasconi. Learning long-term dependencies with gradient descent is difficult. IEEE TNN 5(2), p 157-166, 1994

[5] S. Hochreiter, J. Schmidhuber. Long Short-Term Memory. Neural Computation, 9(8):1735-1780, 1997. PDF. Led to a lot of follow-up work.

[6] F. A. Gers, J. Schmidhuber, F. Cummins. Learning to Forget: Continual Prediction with LSTM. Neural Computation, 12(10):2451--2471, 2000.

PDF.

[7] A. Graves, J. Schmidhuber. Framewise phoneme classification with bidirectional LSTM and other neural network architectures. Neural Networks, 18:5-6, pp. 602-610, 2005.

PDF.

[8] A. Graves, S. Fernandez, F. Gomez, J. Schmidhuber. Connectionist Temporal Classification: Labelling Unsegmented Sequence Data with Recurrent Neural Networks. ICML 06, Pittsburgh, 2006.

PDF.

[9] A. Graves, M. Liwicki, S. Fernandez, R. Bertolami, H. Bunke, J. Schmidhuber. A Novel Connectionist System for Improved Unconstrained Handwriting Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 5, 2009.

PDF.

[10] A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. NIPS'22, p 545-552, Vancouver, MIT Press, 2009.

PDF.

[11] J. Schmidhuber, D. Ciresan, U. Meier, J. Masci, A. Graves. On Fast Deep Nets for AGI Vision. In Proc. Fourth Conference on Artificial General Intelligence (AGI-11), Google, Mountain View, California, 2011.

PDF.

[12] A. Graves, A. Mohamed, G. E. Hinton. Speech Recognition with Deep Recurrent Neural Networks. ICASSP 2013, Vancouver, 2013.

PDF.

[12a] T. Bluche, J. Louradour, M. Knibbe, B. Moysset, F. Benzeghiba, C. Kermorvant. The A2iA Arabic Handwritten Text Recognition System at the OpenHaRT2013 Evaluation. Submitted to DAS 2014.

[13] J. Hawkins, D. George. Hierarchical Temporal Memory - Concepts, Theory, and Terminology. Numenta Inc., 2006.

[14] R. Kurzweil. How to Create a Mind: The Secret of Human Thought Revealed. ISBN 0670025291, 2012.

[15] G. E. Hinton, R. R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, Vol. 313. no. 5786, pp. 504 - 507, 2006. PDF.

[16] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard, L. D. Jackel: Backpropagation Applied to Handwritten Zip Code Recognition, Neural Computation, 1(4):541-551, 1989.

PDF.

[16a] Y. LeCun, B. Boser, J. S. Denker, D. Henderson, R. E. Howard, W. Hubbard and L. D. Jackel: Handwritten digit recognition with a back-propagation network. Proc. NIPS 1989, 2, Morgan Kaufmann, Denver, CO, 1990.

[17] Dan Claudiu Ciresan, U. Meier, L. M. Gambardella, J. Schmidhuber. Deep Big Simple Neural Nets For Handwritten Digit Recognition. Neural Computation 22(12): 3207-3220, 2010. ArXiv Preprint.

[18] D. H. Hubel, T. N. Wiesel. Receptive Fields, Binocular Interaction And Functional Architecture In The Cat's Visual Cortex. Journal of Physiology, 1962.

[19] K. Fukushima. Neocognitron: A self-organizing neural network model for a mechanism of pattern recognition unaffected by shift in position. Biological Cybernetics, 36(4): 193-202, 1980.

Scholarpedia.

[19a] K. Fukushima: Neural network model for a mechanism of pattern recognition unaffected by shift in position - Neocognitron. Trans. IECE, vol. J62-A, no. 10, pp. 658-665, 1979.

[19b] A. Waibel. Phoneme Recognition Using Time-Delay Neural Networks. Meeting of IEICE, Tokyo, Japan, 1987.

[20] M. Riesenhuber, T. Poggio. Hierarchical models of object recognition in cortex. Nature Neuroscience 11, p 1019-1025, 1999. PDF.

[20a] J. Schmidhuber. A local learning algorithm for dynamic feedforward and recurrent networks. Connection Science, 1(4):403-412, 1989. PDF. HTML. Local competition in the Neural Bucket Brigade (figures omitted).

[21] D. C. Ciresan, U. Meier, J. Masci, L. M. Gambardella, J. Schmidhuber. Flexible, High Performance Convolutional Neural Networks for Image Classification. International Joint Conference on Artificial Intelligence (IJCAI-2011, Barcelona), 2011. PDF. ArXiv preprint.

[22] D. C. Ciresan, U. Meier, J. Schmidhuber. Multi-column Deep Neural Networks for Image Classification. Proc. IEEE Conf. on Computer Vision and Pattern Recognition CVPR 2012, p 3642-3649, 2012. PDF. Longer TR: arXiv:1202.2745v1 [cs.CV]

[23] Y. LeCun, L. Bottou, Y. Bengio, P. Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278-2324, 1998. PDF.

[24] S. Behnke. Hierarchical Neural Networks for Image Interpretation. Dissertation, FU Berlin, 2002. LNCS 2766, Springer 2003. PDF.

[25] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber. Multi-Column Deep Neural Network for Traffic Sign Classification. Neural Networks 32: 333-338, 2012. PDF of preprint.

[25a] D. C. Ciresan, U. Meier, J. Masci, J. Schmidhuber. A Committee of Neural Networks for Traffic Sign Classification. International Joint Conference on Neural Networks (IJCNN-2011, San Francisco), 2011. PDF.

[25b] J. Stallkamp, M. Schlipsing, J. Salmen, C. Igel. INI Benchmark Website: The German Traffic Sign Recognition Benchmark for IJCNN 2011.

[25c] Qualifying for IJCNN 2011 competition: results of 1st stage (January 2011)

[25d] Results for IJCNN 2011 competition (2 August 2011)

[26] A. Krizhevsky, I. Sutskever, G. E. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NIPS 25, MIT Press, 2012.

PDF.

[26a] M. D. Zeiler, R. Fergus. Visualizing and Understanding Convolutional Networks. TR arXiv:1311.2901 [cs.CV], 2013.

[27] A. Coates, B. Huval, T. Wang, D. J. Wu, Andrew Y. Ng, B. Catanzaro. Deep Learning with COTS HPC Systems, ICML 2013.

PDF.

[28] J. Masci, A. Giusti, D. Ciresan, G. Fricout, J. Schmidhuber. A Fast Learning Algorithm for Image Segmentation with Max-Pooling Convolutional Networks. ICIP 2013. Preprint arXiv:1302.1690

[28a] A. Giusti, D. Ciresan, J. Masci, L. M. Gambardella, J. Schmidhuber. Fast Image Scanning with Deep Max-Pooling Convolutional Neural Networks. ICIP 2013. Preprint arXiv:1302.1700

[29] P. J. Werbos. Beyond Regression: New Tools for Prediction and Analysis in the Behavioral Sciences. PhD thesis, Harvard University, 1974

[29a] P. J. Werbos. Applications of advances in nonlinear sensitivity analysis. In R. Drenick, F. Kozin, (eds): System Modeling and Optimization: Proc. IFIP (1981), Springer, 1982.

PDF.

[29b] P. J. Werbos. Backwards Differentiation in AD and Neural Nets: Past Links and New Opportunities. In H.M. Bücker, G. Corliss, P. Hovland, U. Naumann, B. Norris (Eds.), Automatic Differentiation: Applications, Theory, and Implementations, 2006. PDF.

[29c] S. E. Dreyfus. The computational solution of optimal control problems with time lag. IEEE Transactions on Automatic Control, 18(4):383-385, 1973.

[30] Y. LeCun: Une procedure d'apprentissage pour reseau a seuil asymetrique. Proceedings of Cognitiva 85, 599-604, Paris, France, 1985.

PDF.

[31] D. E. Rumelhart, G. E. Hinton, R. J. Williams. Learning internal representations by error propagation. In D. E. Rumelhart and J. L. McClelland, editors, Parallel Distributed Processing, volume 1, pages 318-362. MIT Press, 1986

[32] Ron J. Williams. Complexity of exact gradient computation algorithms for recurrent neural networks. Technical Report NU-CCS-89-27, Boston: Northeastern University, College of Computer Science, 1989

[33] A. J. Robinson and F. Fallside. The utility driven dynamic error propagation network. TR CUED/F-INFENG/TR.1, Cambridge University Engineering Department, 1987

[34] P. J. Werbos. Generalization of backpropagation with application to a recurrent gas market model. Neural Networks, 1, 1988

[35] D. H. Ballard. Modular learning in neural networks. Proc. AAAI-87, Seattle, WA, p 279-284, 1987

[36] G. E. Hinton. Connectionist learning procedures. Artificial Intelligence 40, 185-234, 1989.

PDF.

[37] B. A. Pearlmutter. Learning state space trajectories in recurrent neural networks. Neural Computation, 1(2):263-269, 1989

[38] J. Schmidhuber. A fixed size storage O(n^3) time complexity learning algorithm for fully recurrent continually running networks. Neural Computation, 4(2):243-248, 1992. PDF.

[39] J. Martens and I. Sutskever. Training Recurrent Neural Networks with Hessian-Free Optimization. In Proc. ICML 2011. PDF.

[40] K. Fukushima: Artificial vision by multi-layered neural networks: Neocognitron and its advances, Neural Networks, vol. 37, pp. 103-119, 2013. Link.

[41a] G. B. Orr, K.R. Müller, eds., Neural Networks: Tricks of the Trade. LNCS 1524, Springer, 1999.

[41b] G. Montavon, G. B. Orr, K. R. Müller, eds., Neural Networks: Tricks of the Trade. LNCS 7700, Springer, 2012.

[41c] Lots of additional tricks for improving (e.g., accelerating, robustifying, simplifying, regularising) NNs can be found in the proceedings of NIPS (since 1987), IJCNN (of IEEE & INNS, since 1989), ICANN (since 1991), and other NN conferences since the late 1980s. Given the recent attention to NNs, many of the old tricks may get revived.

[42] H. Baird. Document image defect models. IAPR Workshop, Syntactic & Structural Pattern Recognition, p 38-46, 1990

[43] P. Y. Simard, D. Steinkraus, J.C. Platt. Best Practices for Convolutional Neural Networks Applied to Visual Document Analysis. ICDAR 2003, p 958-962, 2003.

[44] I. J. Goodfellow, A. Courville, Y. Bengio. Spike-and-Slab Sparse Coding for Unsupervised Feature Discovery. Proc. ICML, 2012.

[45] D. Ciresan, U. Meier, J. Schmidhuber. Transfer Learning for Latin and Chinese Characters with Deep Neural Networks. Proc. IJCNN 2012, p 1301-1306, 2012. PDF

[45a] D. Ciresan, J. Schmidhuber. Multi-Column Deep Neural Networks for Offline Handwritten Chinese Character Classification. Preprint arXiv:1309.0261, 1 Sep 2013.

[46] D. Scherer, A. Mueller, S. Behnke. Evaluation of pooling operations in convolutional architectures for object recognition. In Proc. ICANN 2010. PDF

[47] J. Schmidhuber, M. C. Mozer, and D. Prelinger. Continuous history compression. In H. Hüning, S. Neuhauser, M. Raus, and W. Ritschel, editors, Proc. of Intl. Workshop on Neural Networks, RWTH Aachen, pages 87-95. Augustinus, 1993.

[48] R. E. Schapire. The Strength of Weak Learnability. Machine Learning 5 (2): 197-227, 1990.

[49] M. A. Ranzato, C. Poultney, S. Chopra, Y. LeCun. Efficient learning of sparse representations with an energy-based model. Proc. NIPS, 2006.

[50] M. Ranzato, F. J. Huang, Y. Boureau, Y. LeCun. Unsupervised Learning of Invariant Feature Hierarchies with Applications to Object Recognition. Proc. CVPR 2007, Minneapolis, 2007. PDF

[51] P. Sermanet, Y. LeCun. Traffic sign recognition with multi-scale convolutional networks. Proc. IJCNN 2011, p 2809-2813, IEEE, 2011

[52] J. B. Pollack. Implications of Recursive Distributed Representations. Advances in Neural Information Processing Systems I, NIPS, 527-536, 1989.

[53] Deep Learning NNs win 2012 Brain Image Segmentation Contest

[53a] D. Ciresan, A. Giusti, L. Gambardella, J. Schmidhuber. Deep Neural Networks Segment Neuronal Membranes in Electron Microscopy Images. In Advances in Neural Information Processing Systems (NIPS 2012), Lake Tahoe, 2012. PDF.

[53b] Segmentation of neuronal structures in EM stacks challenge - IEEE International Symposium on Biomedical Imaging (ISBI) 2012

[54] Deep Learning NNs win MICCAI 2013 Grand Challenge and 2012 ICPR Contest on Mitosis Detection

[54a] D. Ciresan, A. Giusti, L. Gambardella, J. Schmidhuber. Mitosis Detection in Breast Cancer Histology Images using Deep Neural Networks. MICCAI 2013.

PDF.

[54b] MICCAI 2013 Grand Challenge on Mitosis Detection, organised by M. Veta, M.A. Viergever, J.P.W. Pluim, N. Stathonikos, P. J. van Diest of University Medical Center Utrecht

[54c] ICPR 2012 Contest on Mitosis Detection in Breast Cancer Histological Images (MITOS dataset). Organizers: IPAL Laboratory, TRIBVN Company, Pitie-Salpetriere Hospital, CIALAB of Ohio State Univ.

[54d] L. Roux, D. Racoceanu, N. Lomenie, M. Kulikova, H. Irshad, J. Klossa, F. Capron, C. Genestie, G. Le Naour, M. N. Gurcan. Mitosis detection in breast cancer histological images - An ICPR 2012 contest. J Pathol. Inform. 4:8, 2013.

[54e] R. Girshick, J. Donahue, T. Darrell, J. Malik. Rich feature hierarchies for accurate object detection and semantic segmentation. TR arxiv.org/abs/1311.2524

[55] K. Chellapilla, S. Puri, P. Simard. High performance convolutional neural networks for document processing. International Workshop on Frontiers in Handwriting Recognition, 2006.

[56] A. Griewank. Who invented the reverse mode of differentiation? Documenta Mathematica, Extra Volume ISMP, p 389-400, 2012

[57] H. J. Kelley. Gradient Theory of Optimal Flight Paths. ARS Journal, Vol. 30, No. 10, pp. 947-954, 1960.

[57a] A. E. Bryson. A gradient method for optimizing multi-stage allocation processes. Proc. Harvard Univ. Symposium on digital computers and their applications, 1961.

[57b] S. E. Dreyfus. The numerical solution of variational problems. Journal of Mathematical Analysis and Applications, 5(1): 30-45, 1962.

[58] A. E. Bryson, Y. Ho. Applied optimal control: optimization, estimation, and control. Waltham, MA: Blaisdell, 1969.

[59] D.B. Parker. Learning-logic, TR-47, Sloan School of Management, MIT, Cambridge, MA, 1985.

[60] S. Linnainmaa. The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 1970.

See chapters 6-7 and FORTRAN code on pages 58-60.

PDF.

[61] S. Linnainmaa. Taylor expansion of the accumulated rounding error. BIT 16, 146-160, 1976.

Link.

[62] G. M. Ostrovskii, Y. M. Volin, W. W. Borisov. Über die Berechnung von Ableitungen. Wiss. Z. Tech. Hochschule für Chemie 13, 382-384, 1971.

[63] S. Amari, Natural gradient works efficiently in learning, Neural Computation, 10, 4-10, 1998

[64] J. H. Wilkinson. The algebraic eigenvalue problem. Clarendon Press, Oxford, UK, 1965.

[65] S. Amari, Theory of Adaptive Pattern Classifiers. IEEE Trans., EC-16, No. 3, pp. 299-307, 1967

[66] S. W. Director, R. A. Rohrer. Automated network design - the frequency-domain case. IEEE Trans. Circuit Theory CT-16, 330-337, 1969.

[67] R. Bellman. Dynamic Programming. Princeton University Press, 1957.

[68] G. W. Leibniz. Memoir using the chain rule, 1676. (Cited in TMME 7:2&3 p 321-332, 2010)

[69] G. F. A. L'Hospital. Analyse des infiniment petits - Pour l'intelligence des lignes courbes. Paris: L'Imprimerie Royale, 1696.

[70] J. Hadamard. Memoire sur le probleme d'analyse relatif a l'equilibre des plaques elastiques encastrees. Memoires presentes par divers savants a l'Academie des Sciences 33, 1908.

[71] Ivakhnenko, A. G. and Lapa, V. G. (1965). Cybernetic Predicting Devices. CCM Information Corporation.

[72] Ivakhnenko, A. G. (1971). Polynomial theory of complex systems. IEEE Transactions on Systems, Man and Cybernetics, (4):364-378.

[73] R. Dechter (1986). Learning while searching in constraint-satisfaction problems. University of California, Computer Science Department, Cognitive Systems Laboratory.

[74] I. Aizenberg, N.N. Aizenberg, and J. P.L. Vandewalle (2000). Multi-Valued and Universal Binary Neurons: Theory, Learning and Applications. Springer Science & Business Media.

[75] W. S. McCulloch, W. Pitts. A Logical Calculus of Ideas Immanent in Nervous Activity. Bulletin of Mathematical Biophysics, Vol. 5, p. 115-133, 1943.

[76] S.C. Kleene. Representation of Events in Nerve Nets and Finite Automata. Automata Studies, Editors: C.E. Shannon and J. McCarthy, Princeton University Press, p. 3-42, Princeton, N.J., 1956.
