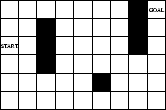

Non-Markovian variant of Sutton's Markovian

maze task [47],

with changing policy environment.

The external environment consists of a two-dimensional grid with

9 by 6 fields. ![]() denotes the field in the

denotes the field in the ![]() -th row and

the

-th row and

the ![]() -th column.

The following fields are blocked by obstacles:

-th column.

The following fields are blocked by obstacles:

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() .

In the beginning, an artificial ``animat'' is placed on

.

In the beginning, an artificial ``animat'' is placed on

![]() (the start field).

In addition to the 17 general primitives from Table 1 (not counting

input/output primitives),

there are four problem-specific primitives with obvious meaning:

one-step-north(), one-step-south(),

one-step-east(), one-step-west().

The system cannot execute actions that would lead outside the grid or into

an obstacle.

Again, the following values are written into special cells

in the input area

whenever they change: IP,

sp, remainder

(the start field).

In addition to the 17 general primitives from Table 1 (not counting

input/output primitives),

there are four problem-specific primitives with obvious meaning:

one-step-north(), one-step-south(),

one-step-east(), one-step-west().

The system cannot execute actions that would lead outside the grid or into

an obstacle.

Again, the following values are written into special cells

in the input area

whenever they change: IP,

sp, remainder![]() .

Another input cell is filled with a 1 whenever the

animat is on the goal field,

otherwise it is filled with a 0.

There is only one way the animat can partially observe

aspects of the external world:

four additional input cells

are rewritten after each execution

of some problem-specific primitive --

the first (second, third, fourth) cell is filled with

.

Another input cell is filled with a 1 whenever the

animat is on the goal field,

otherwise it is filled with a 0.

There is only one way the animat can partially observe

aspects of the external world:

four additional input cells

are rewritten after each execution

of some problem-specific primitive --

the first (second, third, fourth) cell is filled with

![]() if the field to the north (south, east, west)

of the animat is blocked or does not exist, otherwise

the cell is filled with

if the field to the north (south, east, west)

of the animat is blocked or does not exist, otherwise

the cell is filled with ![]() .

Whenever the animat reaches

.

Whenever the animat reaches

![]() (the goal field),

the system receives a constant payoff (100),

and the animat is

transferred back to

(the goal field),

the system receives a constant payoff (100),

and the animat is

transferred back to ![]() (the start field).

Parameters for storage size etc. are the same as with the previous

task, and time is measured the same way.

Clearly, to maximize cumulative payoff, the system has to find

short paths from start to goal.

(the start field).

Parameters for storage size etc. are the same as with the previous

task, and time is measured the same way.

Clearly, to maximize cumulative payoff, the system has to find

short paths from start to goal.

|

Why this task is non-trivial. Unlike previous reinforcement learning systems, the system does not have a smart initial strategy for temporal credit assignment -- it has to develop its own such strategies. Unlike with Sutton's original set-up, the system does not see a built-in unique representation of its current position on the grid. Its interface to the environment is non-Markovian [28]. This represents one reason why most traditional reinforcement learning systems do not have a sound strategy for solving this task. Another is the changing policy environment. See next paragraph.

Changing policy environment. The fact that the obstacles remain where they are and that the animat is occasionally reset to its start position does not imply that the policy environment does not change. In fact, many actions executed by the system change the contents of storage cells, which are never reset: as mentioned above, storage cells are like an external notebook and have to be viewed as part of the policy environment -- there are no exactly repeatable trials with identical initial conditions.