5.

J. Schmidhuber, D. Wierstra, M. Gagliolo, F. Gomez.

Training Recurrent Networks by Evolino.

Neural Computation, 19(3): 757-779, 2007.

PDF.

4.

H. Mayer, F. Gomez, D. Wierstra, I. Nagy, A. Knoll, and J. Schmidhuber (2006).

A System for Robotic Heart Surgery that Learns to Tie Knots Using Recurrent Neural Networks.

Proc. IROS-06, Beijing.

PDF.

3.

J. Schmidhuber, D. Wierstra, F. J. Gomez.

Evolino: Hybrid Neuroevolution / Optimal Linear Search for Sequence Learning.

Proceedings of the 19th International Joint Conference on Artificial Intelligence (IJCAI), Edinburgh, pp. 853-858, 2005.

PDF.

2.

D. Wierstra, F. Gomez, J. Schmidhuber.

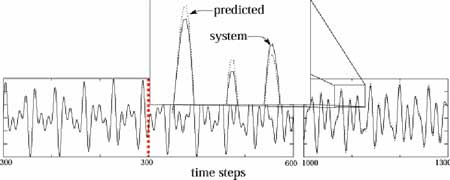

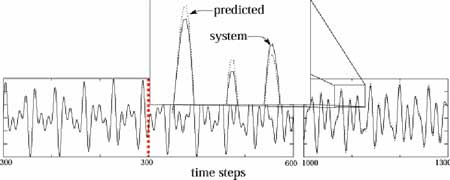

Modeling systems with internal state using Evolino.

In Proc. of the 2005 Conference on Genetic and Evolutionary Computation (GECCO), Washington, D.C., pp. 1795-1802, ACM Press, New York, NY, USA, 2005.

PDF.

Received a GECCO best paper award.

1.

J. Schmidhuber, M. Gagliolo, D. Wierstra, F. Gomez.

Evolino for Recurrent Support Vector Machines.

TR IDSIA-19-05, v2, 15 Dec 2005.

PDF.

Short version at ESANN 2006.


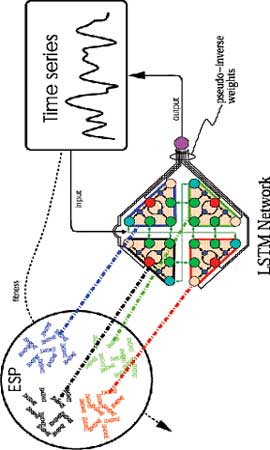

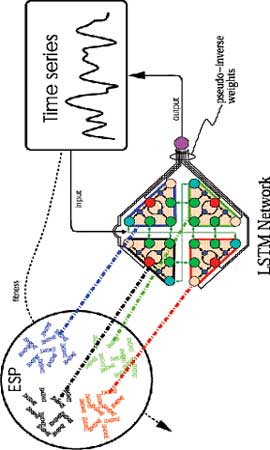

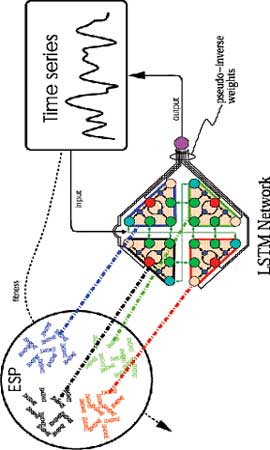

Basic principle: Evolve an RNN population. To obtain an RNN's fitness, feed the training sequences into the RNN. This yields sequences of hidden unit activations. Compute an optimal linear mapping from hidden to target trajectories. The fitness of the recurrent hidden units is the RNN's performance on a validation set, given this mapping.

If the goal is to minimize mean squared error, use the pseudoinverse to compute the optimal mapping (left). If the goal is to maximize the margin, use quadratic programming. This yields Recurrent Support Vector Machines.
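The fitness evaluation described above can be sketched in a few lines of NumPy for the mean-squared-error case. This is a minimal illustration under stated assumptions, not the implementation from the papers: it uses a plain tanh RNN and a simple elitist mutation loop in place of the networks and evolutionary algorithm used in the publications, and the names `hidden_states`, `evolino_fitness`, and `evolve`, the sine-wave task, and all hyperparameters are hypothetical choices made for the example.

```python
import numpy as np

def hidden_states(w_in, w_rec, inputs):
    """Run a tanh RNN over one sequence; return activations of shape (T, n_hidden)."""
    h = np.zeros(w_rec.shape[0])
    states = []
    for x in inputs:
        h = np.tanh(w_in @ x + w_rec @ h)
        states.append(h)
    return np.array(states)

def evolino_fitness(genome, train, valid):
    """Fitness of the evolved recurrent weights: negative validation MSE,
    given the least-squares output mapping fitted on the training data."""
    w_in, w_rec = genome
    x_tr, y_tr = train
    x_va, y_va = valid
    phi_tr = hidden_states(w_in, w_rec, x_tr)
    # Optimal linear mapping from hidden to target trajectories (pseudoinverse).
    w_out = np.linalg.pinv(phi_tr) @ y_tr
    phi_va = hidden_states(w_in, w_rec, x_va)
    mse = np.mean((phi_va @ w_out - y_va) ** 2)
    return -mse, w_out

def evolve(train, valid, n_hidden=8, pop_size=20, generations=15, sigma=0.1, seed=0):
    """Assumed stand-in for the evolutionary search: keep the top quarter,
    refill the population with Gaussian-mutated copies of the survivors."""
    rng = np.random.default_rng(seed)
    n_in = train[0].shape[1]
    pop = [(rng.normal(0.0, 0.5, (n_hidden, n_in)),
            rng.normal(0.0, 0.5, (n_hidden, n_hidden)))
           for _ in range(pop_size)]
    score = lambda g: evolino_fitness(g, train, valid)[0]
    history = []
    for _ in range(generations):
        ranked = sorted(pop, key=score, reverse=True)
        elite = ranked[: pop_size // 4]
        history.append(score(elite[0]))
        children = []
        while len(elite) + len(children) < pop_size:
            parent = elite[rng.integers(len(elite))]
            children.append(tuple(w + sigma * rng.normal(size=w.shape) for w in parent))
        pop = elite + children
    return max(pop, key=score), history

# Toy task (assumption): predict the next value of a sine wave.
t = np.arange(200)
series = np.sin(0.2 * t)
x, y = series[:-1, None], series[1:, None]
train = (x[:120], y[:120])
valid = (x[120:], y[120:])

best, history = evolve(train, valid)
best_fitness, w_out = evolino_fitness(best, train, valid)
```

Only the recurrent and input weights are evolved here; the output weights are never mutated, because they are recomputed by the pseudoinverse each time a genome is evaluated. For the margin-maximizing variant, the `np.linalg.pinv` line would be replaced by a quadratic-programming solver.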

A recent journal publication on an Evolino application to robotics:

H. Mayer, F. Gomez, D. Wierstra, I. Nagy, A. Knoll, and J. Schmidhuber.

A System for Robotic Heart Surgery that Learns to Tie Knots Using Recurrent Neural Networks.

Advanced Robotics, 22(13-14): 1521-1537, 2008, in press.
